
Does keeping less memory produce better work? This decision brief compares two operating models for UK content teams: scoped memory, in which the automation system retains only relevant decisions, and uncurated recall, in which it keeps everything. Limiting the scope of a system’s memory cuts duplication, tightens approvals, and improves output. For editorial operations leads, the goal is not more tools but smarter use of existing ones, achieved by having them remember less and more selectively.

What is being decided

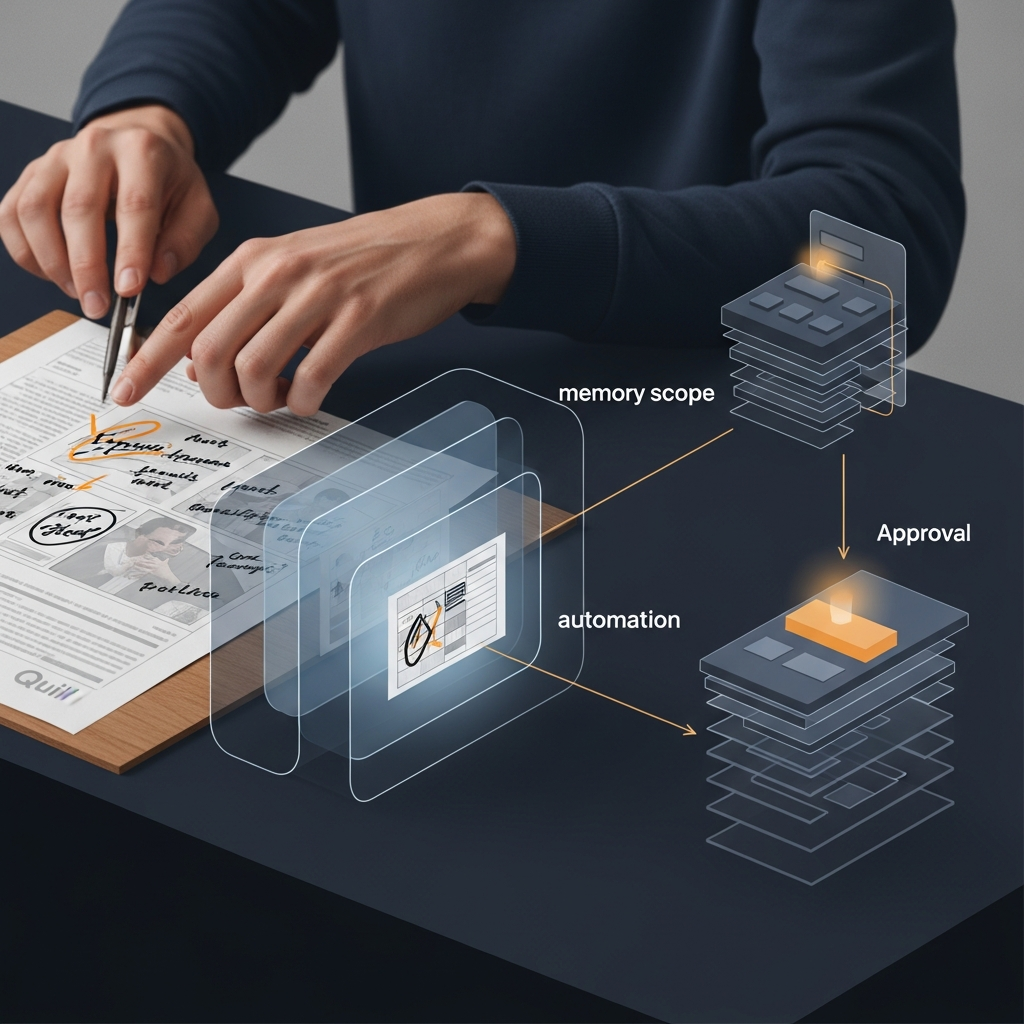

The decision is not whether memory matters, but what deserves to stay in scope. A scoped memory curates an automation system’s history, focusing it on high-value signals such as approved templates and compliance checkpoints while filtering out noise. Teams that automate drafting but leave memory ungoverned see the consequences quickly: old phrasing reused, retired campaign logic revived, and superseded policy blended into current work. If a platform cannot explain its decisions by pointing to clear memory cues, it does not deserve your budget. Unfiltered recall shows up as longer approval delays and duplicated briefing. The trade-off is clear: a broad memory preserves nuance but invites stale instructions; a tighter memory enforces a cleaner operating context.

Comparative view

Comparing the two operating models reveals a clear efficiency gap.

| Metric | Scoped memory | Uncurated memory |
|---|---|---|
| Average review cycles per piece | 2.1 | 3.8 |
| Time to final approval | 48 hours | 72 hours |
| Signal reuse rate | 65% | 30% |

Fewer review cycles indicate that drafts arrive closer to the brief. Faster approvals suggest less conflict between automated output and live policy. A higher signal reuse rate indicates that relevant inputs are being routed into drafts, not just stored. Resistance to scoping often stems from a fear of losing control, in which established habits are mistaken for reliable governance. Automation without measurable uplift is theatre, not strategy.

Operational impacts

Scoped memory embeds approval governance directly into drafting. Configuring a system to remember only the last three campaign styles and the current brand guidelines, for example, can cut asset approval times substantially. The impacts appear across the workflow. Briefing tightens because duplicate inputs are cut and sanctioned versions stay available. Drafting gains first-pass accuracy because the system stays inside current lanes; the lost creative range is a sensible trade when review time is expensive. Approval runs more smoothly because drafts built from active rules are easier to inspect, a critical factor in regulated environments. Recovery from failure is faster, because a decision’s influences are easier to trace in a scoped memory. A practical rule set is surprisingly modest: keep current brand and legal guidance, recent approved exemplars, and routing logic for signal-led publishing; archive stale trend reports and retired campaigns.
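To make that rule set concrete, here is a minimal sketch of a retention filter, assuming each memory item can be tagged with a kind, a creation date, and a flag for whether it is still current. The `MemoryItem` record, the kind labels, `scope_memory`, and the `max_exemplars=3` default (mirroring the "last three campaign styles" example) are all hypothetical illustrations, not the schema or API of any real platform.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical memory record: the field names and "kind" labels are
# illustrative assumptions, not the schema of any real product.
@dataclass(frozen=True)
class MemoryItem:
    item_id: str
    kind: str             # "brand_guidance", "legal_guidance", "routing_rule", "exemplar", ...
    created: date
    current: bool = True  # False once superseded, retired or otherwise stale

# Kinds that stay in scope for as long as they are current.
KEEP_WHILE_CURRENT = {"brand_guidance", "legal_guidance", "routing_rule"}


def scope_memory(items, max_exemplars=3):
    """Split memory items into (keep, archive) lists under a modest rule set."""
    keep, archive, exemplars = [], [], []
    for item in items:
        if not item.current:
            # Superseded guidance, stale trend reports, retired campaigns.
            archive.append(item)
        elif item.kind in KEEP_WHILE_CURRENT:
            keep.append(item)
        elif item.kind == "exemplar":
            exemplars.append(item)
        else:
            # Assumption: anything without an explicit rule leaves scope by default.
            archive.append(item)
    # Keep only the most recent approved exemplars (e.g. the last three styles).
    exemplars.sort(key=lambda i: i.created, reverse=True)
    keep.extend(exemplars[:max_exemplars])
    archive.extend(exemplars[max_exemplars:])
    return keep, archive


if __name__ == "__main__":
    items = [
        MemoryItem("brand-v4", "brand_guidance", date(2024, 5, 1)),
        MemoryItem("brand-v3", "brand_guidance", date(2023, 5, 1), current=False),
        MemoryItem("ex-a", "exemplar", date(2024, 6, 1)),
        MemoryItem("ex-b", "exemplar", date(2024, 5, 1)),
        MemoryItem("ex-c", "exemplar", date(2024, 4, 1)),
        MemoryItem("ex-d", "exemplar", date(2024, 3, 1)),
        MemoryItem("trends-q1", "trend_report", date(2024, 2, 1), current=False),
    ]
    keep, archive = scope_memory(items)
    print("keep:", [i.item_id for i in keep])
    print("archive:", [i.item_id for i in archive])
```

Archiving by default, rather than keeping by default, is the design choice that makes the memory "scoped": an item has to match an explicit rule to stay, so clutter cannot accumulate silently.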

Recommendation and next step

For most UK content teams, the better operating model is a scoped memory with clear review ownership. Start by auditing what your system retains and why. Most teams find clutter, from duplicated briefs to outdated examples. Define retention rules tied to decisions, like keeping the last quarter's approved patterns while archiving retired ones. Then, measure the effects in the queue: review cycles, approval times, and duplicate draft rates.
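Measuring the queue can start as simple arithmetic over exported draft records. The sketch below assumes your workflow tool can export, per draft, the number of review cycles, submission and approval timestamps, and a reviewer-set duplicate flag; `DraftRecord`, `queue_metrics`, and `improved` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean

# Hypothetical queue record; assumes your workflow tool can export these fields.
@dataclass(frozen=True)
class DraftRecord:
    draft_id: str
    review_cycles: int
    submitted: datetime
    approved: datetime
    duplicate: bool  # True if a reviewer flagged the draft as duplicating earlier work


def queue_metrics(records):
    """Average review cycles, average hours to approval, and duplicate draft rate."""
    if not records:
        raise ValueError("need at least one record")
    hours = [(r.approved - r.submitted).total_seconds() / 3600 for r in records]
    return {
        "avg_review_cycles": mean(r.review_cycles for r in records),
        "avg_hours_to_approval": mean(hours),
        "duplicate_rate": sum(r.duplicate for r in records) / len(records),
    }


def improved(baseline, pilot):
    """Lower is better for every metric here, so flag where the pilot beat the baseline."""
    return {name: pilot[name] < baseline[name] for name in baseline}
```

Run `queue_metrics` once on a baseline period and again on the pilot, then compare the two with `improved`; a metric that merely matches the baseline counts as no improvement.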

A pilot is the least painful route. Choose one channel or content type, keep the test narrow, and review the memory logs weekly. If the figures do not improve, adjust the scope. Quill is built for this disciplined approach, combining signal-led publishing with human approval automation and clear audit trails. If your workflow carries too much memory and not enough control, we can work through the practical next steps together.