
Lucozade Energy’s 32% sales uplift from the Halo activation isn't magic; it's evidence. Evidence that when you tie a gamified AR mechanic to a straightforward commercial goal, and execute it with discipline, it can move the needle. This benchmark matters because it cuts through the hype and shows what a well-designed activation looks like in live market conditions, not in a pitch deck.

Context

That 32% figure carries more weight than soft engagement claims because sales movement is a tougher standard. The mechanic used Halo’s recognisable IP to link a physical product to a digital experience, but its real strength was restraint. Spectacle can make a proposal feel bigger, yet each extra step slows the audience down. Here, the path was direct: buy, scan, unlock, receive value.

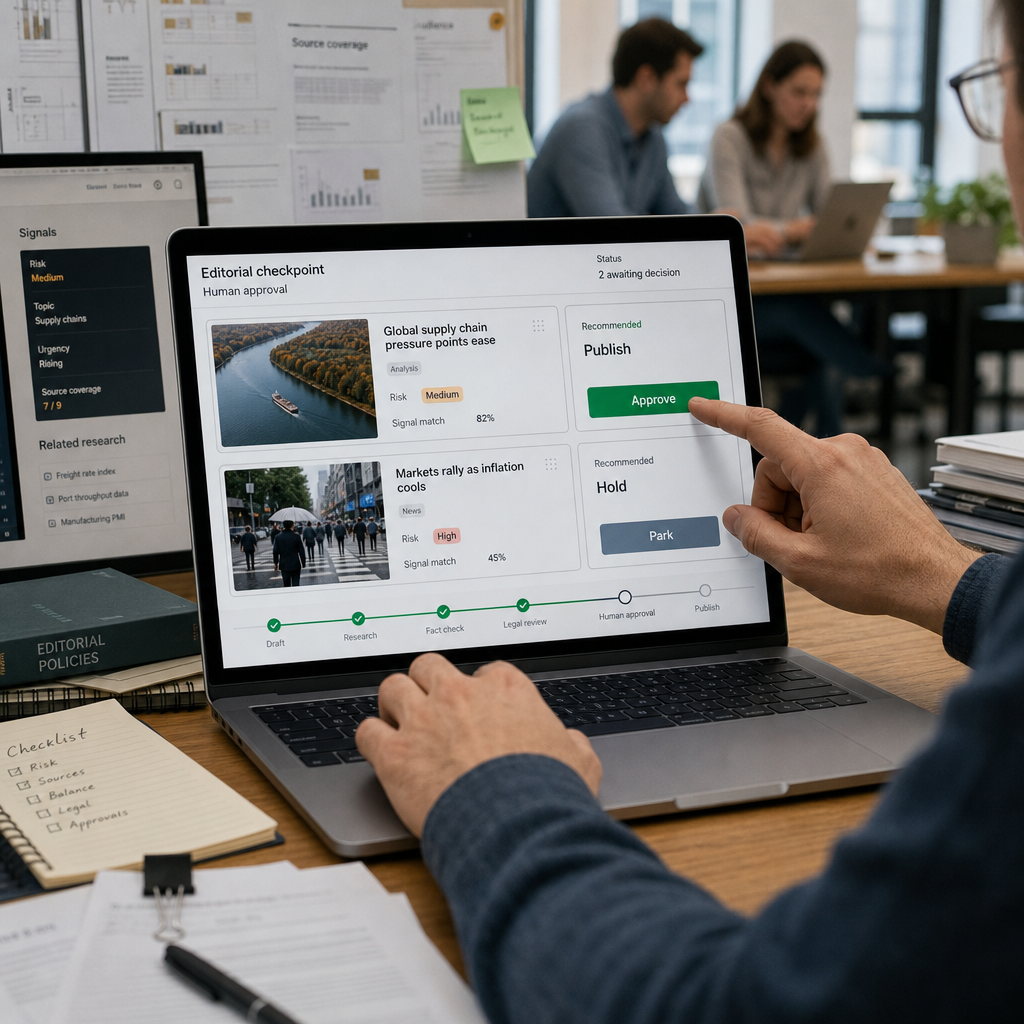

This discipline marks a shift. Around 2020, many AR activations were approved on novelty alone. That phase has passed. Brand and CRM leads now ask harder questions about cost, operational load, and repeatability. The focus has moved from one-off stunts to connected programmes where the mechanic must work across packaging, mobile, compliance, and reporting.

What is changing

We're moving from isolated experiments to repeatable systems. Automation without measurable uplift is theatre, not strategy. If a platform cannot explain its decisions, it does not deserve your budget.

Last Tuesday, in our studio in Abbey Mead, I reviewed a client’s AR mechanic draft. It had three hidden verification layers. The room went quiet as we realised no one would bother: participation would crater before the first reward. That’s when it hit me: simplicity isn't optional; it's the price of entry.

Public results support this. For the Google Pixel launch, we deployed 812 assets while reducing cost per asset by 23.5%, because the assets were designed to be modular from the start. Ribena’s activation overshot its entry goal by 258% because audience fit and reward logic were prioritised over novelty. Both cases show systems built for fairness and scale, not just flash.

Implications

First, stop buying mystery. Before launch, ask how uplift will be measured, which data source will be used, and who signs off the reporting. Slippery answers mean a structural problem, not a creative one. Our MAIA framework forces this alignment early, before budgets lock.
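To make the measurement question concrete, here is a minimal sketch of the arithmetic behind a sales uplift figure. It assumes uplift is reported as campaign sales relative to a comparable baseline (a control period or control stores). The function name and the unit figures are hypothetical, invented for illustration, and are not taken from any Holograph case study.

```python
def sales_uplift(campaign_sales: float, baseline_sales: float) -> float:
    """Return relative uplift of campaign sales over baseline, as a fraction."""
    if baseline_sales <= 0:
        raise ValueError("baseline_sales must be positive")
    return (campaign_sales - baseline_sales) / baseline_sales


# Hypothetical weekly unit sales, invented purely for illustration.
baseline_units = 10_000  # comparable period or control stores, no activation
campaign_units = 11_500  # matching stores and period length, activation live

uplift = sales_uplift(campaign_units, baseline_units)
print(f"Sales uplift: {uplift:.1%}")
```

The calculation is trivial; the hard part is agreeing what counts as the baseline and which data source feeds both numbers, which is exactly what needs sign-off before launch.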

Second, acknowledge the trade-off between features and friction. Audiences have become less forgiving of hidden terms. The route from entry to reward should be clear enough for a consumer to explain it in one sentence. I still don’t fully understand why some complex mechanics fail on trust alone, but I’ve observed that a leaner entry flow almost always boosts participation, which is more valuable than a richer dataset from a tiny audience.
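One way to reason about that trade-off is to treat the entry flow as a funnel. The sketch below assumes each step keeps a fixed share of the people who reached it and that steps are independent, so the shares multiply. Both the model and the per-step rates are simplifying assumptions chosen for illustration, not measured data.

```python
from math import prod


def completion_rate(step_rates: list[float]) -> float:
    """Share of starters who finish every step, assuming independent steps."""
    if any(not 0.0 <= rate <= 1.0 for rate in step_rates):
        raise ValueError("each step rate must be between 0 and 1")
    return prod(step_rates)


# Hypothetical per-step completion rates, invented for illustration.
lean_flow = [0.9, 0.9, 0.9, 0.9]          # buy, scan, unlock, receive
heavy_flow = lean_flow + [0.7, 0.7, 0.7]  # plus three extra verification layers

print(f"Lean flow:  {completion_rate(lean_flow):.1%} of starters reach the reward")
print(f"Heavy flow: {completion_rate(heavy_flow):.1%} of starters reach the reward")
```

Under these assumed rates, the extra layers cut completed participation by roughly two thirds, which is the kind of loss a richer dataset rarely repays.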

Privacy-wise, collect only what you need, explain why, and keep the path clear. It’s better for GDPR, trust, and often conversion.

Actions to consider

When weighing up mechanics, run these four checks to ground the discussion.

- Map the real-world journey. If it needs a flowchart, it's overcomplicated. Test it on someone outside the team; their confusion is your data.

- Choose the lead measure early. Pick the primary KPI, like sales uplift or email growth, and build reporting around it. Optimising for too many metrics often proves very little.

- Test for perceived fairness. A mechanic that feels auditable supports participation because people trust what they understand. This builds useful first-party data, which our DNA platform can enrich post-participation.

- Design for layers, not a single outcome. Can the mechanic support reward tiers or extend into loyalty? Thinking upfront about systems like ONECARD prevents dead ends.

For gamified AR, put your proposal beside the Lucozade Halo model: recognisable IP, simple on-pack invitation, low-friction mobile participation, clear reward logic, measurable outcome. If you’re adding steps without value, trim them. If the proof is vague, challenge it.

Ultimately, this case endures because it treated immersive tech as disciplined design, not a shiny detour. Cheers to that. Before committing your next campaign, hold its mechanics up to this benchmark for simplicity, fairness, and proof. If you want a candid partner to work through the trade-offs, book a chemistry session with the Holograph studio team. We’ll get the numbers on the table and build something that actually delivers.

Proof and original case study

This interpretation draws on a public Holograph case study. For the source detail, see the original case study; for further examples, see the other Holograph case studies.