
Campaign spikes rarely break editorial quality first. They expose a weaker point: the approval gate wrapped around otherwise decent work. A queue built for ordinary weeks is suddenly expected to handle launch pressure, Black Friday volume or a fundraising push without changing its rules. That is where delay and drift start.

The shortest honest answer is this: Quill matters when a team needs approval logic that still holds under volume. The problem is usually not the draft. It is the handoff, the triage, the missing record of past decisions, and the absence of escalation before the queue starts backing up.

What is actually changing

The old assumption was that a human needed to see everything for the process to stay safe. Under pressure, that assumption turns expensive. When every draft lands in one queue and somebody has to inspect it before deciding where it goes, the queue stretches long before anyone has reviewed the copy properly.

That is the contradiction worth paying attention to. Manual triage feels cautious. In practice, it often burns reviewer time on repetitive classification work while high-risk pieces wait beside routine ones.

One content operations lead reported that her team's queue delay tripled during a Black Friday push. The writing had not collapsed. The queue had. A UK finance publisher ran into the same pattern during a product launch: mean time to publish lengthened because a person still had to read each draft, decide whether legal review was needed and assign it by hand. Faster drafting tools do not solve that failure. They feed it.

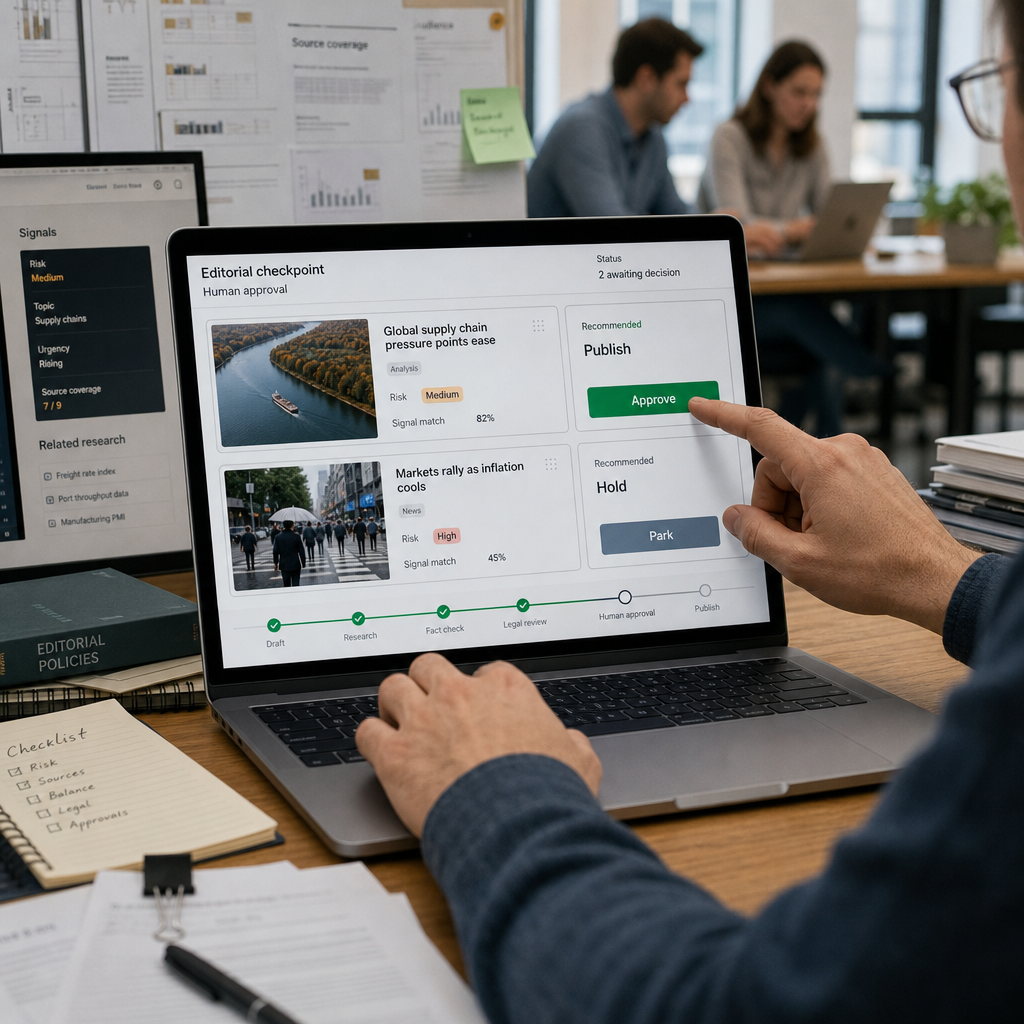

This is where editorial workflow automation either earns trust or exposes itself as theatre. If a platform cannot explain why a piece was routed to a given lane, it should not be making the decision. Signal-led routing by topic, risk, audience or publication urgency is not flashy, but it is defensible. It replaces triage by habit with triage by rule.

The trade-off map

A single queue is easy to explain. It is also where campaign pressure goes to pile up. Structured routing takes more work up front because someone has to define signals, service levels and exceptions. That setup cost is real. It is still cheaper than chaos once volume and content mix spike together.

The cleaner route is straightforward enough. A draft arrives. The system reads the available metadata and sends it into the right review lane before a human needs to intervene. Low-risk material from a trusted source can move to a light editorial check. Higher-risk content, including financial, medical or regulated claims, needs a lane with mandatory specialist review.
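Here is a minimal Python sketch of that kind of rule-based lane assignment. The `Draft` fields, the lane names and the `REGULATED_CLAIMS` set are illustrative assumptions, not Quill's schema or API. The fallback `standard_review` lane is also hypothetical. The one design point worth copying is that every decision returns a reason alongside the lane, which is what makes the routing explainable.

```python
from dataclasses import dataclass, field

# Hypothetical draft metadata; field names are illustrative, not a real product schema.
@dataclass
class Draft:
    title: str
    topic: str
    source_trusted: bool
    claims: set[str] = field(default_factory=set)  # e.g. {"financial"}

# Claim types that always need specialist review (assumed list, taken from the text).
REGULATED_CLAIMS = {"financial", "medical", "regulated"}

def route(draft: Draft) -> tuple[str, str]:
    """Return (lane, reason) so every routing decision can be explained later."""
    risky = draft.claims & REGULATED_CLAIMS
    if risky:
        # Risk is checked before trust: a trusted source does not bypass specialists.
        return "specialist_review", f"contains {', '.join(sorted(risky))} claims"
    if draft.source_trusted:
        return "light_check", "low-risk content from a trusted source"
    # Hypothetical fallback lane for anything the rules above do not cover.
    return "standard_review", "no rule matched a lighter lane"

print(route(Draft("Savings rates explained", "finance", True, {"financial"})))
# ('specialist_review', 'contains financial claims')
```

Because the risk check runs first, a trusted source making a financial claim still lands in specialist review.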

The point is not to strip people out. It is to stop senior reviewers spending half the day sorting work that never needed their attention. In a governed setup, human approval automation should reduce queue delay, duplicate drafting and avoidable back-and-forth. If those measures do not improve, the automation has not done much.

A UK retail publisher offers a useful example. During the Christmas push, they were running one queue for everything. Reviewers picked off the easier pieces first, which is what people do when the pile grows. The awkward work sat there and aged. Once the team split reviews into two lanes (a fast lane for standard product guides with a clear SLA, and a slower lane for promotional finance content requiring two reviewers and legal sign-off), throughput improved without extra headcount. The fast lane moved. The slower lane stayed slower, but it was visible and governed rather than quietly buried.

Where approval quality actually slips

Quality usually slips when reviewers lose context, not simply when they get busy. A compliance reviewer may approve one version of a claim, then reject a near-identical version later because the earlier rationale has disappeared into email, chat or memory. That is not rigour. It is institutional forgetfulness with a serious face on.

An editorial memory system helps because it stores the reason behind the decision, not just the outcome. Not approved or rejected in isolation, but why, under what conditions, by whom and with which caveats. When a similar draft appears later, the reviewer can pull forward comparable decisions instead of rebuilding the logic from scratch.
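The memory idea can be sketched as a decision record that keeps the rationale, conditions, reviewer and caveats, plus a lookup that surfaces comparable past decisions. This is a minimal Python illustration under stated assumptions. `Decision` and `find_precedents` are hypothetical names, and word-overlap (Jaccard) similarity is a deliberately crude stand-in for whatever similarity search a real system would use.

```python
from dataclasses import dataclass

@dataclass
class Decision:
    claim_text: str
    verdict: str      # "approved" or "rejected"
    rationale: str    # why the call was made
    conditions: str   # under what conditions it holds
    reviewer: str     # who made the call
    caveats: str = ""

def _tokens(text: str) -> set[str]:
    # Lower-cased words with trailing punctuation stripped; empty strings dropped.
    return {w.strip(".,!?").lower() for w in text.split()} - {""}

def similarity(a: str, b: str) -> float:
    """Jaccard overlap of word sets: a crude stand-in for real similarity search."""
    ta, tb = _tokens(a), _tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)

def find_precedents(draft_claim: str, history: list[Decision],
                    threshold: float = 0.5, limit: int = 3) -> list[Decision]:
    """Return the most similar past decisions. It recalls precedent; it never decides."""
    scored = [(similarity(draft_claim, d.claim_text), d) for d in history]
    scored = [pair for pair in scored if pair[0] >= threshold]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:limit]]
```

Note that `find_precedents` returns records, not a verdict. The reviewer still reads the earlier rationale and makes the call.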

One UK financial services publisher reduced duplicate review cycles by surfacing similar past approvals before the next review started. Nothing mystical there. No machine replacing judgement. Memory was doing the retrieval work so people could spend their effort on the decision.

Some teams resist this because precedent can feel like a quiet form of overreach. That concern is fair. In a well-designed system, memory should recall, not decide. The reviewer still owns the call. The harder part is cultural: once past decisions are visible, inconsistent ones become visible too. Better that than paying for the same argument every month.

What a resilient setup looks like

Approval gates that survive spikes tend to rely on three controls: signal-led routing, scoped memory and escalation rules that fire before the backlog turns ugly. Each solves a different failure. Together they keep review discipline and delivery on track under pressure.

| Component | What it does | Observed outcome |
|---|---|---|
| Signal-led routing | Reads metadata and assigns the draft to the right lane | Less reviewer time spent on manual triage |
| Editorial memory system | Surfaces prior approval decisions and rationale | Fewer duplicate review cycles as precedent is shared |
| Escalation controls | Alerts or reassigns when a draft breaches SLA | Queue delay variance tightens instead of drifting |
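An escalation rule that fires before the backlog turns ugly can be as simple as comparing each draft's age with its lane SLA. In this hypothetical Python sketch, the lane names, the SLA values and the 80% warning threshold are assumptions chosen for illustration, not recommended settings.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass
class QueuedDraft:
    draft_id: str
    lane: str
    entered_at: datetime

# Hypothetical per-lane SLAs; real values would come from the team's own service levels.
LANE_SLA = {
    "fast": timedelta(hours=4),
    "specialist": timedelta(hours=24),
}

def escalations(queue: list[QueuedDraft], now: datetime,
                warn_fraction: float = 0.8) -> list[tuple[str, str]]:
    """Flag drafts nearing or past their lane SLA before the backlog hides them."""
    actions = []
    for item in queue:
        sla = LANE_SLA[item.lane]
        age = now - item.entered_at
        if age >= sla:
            actions.append((item.draft_id, "reassign"))  # SLA breached: move it
        elif age >= sla * warn_fraction:
            actions.append((item.draft_id, "alert"))     # approaching SLA: flag it
    return actions
```

The early "alert" state is the point: a draft in the right lane can still be stranded, and the warning surfaces it while there is time to act.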

The interaction matters. Routing without memory means reviewers still re-argue old calls. Memory without escalation leaves urgent work stranded in the correct lane, which is still stranded. Escalation without sensible routing just makes the noise louder. This is not abstract systems language. It is basic operational discipline: each control covers a different failure mode.

A UK charity publisher showed the pattern clearly during its Christmas appeal. Across two weeks, the team had dozens of articles spanning fundraising appeals, impact stories and donor thank-yous. In the governed setup, thank-yous went to a junior editor in a fast lane, appeals went to a senior content manager with precedent visible, and impact stories naming specific beneficiaries moved into legal review. The useful outcome was not glamorous. The work kept moving without somebody spending the weekend manually reshuffling drafts.
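The charity's routing can also be written as an ordered rule table rather than scattered conditionals, which makes the rules easy to review and, later, to audit when something misses. This is an illustrative Python sketch. The draft fields, reviewer labels and the `manual_triage` fallback are assumptions, since the example does not say what happened to drafts that matched no rule.

```python
# Ordered rule table: (lane, reviewer role, predicate, reason). First match wins.
# All field names and labels here are hypothetical.
RULES = [
    ("legal", "legal reviewer",
     lambda d: d.get("type") == "impact_story" and d.get("names_beneficiaries", False),
     "impact story naming specific beneficiaries"),
    ("senior", "senior content manager",
     lambda d: d.get("type") == "appeal",
     "fundraising appeal; show precedent"),
    ("fast", "junior editor",
     lambda d: d.get("type") == "thank_you",
     "donor thank-you"),
]

def assign(draft: dict) -> dict:
    for lane, reviewer, matches, reason in RULES:
        if matches(draft):
            return {"lane": lane, "reviewer": reviewer, "reason": reason}
    # Nothing matched: hand it to a person rather than guess (assumed fallback).
    return {"lane": "manual_triage", "reviewer": "editor on duty", "reason": "no rule matched"}
```

Order matters: the legal rule sits first, so an impact story naming beneficiaries can never fall through to a lighter lane.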

A parallel with proof-of-purchase verification is useful here. Verification logic works when routine checks are handled consistently and exceptions are surfaced early. Editorial approvals are not identical, but the discipline travels well. Strict logic at the checkpoint can make the process lighter overall because fewer people are asked to inspect the wrong thing at the wrong time.

That is where Quill fits. Quill links signal triage, drafting, approval, imagery and delivery inside one governed workflow. The gain is not that it promises to automate judgement. It gives teams a way to design review paths, preserve human control and retain a record of why a decision was made. In regulated or reputationally sensitive publishing, that audit trail is not admin. It is part of the work.

Checklist before the next spike

Before the next campaign surge, keep the checklist short and fairly unforgiving:

- Define the routing signals. Start with three you can defend, such as topic, regulatory sensitivity and publication urgency. More signals can create false precision rather than better routing.

- Separate the lanes. Standard pieces and high-risk pieces should not compete in the same queue. A tidy queue can still hide a risky one.

- Store decision rationale. Keep the reason, not just the verdict. That is what stops teams replaying the same review debate.

- Set SLAs and escalation triggers. If a draft sits beyond the threshold, move it or flag it. Waiting politely is not a workflow.

- Test under load. Simulate double the usual volume before the real campaign. Pressure reveals weak approval logic quickly.

- Audit the misses. Any item that breaches SLA should be traced back to routing, memory, reviewer capacity or a bad rule. If you do not inspect the misses, you keep funding them.
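For the load test, even a crude model shows how a lane behaves at double volume. This hypothetical Python sketch treats one lane as a first-in, first-out queue with fixed hourly arrivals and fixed reviewer capacity, and counts drafts that wait longer than the SLA. The numbers are invented for illustration. A real test should replay the team's own volumes and content mix.

```python
from collections import deque

def simulate_lane(arrivals_per_hour: int, capacity_per_hour: int,
                  hours: int, sla_hours: int) -> int:
    """Deterministic FIFO lane model: returns how many drafts exceed their SLA."""
    queue: deque[int] = deque()  # each entry is the hour the draft arrived
    breached = 0
    for hour in range(hours):
        queue.extend([hour] * arrivals_per_hour)
        for _ in range(min(capacity_per_hour, len(queue))):
            arrived = queue.popleft()
            if hour - arrived > sla_hours:
                breached += 1
    # Drafts still waiting at the end count as breaches if already past SLA.
    breached += sum(1 for arrived in queue if hours - 1 - arrived > sla_hours)
    return breached

print(simulate_lane(10, 12, hours=8, sla_hours=2))  # 0 at usual volume
print(simulate_lane(20, 12, hours=8, sla_hours=2))  # 24 at double volume
```

The same lane that looks calm at ten drafts an hour starts breaching within a working day at twenty. Each breach is then a miss worth tracing back to routing, memory, capacity or a bad rule.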

The practical lesson is not complicated. Approval gates survive spikes when they are built around classification, context and exception handling, not around the hope that reviewers will somehow work faster in the busiest week of the quarter. Single queues look neat right up to the point they stop working.

If your current setup looks calm only because volume has been forgiving, that is the decision point. Quill is worth a proper look when the goal is to map the lanes, tighten approval logic and keep the workflow explainable under pressure. If that sounds close to the problem on your side, let's have a sensible conversation. Cheers.