
Teams fixate on sign-up friction, but the hidden costs of silent rejects often do more damage. Toxic data enters the CRM quietly, then surfaces as bounces, complaints and muddied reporting. Silent rejects look tidy at the front end; they rarely stay tidy for long.

The decision comes down to two options: block or route risky email entries at capture with rules you can explain, or let them in and hope downstream monitoring catches the damage early. For UK teams balancing deliverability, compliance and growth, that choice shapes where the budget goes.

What is being decided

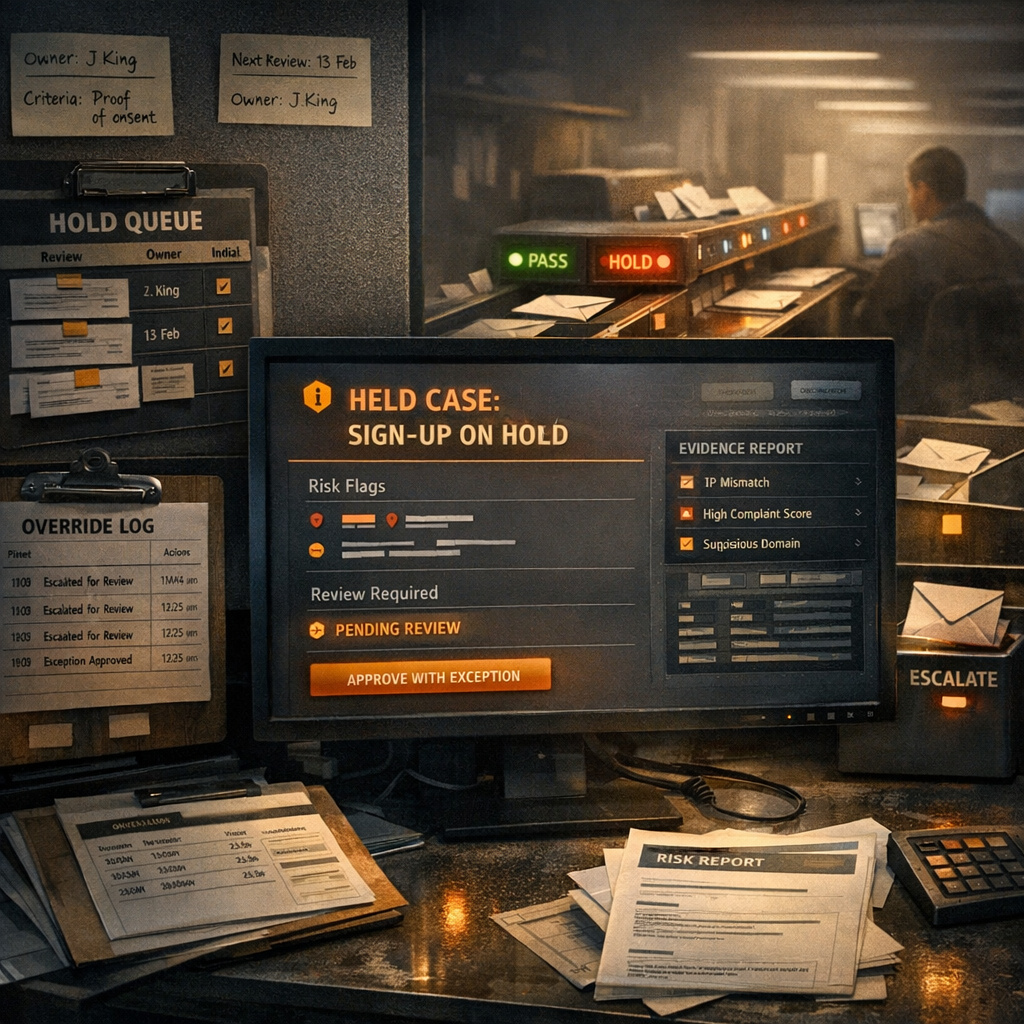

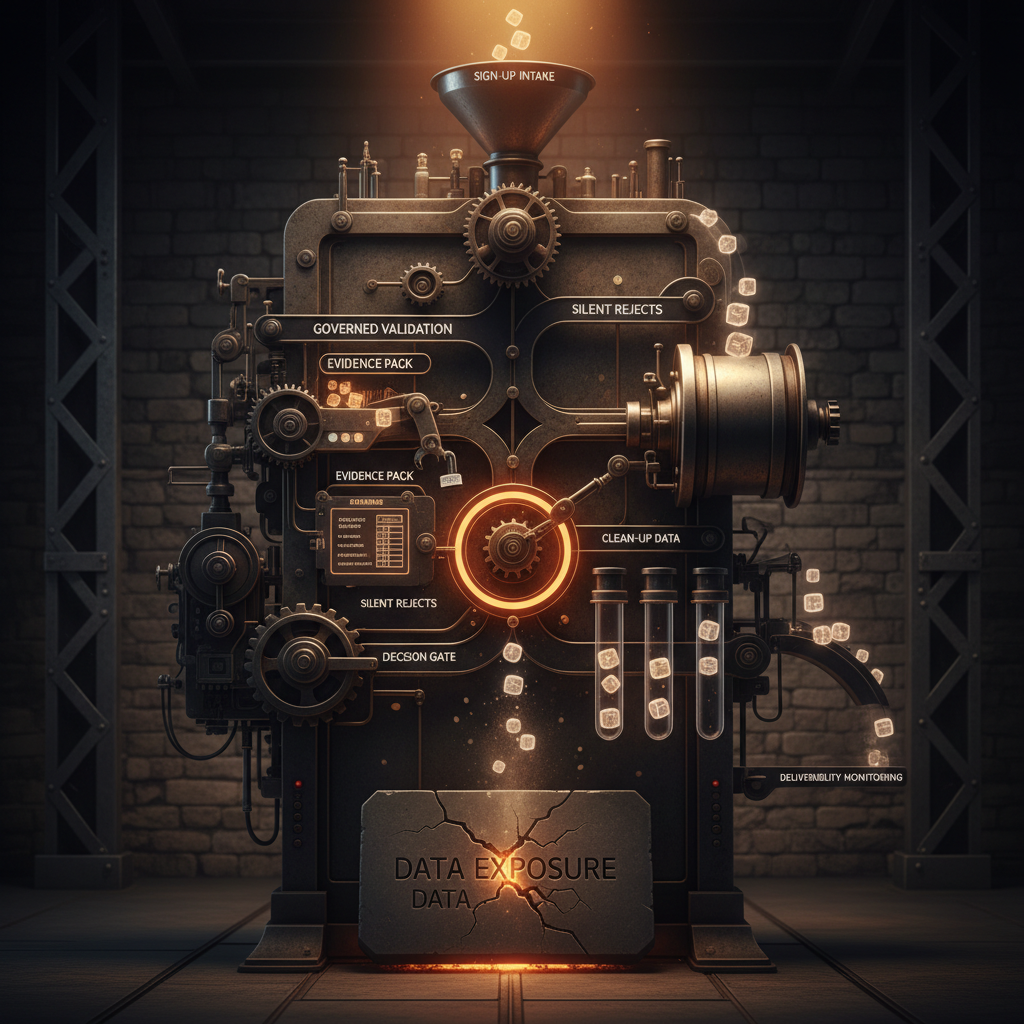

The practical choice sits between governed validation and silent rejection handling. Governed validation checks an address at point of entry, then accepts, flags for review or stops it from entering the system. Silent rejects take a softer route: the form accepts the entry, but problems surface later through bounce logs, complaint patterns, list cleaning and campaign underperformance.
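To make the distinction concrete, here is a minimal Python sketch of governed validation at the point of entry. It is not EVE's API: the function name, the tiny disposable-domain and role-address lists, and the simplified regular expression are all illustrative assumptions. What it shows is the shape of the decision: every entry gets one of three outcomes, and each outcome carries a reason that can be explained later.

```python
import re
from enum import Enum

# Hypothetical example lists; a real deployment would maintain these externally.
DISPOSABLE_DOMAINS = {"mailinator.com", "10minutemail.com"}
ROLE_LOCAL_PARTS = {"admin", "info", "sales", "support", "noreply"}

# Deliberately simple syntax check, not a full RFC 5322 parser.
EMAIL_PATTERN = re.compile(r"^[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}$")


class Decision(Enum):
    ACCEPT = "accept"
    REVIEW = "review"
    REJECT = "reject"


def validate_at_capture(email: str) -> tuple[Decision, str]:
    """Return a decision plus a human-readable reason, so every outcome is explainable."""
    address = email.strip().lower()
    if not EMAIL_PATTERN.match(address):
        return Decision.REJECT, "malformed address"
    local, domain = address.rsplit("@", 1)
    if domain in DISPOSABLE_DOMAINS:
        return Decision.REJECT, "disposable domain"
    if local in ROLE_LOCAL_PARTS:
        return Decision.REVIEW, "role-based address"
    return Decision.ACCEPT, "passed basic checks"
```

A role address such as `info@example.co.uk` is routed to review rather than blocked, which is the "flag for review" path described above.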

Silent rejects appeal by avoiding visible interruption and immediate conversion anxiety. The trade-off is less charming. Toxic data reaches the CRM first, and by the time issues appear in campaign metrics, you pay for it in wasted sends, weaker audience insight and deliverability drag.

If a platform cannot explain its decisions, it does not deserve your budget. Automation without measurable uplift is theatre, not strategy.

Comparative view

The cleanest benchmark is not strict versus lenient. It is governed versus deferred. One approach makes a decision early with visible rules. The other postpones the decision and pushes the cost downstream.

In benchmarked tests, governed validation consistently removes a far higher proportion of fake entries, while silent reject approaches leave a significant volume of toxic data to be caught after sign-up. Earlier intervention cuts bad data before it harms sending performance.

Bounce management shows a similar split. Explicit validation materially reduces bounce rates compared with passive approaches. Catching a bad address after a send is not quality control. It is evidence that quality control was missing.

| Approach | Detection timing | Observed fake entry control | User experience trade-off | Operational risk |
|---|---|---|---|---|
| Governed validation | At sign-up | Significantly higher | Low friction when thresholds are tuned well | Lower bounce and audit risk, with clearer controls |
| Silent rejects | After capture | Lower and delayed | No visible friction at first | Higher clean-up cost and delayed deliverability impact |

Privacy arguments often overcomplicate this. Some teams assume silent handling is safer because less is surfaced to the user. That only holds if the validation layer itself is invasive or opaque. A privacy-preserving setup does the opposite: it validates quickly, stores nothing unnecessary and gives the business an audit trail for how decisions were made. That beats letting questionable data drift through the estate because nobody wanted a hard conversation about thresholds.
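As an illustration of an audit trail that explains decisions without retaining the address, the sketch below logs the form, the rule that fired, the outcome and the score, and nothing else. The field names and the JSON-lines format are assumptions chosen for the example, not a description of how EVE stores anything.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    # Deliberately no email field: the record explains the decision without keeping the address.
    form_id: str   # hypothetical identifier for the capture form
    rule_id: str   # hypothetical name of the rule that decided the outcome
    decision: str
    score: float
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


def audit_entry(form_id: str, rule_id: str, decision: str, score: float) -> str:
    """Serialise one decision as a JSON line suitable for an append-only log."""
    return json.dumps(asdict(AuditRecord(form_id, rule_id, decision, score)))
```

Because the record names the rule rather than the person, it can answer "why was this blocked?" during an audit without becoming another copy of personal data.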

Some disposable domains consistently evade any single detection method. Engines that combine multiple signals, rather than leaning on one static rule, produce fewer false positives and hold up better as fraud patterns shift.
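The sketch below shows why combining signals helps. The signal names and weights are hypothetical; a real engine would tune them on labelled data. In the example, the domain is missing from the disposable list, so a single static rule would let it through, while the combined score still reaches the midpoint because other signals fired.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    name: str
    fired: bool
    weight: float  # hypothetical weight; a real engine would tune this on labelled data


def combined_risk(signals: list[Signal]) -> float:
    """Share of the total signal weight that fired: 0.0 means nothing fired, 1.0 means everything did."""
    total = sum(s.weight for s in signals)
    if total == 0:
        return 0.0
    return sum(s.weight for s in signals if s.fired) / total


# Example: a newly created disposable domain that is not yet on any static list.
example = [
    Signal("known_disposable_domain", fired=False, weight=4.0),
    Signal("high_entropy_local_part", fired=True, weight=3.0),
    Signal("keyboard_walk_in_local_part", fired=True, weight=2.0),
    Signal("no_mx_record", fired=False, weight=1.0),
]
static_rule_flags = next(s.fired for s in example if s.name == "known_disposable_domain")
print(static_rule_flags, combined_risk(example))  # False 0.5
```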

Operational impacts

Governed validation shifts effort to the front of the journey. Integration takes planning, thresholds need tuning and edge cases need a route other than a blunt rejection. The reward is that support, CRM and deliverability teams spend less time cleaning up the same mess later.

Silent reject models flip that burden. They look lighter during implementation, then become expensive in operation. Manual list scrubbing, complaint analysis, catch-all review and campaign troubleshooting all pile up because the system chose not to make a decision when the record first appeared.

EVE is built for that front-loaded model. It responds in under 50ms, uses intelligent caching and can be deployed in privacy-conscious architectures with zero data retention. The useful part is what happens inside that response window: more than 30 detection methods, including keyboard-walk detection, entropy analysis, alias unmasking and behavioural fingerprinting, work together to separate obvious failures from review-worthy cases.
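Two of the named techniques, entropy analysis and keyboard-walk detection, can be sketched generically in a few lines. This is a textbook illustration of each idea under simplifying assumptions (digit and letter rows of a QWERTY layout only, horizontal adjacency only), not EVE's implementation or its thresholds.

```python
import math
from collections import Counter

# Simplified QWERTY layout: digit and letter rows only (an assumption for this sketch).
KEYBOARD_ROWS = ["1234567890", "qwertyuiop", "asdfghjkl", "zxcvbnm"]
KEY_POSITION = {ch: (row, col) for row, keys in enumerate(KEYBOARD_ROWS) for col, ch in enumerate(keys)}


def shannon_entropy(text: str) -> float:
    """Shannon entropy of the character distribution, in bits per character."""
    if not text:
        return 0.0
    n = len(text)
    return sum((count / n) * math.log2(n / count) for count in Counter(text).values())


def longest_keyboard_walk(text: str) -> int:
    """Length of the longest run of horizontally adjacent keys on the same row."""
    text = text.lower()
    best = run = 1 if text else 0
    for prev, cur in zip(text, text[1:]):
        p, c = KEY_POSITION.get(prev), KEY_POSITION.get(cur)
        if p is not None and c is not None and p[0] == c[0] and abs(p[1] - c[1]) == 1:
            run += 1
        else:
            run = 1
        best = max(best, run)
    return best
```

A random-looking local part such as `xk7q2vz9` scores high on entropy, and `qwerty99` contains a six-key walk. Neither proves fraud on its own, which is why checks like these feed a combined score rather than triggering rejection directly.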

That creates a practical trade-off. Tune too tightly, and you risk annoying genuine users with edge-case addresses. Tune too loosely, and toxic data gets in, leaving CRM teams to inherit the problem weeks later. The sensible answer is to direct strictness where signals justify it: high-risk referral sources, competition entry flows, bot-prone campaigns and any form where list quality affects downstream revenue quickly.
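One way to express "strictness where signals justify it" is a small table of named policies keyed by traffic source. The source names and threshold values below are placeholders for illustration, and the risk score is assumed to run from 0 to 1; the point is that each route is a named, reviewable rule rather than an implicit default.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    review_at: float  # risk score at or above which an entry is routed to review
    reject_at: float  # risk score at or above which an entry is stopped


# Hypothetical named policies: stricter for high-risk sources, looser elsewhere.
POLICIES = {
    "default": Thresholds(review_at=0.5, reject_at=0.8),
    "competition": Thresholds(review_at=0.3, reject_at=0.6),
    "referral": Thresholds(review_at=0.3, reject_at=0.6),
}


def route(risk: float, source: str) -> str:
    """Map a risk score to accept, review or reject using the policy for this source."""
    policy = POLICIES.get(source, POLICIES["default"])
    if risk >= policy.reject_at:
        return "reject"
    if risk >= policy.review_at:
        return "review"
    return "accept"
```

With these placeholder values, a risk score of 0.65 is stopped on a competition form but only routed to review on an ordinary newsletter form.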

Where silent rejects still make sense

Silent handling is not useless. It works when the cost of interrupting a genuine user is unusually high and the risk of immediate data pollution is lower. Long-consideration B2B forms can sometimes tolerate softer routing because downstream verification is already part of sales qualification. The same logic is far weaker for high-volume consumer acquisition, competitions or referral-heavy traffic, where poor inputs scale fast and degrade performance before anyone spots the pattern.

Low-friction journeys work when source quality is stable and the review path is real. Governed validation wins when volume, fraud pressure or deliverability sensitivity make delayed decisions expensive.

So yes, there is a place for a hybrid. But hybrid should not become an alibi for vague controls. You still need named thresholds, clear escalation rules and measurable outcomes.

Recommendation and next step

The strongest model for most UK teams is governed validation with selective review paths. Accept the clean records, stop the obvious failures, and route uncertain cases based on risk. Then use monitoring to refine the rules, not to compensate for the absence of rules.
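Using monitoring to refine rules can be as simple as a feedback step that tightens thresholds when accepted records go on to bounce above a target rate. The sketch below assumes risk scores from 0 to 1, a fixed step size and a floor, all hypothetical choices. It only tightens automatically; loosening is left as a deliberate human decision, which keeps the rule set explainable.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Thresholds:
    review_at: float  # risk score at or above which an entry is routed to review
    reject_at: float  # risk score at or above which an entry is stopped


def refine(current: Thresholds, observed_bounce_rate: float, target_bounce_rate: float,
           step: float = 0.05, floor: float = 0.1) -> Thresholds:
    """Tighten both thresholds by `step` when accepted records bounce above target."""
    if observed_bounce_rate <= target_bounce_rate:
        return current  # within target: leave the named rules unchanged
    return replace(
        current,
        review_at=max(floor, current.review_at - step),
        reject_at=max(floor, current.reject_at - step),
    )
```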

Start with a short benchmark of your own operation. Compare source quality by form or referral path. Measure bounce rate, complaint rate and invalid or role-based address volume before and after capture. Review where catch-all domains, disposable services and typo patterns are entering the estate. If you cannot connect those signals to a rule set, you do not yet have deliverability control. You have post hoc observation.
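A first pass at that benchmark needs little more than captures grouped by source. The sketch assumes each record already carries its form or referral path plus bounce and complaint outcomes from your sending platform; the record shape and the short role-address list are illustrative assumptions. Running it on a window before and after a rule change gives a like-for-like comparison.

```python
from collections import defaultdict
from dataclasses import dataclass

ROLE_LOCAL_PARTS = {"admin", "info", "sales", "support", "noreply"}  # illustrative, not exhaustive


@dataclass(frozen=True)
class Capture:
    source: str       # form or referral path
    email: str
    bounced: bool
    complained: bool


def benchmark(captures: list[Capture]) -> dict[str, dict[str, float]]:
    """Per-source capture count plus bounce, complaint and role-based rates as fractions."""
    grouped: dict[str, list[Capture]] = defaultdict(list)
    for c in captures:
        grouped[c.source].append(c)
    report = {}
    for source, rows in grouped.items():
        n = len(rows)
        report[source] = {
            "captures": n,
            "bounce_rate": sum(r.bounced for r in rows) / n,
            "complaint_rate": sum(r.complained for r in rows) / n,
            "role_based_rate": sum(r.email.split("@")[0].lower() in ROLE_LOCAL_PARTS for r in rows) / n,
        }
    return report
```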

EVE fits when you need those decisions to be fast, explainable and privacy-safe. It gives teams a way to reduce toxic data without turning sign-up into an obstacle course. The real trade-off to manage is a moment of intelligent control now or a long tail of preventable damage later. If you want a clear view of where your current setup is leaking risk, book a 30-minute session with the EVE team and we'll walk through it properly. You'll come away with a practical read on your thresholds, likely weak spots and whether governed validation would earn its place in your stack.

Book a frictionless validation walkthrough with our solutions team.