
The short answer: proof-of-purchase sign-ups should not be forced into a crude block-or-pass rule. It looks neat. In practice, it is how good customers get shut out, list quality gets distorted, and inbox risk stays poorly understood.

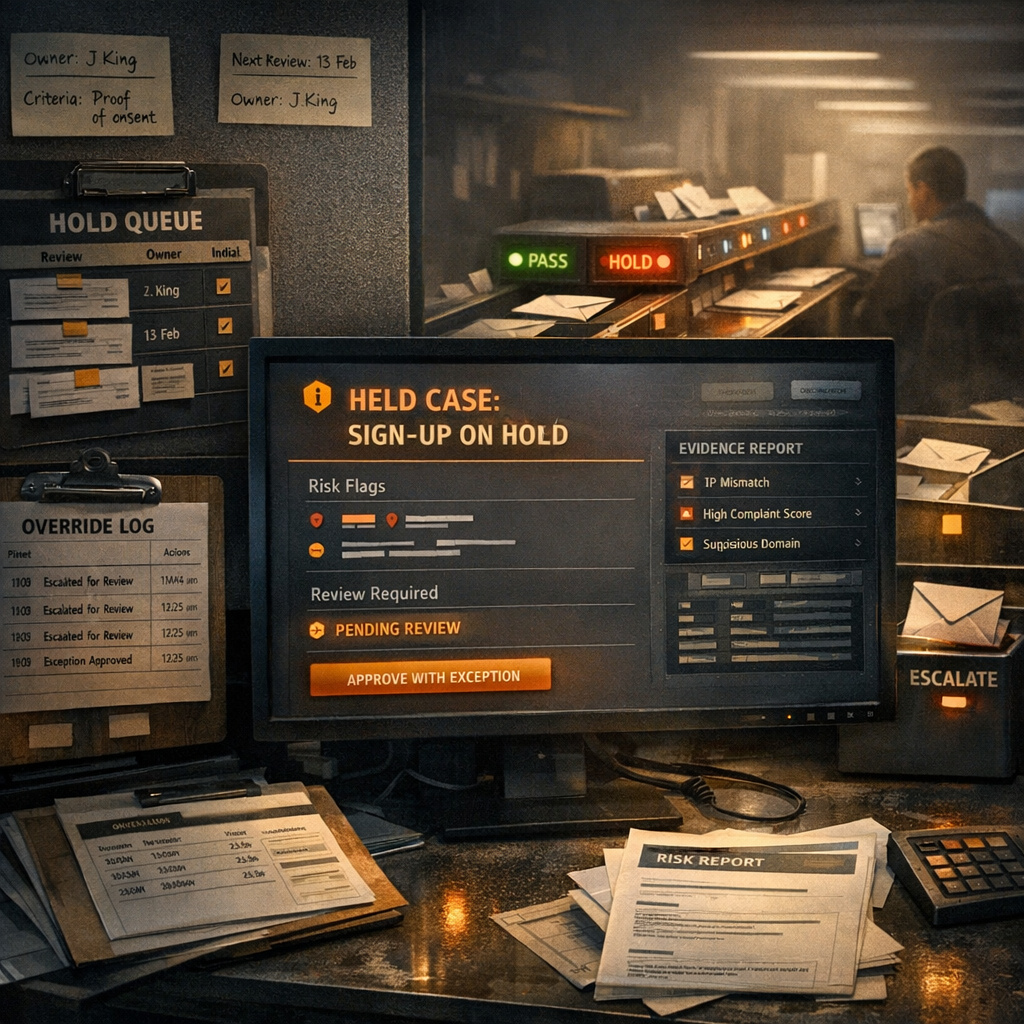

EVE changes the route, not just the label. Instead of reducing the email decision to valid or invalid, it supports automated email risk routing across pass, challenge, hold, review or stop, with the reasoning visible to the team. In proof-of-purchase journeys, that matters. The job is to protect deliverability without making honest claimants absorb the cost of every uncertain signal.

That tension is not a flaw in the process. It is the process. Tighter controls can protect sender reputation. They can also cut into acquisition quality when they trap people who should have passed. So the useful question is not how strict to be in the abstract. It is where to pass, where to challenge, where to hold, and where to stop.

Quick context

Proof-of-purchase sign-ups are awkward because they ask one flow to do two things at once: assess an email address and connect it to a purchase claim, registration or entitlement. The pressure shows up most in retail rewards, promotions and campaign windows where delay costs money and a false reject costs trust.

A common mistake is to treat uncertainty as fraud. A missing purchase match, a delayed feed or an unfamiliar domain might justify a challenge or a hold. None of those signals, by themselves, prove the sign-up should be stopped.

That is where EVE has a clear fit. It is an email judgement engine built for graded decisions rather than silent rejects. It can route a sign-up into pass, challenge, hold, review or stop in real time and keep the decision visible enough for operators to explain, test and change. If a threshold moves, there should be an owner, a date and a change log. Without those, the control is drifting.
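One way to make "an owner, a date and a change log" concrete is a small record per threshold change. The sketch below is illustrative only: the field names, threshold names and sample values are assumptions, not part of EVE.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ThresholdChange:
    """One entry in a threshold change log (all field names are illustrative)."""
    threshold: str    # hypothetical threshold name
    old_value: float
    new_value: float
    owner: str        # person accountable for the change
    changed_on: date
    reason: str


def is_governed(change: ThresholdChange) -> bool:
    """A change counts as governed only if it names an owner and a reason."""
    return bool(change.owner.strip()) and bool(change.reason.strip())


# Made-up sample entries: the second one has no owner and no reason.
log = [
    ThresholdChange("hold_min_risk", 0.40, 0.35, "crm-lead", date(2024, 1, 15),
                    "Soft bounces rising on released sign-ups"),
    ThresholdChange("stop_min_risk", 0.80, 0.90, "", date(2024, 2, 1), ""),
]

# Thresholds that moved without an owner or reason are the ones drifting.
ungoverned = [c.threshold for c in log if not is_governed(c)]
print(ungoverned)
```

A check like this can run as part of the weekly review so that undocumented changes surface before they affect routing.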

The real comparison is not old technology versus new technology. It is governed automated judgement, with thresholds and exception handling, versus a patchwork of hard rejects, mailbox-quality drift and avoidable human handling. That is the trade-off worth measuring.

What risk or deliverability issue needs controlling

The issue is not simply whether a proof-of-purchase record looks suspicious. It is whether the route you choose improves inbox trust without sending too many legitimate sign-ups into friction, delay or loss.

That means looking past a single rule match. A stop decision may be justified when repeated failed purchase checks line up with known abuse patterns, or when the same signal class is already associated with complaints or soft bounce issues. A hold or challenge route suits incomplete but plausible cases, where confidence is not high enough for a clean pass but not low enough for a stop.

Static regex checks and allow-lists still have a role. For this problem, they are not enough on their own. Proof-of-purchase flows produce mixed evidence. Real-time email judgement is more useful when the question is consequence rather than syntax. Should this record proceed, pause, or stop because of what happens downstream? That is a route-state decision.

Explainable decisioning matters for the same reason. If an address is held, someone should be able to see why. If an override happens, the team should be able to trace who made it and what outcome they expected. Otherwise the queue goes dark, and dark queues do not stand up well when complaint rate, conversion or operator workload starts moving the wrong way.

Step-by-step approach

Start with the operating model, not the score. Before anyone debates threshold numbers, decide who owns policy changes, who handles exceptions, and when held or stopped cases will be reviewed. Teams vary on the detail. The principle does not: ownership and review dates need to exist before routing gets more sophisticated.

Then map the route states. In proof-of-purchase sign-ups, three usually do most of the work, with hold and challenge treated as one middle band:

- Pass when purchase and email signals are low risk and acceptance criteria are met.

- Hold or challenge when the record is plausible but confidence is incomplete.

- Stop when the evidence is strong enough that allowing entry creates more downstream cost than value.

Keep the reason for each route concrete. Do not stop a sign-up because the team feels uneasy. Stop it because the evidence is specific and repeatable. Equally, do not build a hold queue with no release criteria. That is just a reject list with slower administration.

| Decision | Typical trigger | Owner | Checkpoint |
|---|---|---|---|
| Pass | Low-risk email signals and valid purchase match | System policy owner | Conversion rate and confirmation completion |
| Hold / challenge | Mixed signals, incomplete purchase evidence, or edge-case pattern | Fraud or data quality owner | Time to resolution and release rate |
| Stop | High-risk pattern or repeated failed checks with clear rule match | CRM lead for policy, operator for logging | Complaint rate, soft bounces, override volume |
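The three route states and their triggers can be sketched as a small routing function that always returns a reason alongside the decision. This is a minimal illustration, not EVE's API: the signal names, the score scale and both thresholds are assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    PASS = "pass"
    HOLD_OR_CHALLENGE = "hold_or_challenge"
    STOP = "stop"


@dataclass(frozen=True)
class SignUp:
    email_risk: float            # hypothetical score in [0, 1], higher is riskier
    purchase_matched: bool       # purchase claim matched a record
    failed_purchase_checks: int  # failed purchase checks so far
    matches_abuse_pattern: bool  # hit on a defined, documented abuse rule


# Illustrative thresholds only; real values need an owner and a change log.
LOW_RISK = 0.3
REPEATED_FAILURES = 3


def route(s: SignUp) -> tuple[Route, str]:
    """Return the route state together with a concrete, loggable reason."""
    if s.matches_abuse_pattern and s.failed_purchase_checks >= REPEATED_FAILURES:
        return Route.STOP, "repeated failed purchase checks with abuse rule match"
    if s.purchase_matched and s.email_risk <= LOW_RISK:
        return Route.PASS, "low-risk email signals and valid purchase match"
    # Everything else is uncertain, not fraudulent: route to the middle band.
    return Route.HOLD_OR_CHALLENGE, "plausible record, confidence incomplete"


decision, reason = route(SignUp(0.1, False, 1, False))
print(decision.value, "|", reason)
```

Note that a missing purchase match alone lands in hold or challenge, never in stop. Stop requires both a documented abuse rule match and repeated failures.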

Once the routes are defined, test them with paired comparisons. Put a stricter rule set beside a graded one on comparable traffic. The question is not which setting sounds safer in a meeting. It is which one gives the better balance of acquisition quality and inbox trust. At minimum, track:

- complaint rate

- soft bounce rate

- false positive rate on held or stopped sign-ups

- confirmation completion rate after a challenge step

If a hold policy reduces soft bounces but confirmation completion collapses, the control is moving cost rather than removing risk. If a stop rule catches obvious abuse and complaint rate improves without dragging down conversion, keep it. That is evidence, not instinct.
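The paired comparison can be reduced to four rates per rule set. The sketch below assumes simple raw counts are available for each arm; the field names and the sample numbers are made up for illustration, and "false positive rate" is taken here to mean the share of held or stopped sign-ups later confirmed legitimate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ArmCounts:
    """Raw counts for one rule set on comparable traffic (names are illustrative)."""
    sent: int                   # emails attempted
    delivered: int              # emails delivered
    complaints: int
    soft_bounces: int
    held_or_stopped: int
    held_or_stopped_legit: int  # later confirmed legitimate
    challenged: int
    challenge_confirmed: int


def rate(numerator: int, denominator: int) -> float:
    """Safe ratio that returns 0.0 when there is no traffic in the denominator."""
    return numerator / denominator if denominator else 0.0


def metrics(c: ArmCounts) -> dict[str, float]:
    return {
        "complaint_rate": rate(c.complaints, c.delivered),
        "soft_bounce_rate": rate(c.soft_bounces, c.sent),
        "false_positive_rate": rate(c.held_or_stopped_legit, c.held_or_stopped),
        "confirmation_completion": rate(c.challenge_confirmed, c.challenged),
    }


# Made-up counts for a stricter and a graded rule set.
strict = ArmCounts(sent=1000, delivered=980, complaints=2, soft_bounces=10,
                   held_or_stopped=200, held_or_stopped_legit=60,
                   challenged=100, challenge_confirmed=40)
graded = ArmCounts(sent=1000, delivered=975, complaints=2, soft_bounces=12,
                   held_or_stopped=80, held_or_stopped_legit=8,
                   challenged=100, challenge_confirmed=70)

strict_m, graded_m = metrics(strict), metrics(graded)
for name in strict_m:
    print(f"{name}: strict={strict_m[name]:.4f} graded={graded_m[name]:.4f}")
```

Reading the four rates side by side is the point: a lower soft bounce rate in one arm means little if its confirmation completion or false positive rate is clearly worse.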

Where EVE fits best

EVE fits best where proof-of-purchase sign-ups sit between marketing performance and operational risk. Retail rewards, promotional claims and consumer registration flows are the obvious cases. These journeys punish both weak controls and blanket stops. One lets poor-quality records through. The other creates friction you feel later in conversion and service follow-up.

The product case is straightforward. EVE grades pass, challenge, hold, review or stop outcomes in real time and keeps the reasoning visible to the team. That makes it better suited to threshold tuning, exception handling and auditability than a static pass-fail layer. Product details are on the EVE page, and wider implementation context sits with Holograph solutions.

That does not remove the need for operating discipline. Holograph may own the implementation side where required, but the team running the sign-up journey still needs to own thresholds, exception rules and review cadence. Software can route the case. It cannot decide whether your acceptance criteria make operational sense.

Pitfalls to avoid

The first is over-correcting. One spike in bad entries does not justify turning the whole journey into a hard-stop exercise. Narrow stop rules can make sense for a defined abuse pattern. Broad ones usually catch too many legitimate users whose record is only incomplete or delayed.

The second is leaving a hold queue to fend for itself. If you add a hold state, define what release looks like, when the case expires and who escalates edge cases. Otherwise you get ad hoc decisions, weak audit trails and slow inconsistency.
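A hold state with explicit release criteria, an expiry window and a named escalation owner might look like the sketch below. The 48-hour window, the two release criteria and the owner label are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

HOLD_TTL = timedelta(hours=48)          # illustrative expiry window
ESCALATION_OWNER = "data-quality-lead"  # hypothetical role that takes expired cases


@dataclass
class HeldCase:
    case_id: str
    held_at: datetime
    purchase_matched: bool = False  # release criterion: purchase evidence arrived
    challenge_passed: bool = False  # release criterion: claimant confirmed


def next_action(case: HeldCase, now: datetime) -> str:
    """Decide what happens to a held case: release, escalate or keep waiting."""
    if case.purchase_matched or case.challenge_passed:
        return "release"
    if now - case.held_at >= HOLD_TTL:
        return "escalate"  # expired without evidence: hand to ESCALATION_OWNER
    return "wait"


held_at = datetime(2024, 1, 1, 9, 0)
case = HeldCase("case-001", held_at)
print(next_action(case, held_at + timedelta(hours=1)))
print(next_action(case, held_at + timedelta(hours=49)))
```

Because every held case resolves to exactly one of three actions, nothing can sit in the queue indefinitely.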

The third is weak override discipline. Overrides are not a side door. They are controlled exceptions. Every release from hold should capture the reason, the operator and the outcome the team intends to watch. Without that, threshold changes become guesswork dressed up as judgement.
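Override discipline is easier to enforce when the log refuses incomplete entries. The following is a minimal sketch under assumed field names; it is not a description of how EVE stores overrides.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class OverrideRecord:
    case_id: str
    operator: str
    action: str        # "release" or "stop"
    reason: str
    watch_metric: str  # the outcome the team intends to watch
    recorded_at: str   # UTC timestamp in ISO 8601 format


def record_override(case_id: str, operator: str, action: str,
                    reason: str, watch_metric: str) -> OverrideRecord:
    """Build an override entry, rejecting it if any required field is blank."""
    if action not in {"release", "stop"}:
        raise ValueError(f"unknown override action: {action!r}")
    required = (("operator", operator), ("reason", reason),
                ("watch_metric", watch_metric))
    for name, value in required:
        if not value.strip():
            raise ValueError(f"override requires a non-empty {name}")
    return OverrideRecord(case_id, operator, action, reason, watch_metric,
                          datetime.now(timezone.utc).isoformat())


entry = record_override("case-001", "operator-7", "release",
                        "purchase feed caught up after delay", "soft_bounce_rate")
print(entry.action, entry.watch_metric)
```

An override with a blank reason raises `ValueError` instead of being saved, which keeps the side door closed by construction.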

There is a simpler logic error underneath all of this: assuming tighter controls automatically create trust. Sometimes they do. Sometimes they create silent loss. Sender reputation improves when controls are targeted and measurable, not when every uncertain record is treated as a threat.

Checklist you can reuse

Keep the setup practical. If a control cannot be reviewed against a metric, it is too vague to run.

- Set policy ownership: name the owner for threshold changes and log the review date.

- Define acceptance criteria: state what must be true for a sign-up to pass, hold, challenge or stop.

- Track operational measures weekly: complaint rate, soft bounces, hold resolution time, and false positive rate are the minimum set.

- Keep an override log: record who released or stopped a sign-up, why, and what happened next.

- Review dependencies: if purchase checks rely on another feed or service, document the risk and mitigation before go-live.

- Run threshold comparisons: test one stricter setting against one more permissive setting on comparable traffic and keep the change log.

A simple cadence is enough if it is real. Weekly for queue health and exceptions. Monthly for threshold review. Quarterly for a broader policy check against campaign performance and deliverability controls.

Acceptance criteria often need tightening between review windows. That is normal. The better test of whether a rule is working is consistency: would two operators looking at the same held record reach the same outcome for the same reason? If not, the rule needs work.
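The two-operator question can be turned into a simple agreement rate over cases that both operators reviewed. In this sketch, agreement requires the same outcome and the same reason; the case IDs, outcomes and reason codes are invented for illustration.

```python
# Each operator's decisions: case ID -> (outcome, reason code).
Decisions = dict[str, tuple[str, str]]


def agreement_rate(a: Decisions, b: Decisions) -> float:
    """Share of jointly reviewed cases with identical outcome AND reason."""
    shared = a.keys() & b.keys()
    if not shared:
        return 0.0
    agreed = sum(1 for case_id in shared if a[case_id] == b[case_id])
    return agreed / len(shared)


operator_a: Decisions = {
    "c1": ("release", "purchase_matched"),
    "c2": ("stop", "abuse_rule"),
    "c3": ("release", "challenge_passed"),
    "c4": ("release", "purchase_matched"),
}
operator_b: Decisions = {
    "c1": ("release", "purchase_matched"),
    "c2": ("stop", "abuse_rule"),
    "c3": ("release", "purchase_matched"),  # same outcome, different reason
    "c4": ("stop", "abuse_rule"),           # different outcome
}

print(agreement_rate(operator_a, operator_b))
```

Counting a same-outcome, different-reason pair as a disagreement is a deliberate choice here: it flags rules whose wording lets operators reach the right answer by accident.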

What good execution looks like

Good execution is not a tidier queue. It is a system that makes the next decision easier to justify. You should be able to answer four questions quickly: what happened, why it happened, who owns the fix, and by when.

For proof-of-purchase sign-ups, that usually means:

- a documented threshold policy with dated revisions

- a short hold path for uncertain but recoverable cases

- clear stop conditions tied to observable risk

- weekly review of metrics that show whether inbox trust is improving

If one rule creates a surge in held records, inspect the rule. Do not mistake backlog for caution. If overrides are rising, either the threshold is off or the acceptance criteria are too loose to guide anyone properly. If complaint rate stays flat while conversion falls, the control may be costing more than it saves.
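These three warning signs can be checked mechanically between two weekly snapshots. The sketch below is one possible reading: the surge factor, the band used to treat complaint rate as "flat" and the snapshot fields are all assumptions to be tuned by the policy owner.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WeeklySnapshot:
    held: int               # records currently or newly held this week
    overrides: int          # manual overrides this week
    complaint_rate: float
    conversion_rate: float


SURGE_FACTOR = 1.5  # illustrative: held volume up by more than 50%
FLAT_BAND = 0.0005  # illustrative: absolute change still treated as flat


def diagnose(prev: WeeklySnapshot, curr: WeeklySnapshot) -> list[str]:
    """Return plain-language findings comparing this week with last week."""
    findings = []
    if curr.held > prev.held * SURGE_FACTOR:
        findings.append("held surge: inspect the rule driving it")
    if curr.overrides > prev.overrides:
        findings.append("overrides rising: revisit threshold or acceptance criteria")
    complaint_flat = abs(curr.complaint_rate - prev.complaint_rate) <= FLAT_BAND
    if complaint_flat and curr.conversion_rate < prev.conversion_rate:
        findings.append("complaints flat, conversion down: control may cost more than it saves")
    return findings


last_week = WeeklySnapshot(held=100, overrides=5, complaint_rate=0.002, conversion_rate=0.40)
this_week = WeeklySnapshot(held=180, overrides=5, complaint_rate=0.002, conversion_rate=0.35)
for finding in diagnose(last_week, this_week):
    print(finding)
```

The findings are prompts for the weekly review, not automatic policy changes. Someone still has to own the fix and the date.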

EVE is strongest when teams use it as a control system rather than a gate. The value is not only in scoring emails. It is in making automated email risk routing explainable, adjustable and accountable, without defaulting to manual review for every awkward case.

Closing guidance

The practical decision is not whether to be strict or lenient. It is where to challenge, where to hold, and where to pass so inbox trust improves without punishing legitimate sign-ups. Keep the policy measurable. Keep the owners visible. Keep the review cycle honest.

If you want to pressure-test a proof-of-purchase flow, EVE offers a more controlled way to route risk, explain decisions and tune thresholds without falling back on blunt rejects. If this is on your roadmap, a straightforward conversation is probably the next sensible step: EVE can help you run a controlled pilot, measure the outcome, and scale only when the evidence is clear.