Executive summary: Teams often tighten sign-up controls to protect inbox trust, then lose legitimate customers in the same move. That is the contradiction. A hard reject looks clean because the route ends there. It also removes evidence. You lose the record, the context and, in many teams, any clear account of what good demand was turned away.

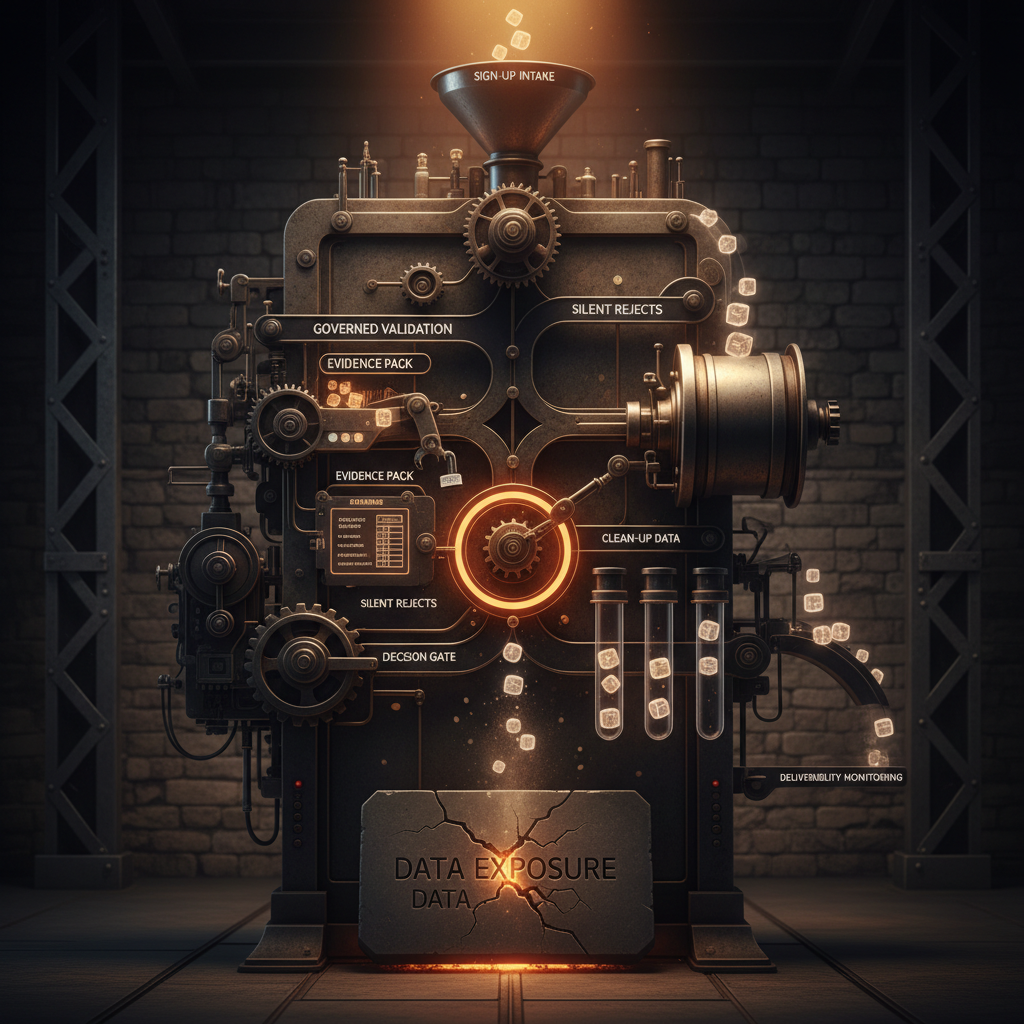

The shortest honest answer is this: EVE uses graded email judgement instead of a simple valid or invalid result. It sends sign-ups to pass, hold, challenge or stop through explainable decisioning. That changes the operating model. Teams can see why a record moved into each route, assign an owner to exceptions, and tune thresholds against outcomes they can measure, including hold-release rate, complaint rate and hard bounces.

For UK teams, that is the shift that matters. This is not smart scoring versus old tooling. It is governed routing with visible reasoning versus silent rejects and mailbox-quality drift. The proof question is plain enough: can you protect deliverability without blocking good users? EVE is built around that question, with real-time sign-up decisions and visible reasoning for the team, as set out in the EVE solution overview and broader Holograph solutions material.

The short answer

If a team is choosing between static checks and a real email judgement engine, start with the cost of uncertainty. Static regex checks, syntax rules and allow-lists can catch obvious failures. They are weak in the middle. A typo, a new corporate domain or an unusual but genuine address can be stopped as if it were clearly unsafe. The rule stays tidy. The consequence for the customer does not.

EVE fits where a false positive has a commercial cost. Retail sign-ups, CRM capture, loyalty joins, promotions and reward flows all produce borderline records that do not justify an outright stop. In those journeys, automated email risk routing gives teams more control than a binary gate because it separates low risk, high risk and uncertain records into routes with consequences the business can own.

What risk or deliverability issue needs controlling

The issue is not whether an address passes a form-level check. It is whether sign-up controls protect sender trust without quietly cutting away legitimate acquisition. When uncertainty is treated as failure, the immediate report can look stricter. What follows is usually less flattering: reduced growth, weaker list quality and no reliable account of which legitimate users were blocked.

That is where graded routing starts to earn its place. Low-risk sign-ups pass. High-risk ones stop. The middle band goes to hold or challenge, where the friction is visible and the business can judge whether it is warranted. If that middle band is not measured, threshold setting slips into guesswork dressed up as control.
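
The four-way split can be sketched as a mapping from a risk score to a route. This is a minimal illustration, not EVE's interface, which this article does not describe. It assumes a hypothetical score from 0.0 (low risk) to 1.0 (high risk), hypothetical cut-off values, and a choice to send the lower part of the middle band to challenge and the upper part to hold. The point it shows is that every route returns a decision with its reasons attached, including a stop.

```python
from dataclasses import dataclass, field
from enum import Enum


class Route(Enum):
    PASS = "pass"
    CHALLENGE = "challenge"
    HOLD = "hold"
    STOP = "stop"


@dataclass(frozen=True)
class Thresholds:
    # Hypothetical cut-offs on a 0.0 (low risk) to 1.0 (high risk) score.
    pass_below: float = 0.30
    challenge_below: float = 0.55
    hold_below: float = 0.80


@dataclass
class Decision:
    route: Route
    score: float
    reasons: list[str] = field(default_factory=list)  # reason codes kept for review


def route_signup(score: float, reasons: list[str], t: Thresholds) -> Decision:
    """Map a risk score to one of four routes instead of a valid/invalid flag."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0.0 and 1.0")
    if score < t.pass_below:
        route = Route.PASS          # low risk: accept
    elif score < t.challenge_below:
        route = Route.CHALLENGE     # lower middle band: ask the user to confirm
    elif score < t.hold_below:
        route = Route.HOLD          # upper middle band: queue for a named owner
    else:
        route = Route.STOP          # high risk: reject, but the record is still returned
    return Decision(route=route, score=score, reasons=list(reasons))


if __name__ == "__main__":
    d = route_signup(0.62, ["new_domain"], Thresholds())
    print(d.route.value, d.reasons)  # hold ['new_domain']
```

Keeping the thresholds in one object makes them easy to review and change against measured outcomes, rather than scattering fixed rules through the form code.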

For UK teams running retail, CRM or reward flows, two checkpoints matter early. First, the share of sign-ups held or rejected at form level. Second, the share of held records later confirmed as legitimate. Those numbers tell you whether your controls are protecting trust or simply shrinking acquisition with more severe route rules.
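
The two checkpoints are simple ratios. The sketch below assumes a hypothetical record shape with a route string and a `confirmed_legitimate` flag that stays `None` until someone reviews the record. It counts hold and stop as "held or rejected", which is one reasonable reading of the first checkpoint.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SignupRecord:
    route: str                                   # "pass", "challenge", "hold" or "stop"
    confirmed_legitimate: Optional[bool] = None  # None until someone reviews the record


def early_checkpoints(records: list[SignupRecord]) -> dict[str, float]:
    """Return the two early checkpoint ratios for a batch of sign-ups."""
    held = [r for r in records if r.route == "hold"]
    held_or_rejected = sum(1 for r in records if r.route in ("hold", "stop"))
    held_confirmed = sum(1 for r in held if r.confirmed_legitimate is True)
    return {
        # Checkpoint 1: share of sign-ups held or rejected at form level.
        "held_or_rejected_share": held_or_rejected / len(records) if records else 0.0,
        # Checkpoint 2: share of held sign-ups later confirmed as legitimate.
        "held_confirmed_legitimate_share": held_confirmed / len(held) if held else 0.0,
    }
```

Unreviewed holds still count in the denominator of the second ratio, so it is a floor rather than a final figure until the queue is cleared.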

Options and trade-offs

There are two distinct operating models here. The difference is not theoretical. It is what each model lets a team prove.

| Factor | Hard-reject model | Graded judgement model with EVE |
|---|---|---|
| Decision mechanism | Binary valid or invalid using fixed rules, syntax checks or blocklists. | Pass, hold, challenge or stop using automated email risk routing and adjustable thresholds. |
| What the team can see | Often limited. Support and CRM teams may only see that a sign-up failed. | Reason codes, scores and override history support explainable decisioning. |
| Conversion impact | Fast to enforce, but false positives can disappear without a trace. | Ambiguous cases are contained rather than discarded, which reduces avoidable loss. |
| Operational load | Light: there is no queue to staff, but little visibility into uncertain cases once a sign-up is rejected. | Needs a named owner for the hold queue, review rules and release criteria. |
| Control quality | Simple to apply, with limited scope to tune uncertain outcomes. | Thresholds, reasons and overrides can be reviewed against measurable outcomes over time. |

The weak point in the strict model is not that it rejects records. Sometimes it should. The weak point is that many teams cannot say which good sign-ups were blocked, who approved the rule or what metric justified the trade-off. If the owner, review date and acceptance criteria are missing, the control may still be live, but it is not well governed.

The case for graded judgement rests on evidence. Teams can compare hold volume with release volume, track engagement from released records, and test whether a threshold is too severe or too loose. That is a more useful benchmark than treating reject rate as success on its own.
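
Testing whether a threshold is too severe or too loose can be done by replaying reviewed sign-ups against candidate values. This sketch assumes a hypothetical history of `(risk_score, was_legitimate)` pairs for records whose outcome is already known; the function names are illustrative only.

```python
def stop_threshold_report(history: list[tuple[float, bool]], stop_at: float) -> dict[str, int]:
    """Replay reviewed sign-ups against a candidate stop threshold.

    history holds (risk_score, was_legitimate) pairs for records whose outcome is known.
    """
    stopped = [legit for score, legit in history if score >= stop_at]
    allowed = [legit for score, legit in history if score < stop_at]
    return {
        "stopped": len(stopped),
        # Good users this threshold would turn away.
        "legitimate_stopped": sum(stopped),
        # Risky records this threshold would let through.
        "bad_allowed": sum(1 for legit in allowed if not legit),
    }


def sweep(history: list[tuple[float, bool]], candidates: list[float]) -> list[tuple[float, dict[str, int]]]:
    """Compare several thresholds side by side instead of judging reject rate alone."""
    return [(c, stop_threshold_report(history, c)) for c in candidates]


if __name__ == "__main__":
    reviewed = [(0.2, True), (0.5, True), (0.7, False), (0.85, True), (0.95, False)]
    for threshold, report in sweep(reviewed, [0.6, 0.8, 0.9]):
        print(threshold, report)
```

Reading the two error counts together makes the trade-off explicit: a lower threshold raises the reject rate, and the sweep shows whether that buys fewer bad records or only more lost legitimate ones.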

Where EVE fits best

EVE is strongest where sign-up quality and sender trust have to be managed together. That includes acquisition journeys where the list is valuable enough to protect, but broad enough that unusual legitimate addresses will appear. CRM teams, fraud operators and data quality owners usually need the same thing here: visible reasoning, adjustable thresholds and exception handling that does not harden into a permanent review queue.

That distinction matters because the comparison is often framed badly. It is not really real-time email judgement versus fraud control. It is real-time judgement versus blunt blocking. The more useful question is which approach gives the team a clearer route to protect deliverability while keeping legitimate users in play.

Related products such as QuickThought, DNA and MAIA may support wider decisioning, data handling or orchestration needs, but EVE’s job in this context is narrow and clear: make sign-up route states visible, make threshold decisions explainable, and keep exception handling governable.

Where overrides help, and where they go wrong

An override helps when it is narrow, logged and time-bound. It causes trouble when it becomes a standing escape hatch for commercial pressure. That is why override policy belongs inside the operating model, not as a note in the margin.

The workable version is tighter than many teams allow. Define what can be overridden, who owns the decision, what evidence is required and when the temporary rule expires. A new partner domain, a known campaign source or a cluster of sign-ups later confirmed as benign may justify an exception. A weekly push to make the volume look healthier does not.

The override log needs to be plain and complete: reason code, owner, date, expiry or review date, and outcome. Two measures show whether the process is behaving. First, override volume as a share of total held sign-ups. Second, the bounce or complaint rate from overridden records compared with standard pass traffic. If overridden traffic performs materially worse, the answer is not more exceptions. It is threshold tuning.
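
The override log and its two measures can be modelled in a few lines. This is a sketch under stated assumptions: the reason codes in `ALLOWED_REASONS` are hypothetical labels for the exceptions named above, and "materially worse" is taken as a hypothetical 50% relative margin over standard pass traffic.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical reason codes, modelled on the exceptions named in the text.
ALLOWED_REASONS = {"partner_domain", "known_campaign_source", "confirmed_benign_cluster"}


@dataclass(frozen=True)
class OverrideEntry:
    reason_code: str
    owner: str
    logged_on: date
    review_by: date   # expiry or review date
    outcome: str      # e.g. "released", "reverted", "pending"

    def is_valid(self) -> bool:
        """Complete and within policy: allowed reason, named owner, outcome, later review date."""
        return (
            self.reason_code in ALLOWED_REASONS
            and bool(self.owner.strip())
            and bool(self.outcome.strip())
            and self.review_by > self.logged_on
        )

    def is_expired(self, today: date) -> bool:
        return today > self.review_by


def override_share(override_count: int, held_count: int) -> float:
    """Measure 1: overrides as a share of total held sign-ups."""
    return override_count / held_count if held_count else 0.0


def underperforms(override_rate: float, pass_rate: float, margin: float = 0.5) -> bool:
    """Measure 2: is the bounce or complaint rate from overridden records materially worse?

    'Materially' is a hypothetical relative margin over standard pass traffic.
    """
    if pass_rate == 0.0:
        return override_rate > 0.0
    return override_rate > pass_rate * (1 + margin)
```

A request such as a weekly volume push fails `is_valid` simply because it has no allowed reason code, which keeps the policy out of the margin and inside the log.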

There is a second control issue here. EVE sits in a market where email authentication and sender confidence are under closer scrutiny. If SPF, DKIM or DMARC controls are weak, tolerance for poor acquisition data falls quickly. That does not mean every uncertain address should be stopped. It means deliverability controls and sign-up routing need to be governed together, not split across teams working to different thresholds.

Risk and mitigation

The risks are predictable, which makes them easier to catch early.

- Risk: hold queues grow and nobody owns them. Mitigation: assign a CRM or data quality owner, set a review cadence, and report queue age weekly.

- Risk: thresholds are too severe, creating hidden conversion loss. Mitigation: compare reject, hold and release rates against downstream engagement and hard bounce performance.

- Risk: teams overuse overrides to rescue volume. Mitigation: require logged evidence, approval rules and expiry dates.

- Risk: the model becomes opaque to non-technical teams. Mitigation: expose reason codes and simple acceptance criteria in the operational dashboard.
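
The weekly queue-age report from the first mitigation needs only the time each open hold was created. A minimal sketch, assuming a hypothetical three-day review target; the function name and output keys are illustrative.

```python
from datetime import datetime, timedelta


def queue_age_report(held_at: list[datetime], now: datetime,
                     target: timedelta = timedelta(days=3)) -> dict[str, float]:
    """Summarise open holds: how many, how old on average, the oldest, and how many are overdue."""
    ages = [now - t for t in held_at]
    if not ages:
        return {"open": 0, "average_age_days": 0.0, "oldest_age_days": 0.0, "over_target": 0}
    average = sum(ages, timedelta()) / len(ages)
    return {
        "open": len(ages),
        "average_age_days": average.total_seconds() / 86400,
        "oldest_age_days": max(ages).total_seconds() / 86400,
        # Holds older than the (hypothetical) review target are the ones nobody is owning.
        "over_target": sum(1 for a in ages if a > target),
    }
```

Reporting the oldest age alongside the average matters: a single forgotten hold can sit for weeks while the average still looks acceptable.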

The failure point is usually governance rather than the model. Teams can work with ambiguity if the owner is named, the review date exists and the measures are visible. Confidence drains away when held records sit untouched, exceptions appear without notes, or one campaign gets special treatment with no recorded basis.

Borderline records are where the work really sits. New domains, affiliate traffic, proof-of-purchase journeys and prefilled lead forms all produce awkward middle states. That is exactly why graded routing is more useful than a hard reject. It contains uncertainty without pretending it has been resolved.

Recommended path

The sensible route is a short pilot, not a transformation programme. Start with the last 90 days of failed sign-ups and classify what was rejected, what would have been held, and what evidence exists to estimate false positives. The owner should be the CRM lead or data quality owner. The output is a baseline: reject rate, estimated false-positive rate and current hard bounce rate from accepted sign-ups. Without that baseline, any benchmark claim is just theatre.
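
The baseline is three rates plus one derived estimate. This sketch assumes the false-positive rate comes from a reviewed sample of rejected sign-ups; the parameter names are hypothetical, and the estimate is only as good as the evidence behind that review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Baseline:
    reject_rate: float
    estimated_false_positive_rate: float
    hard_bounce_rate: float
    estimated_good_signups_lost: float


def build_baseline(
    attempts: int,
    rejected: int,
    reviewed_rejects: int,
    reviewed_found_legitimate: int,
    accepted_mailed: int,
    hard_bounces: int,
) -> Baseline:
    """Summarise a 90-day window of sign-ups before any pilot starts."""
    if not (attempts > 0 and 0 <= rejected <= attempts):
        raise ValueError("rejected must be between 0 and attempts")
    if not 0 <= reviewed_found_legitimate <= reviewed_rejects <= rejected:
        raise ValueError("the review sample must be drawn from the rejected sign-ups")
    # Share of the reviewed rejects that evidence suggests were genuine users.
    fp_rate = reviewed_found_legitimate / reviewed_rejects if reviewed_rejects else 0.0
    return Baseline(
        reject_rate=rejected / attempts,
        estimated_false_positive_rate=fp_rate,
        hard_bounce_rate=hard_bounces / accepted_mailed if accepted_mailed else 0.0,
        # Scale the sample rate up to all rejects for a rough count of good users lost.
        estimated_good_signups_lost=rejected * fp_rate,
    )
```

For example, 800 rejects out of 10,000 attempts, with 30 of 200 reviewed rejects showing evidence of legitimacy, gives an 8% reject rate, a 15% estimated false-positive rate and roughly 120 good sign-ups lost in the window.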

Then run EVE in parallel on one sign-up journey for one marketing cycle. Keep the scope tight: one source, one owner, one review rhythm. The checks should be explicit:

- volume routed to pass, hold, challenge and stop

- percentage of held sign-ups later released

- hard bounce rate and complaint rate by route

- engagement from released records compared with standard pass traffic

- override count, owner compliance and average queue age
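
Most of the checks above can be computed from one table of pilot records. This sketch assumes a hypothetical record shape and a shadow pilot, where EVE's route is recorded while the existing process continues. That is what makes bounce and complaint rates measurable even for records EVE would have stopped. Override and queue-age measures are left out to keep it short.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class PilotRecord:
    route: str              # route EVE would have chosen: "pass", "challenge", "hold" or "stop"
    released: bool = False  # for holds: later released by the owner
    hard_bounced: bool = False
    complained: bool = False
    engaged: bool = False


def _rate(flags: list[bool]) -> float:
    return sum(flags) / len(flags) if flags else 0.0


def pilot_summary(records: list[PilotRecord]) -> dict:
    """Volume, bounce and complaint rates by route, plus release and engagement comparisons."""
    by_route: dict[str, list[PilotRecord]] = defaultdict(list)
    for r in records:
        by_route[r.route].append(r)
    held = by_route.get("hold", [])
    released = [r for r in held if r.released]
    passed = by_route.get("pass", [])
    return {
        "volume": {route: len(rs) for route, rs in by_route.items()},
        "hold_release_rate": _rate([r.released for r in held]),
        "hard_bounce_rate": {route: _rate([r.hard_bounced for r in rs]) for route, rs in by_route.items()},
        "complaint_rate": {route: _rate([r.complained for r in rs]) for route, rs in by_route.items()},
        "engagement_released": _rate([r.engaged for r in released]),
        "engagement_pass": _rate([r.engaged for r in passed]),
    }
```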

If the pilot shows that held-and-released records perform close to standard pass traffic while hard rejects fall, there is evidence for wider rollout. If complaint or bounce performance worsens, tighten the threshold or narrow the override conditions. That is what a benchmark is for. It should end in a decision.
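
The decision rule can be written down so the pilot really does end in a decision. This sketch assumes "close to standard pass traffic" means within a hypothetical absolute tolerance of 0.2 percentage points. It also adds a third outcome, "review", for the case the text leaves open: performance holds up but hard rejects do not fall.

```python
def pilot_decision(
    released_bounce: float,
    pass_bounce: float,
    released_complaint: float,
    pass_complaint: float,
    baseline_reject_rate: float,
    pilot_stop_rate: float,
    tolerance: float = 0.002,  # hypothetical: 0.2 percentage points
) -> str:
    """Return "tighten", "expand" or "review" from the pilot's headline measures."""
    worse = (
        released_bounce - pass_bounce > tolerance
        or released_complaint - pass_complaint > tolerance
    )
    if worse:
        # Released records perform materially worse: tighten thresholds or narrow overrides.
        return "tighten"
    if pilot_stop_rate < baseline_reject_rate:
        # Released records perform close to pass traffic and hard rejects fell.
        return "expand"
    return "review"
```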

The watchpoint is simple. The visible risk is the bad address that gets through. The less visible loss is the good customer turned away with no record of the cost. EVE gives teams a way to make that trade-off visible, govern it properly and improve it over time. If you want to map the owners, dates and acceptance criteria for a pilot, contact EVE. We can help define thresholds, set override rules and establish a path to green without promising more than the evidence supports.