
More memory should ease editorial decisions. Often, it slows them down. Approval-heavy teams falter not because they lack history but because they haul stale context into choices that depend on current policy and on whether the draft is ready now.

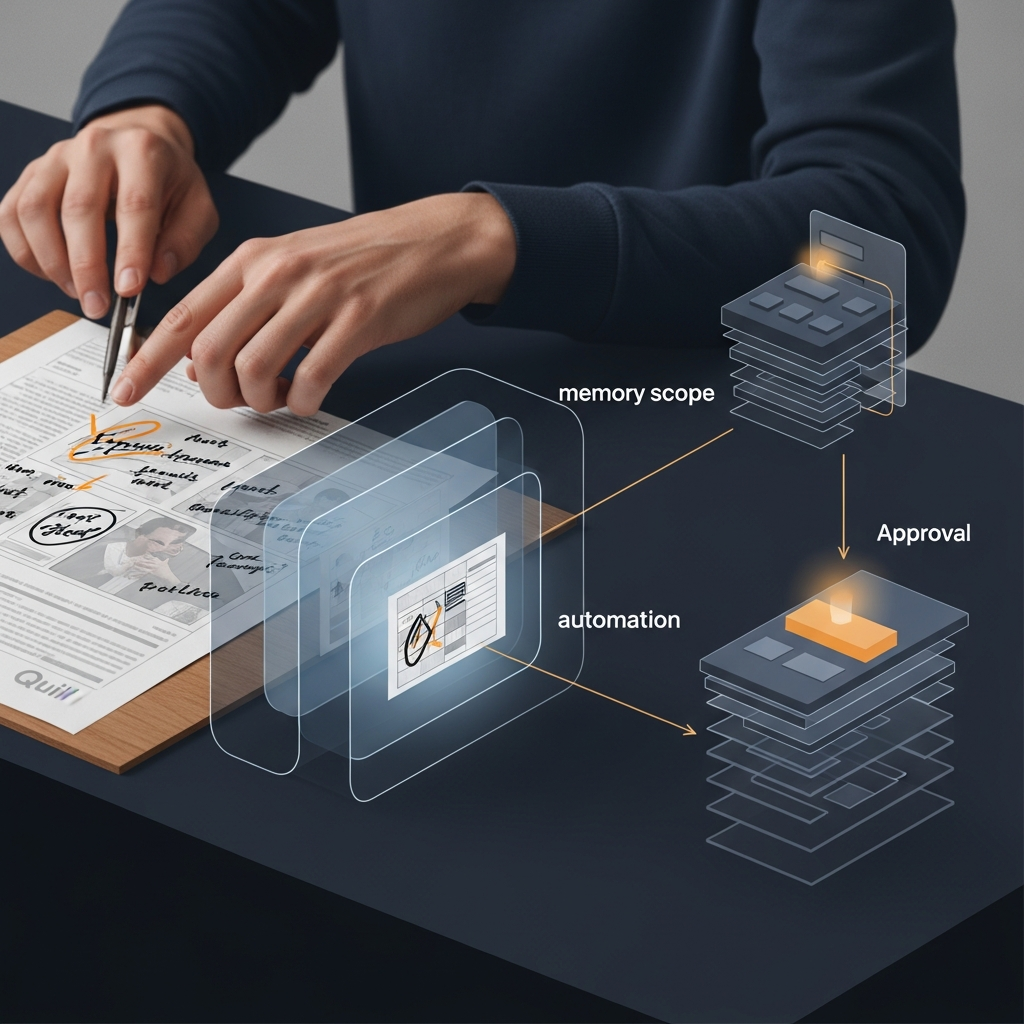

Data shows that a significant share of drafting time goes to re-justifying past decisions. That lost time points to a common error: optimising for total recall when the design should prioritise disciplined forgetting. Scoped memory addresses this by limiting what the system carries forward at each workflow stage. In Quill, the question shifts from how much to remember to what is still relevant now. In practice, this trims review loops, clarifies approvals and keeps automation rooted in evidence, not theatre.

What is being decided

The real decision pits unlimited recall against bounded context. They sound similar until applied to regulated publishing.

When every draft, comment and legacy edge case stays live forever, reviewers re-litigate old decisions. That looks thorough. In practice, it lengthens approval mean time to resolve (MTTR), muddies accountability and gives outdated guidance equal weight with current policy. Platforms that cannot explain their decisions do not deserve your budget.

The early assumption that more retained context means better control often backfires. Teams with unrestricted setups typically show stronger archives but weaker flow. Approval MTTR can rise sharply over months as reviewers trawl through irrelevant prior drafts. A system with perfect recall can still show poor judgement. Scoped memory fixes this by tying context to relevance windows, signal confidence and workflow stage. Operational memory retains only what aids the next decision; record memory preserves evidence for audits. Keeping the two apart avoids overcomplication and keeps decisions clean for working editors.
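As a rough illustration of what "tying context to relevance windows, signal confidence and workflow stage" could mean in code, here is a minimal sketch. It is not Quill's API: `MemoryItem`, `scoped_context`, the 90-day window and the 0.6 confidence threshold are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class MemoryItem:
    """One remembered note. All field names are illustrative assumptions."""
    text: str
    created_at: datetime
    confidence: float   # assumed 0.0-1.0 signal-confidence score
    stages: frozenset   # workflow stages where the note is relevant


def scoped_context(items, stage, now,
                   window=timedelta(days=90), min_confidence=0.6):
    """Return only the items that pass all three scope checks for this stage."""
    return [
        item for item in items
        if stage in item.stages                  # workflow stage
        and now - item.created_at <= window      # relevance window
        and item.confidence >= min_confidence    # signal confidence
    ]
```

Items that fail a check are simply not shown at that stage. Nothing is deleted here, so the same list can still feed a separate record store for audits.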

Comparative view

Compare ad hoc memory with governed memory. Ad hoc systems store everything because storage is cheap and product teams dislike data loss. The immediate benefit is obvious: fewer rules, less setup, fewer initial arguments. The cost arrives later. Reviewers inherit noise. Preferences drift into pseudo-policy. Contradictions accumulate.

Scoped memory requires more design upfront. Teams define what stays visible during briefing, what follows a draft into approval, and what is preserved after publication as evidence. Setup takes work. It also creates a workflow people can actually explain. Data from UK content teams tells a plain story. Teams with scoped memory consistently see shorter approval MTTR, lower final-draft error rates and higher reviewer satisfaction. They move faster with fewer errors because the workflow forces sharper choices about relevant evidence. The trade-off is clear: more discipline in setup, less drag in delivery.

The more interesting comparison is behavioural. In ad hoc systems, reviewers often treat old comments as soft precedent even when the brief, policy position or audience has changed. In scoped systems, they are pushed back towards current signals and approval criteria. That reduces memory drift, where yesterday's preference becomes today's accidental rule. Teams sometimes confuse recoverability with usefulness. Records are necessary, but the drafter does not need every historical wobble in the next approval pack.

How it changes day-to-day operations

Effects appear first in briefing. When memory is bounded to the active campaign, current quarter or latest policy cycle, briefs get shorter and cleaner. One UK financial publishing team cut briefing documents from 15 pages to 5 by limiting context to current priorities and approved source material. Initial draft time fell. Less context yields a better start.

Review improves because reviewers have fewer excuses to revisit old arguments. One process, tested between late March and early April, retained every edit indefinitely and showed the risk: a reviewer fixated on a wording choice from months earlier and stalled a time-sensitive piece over an irrelevant issue. Capping visible memory at the last two approval rounds, while preserving the final evidence trail elsewhere, halved review time.
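The cap on visible rounds is simple to express. The sketch below assumes each comment carries a round number; `Comment` and `visible_comments` are hypothetical names, and the default of two rounds mirrors the example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Comment:
    """A review comment. Field names are illustrative assumptions."""
    round_number: int
    author: str
    text: str


def visible_comments(comments, max_rounds=2):
    """Return comments from the most recent `max_rounds` approval rounds.

    The input list is left untouched, so it can still serve as the
    evidence trail kept elsewhere.
    """
    latest_rounds = set(
        sorted({c.round_number for c in comments}, reverse=True)[:max_rounds]
    )
    return [c for c in comments if c.round_number in latest_rounds]
```

Filtering a view instead of deleting data is the design choice that matters: reviewers see less, while the full history remains recoverable.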

Governance is where the design either holds or fails. A proper editorial memory system should move the right evidence through the right gate. This matters more in teams balancing automation with human approval controls. Automation without measurable uplift is theatre, not strategy.

Where teams usually get it wrong

The common mistake is treating memory as a single bucket. It should be at least two layers: operational memory for active work, and retained records for audit, learning and recovery. Separation makes decisions easier. Editors see what they need to publish well. Compliance and leadership still have the trail for later inspection.
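One way to picture the two layers is a small class with a prunable working set and an append-only record. This is a sketch under stated assumptions, not a description of how Quill stores anything; `EditorialMemory` and its method names are invented for illustration.

```python
class EditorialMemory:
    """Two-layer memory: a working set for editors, an append-only record for audit."""

    def __init__(self):
        self._operational = {}   # key -> note, what editors currently see
        self._records = []       # append-only log, never pruned

    def remember(self, key, note):
        """Add or update a note in the working set and log the event."""
        self._operational[key] = note
        self._records.append(("remember", key, note))

    def forget(self, key):
        """Drop a note from the working set; the record keeps the history."""
        note = self._operational.pop(key, None)
        if note is not None:
            self._records.append(("forget", key, note))

    def working_context(self):
        """Return a copy of what is visible for active work."""
        return dict(self._operational)

    def audit_trail(self):
        """Return the full history as an immutable tuple."""
        return tuple(self._records)
```

Forgetting removes a note from what editors see but adds an entry to the record, so compliance can still reconstruct what was visible and when it stopped being so.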

The second mistake is making scope optional. That sounds user-friendly but usually produces inconsistency. One team member keeps everything, another trims aggressively, and soon nobody can explain why similar articles took different approval paths. In a governed workflow, consistency matters more than individual preference. Reliable systems are built on this principle.

The third mistake is measuring the wrong outcome. Teams often celebrate faster drafting while ignoring approval drag, legal escalations or rework after sign-off. Those costs bite. A better paired view tracks approval MTTR beside final-draft error rate, and reviewer satisfaction beside escalation volume. Speed without control is cheap only until someone senior must unwind it. Platforms that cannot show why a piece carried specific context into approval are not ready for serious editorial operations. They may be clever, but they are not accountable.

Recommendation and next step

Start with one content stream and make the decision concrete. Define what the system can remember during drafting, what remains visible during approval, and what is archived after sign-off as the lasting record. Measure the result over 30 days using approval MTTR, number of review rounds and final-draft error rate. If those metrics do not improve, change the scope rules. Keep the explanation burden on the workflow, not on exhausted editors.
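The three pilot metrics can be computed from a simple log of approved pieces. The sketch below makes several assumptions that should be adjusted to local definitions: MTTR is measured in hours from submission to approval, and the error rate is the share of pieces whose final draft contained at least one error. `ApprovalRecord` and `pilot_metrics` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean


@dataclass(frozen=True)
class ApprovalRecord:
    """One approved piece. Field names are illustrative assumptions."""
    submitted_at: datetime
    approved_at: datetime
    review_rounds: int
    final_draft_has_error: bool


def pilot_metrics(records):
    """Summarise approval MTTR, review rounds and final-draft error rate."""
    if not records:
        raise ValueError("at least one approval record is required")
    hours = [
        (r.approved_at - r.submitted_at).total_seconds() / 3600
        for r in records
    ]
    return {
        "approval_mttr_hours": mean(hours),
        "mean_review_rounds": mean(r.review_rounds for r in records),
        "final_draft_error_rate":
            sum(r.final_draft_has_error for r in records) / len(records),
    }
```

Running this once on the 30 days before the pilot and once on the 30 days of the pilot gives a like-for-like comparison for deciding whether the scope rules need to change.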

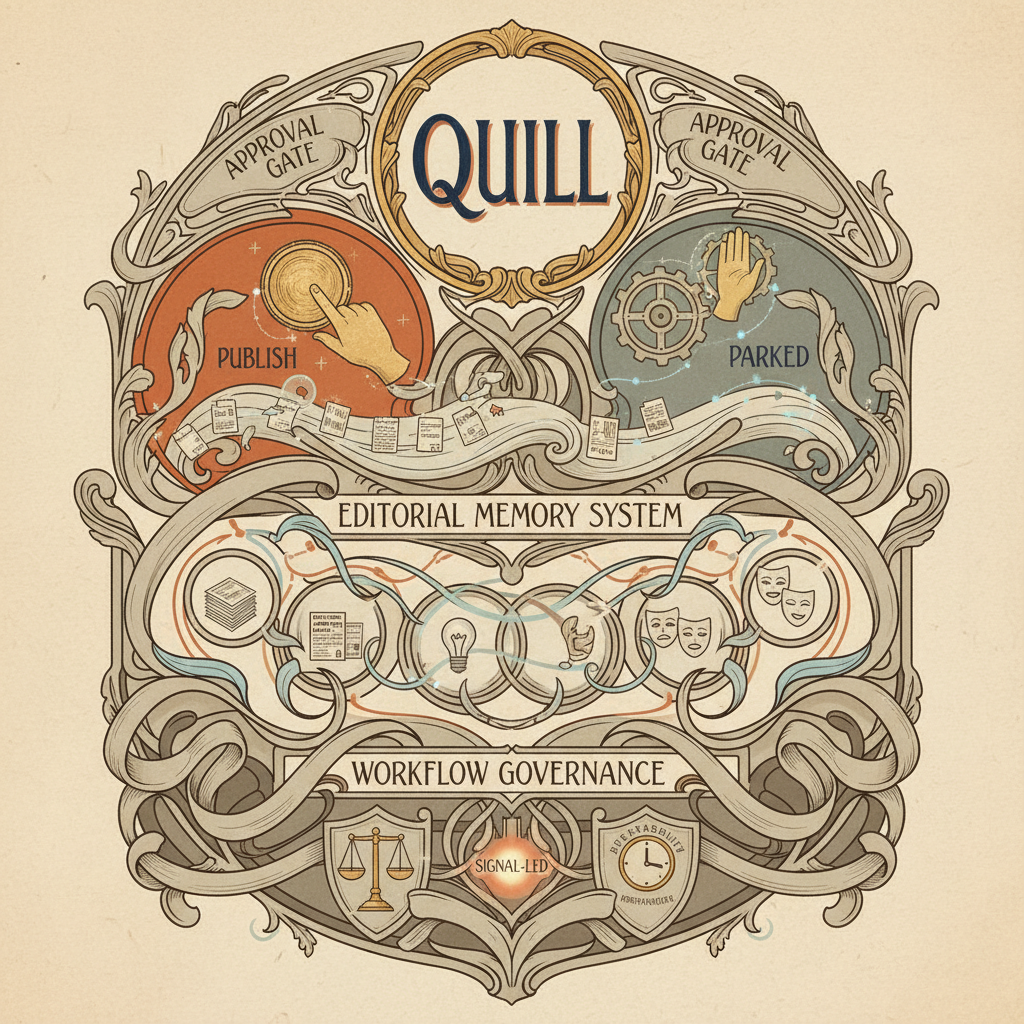

Quill is designed for governed publishing work: scoped memory, signal-led routing and human approval automation that stays legible under pressure. If your team is stuck in long review loops or keeps hauling old context into new decisions, the next sensible move is a conversation about how Quill could tighten the flow without loosening control. If you want to see what that looks like in practice, we can talk it through in plain English and map it against your current process.

If this is on your roadmap, Quill can help you run a controlled pilot, measure the outcome, and scale only when the evidence is clear.