
After an acquisition push, volume is the easy bit to report. Proving whether those contacts were worth having is harder, and that is where most stakeholder arguments start. This delivery assurance note sets out a practical way to link acquisition cleanup to lifecycle retention metrics, so the discussion moves from opinion to evidence.

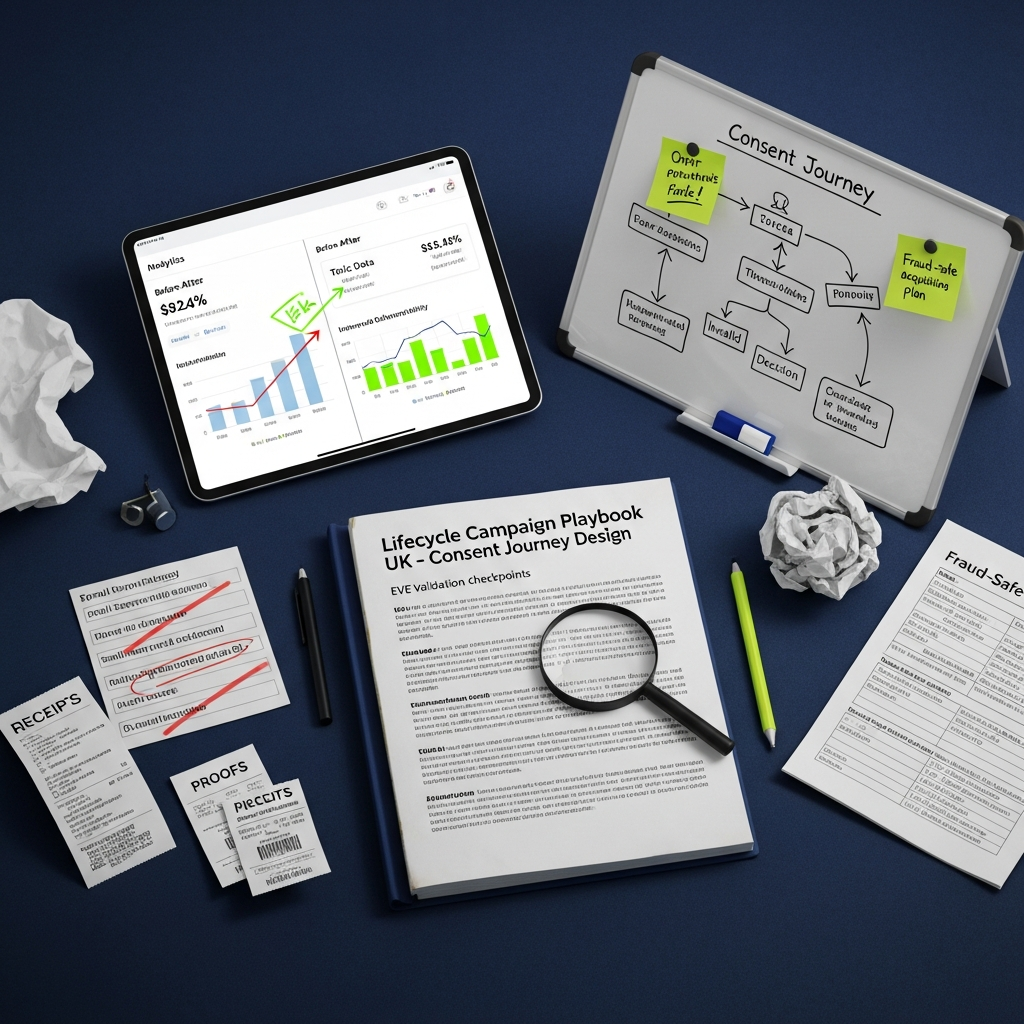

The working assumption is simple: if toxic data gets into the CRM, retention reporting is distorted from day one. The fix is not a heroic clean-up three weeks later. It is a measurable control at capture, followed by a before-and-after readout that shows what changed, who owns it, and by when.

Context

It is mid-March 2026, and plenty of UK teams are reviewing Q1 acquisition numbers against tighter budgets and a fairly unforgiving operating climate. BBC reporting on 14 March shows the Treasury is already under pressure over household energy costs, with Rachel Reeves discussing options for vulnerable households and Laura Kuenssberg asking whether the public should expect another crisis-style intervention. Different topic, same signal: scrutiny is up, spare budget is not. Marketing teams will be asked to show what worked, what leaked, and what gets cut.

In practice, the pattern is familiar. Acquisition hits the lead target; CRM inherits typo-ridden addresses, disposable domains and bot entries that drag down the first 30 days of lifecycle performance. Yesterday, after stand-up, ticket CRM-45 was blocked by a spike in invalid domains from one affiliate source. A quick call with Sarah, our CRM lead, cleared it. Source under review, suppression rule live, new date set for the next cohort check on 22 March 2026. That is not glamorous, but it is how the path to green usually starts.

One retail programme we reviewed last year spent 40 person-hours cleaning a single competition list before the welcome journey was stable enough to trust. The first follow-up send then carried a bounce spike that sender operations had to unwind manually. If you are a bit tight on time, that sort of rework is exactly what should be designed out.

What is changing

The shift is from celebrating acquisition volume to defending customer value. Stakeholders want to know what happened after sign-up: hard bounce rate, first-30-day clicks, unsubscribes, confirmed opt-ins, and how much manual effort was burned correcting records that should never have passed the front door.

That is why a UK email lifecycle playbook should start with acquisition controls, not with rescue tactics in retention. I was wrong about the implementation effort on this a while back; the data feed was trickier than expected, and the integration needed more buffer. The updated plan was still worth it. In a Q4 2025 FMCG campaign review, EVE's validation engine returned decisions in under 50 ms and blocked more than 95% of invalid or toxic entries at source, with no measurable drop in form completion against the pre-launch baseline. No magic, no guarantees, just better input data and fewer downstream problems.

The sharp opinion is this: if your plan has no named owners and dates, it is not a plan. Fix it. And if your retention model ignores acquisition data quality, it is not a retention model either. It is a reporting problem waiting to happen.

Implications for lifecycle and consent design

Cleaner acquisition data changes more than list hygiene. It affects consent journey design, suppression logic, onboarding cadence and how confidently you can read early retention signals. A form should not merely collect an address; it should check whether the address is structurally valid, plausibly genuine and suitable to enter the next step of the journey.

Between 10:00 and 12:30 in last month's review window, I rewrote the acceptance criteria for the sign-up flow so the rules were testable: invalid syntax blocked immediately, known disposable domains routed to fail, and higher-risk but uncertain addresses flagged for review rather than auto-rejected. Tests passed once the alias edge case was covered. That distinction matters. False-positive control is part of the job; otherwise you trade one quality issue for another.
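To make those acceptance criteria concrete, here is a minimal sketch of the three-way triage in Python. It is an illustration under stated assumptions, not EVE's implementation: the `triage` function, the `Decision` names, the two domain sets and the simplified syntax pattern are all hypothetical, and a live deployment would rely on maintained lists and a fuller validator.

```python
import re
from enum import Enum


class Decision(Enum):
    BLOCK = "block"    # invalid syntax, rejected immediately
    FAIL = "fail"      # known disposable domain
    REVIEW = "review"  # higher risk but uncertain, flagged for a human
    ACCEPT = "accept"  # nothing suspicious found


# Hypothetical example lists; real ones would be maintained and versioned.
DISPOSABLE_DOMAINS = {"disposable.example"}
REVIEW_DOMAINS = {"risky.example"}

# Deliberately simple structural check: one "@", no whitespace,
# and a domain made of dot-separated labels.
SYNTAX = re.compile(r"^[^@\s]+@[a-z0-9-]+(\.[a-z0-9-]+)+$")


def triage(address: str) -> Decision:
    """Return the capture decision for one submitted address."""
    candidate = address.strip().lower()
    if not SYNTAX.match(candidate):
        return Decision.BLOCK
    domain = candidate.rsplit("@", 1)[1]
    if domain in DISPOSABLE_DOMAINS:
        return Decision.FAIL
    if domain in REVIEW_DOMAINS:
        return Decision.REVIEW
    # Plus-aliases such as user+news@... reach this point and are accepted,
    # which is the alias edge case the tests needed to cover.
    return Decision.ACCEPT
```

The design point is that `REVIEW` is its own outcome rather than a softer kind of rejection, so uncertain addresses never get silently dropped.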

There is a compliance benefit as well. An auditable record of validation at capture, plus a clear email confirmation loop and opt-out route, gives teams something they can defend if consent or provenance is challenged later. Under UK GDPR, that kind of evidence is more useful than a vague claim that the form was compliant. Keep the form short, keep marketing opt-out clear, and keep full terms elsewhere if needed. Sorted.
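As a rough illustration of what an auditable record at capture might hold, the sketch below writes one JSON line per validation decision. Every field name here is an assumption made for the example; the right set depends on your own forms and on advice from your Data Protection lead.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class CaptureAuditRecord:
    form_id: str             # hypothetical identifier for the sign-up form
    source: str              # acquisition source, e.g. an affiliate code
    decision: str            # outcome of validation at capture
    rule_version: str        # which rule set produced the decision
    consent_wording_id: str  # which opt-in text the person was shown
    checked_at: str          # ISO 8601 timestamp in UTC


def build_record(form_id, source, decision, rule_version,
                 consent_wording_id, now=None):
    """Create a record; `now` can be injected so tests are repeatable."""
    moment = now or datetime.now(timezone.utc)
    return CaptureAuditRecord(form_id, source, decision, rule_version,
                              consent_wording_id, moment.isoformat())


def to_log_line(record: CaptureAuditRecord) -> str:
    """Serialise with sorted keys so log lines are stable and easy to diff."""
    return json.dumps(asdict(record), sort_keys=True)
```

Recording the rule version alongside the decision is what lets you answer, months later, why a given address was accepted or flagged.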

What to measure and how to prove it

The case to stakeholders should be built on matched cohort reporting. Same campaign type, same 30-day observation window, same metrics before and after the control change. Anything looser invites debate that goes nowhere.

- Core acquisition quality metrics: invalid email rate, disposable domain rate, hard bounce rate on first send, and percentage of records suppressed before CRM entry.

- Lifecycle performance metrics: welcome journey click rate, confirmed opt-in completion, unsubscribe rate by day 30, and conversion to a second meaningful action such as account activation or second session.

- Operational metrics: hours spent on manual list cleaning, number of support tickets linked to bad capture, and time to approve a campaign audience.
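The rates in the lists above can be derived from a handful of cohort counts. The sketch below is one hedged way to do it; the `CohortCounts` fields and the choice of denominators (records captured for the quality rates, first-send volume for the engagement rates) are assumptions that should be agreed and locked with the baseline.

```python
from dataclasses import dataclass


@dataclass
class CohortCounts:
    captured: int             # records submitted at the form
    invalid: int              # failed structural validation
    disposable: int           # matched a disposable domain
    suppressed: int           # held back before CRM entry
    first_send: int           # addresses included in the first welcome send
    hard_bounces: int         # hard bounces on that first send
    welcome_clicks: int       # recipients who clicked in the welcome journey
    unsubscribes_day_30: int  # unsubscribes recorded by day 30


def rate(numerator: int, denominator: int) -> float:
    """Safe division so an empty cohort returns 0.0 instead of raising."""
    return numerator / denominator if denominator else 0.0


def quality_metrics(c: CohortCounts) -> dict:
    return {
        "invalid_email_rate": rate(c.invalid, c.captured),
        "disposable_domain_rate": rate(c.disposable, c.captured),
        "suppressed_before_crm_rate": rate(c.suppressed, c.captured),
        "hard_bounce_rate": rate(c.hard_bounces, c.first_send),
        "welcome_click_rate": rate(c.welcome_clicks, c.first_send),
        "unsubscribe_rate_day_30": rate(c.unsubscribes_day_30, c.first_send),
    }
```

Running the same function over the baseline cohort and the post-change cohort keeps the definitions identical on both sides of the comparison.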

Use acceptance criteria for each metric. For example: hard bounce rate below 1% on the first welcome send; manual cleaning reduced by at least 50% versus the chosen baseline cohort; risky-address review queue cleared within two working days; and no increase in valid-user complaint rate after validation rules go live. Those are testable. “Data quality improved” is not.
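Because the example criteria are numeric, they can be written as pass/fail checks. This is a minimal sketch only: the dictionary keys are hypothetical names, and the thresholds simply mirror the examples in the paragraph above.

```python
def check_acceptance(baseline: dict, current: dict) -> dict:
    """Return one pass/fail flag per acceptance criterion."""
    return {
        # Hard bounce rate below 1% on the first welcome send.
        "hard_bounce_below_1pct": current["hard_bounce_rate"] < 0.01,
        # Manual cleaning reduced by at least 50% versus the baseline cohort.
        "manual_hours_cut_by_half":
            current["manual_hours"] <= 0.5 * baseline["manual_hours"],
        # Risky-address review queue cleared within two working days.
        "review_queue_within_2_days":
            current["review_queue_max_working_days"] <= 2,
        # No increase in valid-user complaint rate after rules go live.
        "no_rise_in_valid_user_complaints":
            current["valid_user_complaint_rate"]
            <= baseline["valid_user_complaint_rate"],
    }
```

A readout can then show the flags next to the raw numbers, so nobody has to argue about whether a criterion was met.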

If you want an extra sense check, look at wider wellbeing and operating pressure too. ONS quarterly wellbeing and local authority datasets continue to show why household confidence and anxiety levels matter when audiences are deciding what to ignore, what to trust and what to opt out of. It does not prove email quality on its own, obviously, but it is a useful reminder that weak onboarding journeys are judged by people who already have enough noise in front of them.

Action plan with owners, dates and risks

Here is the practical rollout. Keep it simple and traceable.

1. Baseline the current state

Owner: CRM Lead

Due: 30 April 2026

Acceptance criteria: one historical cohort selected, 30-day metrics locked, manual effort logged in hours, and source-level acquisition breakdown agreed with Marketing Ops.

2. Implement capture controls

Owner: Marketing Ops

Due: 31 May 2026

Acceptance criteria: EVE validation deployed on primary web forms, suppression rules documented, review workflow live for uncertain cases, and change log signed off by CRM and Data Protection leads.

3. Tune thresholds and monitor false positives

Owner: Head of CRM

Due: 14 June 2026

Acceptance criteria: only highest-risk categories auto-blocked on day one; weekly review of challenged records; complaint rate and completion rate monitored side by side for the first two weeks.

4. Compare the next matched cohort

Owner: Insight Manager

Due: 30 September 2026

Acceptance criteria: same 30-day retention window used, variance explained by source where possible, and stakeholder readout includes delta on bounce, click, unsubscribe and manual hours.
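For the step 4 readout, the delta on bounce, click, unsubscribe and manual hours can be produced by one small function. The sketch assumes each cohort has already been summarised into a dictionary; the metric names are hypothetical and any numbers you pass in are your own.

```python
READOUT_METRICS = ("hard_bounce_rate", "click_rate",
                   "unsubscribe_rate", "manual_hours")


def cohort_delta(baseline: dict, current: dict,
                 metrics=READOUT_METRICS) -> dict:
    """Compare two matched cohorts metric by metric."""
    readout = {}
    for name in metrics:
        before, after = baseline[name], current[name]
        change = after - before
        readout[name] = {
            "baseline": before,
            "current": after,
            # Absolute change, rounded to keep the readout legible.
            "delta": round(change, 4),
            # Relative change is undefined when the baseline is zero.
            "relative_change": round(change / before, 4) if before else None,
        }
    return readout
```

Calling the same function once per acquisition source gives the source-level breakdown, which is where most of the variance tends to be explained.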

Key risks and mitigation

- Risk: valid users blocked by aggressive rules. Mitigation: start conservative, review uncertain categories manually, adjust thresholds weekly for the first month.

- Risk: teams argue about attribution after the fact. Mitigation: lock baseline definitions before launch and keep a dated change log.

- Risk: acquisition keeps optimising for volume alone. Mitigation: agree a shared quality-lead definition, including bounce and confirmation thresholds, before media goes live.

What this means for retention decisions

Once the input data is cleaner, retention decisions get easier to trust. Segments stop being inflated by dead addresses. Welcome and win-back journeys can be assessed on genuine behaviour. Suppression policy becomes operational rather than reactive.

I would be sceptical of any team still leaning heavily on opens to judge new-cohort quality in 2026. Privacy protections have made that signal too soft on its own. In the first 90 days, clicks, confirmation events, second-session behaviour and complaint rates are more useful. They are closer to intent, harder to fake, and better for stakeholder conversations.

The unresolved tension, if we are honest, is usually organisational. Acquisition is rewarded for volume; CRM is measured on engagement and deliverability. Until both teams use the same acceptance criteria for a quality lead, the reporting will keep fighting itself. That is not a tooling issue. It is an ownership issue.

Proving data quality gains after acquisition cleanup is not about dressing up hygiene work as strategy. It is about showing, with dates and evidence, that better controls at capture produce a better first 30 days in lifecycle. If you want to map the owners, thresholds and reporting checkpoints for your own programme, book a frictionless validation walkthrough with EVE's solutions team. We will help you set the controls, keep the change log clean, and give stakeholders something they can actually sign off. Cheers.