
Editorial workflow design tells you more about service operations than most dashboards do. When approvals sprawl, handovers blur and nobody can explain why one item sailed through while another sat for three days, the problem is rarely “content”. It is operating design with better stationery.

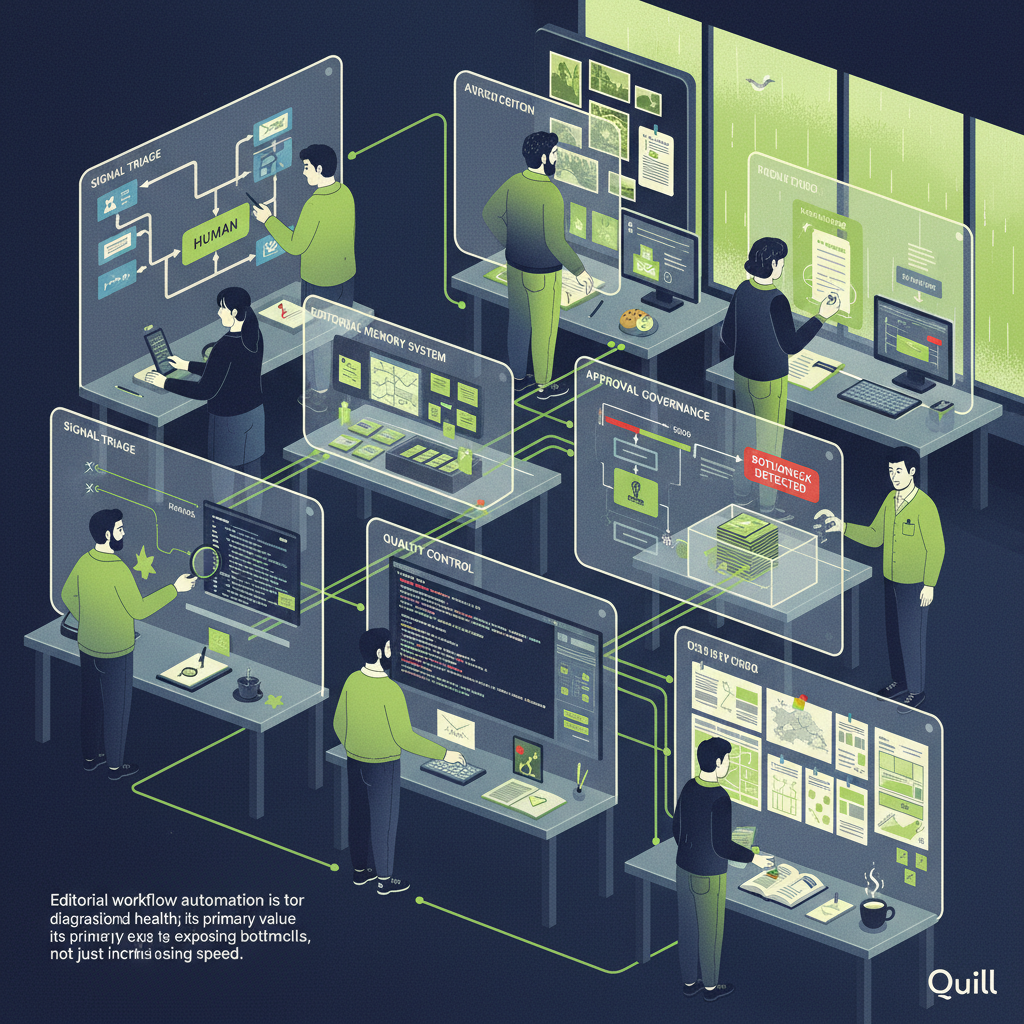

That matters because editorial workflow automation is only useful when it exposes bottlenecks you can actually fix. Used well, it shortens cycle time, sharpens accountability and leaves a clean audit trail. Used badly, it automates confusion and calls it progress. Cheers.

Context

Last Thursday, in our Canary Wharf office, the heating failed during a cold snap that had Abbey Mead at 1°C, observed on 15 March 2026. The room had that metallic, dry-air chill and the content queue in front of me was colder still: three approval layers, two duplicated checks and no clear owner for the final go-live. That’s when I realised, again, that workflow design is a service-operations problem first and a publishing problem second.

Teams often normalise this sort of drag because it looks cautious. In practice, it is usually muddle dressed up as governance. The Office for National Statistics publishes quarterly personal well-being estimates and local-authority level data, which many teams now use to support localisation decisions in the UK. Fair enough. But local relevance does not logically require bloated approval chains. If anything, the trade-off runs the other way: more nuanced, localised output needs clearer rules, not more people hovering over the same draft.

I have seen teams in Manchester burn half a day in email threads over a routine update, then lose another hour reconciling version changes. It feels safe because everyone was copied in. It is not safe. It is brittle. If a platform cannot explain its decisions, it does not deserve your budget. And automation without measurable uplift is theatre, not strategy.

What is changing

The shift is not simply from manual work to automated work. It is from generic review queues to signal-led publishing, where systems use defined inputs to route work by risk, relevance or urgency. Between February and March 2026, I tried a drafting and approval setup that looked clever on paper and still let placeholder copy slip into a near-final review. Fixed it with a plain filter on banned strings before approval. Not glamorous. Very effective. That small failure told me more than the sales deck did.
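For what it is worth, a banned-strings filter of that kind can be very small. The sketch below is illustrative rather than the setup I actually ran: the pattern list and the function names `find_banned_strings` and `ready_for_approval` are assumptions, and a real list would come from your own style rules.

```python
import re

# Hypothetical banned strings; a real list would come from the team's own rules.
BANNED_PATTERNS = [
    r"lorem ipsum",
    r"\bTBC\b",
    r"\bTODO\b",
    r"\[placeholder\]",
]


def find_banned_strings(draft: str) -> list[str]:
    """Return every banned pattern found in the draft, ignoring case."""
    return [p for p in BANNED_PATTERNS if re.search(p, draft, flags=re.IGNORECASE)]


def ready_for_approval(draft: str) -> bool:
    """A draft may enter approval only when no banned string is present."""
    return not find_banned_strings(draft)
```

Running the check before the approval step, rather than after, is the point: reviewers never see a draft that still carries filler.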

This is where the useful change is happening. Data from APIs, tagged content types, prior approval outcomes and regional signals can all help route work sensibly. The ONS quarterly well-being dataset and local-authority estimates are good examples of structured public data that can support localisation logic when the use case genuinely fits. The trade-off is straightforward: you gain speed and consistency, but only if the triggers are explicit and auditable. Otherwise you have built a faster black box.

I still don’t fully understand why some approval thresholds produce cleaner behaviour from teams than others, but here’s what I’ve observed: when content types, owners and escalation rules are defined in advance, routine items move faster and the truly risky pieces become easier to spot. That is not magic. It is just less overcomplicated than the average review stack.

What editorial workflow design reveals about operations

A workflow is really a map of institutional trust. If every article, product update and service notice goes through the same senior bottleneck, the organisation is telling you it has not decided what deserves scrutiny and what deserves flow. That is expensive. It delays launches, muddies accountability and makes failure analysis oddly hard because the process itself is vague.

There is a compliance angle too. The boundary between service messaging and marketing matters. Routine operational information should stay neutral when it is not intended as promotion. Profiling, targeting and follow-up promotional capture sit in a different category and need different controls. A decent workflow reflects that distinction in routing and approvals, rather than expecting editors to remember it ad hoc on a Friday afternoon.

The practical signal is simple: where the handoff becomes fuzzy, service quality usually degrades next. Missed approvals, duplicate reviews and unclear ownership tend to show up before bigger publishing mistakes do. That is why editorial systems are so revealing. They expose whether your operation can classify work, assign responsibility and recover cleanly when something goes wrong.

Implications for governance and control

Once automation becomes part of the operating model, governance cannot be bolted on at the end. You need named approvers, audit trails, exception handling and a measured view of what “good” looks like. A tiered approval model is usually the sane place to start: routine content moves on preset rules, regulated or reputationally sensitive content escalates, and anything ambiguous gets a human decision with a reason attached.
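A tiered model like that can be written down as an explicit routing rule. This is a minimal sketch under stated assumptions: the content-type labels, the `regulated` and `sensitive` flags and the `ROUTINE_TYPES` set are all hypothetical, and your own tiers may well differ.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    ROUTINE = "routine"        # moves on preset rules
    ESCALATED = "escalated"    # regulated or reputationally sensitive
    HUMAN_DECISION = "human"   # ambiguous: a person decides and records why


@dataclass
class Item:
    content_type: str          # hypothetical labels, e.g. "service_update"
    regulated: bool = False
    sensitive: bool = False


@dataclass
class Decision:
    tier: Tier
    reason: str                # stored so the audit trail explains the route


# Hypothetical set of content types the team has agreed are routine.
ROUTINE_TYPES = {"service_update", "opening_hours"}


def route(item: Item) -> Decision:
    """Pick an approval tier and attach the reason for the choice."""
    if item.regulated or item.sensitive:
        return Decision(Tier.ESCALATED, "flagged as regulated or sensitive")
    if item.content_type in ROUTINE_TYPES:
        return Decision(Tier.ROUTINE, f"preset rule for {item.content_type}")
    return Decision(Tier.HUMAN_DECISION, f"no rule covers {item.content_type}")
```

Every branch returns a reason, so nothing is routed without an explanation someone can read later. The risk flags are checked first so that a routine content type cannot bypass escalation.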

The trade-off is real. Tighter controls reduce publication risk, but they add design overhead upfront. That is still a better bargain than vague review culture, where five people sign off because nobody trusts the process. If you cannot trace why a draft was approved, rejected or escalated, you are not governing anything. You are collecting opinions.

The broader lesson from operational systems applies here as well: clean manual fallback matters. Automated triage is useful. It is not a licence to let the whole publishing line seize up when one tool fails or one threshold misfires. A practical workflow has a recovery route, a named owner and a record of what happened. Otherwise resilience is just a slide in a deck.

Actions to consider

Start with the last 90 days of work, not a workshop fantasy. Measure average approval time, number of review rounds, percentage of items escalated and how often version conflicts forced rework. If you cannot pull those numbers, that is your first finding. Build from there.
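If your approval history can be exported at all, the baseline is a few lines of arithmetic. The sketch below assumes a hypothetical `ApprovalRecord` shape; the field names are illustrative and will not match any particular platform's export.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean


@dataclass
class ApprovalRecord:
    # Hypothetical fields; map them from whatever your system exports.
    submitted: datetime
    approved: datetime
    review_rounds: int
    escalated: bool
    version_conflict: bool     # True when a conflict forced rework


def baseline(records: list[ApprovalRecord]) -> dict[str, float]:
    """Summarise approval time, review rounds, escalations and conflicts."""
    if not records:
        raise ValueError("no records to measure")
    hours = [(r.approved - r.submitted).total_seconds() / 3600 for r in records]
    n = len(records)
    return {
        "avg_approval_hours": mean(hours),
        "avg_review_rounds": mean(r.review_rounds for r in records),
        "pct_escalated": 100 * sum(r.escalated for r in records) / n,
        "pct_version_conflict": 100 * sum(r.version_conflict for r in records) / n,
    }
```

Feed it the last 90 days of records and keep the output. It becomes the number any later automation has to beat.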

Next, separate content by operational risk. A service update, a campaign page and a sensitive public-sector response should not travel through the same path. Define what signals trigger localisation, legal review or executive escalation. Use API-fed data where it earns its keep, and keep the logic visible to the people responsible for outcomes. Store prior decisions, approved phrasing and exception notes in a usable editorial memory system so teams stop re-arguing old calls.

Then tighten quality control where it matters most. Add pre-publication checks for placeholders, missing alt text, broken links and banned claims. If imagery is part of the workflow, keep alt text plain, descriptive and accessible rather than decorative. If a mechanic relies on user-generated content, require traceable evidence of entry such as a hashtag, tag or URL and define that evidence before launch, not after someone in legal starts asking fair questions.
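Of those checks, missing alt text is one of the easiest to automate. The sketch below assumes drafts are written in markdown and treats empty or generic alt text as missing. The `GENERIC_ALT` rule is a hypothetical house rule, and other formats would need their own parser.

```python
import re

# Matches markdown images of the form ![alt](target).
IMAGE_PATTERN = re.compile(r"!\[(?P<alt>[^\]]*)\]\((?P<src>[^)\s]+)[^)]*\)")

# Hypothetical rule: empty alt text, or a bare label such as "image 1",
# counts as missing because it describes nothing.
GENERIC_ALT = re.compile(r"^(image|img|photo|picture)?\s*\d*$", re.IGNORECASE)


def images_missing_alt(markdown: str) -> list[str]:
    """Return the target of every image whose alt text is empty or generic."""
    return [
        m.group("src")
        for m in IMAGE_PATTERN.finditer(markdown)
        if GENERIC_ALT.match(m.group("alt").strip())
    ]
```

A check like this only catches absent or lazy alt text. Whether the description is actually plain and useful still needs an editor's eye.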

Finally, review whether your current automation produces measurable uplift. Faster turnaround, fewer missed launch dates, lower rework rates, cleaner accountability. Pick at least one of those and track it monthly. If the numbers do not move, the automation is probably solving the wrong problem.

Why this matters now

The pressure on editorial and marketing operations is not easing. More channels, more variants, more localisation and more scrutiny mean old informal habits crack sooner. The organisations that cope best are not the ones with the loudest AI story. They are the ones that can explain how a decision was made, who owns the exception path and what changed after the workflow was redesigned.

That is the bit worth paying for. Not synthetic speed for its own sake, but a publishing operation that can direct routine work efficiently, support human judgement where it still matters and create a trail others can audit without guessing. If you want to see where your service operation is actually wobbling, look at the editorial workflow. It rarely lies.

If your team is trying to untangle approvals, memory and publishing controls without making the whole thing even more overcomplicated, Quill is a sensible place to start. We build governed content operations that support real editorial teams, with automation you can inspect and outcomes you can measure. If that sounds like the conversation you need, have a word with Holograph and take the next step while the bottlenecks are still fixable.