Should a governed publishing team pick a lighter model just for speed and cost? No. Pick one only when its trade-offs are explicit, measurable, and properly fenced in.

GPT-5.4 Mini fits that description. Operational data confirms it handles routine drafting and structured briefing quickly, but reliability drops when tasks need deeper memory, source checking, or compliance judgement. This isn't about small versus big models. It's about where lighter models cut effort without spawning rework that silently eats the gain.

Decision context

Publishing teams are offered a concrete bargain: lower cost, faster turnaround, less friction. GPT-5.4 Mini suits that pitch. In high-volume operations, lower latency counts. If a model returns usable first-pass copy in half the time, editorial workflow automation gains real operational value rather than decorative appeal.

Speed only matters if the output survives review, and this is where much AI buying goes wrong. Automation without measurable uplift is theatre, not strategy. A platform that cannot explain its decisions doesn't deserve your budget.

Evidence from structured templates shows the model follows briefing formats, preserves layout logic, and produces consistent first drafts better than expected. The trade-off appears just as fast: tasks that rely on context carried across several checks yield brittle output. Fast in, fast out helps; fast and wrong just creates rework.

Where lighter models help

GPT-5.4 Mini excels with repetitive, bounded, low-risk tasks. That includes first-pass outlines, templated variants, short-form derivative copy, and signal triage aimed at sorting, routing, or structuring rather than final editorial calls.

In Chertsey testing, routine triage work dropped by 40% when the model turned incoming signals into usable outlines. Editorial teams often lose time before writing even starts: briefs arrive mixed, source notes are patchy, and someone has to rebuild order from a heap. A lighter model handles that first layer quickly if the input schema is clear.

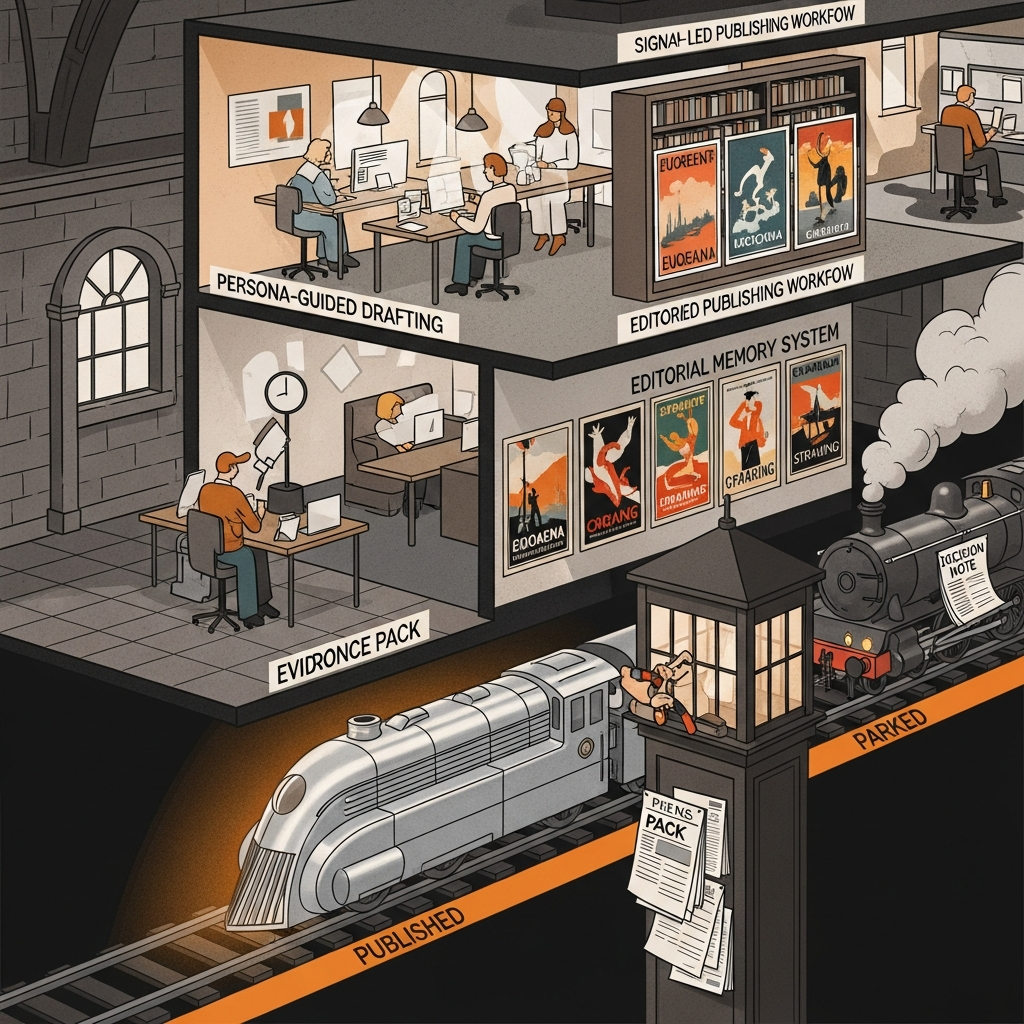

Persona-guided drafting mirrors this. In applicable cases, lighter models can slash production time for short social variants, but the advantage stays narrow. Performance hinges on constrained briefs, explicit tone rules, and low approval thresholds. Alter those conditions, and the economics shift.

Some lighter models behave well on templated content because a tightly scoped context leaves less room for drift than a larger system given freedom to improvise. You gain predictability and speed; you lose flexibility when briefs are messy or the task calls for genuine editorial judgement.

| Task type | GPT-5.4 Mini fit | Main trade-off |
|---|---|---|
| Structured outlines and first drafts | Strong | Needs disciplined briefing inputs |
| Short-form variants and snippets | Strong | Can flatten nuance if overused |
| Signal triage and routing | Strong | Good sorting is not the same as sound judgement |
| Long-form governed analysis | Limited | Context loss creates rework |
| Compliance-sensitive wording | Weak without review | Human sign-off remains essential |

Where they do not

Trouble starts when the model must do more than organise language. Governed publishing requires source discipline, approval traceability, and memory across multiple decisions. Lighter models show limits here.

Trials revealed that GPT-5.4 Mini struggled with multi-step compliance work, especially drafts that had to be checked against prior source decisions or editorial memory systems. On regulated content, this meant a 15% increase in review cycles. The writing itself was usable; the problem was that the model missed or blurred the reasoning chain needed for approval.

That distinction matters. A clean sentence is not the same as an approvable statement. Publishing teams in regulated sectors know this; vendors often conflate prose quality with governance quality. They are not the same thing. One is surface finish, the other is operational reliability.

The same issue arose with drafts that drew on several linked sources. The model summarised fragments but was less dependable at preserving the relationships between claim, evidence, and approval history. Editors had to re-open notes, verify source links, and patch logic that should have held the first time. Bigger models aren't automatically better either, but they usually carry context more capably. The cost trade-off is plain: a lighter model may save on generation, then bill back through extra review time.
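To make that trade-off concrete, here is a minimal sketch of the arithmetic, assuming cost per draft is generation spend plus editor review time. The class, function, and every number below are hypothetical illustrations, not measurements from the trials described above.

```python
from dataclasses import dataclass


@dataclass
class DraftCosts:
    """Per-draft costs in a single currency unit (illustrative only)."""
    generation_cost: float         # model spend to produce one draft
    review_minutes: float          # editor time spent reviewing that draft
    editor_rate_per_minute: float  # loaded cost of one editor minute

    def total(self) -> float:
        # Generation spend plus the cost of the review time it triggers.
        return self.generation_cost + self.review_minutes * self.editor_rate_per_minute


def net_saving(larger: DraftCosts, lighter: DraftCosts) -> float:
    """Positive when the lighter model is cheaper once review is counted."""
    return larger.total() - lighter.total()


# Hypothetical figures: the lighter model is cheaper to run,
# but each draft takes longer to review.
larger = DraftCosts(generation_cost=0.40, review_minutes=10.0, editor_rate_per_minute=1.0)
lighter = DraftCosts(generation_cost=0.10, review_minutes=11.5, editor_rate_per_minute=1.0)

print(f"Net saving per draft: {net_saving(larger, lighter):.2f}")
```

With these made-up inputs the result is negative: the generation saving is smaller than the added review cost. Swapping in your own logged figures shows which side of the line a given task type falls on.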

Risk and mitigation

The sensible response isn't rejecting lighter models. It's assigning them work they can complete well, then building controls around the boundary.

Our cleanest rule: automate draft production, not accountability. If a sentence contains a claim, legal implication, or sourced number, it must pass through named human approval before publication. That keeps the model in a support role, creating speed without pretending to own judgement.
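One way to express that rule in a workflow is as a publication gate. The sketch below assumes sentences have already been flagged upstream (by an editor or a tagging step) as containing a claim, legal implication, or sourced number; the `Sentence` type and function names are hypothetical and do not describe any particular product's API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Sentence:
    text: str
    has_claim: bool = False
    has_legal_implication: bool = False
    has_sourced_number: bool = False
    approved_by: Optional[str] = None  # name of the human approver, if any

    def needs_approval(self) -> bool:
        # Any one of the three flags triggers the human-approval requirement.
        return self.has_claim or self.has_legal_implication or self.has_sourced_number


def blocking_sentences(draft: List[Sentence]) -> List[Sentence]:
    """Sentences that need a named approver but do not have one yet."""
    return [s for s in draft if s.needs_approval() and not s.approved_by]


def can_publish(draft: List[Sentence]) -> bool:
    """A draft is publishable only when nothing is blocking."""
    return not blocking_sentences(draft)


draft = [
    Sentence("Our spring guide is now available."),
    Sentence("Triage work dropped by 40%.", has_sourced_number=True),
]
print(can_publish(draft))            # blocked: the sourced number has no approver
draft[1].approved_by = "A. Editor"   # hypothetical named approver
print(can_publish(draft))            # now clear to publish
```

The gate records who approved, not just that approval happened, which is what keeps accountability with a named person.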

In April 2026, a checkpoint system routed outputs to manual review when internal confidence scores fell below 80%. That threshold came from validation logs, not wishful thinking. It helped, but didn't remove the need for memory controls. Without versioned briefs, source notes, and approval history, teams repeat mistakes faster.
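A checkpoint of that kind can be sketched in a few lines. This assumes each output arrives with a confidence score between 0 and 1; how that score is produced is not described here, so the function and route names are illustrative only.

```python
from enum import Enum

REVIEW_THRESHOLD = 0.80  # the 80% threshold described above


class Route(Enum):
    AUTO_CONTINUE = "auto_continue"
    MANUAL_REVIEW = "manual_review"


def route_output(confidence: float, threshold: float = REVIEW_THRESHOLD) -> Route:
    """Send an output to manual review when its confidence falls below the threshold."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError(f"confidence must be in [0, 1], got {confidence}")
    return Route.MANUAL_REVIEW if confidence < threshold else Route.AUTO_CONTINUE


for score in (0.65, 0.80, 0.93):
    print(score, route_output(score).value)
```

Keeping the threshold as a named, overridable parameter makes it easy to revisit as validation logs accumulate.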

Quill earns its keep here. A governed workflow needs more than generation. It requires route states, approval logic, auditability, and clear evidence of why a draft moved forward or stopped. Privacy matters too. Defaulting to privacy-preserving architectures reduces operational risk when handling sensitive material, internal commentary, or embargoed source detail.

The practical mitigation stack looks like this:

- Use lighter models for bounded drafting tasks with explicit templates.

- Require human approval for claims, legal wording, and regulated subject matter.

- Keep an editorial memory system with version-controlled briefs and source history.

- Track rework rates, approval delays, and failure recovery, not just output volume.

That last point gets ignored too often. Throughput looks excellent until the review queue backs up. If you're not measuring rework, you're not measuring the real cost.
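As a rough sketch of what measuring rework could look like, the example below assumes each published draft is logged with its number of review cycles and its approval delay. The record fields and the definition of rework (more than one review cycle) are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DraftRecord:
    draft_id: str
    review_cycles: int           # review passes before approval
    approval_delay_hours: float  # time from first draft to sign-off


def rework_rate(records: List[DraftRecord]) -> float:
    """Share of drafts that needed more than one review cycle."""
    if not records:
        return 0.0
    reworked = sum(1 for r in records if r.review_cycles > 1)
    return reworked / len(records)


def mean_approval_delay(records: List[DraftRecord]) -> float:
    """Average hours from first draft to approval."""
    if not records:
        return 0.0
    return sum(r.approval_delay_hours for r in records) / len(records)


# Hypothetical log entries.
log = [
    DraftRecord("d1", review_cycles=1, approval_delay_hours=2.0),
    DraftRecord("d2", review_cycles=3, approval_delay_hours=9.0),
    DraftRecord("d3", review_cycles=1, approval_delay_hours=1.0),
    DraftRecord("d4", review_cycles=1, approval_delay_hours=4.0),
]
print(f"Rework rate: {rework_rate(log):.0%}")
print(f"Mean approval delay: {mean_approval_delay(log):.1f} h")
```

Tracked alongside output volume, these two numbers show whether faster generation is actually shortening the path to publication.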

Recommended path

The best use of GPT-5.4 Mini in governed publishing is narrow, practical, and surprisingly effective. Let it handle structured first drafts, routing support, and repetitive copy where briefs are stable and downside low. Don't ask it to carry compliance reasoning, source validation, or long-memory editorial judgement, because apparent savings leak away there.

A hybrid workflow is the sane answer. Lighter models create momentum at the front of the workflow. Human approval routing, editorial memory, and explicit checkpoints then decide what moves, stalls, or gets rewritten. That keeps a signal-led publishing workflow fast without making it careless.

If you're weighing that trade-off in a real publishing operation, Quill is built for this decision. We can help map where a lighter model genuinely reduces drag, where governance stays firm, and how to build a workflow editors and approvers trust. Take the next step with us, and the conversation stays practical: what to automate, what to keep human, and what improves measurably once the system is live.