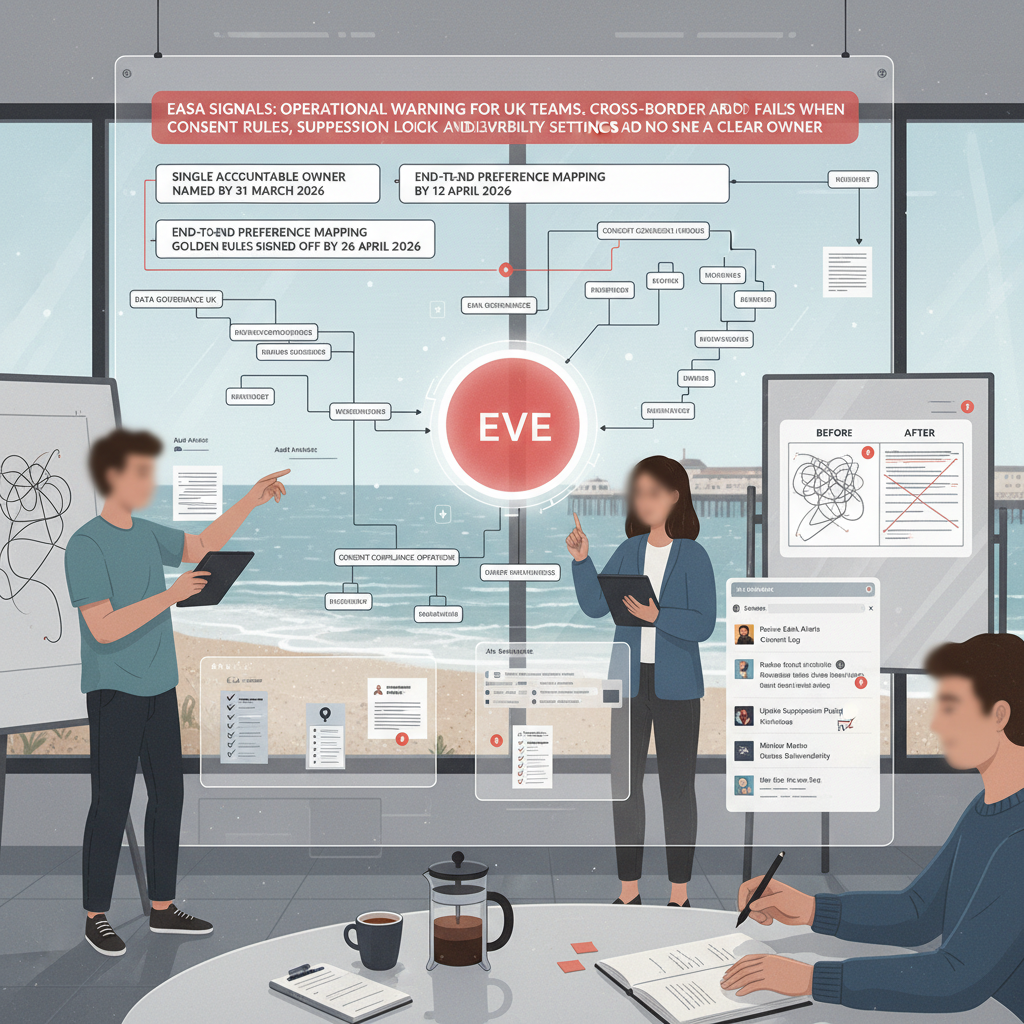

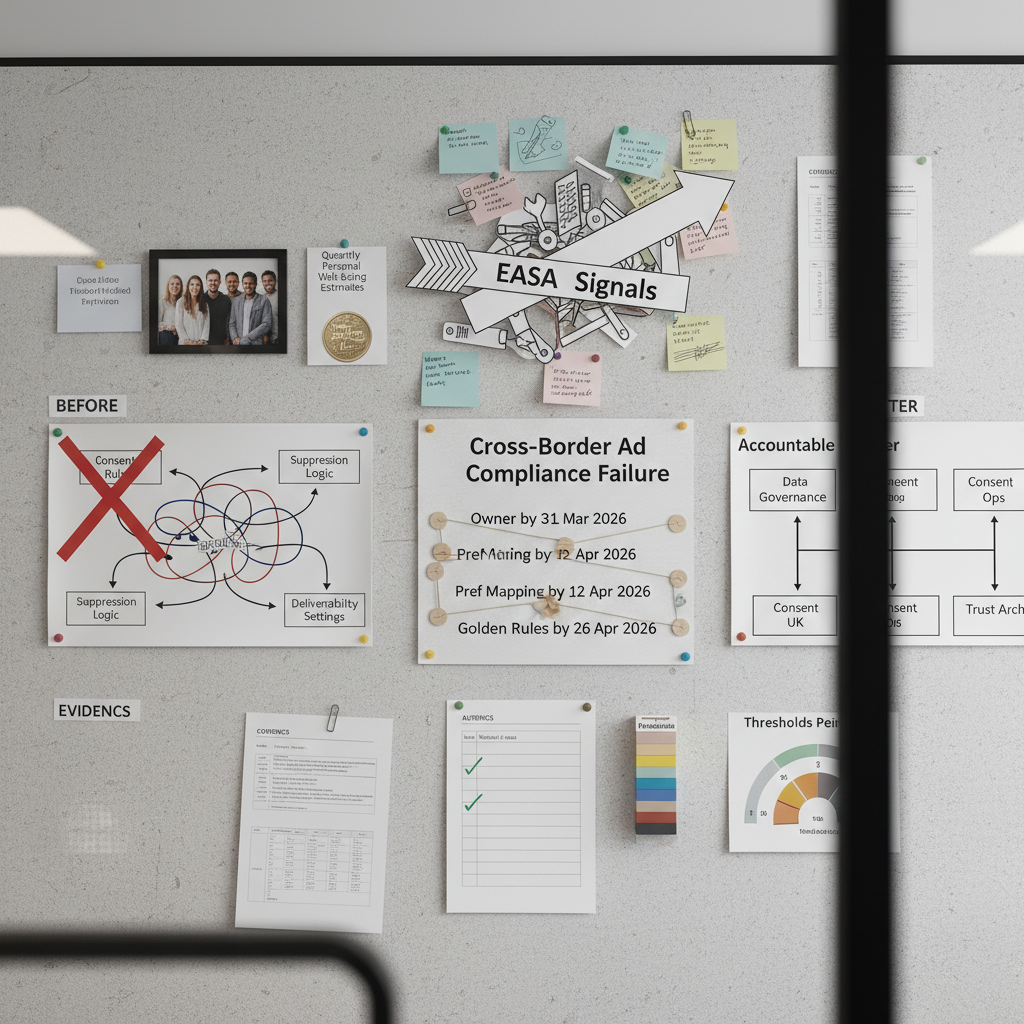

EASA signals are not the story on their own. The real issue is what they expose inside campaign operations: one team owns consent language, another owns the ESP, someone else tunes deliverability, and the customer record gets caught in the middle. When that happens across borders, the gap is not theoretical. It shows up as delayed sends, mismatched suppression, awkward audit questions and, if you are unlucky, a complaint you should have seen coming.

This is a delivery assurance note, so the test is simple. Can you name the owner, the date, the rule and the checkpoint for each cross-border consent decision? If not, you do not yet have control. You have a bit of policy, a bit of platform logic and too much hope. For teams working on UK data governance priorities, that is the fix: turn signals into owned operational rules.

Signal baseline

EASA’s cross-border signals are best read as an enforcement and oversight prompt, not a new statute. They point to a stricter expectation that advertisers can evidence how claims, targeting and customer-data handling hold up across markets, even where local implementation differs. For UK teams running activity into France, Germany or Spain, that matters because your campaign workflow has to survive more than one regulator’s line of questioning.

The same principle shows up in the UK data picture. ONS wellbeing datasets and their local authority breakdowns exist because broad national averages hide operational differences between places and populations. Weekly deaths datasets are also published at region, local authority, health board, age and sex level for the same reason: once scrutiny increases, coarse reporting stops being enough. Cross-border ad compliance works the same way. A single global rule may look tidy in a slide deck, but it falls apart if local consent logic, suppression behaviour and service-message exceptions are not explicit.

Yesterday, after stand-up, ticket CMP-411 was blocked by a suppression file conflict between the ESP and the CDP consent flags for a pan-European campaign. A quick call with the data owner cleared the dependency. New date set: 29 March 2026 for rule review and retest. That is the point. These issues do not start in a courtroom. They start in a queue.

What is shifting

The awkward bit is the collision between customer-data rules and deliverability settings. Email teams tune for inbox placement. Compliance teams tune for lawful basis, preference handling and auditability. Both are doing sensible work, but the platforms do not always share the same definition of customer intent.

A common example is the List-Unsubscribe header. It is good practice for deliverability and mailbox-provider trust. Fine. But if a customer in Manchester clicks once, what exactly have they withdrawn from: one newsletter, all promotional mail, a partner stream, or every non-service communication? If the ESP applies a blunt global suppression while the CRM records a narrower preference change, you have created two versions of the truth. That is not a compliance strategy. That is drift.

Between 10:00 and 11:30 last Thursday, I rewrote the acceptance criteria for a preference-sync story after an edge case appeared around service notices versus promotional opt-outs. Tests passed once the event taxonomy separated transactional, service and marketing messages. I was wrong about the effort at first; the data feed was trickier than expected. Updated plan: two extra days for mapping and one extra day for regression. Better to say that early than pretend the platform will sort itself out.
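A minimal sketch of what that separation can look like in code, assuming three message classes and a single marketing opt-out flag. The names `MessageClass` and `may_send` are hypothetical, and whether service notices may still go out after a promotional opt-out is a decision for your legal owner, not something this snippet settles.

```python
from enum import Enum


class MessageClass(Enum):
    """Hypothetical taxonomy: one class per message purpose."""
    TRANSACTIONAL = "transactional"
    SERVICE = "service"
    MARKETING = "marketing"


def may_send(message_class: MessageClass, marketing_opt_out: bool) -> bool:
    """Return True if a message of this class may go to the contact.

    Assumption: a promotional opt-out blocks marketing only. Transactional
    and service messages follow their own rules, which are not modelled here.
    """
    if message_class is MessageClass.MARKETING:
        return not marketing_opt_out
    return True
```

With the classes explicit, a promotional opt-out stops `MessageClass.MARKETING` sends while `MessageClass.SERVICE` sends are decided by a separate, documented rule rather than by whichever system processes the event first.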

The practical shift is this: platform best practice is only a baseline. You need brand-specific golden rules for consent states, sync frequency, override rights and exception handling. If those rules are not written down with acceptance criteria, they will be invented ad hoc by whichever system processes the event first.

Who is affected

Marketing feels it first. Audiences shrink without a clean explanation, campaigns miss booked dates and deliverability metrics get blamed for a governance problem. In one Q4 2025 review, an unaudited suppression rule reduced an active marketable audience by 15% over eight weeks. No one spotted it quickly because reporting tracked send volume, not consent-state mismatches.

Compliance and legal then inherit the mess. They get asked to explain whether a contact was suppressed correctly, whether lawful basis was preserved after a preference change, and whether a service update should still have gone out. If the answer depends on digging through ESP logs, CRM records and middleware events by hand, the operating model is already too brittle.

Technology and data teams are usually blamed last and least fairly. Often the platform is doing exactly what it was configured to do. The problem is that no single owner defined the hierarchy of rules across systems. And the customer gets the worst version of it all: inconsistent treatment of preferences, which is how trust starts to leak away one support ticket at a time.

Sharp opinion, because it needs saying: if your plan has no named owners and dates, it is not a plan. It is an aspiration with a RACI attached.

Actions and watchpoints

The path to green is usually shorter than people think, but it does require discipline. Four actions tend to clear the fog.

1. Name one accountable owner.

Owner: Head of Marketing Operations, Data Governance Lead, or equivalent. Not a steering group. Not three people. One person with authority to make the rule hierarchy stick.

Date: appoint by 31 March 2026.

Acceptance criteria: owner named in the operating model; escalation path agreed with Legal, CRM and ESP leads within five working days.

2. Map the preference journey end to end.

Owner: Compliance Delivery Lead with CRM and ESP admins.

Date: complete mapping by 12 April 2026.

Acceptance criteria: every entry point documented, including web forms, preference centres, one-click unsubscribe, customer service updates and API imports; each event linked to source system, downstream systems, sync timing and failure handling.

3. Write the golden rules.

Owner: Compliance Delivery Lead, approved by Legal and Platform Engineering.

Date: draft by 19 April 2026; signed off by 26 April 2026.

Acceptance criteria: single source of truth named; sync threshold set, for example within 15 minutes for marketing-preference updates; manual override rights restricted to named roles; transactional and service-message exceptions defined; change log created for every rule change.
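One way to make those acceptance criteria testable is to hold the golden rules as data rather than as a slide. The sketch below is illustrative only: `GoldenRules`, `RuleChange`, the role names and the choice of "CRM" as source of truth are all assumptions, not a description of any particular platform.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass(frozen=True)
class RuleChange:
    """One change-log entry for a rule change (field names are illustrative)."""
    rule_id: str
    owner: str
    changed_on: date
    reason: str
    rollback_note: str


@dataclass
class GoldenRules:
    """Hypothetical register of the rules that every system must follow."""
    source_of_truth: str               # e.g. "CRM" (assumed, not prescribed)
    marketing_sync_minutes: int        # sync threshold for preference updates
    override_roles: frozenset          # named roles allowed to override manually
    change_log: list = field(default_factory=list)

    def can_override(self, role: str) -> bool:
        """Manual overrides are restricted to the named roles."""
        return role in self.override_roles

    def record_change(self, change: RuleChange) -> None:
        """Append a change-log entry; nothing is ever edited in place."""
        self.change_log.append(change)


rules = GoldenRules(
    source_of_truth="CRM",
    marketing_sync_minutes=15,
    override_roles=frozenset({"data_governance_lead"}),
)
```

The point of the structure is that a reviewer can ask "who may override?" and get an answer from one place, with every change carrying an owner, date and reason.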

4. Put live checks in place.

Owner: Data Operations Manager.

Date: dashboard live by 10 May 2026.

Acceptance criteria: weekly mismatch rate reported; time-to-sync monitored; unsubscribe processing success rate tracked; exception queue reviewed every Monday; red threshold set for any mismatch rate above 0.5% or sync delays above 30 minutes.
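The red threshold in action 4 is simple enough to express as a single check. This is a sketch under stated assumptions: the function name `is_red` is hypothetical, the mismatch rate is passed as a fraction (0.005 for 0.5%), and the sync delay is in minutes.

```python
MISMATCH_RED_THRESHOLD = 0.005   # 0.5% expressed as a fraction
SYNC_DELAY_RED_MINUTES = 30


def is_red(mismatch_rate: float, sync_delay_minutes: float) -> bool:
    """Flag red when either measure is strictly above its threshold."""
    return (
        mismatch_rate > MISMATCH_RED_THRESHOLD
        or sync_delay_minutes > SYNC_DELAY_RED_MINUTES
    )
```

Note that "above" is read strictly here, so a mismatch rate of exactly 0.5% or a delay of exactly 30 minutes does not trip the flag. If your owner wants the boundary treated as red, that is a one-character change and a change-log entry.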

Watch the obvious risks. A global suppression rule may be simpler to maintain, but it can over-block lawful service communications. A local market workaround may solve one complaint and create six undocumented exceptions. Mitigation is boring but effective: a rule register, weekly exception review, and mandatory approval before any suppression logic changes go live. Cheers, sorted, or at least properly controlled.

Evidence and governance checkpoints

If you want this to hold up under scrutiny, measure it like an operational control, not a brand aspiration. Start with three checkpoints that a risk lead can review this quarter.

- Consent-state mismatch rate: percentage of records where CRM, CDP and ESP disagree on marketable status. Target: below 0.5% week on week.

- Preference sync time: median and 95th percentile from source event to downstream update. Target: median below 15 minutes; 95th percentile below 30 minutes.

- Exception ageing: number of unresolved preference or suppression exceptions older than five working days. Target: zero.
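The three checkpoints above can be computed with the Python standard library alone. The sketch below assumes each record is a `(crm, cdp, esp)` tuple of marketable flags and that sync delays arrive as minutes; the function names are hypothetical, the percentile uses the `inclusive` interpolation method (one of several reasonable definitions), and the working-day count ignores public holidays.

```python
from datetime import date, timedelta
from statistics import median, quantiles


def mismatch_rate(records):
    """Share of records where CRM, CDP and ESP disagree on marketable status.

    `records` is an iterable of (crm, cdp, esp) booleans (assumed shape).
    """
    records = list(records)
    if not records:
        return 0.0
    mismatched = sum(1 for flags in records if len(set(flags)) > 1)
    return mismatched / len(records)


def sync_time_summary(delays_minutes):
    """Return (median, 95th percentile) of sync delays; needs 2+ values."""
    p95 = quantiles(delays_minutes, n=100, method="inclusive")[94]
    return median(delays_minutes), p95


def working_days_between(opened: date, today: date) -> int:
    """Count Monday-to-Friday days after `opened`, up to and including `today`."""
    days = 0
    current = opened
    while current < today:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday to Friday
            days += 1
    return days


def aged_exceptions(opened_dates, today: date, limit: int = 5) -> int:
    """Number of open exceptions older than `limit` working days."""
    return sum(1 for d in opened_dates if working_days_between(d, today) > limit)
```

Whatever definitions you pick for the percentile and the working-day calendar, write them down once and keep them stable, otherwise the weekly trend is not comparable.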

Then keep the traceability. Every change to suppression logic, unsubscribe handling or consent taxonomy needs an owner, date, reason and rollback note. That is not admin for admin’s sake. It is how you answer a challenge without three teams reconstructing the past from screenshots.

The ONS datasets cited above are a useful reminder that serious oversight depends on segmented evidence, regular publication and definitions that do not shift every week. Cross-border ad compliance needs the same mindset. Consistent terms. Stable measures. Named owners. Review dates in the diary.

What to do next

EASA signals should prompt a control review, not a panic. The practical question is who owns the rule when customer-data permissions and deliverability settings point in different directions. If the answer is vague, fix that first. Then map the flow, write the acceptance criteria and monitor the mismatch rate. That is the path to green.

If your team is a bit tight on time and needs a clear operating model rather than another abstract governance workshop, Holograph can help. We will work with your owners to define the rule hierarchy, set measurable checkpoints and build a change log that stands up in delivery, not just in policy. Contact Holograph to sort the next review with named owners and dates.