Creative ideas do not fail because the room lacked ambition. They fail because nobody can show who owns the awkward bits, which approval is still open, or what happens if a supplier misses a date. This delivery assurance note sets out how a UK on-pack activation moved from a hopeful concept to an auditable programme with clear controls and measurable outcomes.

The short version is simple enough: we named owners, fixed dates, replaced risky mechanics, and made the measurement plan part of delivery rather than a clean-up job at the end. Bit tight on time, yes. Still better than pretending a vague plan will sort itself.

Situation

In Q3 2024, we were brought in on a national FMCG on-pack activation built around QR entry and a digital reward mechanic. The brief had momentum. The delivery base did not. Key suppliers had been proposed but not fully contracted, the risk log lived across email threads, and no single owner had been assigned to compliance, data processing, or launch readiness.

The sharpest issue sat inside the promotional mechanic. The inherited concept used a social sharing route that leaned towards a "most shares wins" outcome. From a UK promotions perspective, that is exactly the sort of thing that creates fairness and compliance risk if the selection method is not explicit and auditable. We pushed the mechanic towards a defensible route, with the winner selection process and claims path documented clearly. That is not creative vandalism. It is basic operational hygiene.

A fast supplier audit in October 2024 exposed a second problem. Responsibilities overlapped across QR delivery, microsite build, and prize fulfilment, yet no single accountable model covered the end-to-end data journey. I was wrong about the effort at first; the data feed was trickier than expected, and the hand-offs between vendors were where risk was hiding. We issued an updated plan with buffers, owners, and revised acceptance criteria by 15 October 2024. Without that reset, the project had a decent chance of reaching launch week with unresolved legal and technical exposure.

Approach

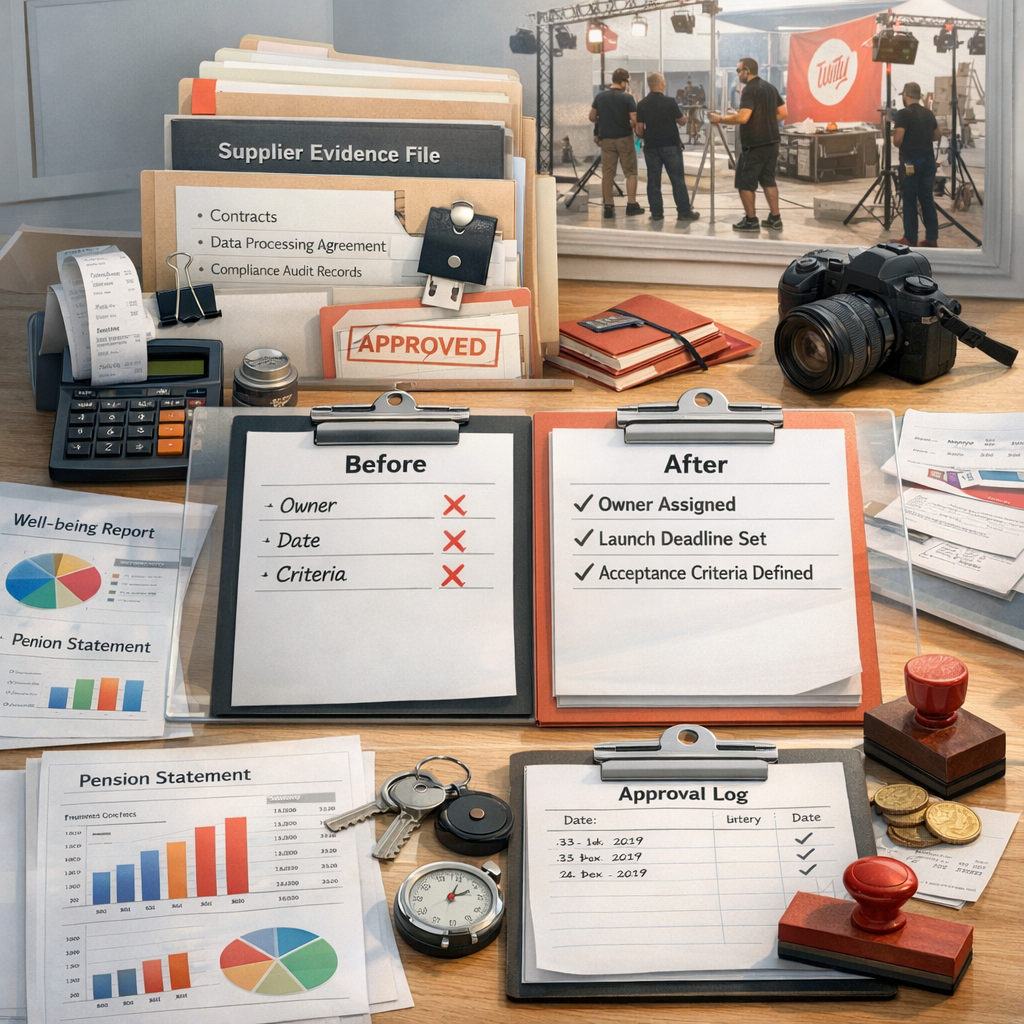

We rebuilt the programme as a delivery plan rather than a deck. Every workstream had an owner, a date, and acceptance criteria. Weekly RAID calls started on Tuesdays at 10:00, chaired by Holograph delivery, with supplier leads and the client marketing owner present. Risks were logged with status, mitigation, and path to green. If your plan has no named owners and dates, it is not a plan. Fix it.
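
The "no named owners and dates" test is easy to automate against a risk log. What follows is a minimal sketch, not the tooling used on this programme: the `RaidItem` fields, the sample entries, and the dates are all hypothetical and only mirror the status, mitigation, owner, and date columns described above.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class RaidItem:
    # Hypothetical field names; they mirror the log columns described in the text.
    title: str
    status: str                   # "red", "amber", or "green"
    mitigation: str
    owner: Optional[str] = None   # named person or role accountable for the item
    due: Optional[date] = None    # agreed date for the next action


def unowned_or_undated(items: list[RaidItem]) -> list[RaidItem]:
    """Return every item that is missing an owner or a date."""
    return [item for item in items if not item.owner or item.due is None]


# Illustrative entries only; titles and dates are invented for the example.
log = [
    RaidItem("QR API rate limit", "amber", "Raise capacity with platform owner",
             owner="Supplier lead", due=date(2024, 11, 5)),
    RaidItem("Data processing terms unsigned", "red", "Chase signed terms"),
]

gaps = unowned_or_undated(log)
print([item.title for item in gaps])
```

Anything the check returns is a candidate agenda item for the next RAID call.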

The first formal checkpoint was supplier and governance alignment, signed off by the client marketing lead on 15 October 2024. The second was approval of the revised mechanic, legal wording, and claims process in January 2025. We also tightened supplier evidence requirements: signed contracts, data processing terms, security documentation, and fulfilment SLAs were reviewed before launch readiness could move to green. One partner was consolidated out of the flow to reduce duplicate responsibility and make accountability clearer.

One example is worth keeping because this is what delivery work actually looks like. After stand-up, the QR integration was blocked by a supplier API rate limit that had not been surfaced in the early planning. A quick call with the platform owner cleared the capacity issue and a revised date was agreed the same day. Nothing glamorous there, but useful. Risks move when someone owns them, speaks to the dependency, and resets the date in plain English.

From there, we put proper acceptance criteria around the build and launch checks: expected scan volumes under peak load, fulfilment processing against SLA, opt-in data capture rules, and approval gates for legal, brand, and operations. Between the final pre-launch sprint window and UAT, the acceptance criteria for the reward flow were rewritten to cover edge cases around duplicate claims and incomplete customer records. Tests passed once those were covered. That saved a messy argument later.
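
To make the duplicate-claim and incomplete-record edge cases concrete, here is a rough sketch of the kind of check such acceptance criteria might translate into. It is an illustration under assumptions, not the production reward flow: the required fields (`email`, `pack_code`) and the rule that a duplicate means the same normalised email and pack code are hypothetical.

```python
# Hypothetical required fields; the real claim record is not described in the text.
REQUIRED_FIELDS = ("email", "pack_code")


def check_claims(claims):
    """Split claims into accepted and rejected, recording a reason for each rejection."""
    seen = set()
    accepted, rejected = [], []
    for claim in claims:
        # Incomplete record: a required field is absent or empty.
        missing = [field for field in REQUIRED_FIELDS if not claim.get(field)]
        if missing:
            rejected.append((claim, "incomplete: " + ", ".join(missing)))
            continue
        # Duplicate claim: same email (case and whitespace ignored) and pack code.
        key = (claim["email"].strip().lower(), claim["pack_code"])
        if key in seen:
            rejected.append((claim, "duplicate"))
            continue
        seen.add(key)
        accepted.append(claim)
    return accepted, rejected


sample = [
    {"email": "a@example.com", "pack_code": "P1"},
    {"email": "A@example.com ", "pack_code": "P1"},  # same entrant after normalisation
    {"email": "", "pack_code": "P2"},                # missing email
]
accepted, rejected = check_claims(sample)
print(len(accepted), [reason for _, reason in rejected])
```

Writing the rejection reason alongside each record keeps the exception handling traceable when a customer queries a claim.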

How evidence was kept audit-ready

Case studies often skip the evidence trail and jump straight to the shiny numbers. Procurement teams do not have that luxury, and neither do we. For this programme, the evidence pack covered supplier due diligence, approval history, change logs, and the route from customer entry to fulfilment. That matters because a promotion should feel fair and verifiable, not theatrical.

We kept a dated log of key approvals across mechanic wording, terms and conditions, data handling, prize fulfilment, and launch readiness. Change control was simple: what changed, who approved it, and when. Nothing heroic, just traceable. The winner and reward mechanics were documented in consumer-facing language so the route in, the claim process, and the basis for selection could be understood without hidden steps. That follows the practical compliance pattern UK teams need when platform rules and promotional scrutiny can shift quickly.

Performance evidence was built in early. Dashboard requirements were defined before launch, not after. That meant the performance wrap pulled from agreed sources rather than from three different agencies trying to explain three different numbers. Where external benchmarks were needed, we kept to named, public datasets. For example, when discussing broader context around public mood or regional pressure on services, Office for National Statistics sources remain the right anchor: quarterly personal well-being estimates, local authority well-being estimates, and weekly death registrations by region and local area. Those datasets are useful for context. They are not a substitute for activation KPIs, so we did not force them into claims they cannot support.

Outcomes

The activation launched on 1 March 2025 and ran for 12 weeks. Because the measurement plan and delivery checkpoints were agreed upfront, the final readout held together. No last-minute hunt for numbers, no debate over which dashboard was real.

- Scan and play rate: 12.8% across 2 million packs against an 8% target.

- Unique entrants: 256,000 across the campaign period.

- Loyalty opt-ins: 47,250 opted into the new scheme, equal to 18.5% of entrants.

- Fulfilment SLA: 99.8% of 50,000 tiered rewards processed within the agreed 28-day window.
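
The headline figures can be cross-checked with simple arithmetic. The sketch below assumes the scan and play rate is unique entrants divided by packs, which the figures are consistent with but the readout does not state explicitly; the variable names are illustrative.

```python
packs = 2_000_000
entrants = 256_000
opt_ins = 47_250
rewards = 50_000
sla_rate = 0.998  # share of rewards processed within the 28-day window

# Assumption: scan and play rate = unique entrants / packs.
scan_rate = entrants / packs
opt_in_rate = opt_ins / entrants
within_sla = round(rewards * sla_rate)

print(f"Scan and play rate: {scan_rate:.1%}")       # 12.8%
print(f"Opt-in rate: {opt_in_rate:.1%}")            # 18.5% (18.46% before rounding)
print(f"Rewards within SLA: {within_sla:,}")        # 49,900
```

On these numbers, roughly 100 of the 50,000 rewards fell outside the agreed window.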

Those are strong results for a UK experiential campaign, and they hold up because the numbers were agreed, sourced, and checked rather than inflated in hindsight. More to the point, the client could evidence how the result was produced: supplier controls, dated approvals, compliance decisions, and exception handling were all visible. Marketing had room to focus on the audience experience because legal, procurement, and operations could see the delivery mechanics were under control.

There were trade-offs. Supplier consolidation improved accountability, but it also forced some early replanning. The data feed took longer than expected, and the revised buffers reduced room for late creative changes. I would still take that trade every time. Better a slightly firmer process in November than a public scramble in March.

Lessons for others

The practical lesson is not complicated. Start with scope, owners, dates, and acceptance criteria. Then test the ugly bits early: fairness of mechanic, supplier overlap, data responsibility, claims handling, and peak-load behaviour. If one of those has no owner, you have found your next risk, not a minor admin task.

Run a pre-mortem before launch. We did ours in January 2025 with delivery, legal, and supplier leads in the room. The output was more useful than a polished status deck: clear mitigations for complaints handling, fulfilment exceptions, and operational disruption. On a later launch day, poor weather created field changes that could have caused chaos. Because the contingency sat in the risk log with an owner and action, the team adjusted quickly. Not elegant. Effective. Sorted.

The final point is about case study quality. If the story cannot show who approved what, when suppliers were evidenced, which risk nearly pushed the plan off course, and what moved the result back to green, it is not yet a useful case study. It is marketing copy wearing a hard hat.

If you are reviewing an activation and the plan still lives across loose email threads, bring it into the open. Book a chemistry session with the Holograph studio team and we will work through the owners, dates, approvals, and risk controls needed to make the next performance wrap stand up to scrutiny. Cheers.