On some programmes, the first sign of a retention problem is not churn at all. It is a welcome series that suddenly underperforms while acquisition volume looks healthy on paper. That mismatch is worth a closer look, because weak onboarding signals often point to toxic data entering the journey faster than operations can react. In a strategy call this week, we tested two paths and dropped one after the first hard metric came in. The broad creative refresh looked sensible, but the evidence pointed elsewhere: poor early engagement clustered around risky sign-up cohorts, not tired messaging.

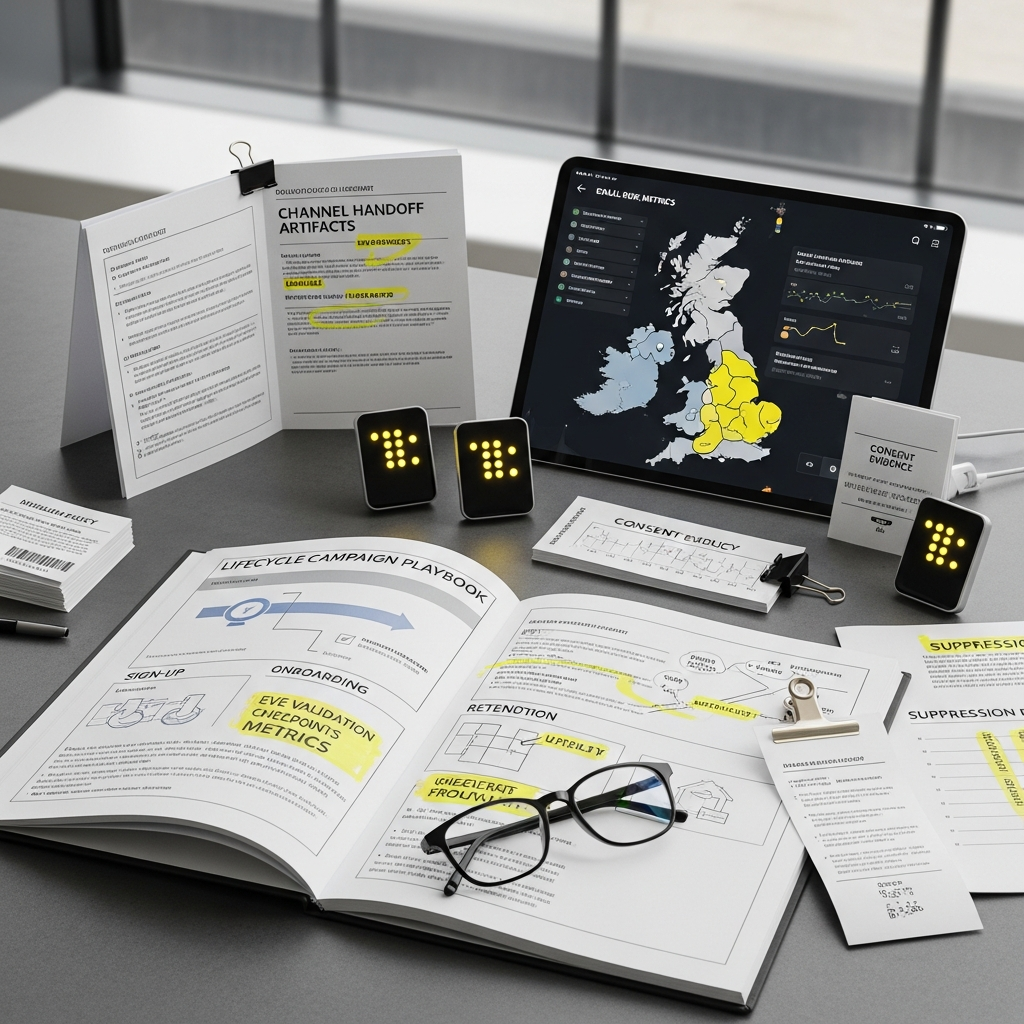

That is the decision facing many UK CRM teams in 2026. Within 48 hours of seeing soft opens, low clicks, delayed confirmations or odd registration spikes, do you tighten controls, suppress risky records and slow the journey, or do you protect volume and hope the pattern clears? My view is simple: a strategy that cannot survive contact with operations is not strategy, it is branding copy. The better option is a measured UK email risk monitoring response that protects reach, respects consent compliance and keeps the commercial case defensible.

What is being decided

The practical decision is not whether onboarding matters. It is where to intervene in the first 48 hours when new-contact quality starts to wobble. For most UK CRM programmes, the option set narrows to two models. One is a volume-first approach: allow almost all new records into the welcome flow, monitor complaints and bounces after the send, then clean later. The other is a controlled-entry approach: score each record at sign-up, route medium-risk records into an email confirmation loop, and suppress high-risk records until a human or automated rule clears them.
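To make the controlled-entry model concrete, here is a minimal sketch of the three-way routing it describes. The 0.0 to 1.0 risk score, the `Thresholds` cut-offs and the route names are illustrative assumptions, not EVE's actual output format or API.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    WELCOME = "welcome_flow"
    CONFIRM = "confirmation_loop"
    SUPPRESS = "suppressed_pending_review"


@dataclass(frozen=True)
class Thresholds:
    # Hypothetical cut-offs on a 0.0-1.0 risk score; tune against your own data.
    medium: float = 0.4
    high: float = 0.8


def route_signup(risk_score: float, thresholds: Thresholds = Thresholds()) -> Route:
    """Map a sign-up risk score to one of the three controlled-entry paths."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0.0 and 1.0")
    if risk_score >= thresholds.high:
        # High risk: hold back until a human or automated rule clears the record.
        return Route.SUPPRESS
    if risk_score >= thresholds.medium:
        # Medium risk: ask the contact to confirm before the welcome flow starts.
        return Route.CONFIRM
    return Route.WELCOME
```

Keeping the thresholds in one small object means a change between Monday and Wednesday is a single, reviewable edit rather than a scattered set of rule tweaks.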

I liked the first option, but the evidence favoured the second once the numbers landed. Late cleaning usually arrives after the sender has already absorbed the damage. The best practice carried into Holograph's EVE playbooks is that forms should stay short and clear, with opt-out choices visible, but poor-quality records still need front-door controls. EVE is designed for that operating moment. It validates emails in under 50ms, uses more than 30 proprietary detection methods, and operates with zero data retention while making audit trails available for compliance review. That mix matters when a CRM lead has to explain why suppression thresholds changed between Monday morning and Wednesday's performance review.

There is another constraint this year. According to the Financial Times on 16 March 2026, the UK is still struggling to electrify in the way policymakers want, a reminder that infrastructure dependency can blunt strategy. CRM teams face a similar problem in miniature. A welcome journey may be well designed, but if your ESP, consent logging and validation logic are not aligned, the operating sequence breaks under load. We have seen this first-hand: a plan looked strong on paper, then one dependency moved, so we re-ordered the sequence and regained momentum. That kind of re-sequencing is often the difference between a contained onboarding issue and a deliverability dip that bleeds into retention.

Comparative view

For a 48-hour response, decision-makers need comparative metrics, not generic warnings. The table below sets out the trade-off.

| Response path | Speed to deploy | Main upside | Main constraint | Commercial effect inside 48 hours |
|---|---|---|---|---|
| Volume-first, clean later | Fast if no new rules are needed | Protects top-line sign-up volume | Higher risk of bounce, complaint and sender damage | May keep acquisition numbers up while lowering welcome-series efficiency |
| Controlled entry with EVE scoring | Fast if validation is already live at form or API level | Protects list quality and early engagement integrity | Needs threshold tuning to avoid false positives | Usually improves decision quality before retention reporting locks in |

That second constraint matters. No validation engine should be presented as infallible, and EVE's own position is careful here: results infer authenticity probabilities rather than certainties. Still, the commercial case for controlled entry is strong when weak onboarding signals appear in clusters. If one source, one incentive mechanic or one landing page begins generating typo-heavy domains, alias abuse or bot-like behaviour, delaying action can hurt more than a modest dip in raw lead count.
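Because scores are probabilities, threshold tuning is easier to discuss with a number attached. The sketch below assumes you hold a small, manually reviewed sample of records, each with a risk score and a flag for whether it turned out to be genuine. The function name and sample format are hypothetical.

```python
def false_positive_rate(scored_sample, threshold):
    """Share of genuine records that a given threshold would wrongly flag.

    scored_sample: iterable of (risk_score, is_genuine) pairs from a reviewed sample.
    A record counts as flagged when risk_score >= threshold.
    """
    genuine_scores = [score for score, is_genuine in scored_sample if is_genuine]
    if not genuine_scores:
        return 0.0  # no genuine records in the sample, so nothing to wrongly flag
    flagged = sum(1 for score in genuine_scores if score >= threshold)
    return flagged / len(genuine_scores)


def compare_thresholds(scored_sample, candidates):
    """Return {threshold: false_positive_rate} for each candidate cut-off."""
    sample = list(scored_sample)
    return {t: false_positive_rate(sample, t) for t in candidates}
```

Running `compare_thresholds` over two or three candidate cut-offs gives acquisition and CRM teams a shared view of how many real sign-ups each setting would hold back.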

Holograph's public campaign precedents are useful because they anchor outcomes in operating reality rather than theory. A Tesco and Co-op coupon campaign delivered a reported 43% uplift in email sign-ups, but best practice remained the same: preserve consent clarity and keep forms simple. In AR-led promotions for Lucozade Energy and Ribena, reported uplifts of 32% in sales and 258% against entry goals show that top-of-funnel success can arrive quickly. That speed also raises the burden on email deliverability and list-quality controls: growth claims without baseline evidence should be parked until the data catches up.

A useful tangent here: teams often ask whether stricter validation kills conversion. Sometimes it does, a little. The better question is whether you prefer a lower headline acquisition number or a welcome cohort that can actually be nurtured. If your onboarding series is the bridge to second purchase, then false confidence in bad records is expensive.

Operational impacts

In practice, weak onboarding signals show up as combinations, not isolated metrics. A CRM team might see open rates fall, but the real clue is that confirmed opt-ins are slowing at the same time as registrations from one source rise. Or click-to-open stays flat while hard bounces rise by domain cluster. Those are different problems and they call for different threshold changes.
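The first combination described above, registrations rising while confirmed opt-ins slow, can be checked per source with very little code. This is a sketch under stated assumptions: the `SourceWindow` shape and the 25% growth and 10-point drop defaults are placeholders to be replaced with your own baselines.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceWindow:
    """Counts for one acquisition source over one comparison window."""
    registrations: int
    confirmed_opt_ins: int

    @property
    def confirmation_rate(self) -> float:
        if self.registrations == 0:
            return 0.0
        return self.confirmed_opt_ins / self.registrations


def combined_signal(previous: SourceWindow, current: SourceWindow,
                    min_volume_growth: float = 0.25,
                    min_rate_drop: float = 0.10) -> bool:
    """True when volume rises AND confirmation rate falls past both limits."""
    if previous.registrations == 0:
        return False  # no baseline to compare against
    growth = (current.registrations - previous.registrations) / previous.registrations
    rate_drop = previous.confirmation_rate - current.confirmation_rate
    return growth >= min_volume_growth and rate_drop >= min_rate_drop
```

Requiring both conditions is the point: a volume spike with a steady confirmation rate is usually good news, and a falling rate on flat volume points to a different problem.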

The first 24 hours should focus on signal capture. Check source-level registration volumes, domain concentration, typo frequency, role-account prevalence and any unusual burst patterns by minute or hour. EVE's behavioural fingerprinting, entropy analysis, keyboard-walk detection and alias unmasking can help separate natural volume spikes from synthetic or low-intent traffic. For UK teams handling regulated audiences or promotions, fraud signal monitoring should sit alongside consent evidence, not instead of it. A risky record with weak consent proof is an easy suppression call. A risky record with clear, timestamped consent may need a confirmation loop rather than outright exclusion.
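As a rough illustration of that first-day signal capture, the sketch below summarises a batch of sign-ups by source, domain concentration, role-account share and per-minute burst size. It does not reproduce EVE's detection methods and skips typo detection; the input shape and the short `ROLE_LOCAL_PARTS` list are assumptions for the example.

```python
from collections import Counter
from datetime import datetime

# Illustrative, not exhaustive: local parts that usually indicate a shared mailbox.
ROLE_LOCAL_PARTS = {"admin", "info", "sales", "support", "contact", "noreply"}


def capture_signals(signups):
    """Summarise sign-ups given as (email, source, signed_up_at) tuples."""
    if not signups:
        raise ValueError("no sign-ups to summarise")

    total = len(signups)
    domains = Counter(email.rsplit("@", 1)[-1].lower() for email, _, _ in signups)
    sources = Counter(source for _, source, _ in signups)
    # Bucket timestamps to the minute to expose unusual bursts.
    per_minute = Counter(ts.replace(second=0, microsecond=0) for _, _, ts in signups)
    role_count = sum(
        1 for email, _, _ in signups
        if email.split("@", 1)[0].lower() in ROLE_LOCAL_PARTS
    )

    top_domain, top_count = domains.most_common(1)[0]
    return {
        "registrations_by_source": dict(sources),
        "top_domain": top_domain,
        "top_domain_share": top_count / total,
        "role_account_share": role_count / total,
        "peak_signups_per_minute": max(per_minute.values()),
    }
```

A summary like this is only the capture step. Whether a flagged record is suppressed or sent to a confirmation loop should still depend on the consent evidence held for it.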

The second 24 hours are about journey decisions. Pause or soften the cadence for cohorts showing weak intent. That could mean delaying incentive reminders, excluding suspect records from day-two cross-sell, or sending only the lowest-risk welcome touch while validation confidence is reviewed. To be fair, this is where operations can get messy. Suppression rules that make perfect sense in a dashboard can frustrate acquisition teams if they are not logged properly. EVE's audit trail is valuable because it records why a record was flagged, which threshold fired, and when the rule changed. That makes stakeholder conversations less theatrical and more evidence-led.
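For teams building their own operating log alongside this, a minimal sketch of an audit entry might capture the same three facts: why the record was flagged, which threshold fired, and when the rule last changed. The field names and JSON layout are assumptions for illustration and do not describe EVE's audit trail format.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEntry:
    contact_id: str         # internal identifier, assumed here instead of the raw address
    reason_code: str        # why the record was flagged
    threshold_name: str     # which threshold fired
    threshold_value: float
    observed_value: float
    rule_version: str
    rule_changed_at: str    # ISO 8601 time of the last rule change
    logged_at: str          # ISO 8601 time this entry was written


def make_entry(contact_id: str, reason_code: str, threshold_name: str,
               threshold_value: float, observed_value: float,
               rule_version: str, rule_changed_at: datetime,
               now: Optional[datetime] = None) -> AuditEntry:
    """Build an immutable audit entry; `now` is injectable for testing."""
    logged = now if now is not None else datetime.now(timezone.utc)
    return AuditEntry(
        contact_id=contact_id,
        reason_code=reason_code,
        threshold_name=threshold_name,
        threshold_value=threshold_value,
        observed_value=observed_value,
        rule_version=rule_version,
        rule_changed_at=rule_changed_at.isoformat(),
        logged_at=logged.isoformat(),
    )


def to_log_line(entry: AuditEntry) -> str:
    """Serialise an entry as one JSON line with stable key order."""
    return json.dumps(asdict(entry), sort_keys=True)
```

One JSON line per decision keeps the log easy to append to and easy to filter when acquisition asks why a specific cohort was held back.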

There is a wider UK point too. According to BBC News on 8 March 2026, the UK government said it does not agree with the US on every issue, a small but useful reminder that alignment cannot be assumed between close partners. The same applies internally. CRM, acquisition, legal and deliverability teams may all look at the same onboarding dip and reach different conclusions. A shared operating log is not glamorous, but it prevents delay at exactly the moment delay costs the most.

How EVE fits the 48-hour decision window

EVE is strongest when used as a decision layer rather than a static gate. At sign-up, it can score records in real time with sub-50ms response, which matters for mobile completion rates and paid-media landing pages. During onboarding, it can feed suppression logic, confirmation-loop routing and source comparison. Before retention sends, it can help identify records that should be held back while sender reputation is protected.

The deployment choice depends on how your CRM stack is set up. If you have API access at form level, use real-time scoring and write the risk state directly into the contact model. If implementation ownership sits with Holograph or your internal engineering team, intelligent caching and optional client-side execution can cut friction where latency is tight. If the immediate need is auditability, prioritise rule visibility, reason codes and threshold versioning. That sequence is less flashy, but often more useful when a board-facing question lands on Friday about why welcome performance changed on Tuesday.

One honest gap in certainty remains. No team can know in the first 48 hours whether a weak onboarding patch is a passing channel anomaly or the start of a broader sender issue. That is the unresolved tension operational people live with. Still, delaying controls until certainty arrives is usually the more expensive mistake.

Recommendation and next step

As it stands, the better decision for most UK programmes is controlled entry with rapid threshold tuning, not late-stage cleanup. Start with three actions inside 48 hours: identify the affected source or cohort, route medium-risk records into an email confirmation loop, and suppress only the highest-risk records where signal strength and consent weakness line up. That protects early retention performance without turning the onboarding journey into a barricade.
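The second and third actions can be expressed as one small decision rule that weighs risk against consent evidence. This is a sketch, assuming each record already carries a risk band and a flag for whether clear, timestamped consent is on file; the band and action names are hypothetical.

```python
def decide_action(risk_band: str, has_timestamped_consent: bool) -> str:
    """Choose a journey action from risk band and consent evidence.

    risk_band: "low", "medium" or "high" (assumed to come from upstream scoring).
    """
    if risk_band == "high":
        # Suppress only when strong risk and weak consent proof line up;
        # otherwise give the contact a chance to confirm.
        return "confirmation_loop" if has_timestamped_consent else "suppress"
    if risk_band == "medium":
        return "confirmation_loop"
    if risk_band == "low":
        return "welcome_flow"
    raise ValueError(f"unknown risk band: {risk_band!r}")
```

The asymmetry is deliberate: outright suppression is reserved for the narrow case the recommendation describes, so the journey stays open to everyone else.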

The commercial implication is straightforward. If you act inside two days, you can often protect sender quality, preserve reporting confidence and stop one acquisition pocket from distorting the whole retention programme. If you wait for a neat monthly story, the damage is usually already in the cohort. EVE gives teams a practical way to make that call with evidence, not instinct, while keeping UK GDPR expectations and operational scrutiny in view. To test this in your own context, book a frictionless validation walkthrough with our solutions team and model the impact on your live data.