
Fast acquisition is useful right up to the point it trashes deliverability. That is the trade-off most teams are really managing, whether they admit it or not. A binary email check might catch a typo, but it will not tell you much about whether a sign-up should pass straight through, pause for proof, or stop before it hits your database.

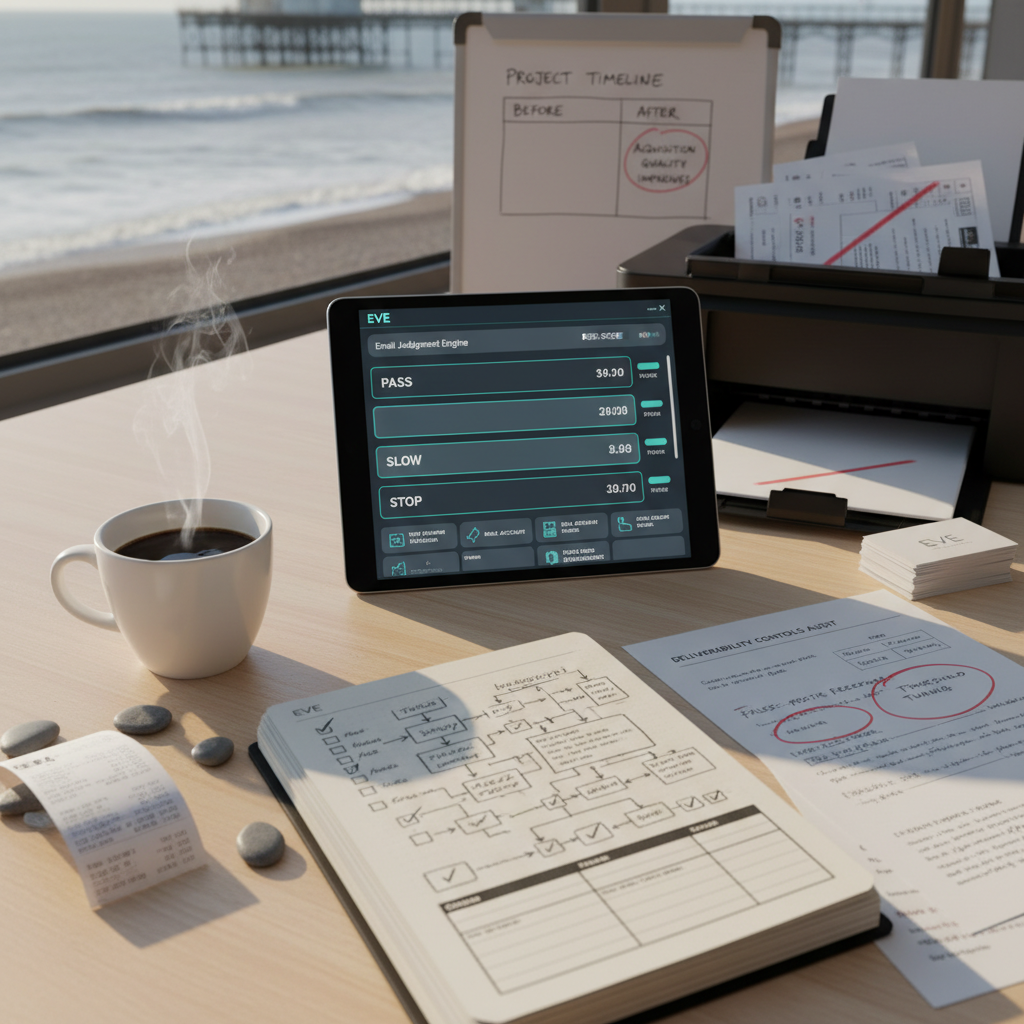

The practical answer is graded email judgement. Treat sign-ups as Pass, Slow, or Stop based on observable signals, then review those rules against bounce rate, complaint rate, and downstream engagement on a set date. If your plan has no named owners and dates, it is not a plan. Fix it.

Context

The problem with pass-or-fail validation usually shows up after the campaign report lands, not before. In one Q4 2025 review, a high-volume acquisition source hit its lead target, but the CRM team flagged a 15% hard bounce rate from that same segment. Every one of those addresses had already passed the existing validation check. On paper, green. Operationally, not green at all.

That matters because deliverability damage spreads. A bad sign-up source does not stay politely contained in one channel. If hard bounces and complaints rise, mailbox providers start treating all your traffic more cautiously. The real risk is not a few untidy records. It is reduced inbox trust across welcome emails, service updates, and marketing sends.

There is a useful discipline test here: measure the failure after the form, not just the form itself. At minimum, the owner of acquisition and the owner of CRM should review three checkpoints every month: hard bounce rate by source, complaint rate by source, and activation or first-click rate by risk tier. If you cannot split those numbers by channel and date range, you are flying on vibes.
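As a rough illustration of the first two checkpoints, here is a minimal Python sketch that splits hard bounce and complaint rates by source. The `SendOutcome` record, its field names, and the sample sources are assumptions made for the example, not a real schema; date-range filtering and the activation-by-tier checkpoint are left out to keep it short.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class SendOutcome:
    source: str         # acquisition channel label (hypothetical values below)
    hard_bounced: bool
    complained: bool


def rates_by_source(outcomes):
    """Return {source: (hard_bounce_rate, complaint_rate)} as fractions."""
    totals = defaultdict(lambda: [0, 0, 0])  # sent, hard bounced, complained
    for o in outcomes:
        t = totals[o.source]
        t[0] += 1
        t[1] += o.hard_bounced  # True counts as 1, False as 0
        t[2] += o.complained
    return {s: (b / n, c / n) for s, (n, b, c) in totals.items()}


# Illustrative data only, not figures from any real campaign.
sample = [
    SendOutcome("partner_a", True, False),
    SendOutcome("partner_a", False, False),
    SendOutcome("organic", False, False),
    SendOutcome("organic", False, True),
]
report = rates_by_source(sample)
```

If a source cannot be populated on every record, that gap is usually the first thing to fix before any threshold discussion.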

What should slow a sign-up

Some signals deserve friction because they are ambiguous rather than outright bad. That is the sweet spot for a sign-up risk scoring model. You are not saying no; you are asking for one more proof point before granting full access.

The signals that should usually trigger a Slow path include:

- Very new domains, such as a registration age under 30, 60, or 90 days, depending on your risk appetite.

- Role-based addresses such as info@, sales@, or admin@, where intent can be legitimate but engagement often runs weaker.

- Source mismatch, where a partner or campaign suddenly sends a pattern of mailbox types or domains you have not seen before.

- Volume spikes from one domain family over a short window, especially if those users do not complete the next expected step.
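The signals above could be sketched as a small rule function. This is an illustration under stated assumptions, not a production scoring model: the `SignUp` fields, the 30-day threshold, and the reason strings are all hypothetical, and the source-mismatch and volume-spike flags are assumed to be computed elsewhere.

```python
from dataclasses import dataclass
from typing import Optional

ROLE_LOCAL_PARTS = {"info", "sales", "admin"}  # the examples named in the list
MIN_DOMAIN_AGE_DAYS = 30  # assumption: choose 30, 60, or 90 per risk appetite


@dataclass
class SignUp:
    email: str
    domain_age_days: Optional[int]  # None when the age is unknown
    source_anomaly: bool = False    # set by a separate source-mismatch check
    volume_spike: bool = False      # set by a separate volume check


def slow_reasons(signup):
    """Return the list of reason codes that would put this sign-up on Slow."""
    reasons = []
    local_part = signup.email.split("@", 1)[0].lower()
    # Unknown age is treated as no signal rather than a bad signal.
    if signup.domain_age_days is not None and signup.domain_age_days < MIN_DOMAIN_AGE_DAYS:
        reasons.append("domain_age_below_threshold")
    if local_part in ROLE_LOCAL_PARTS:
        reasons.append("role_account")
    if signup.source_anomaly:
        reasons.append("source_anomaly")
    if signup.volume_spike:
        reasons.append("volume_spike")
    return reasons


def decide(signup):
    """Pass unless at least one Slow signal fired. Stop rules are out of scope here."""
    return "Slow" if slow_reasons(signup) else "Pass"
```

Returning the reasons rather than a bare tier keeps every Slow decision explainable without extra work later.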

The control should be proportionate. A slowed sign-up might get a confirmation email, a one-time code, or a short manual review queue. The acceptance criteria need to be explicit: confirmation clicked within 24 hours, or no account activation; manual review completed by 10:00 the next working day; reason code logged against every delayed record.
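Those acceptance criteria are easy to state and easy to get slightly wrong around weekends, so a small sketch may help. It assumes a Monday-to-Friday working week and ignores public holidays; the function names are made up for the example.

```python
from datetime import datetime, time, timedelta


def confirmation_deadline(slowed_at):
    """Confirmation must be clicked within 24 hours of the sign-up being slowed."""
    return slowed_at + timedelta(hours=24)


def review_deadline(slowed_at):
    """10:00 on the next working day (Mon-Fri only; public holidays ignored)."""
    day = slowed_at.date() + timedelta(days=1)
    while day.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
        day += timedelta(days=1)
    return datetime.combine(day, time(10, 0))


# A sign-up slowed on a Friday afternoon is due for review on Monday morning.
friday = datetime(2026, 3, 6, 15, 0)
print(confirmation_deadline(friday))
print(review_deadline(friday))
```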

Yesterday, after stand-up, ticket EVE-113 was blocked by a partner test feed sending addresses from a domain registered 48 hours earlier. A quick call with James in fraud cleared it. New date set for the live retest the next morning. That is the point of a Slow lane: enough control to catch ambiguity, without pretending every odd signal is malicious.

What should not slow a sign-up

This is where teams often overcorrect. Once a few bad cases surface, the temptation is to add friction everywhere and call it prudent. Usually it is just expensive.

Signals that should not, on their own, slow a sign-up include:

- A free mailbox provider such as Gmail or Outlook. High volume does not equal high risk.

- A syntactically unusual but valid address, provided it passes standards-based validation.

- A first-time domain appearance without any supporting negative signal. New to you is not the same as risky.

- A failed enrichment lookup where third-party data is missing rather than negative.

The rule of thumb is simple enough: do not add friction just because you lack context. Slow a sign-up when the signal changes the probability of poor delivery or abuse in a measurable way. If it does not move a monitored metric, it probably does not belong in the decision engine.
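One way to keep "missing" from silently becoming "negative" is to make the distinction explicit in code. The sketch below assumes an enrichment lookup that returns either `None` (no data) or a dictionary; the `flagged_abuse` key is a hypothetical field, not any real provider's response format.

```python
from enum import Enum
from typing import Optional


class Signal(Enum):
    NEGATIVE = "negative"
    NEUTRAL = "neutral"


def enrichment_signal(lookup_result: Optional[dict]) -> Signal:
    """Map a third-party lookup to a signal without punishing missing data."""
    if lookup_result is None:
        # The lookup failed or returned nothing: lack of context, not evidence.
        return Signal.NEUTRAL
    if lookup_result.get("flagged_abuse"):  # hypothetical field name
        return Signal.NEGATIVE
    return Signal.NEUTRAL
```

Only `Signal.NEGATIVE` would feed a Slow or Stop rule; a neutral result leaves the sign-up on whatever path the other signals chose.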

A decent checkpoint here is false-positive rate. Review, by source and by month, what percentage of slowed users later prove genuine by confirming, activating, or engaging. If that number is high, your threshold is too blunt. Between 14:00 and 15:30 last Thursday, I rewrote the acceptance criteria for a role-account rule after tests showed valid B2B leads were being delayed unnecessarily. Once the edge case for shared inbox procurement teams was covered, the tests passed. Bit tight on time, but sorted.
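The false-positive checkpoint is a simple ratio once the records are grouped. A minimal sketch, assuming each slowed record is a dictionary with `source`, `month`, and three boolean outcome fields (all names are illustrative):

```python
from collections import defaultdict


def false_positive_rate_by_group(slowed_records):
    """Share of slowed sign-ups that later proved genuine, per (source, month)."""
    counts = defaultdict(lambda: [0, 0])  # total slowed, later proved genuine
    for r in slowed_records:
        key = (r["source"], r["month"])
        counts[key][0] += 1
        if r["confirmed"] or r["activated"] or r["engaged"]:
            counts[key][1] += 1
    return {key: genuine / total for key, (total, genuine) in counts.items()}


# Illustrative records only.
records = [
    {"source": "partner_a", "month": "2026-01", "confirmed": True, "activated": False, "engaged": False},
    {"source": "partner_a", "month": "2026-01", "confirmed": False, "activated": True, "engaged": False},
    {"source": "partner_a", "month": "2026-01", "confirmed": False, "activated": False, "engaged": True},
    {"source": "partner_a", "month": "2026-01", "confirmed": False, "activated": False, "engaged": False},
]
rates = false_positive_rate_by_group(records)
```

What counts as "high" is a judgement for the rule owners; the code only makes the number visible.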

Implications for delivery and inbox trust

The case for graded control is not theoretical. In a January 2026 pilot on one high-volume source, a Pass/Slow/Stop model reduced completed sign-ups by 4% because the Stop rules were finally doing their job. More importantly, hard bounce rate dropped from 15% to under 2%, and spam complaints fell by more than 60%. Cost per quality lead improved by 14%.

Those are the measures worth defending in a planning meeting. Volume on its own is a vanity metric if the addresses do not activate, do not engage, or damage sender reputation. Better deliverability controls should show up in at least four places: lower hard bounces, lower complaints, better activation from the pass cohort, and a documented reason code for every slowed or blocked record.

This is also where explainability matters. If an address is slowed or stopped, log the reason: disposable domain, domain age below threshold, role-account rule, or source anomaly. Without that audit trail, you cannot tune thresholds, answer partner queries, or prove whether a decision was sensible. And if you cannot explain why a sign-up was delayed, the model will not hold up under operational pressure.
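A reason-code log does not need to be elaborate. The sketch below uses the four reasons named above as an enum and refuses to serialise a Slow or Stop decision that has no reason attached. The `Decision` fields and the JSON layout are assumptions for illustration.

```python
import json
from dataclasses import asdict, dataclass
from enum import Enum
from typing import List


class ReasonCode(str, Enum):
    DISPOSABLE_DOMAIN = "disposable_domain"
    DOMAIN_AGE_BELOW_THRESHOLD = "domain_age_below_threshold"
    ROLE_ACCOUNT_RULE = "role_account_rule"
    SOURCE_ANOMALY = "source_anomaly"


@dataclass
class Decision:
    signup_id: str
    tier: str                  # "Pass", "Slow", or "Stop"
    reasons: List[ReasonCode]
    decided_at: str            # ISO 8601 timestamp


def to_log_line(decision):
    """Serialise a decision as one JSON line; Slow/Stop must carry a reason."""
    if decision.tier in ("Slow", "Stop") and not decision.reasons:
        raise ValueError("slowed or stopped sign-ups need at least one reason code")
    payload = asdict(decision)
    payload["reasons"] = [r.value for r in decision.reasons]
    return json.dumps(payload, sort_keys=True)
```

Failing loudly on a missing reason is a deliberate choice here: a gap in the audit trail is cheaper to catch at write time than during a partner query.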

Owners, dates, and risk decisions

Thresholds are not a developer-only choice and they are not a marketing-only choice either. They sit across acquisition, CRM, fraud, and data quality. So assign ownership that reflects reality.

A workable model is this:

- Growth lead owns conversion impact and source quality by channel.

- CRM lead owns bounce rate, complaint rate, and activation outcomes by tier.

- Fraud or risk lead owns stop-list rules, abuse pattern review, and evidence pack maintenance.

- Engineering lead owns implementation date, logging, and rollback path.

Put review dates in the diary before launch. A quarterly governance meeting is the minimum; a weekly review for the first four weeks is better. One live example: on 5 March 2026, a planning session surfaced a disagreement over whether all role-based addresses should default to Slow. Fraud wanted the brake on immediately; B2B sales pushed back because small firms often start with shared inboxes. Fair objection. The path to green was evidence, not opinion. Chloe, supporting CRM operations, was given the action to analyse engagement and complaint rates for existing role-based accounts by 2 April 2026, then bring the data to the next rule review.

That kind of tension is healthy. Pretending the threshold is obvious usually means nobody has checked the numbers.

Actions to consider

If you are still on a binary valid-or-invalid model, do not try to rebuild everything at once. Start with one source, one review cadence, and one owner per decision. A practical first pass looks like this:

- Baseline the current risk. Owner: CRM lead. Date: by 30 April 2026. Measure 90 days of sign-ups by source and tag hard bounces, complaints, disposable domains, and low-engagement cohorts.

- Define the first Stop rules. Owner: Fraud or risk lead. Date: by 15 May 2026. Keep it narrow: known disposable providers, invalid syntax, and confirmed abuse patterns. Acceptance criteria: every rule has a reason code and rollback note.

- Pilot one Slow rule. Owner: Engineering lead with CRM support. Date: by 31 May 2026. Start with a domain-age threshold or a role-account review path. Acceptance criteria: slowed users receive confirmation within five minutes; queue is reviewed by 10:00 each working day.

- Review false positives and delivery impact. Owners: Growth and CRM. Date: first review seven days post-launch, then monthly. Track hard bounce rate, complaint rate, confirmation completion, and activation by Pass, Slow, and Stop.

Two risks usually show up early. One, the Slow queue becomes an unloved admin pile. Mitigation: set a review SLA and make one named owner accountable each morning. Two, teams keep adding rules because they sound sensible. Mitigation: no rule goes live without a measurable hypothesis, a review date, and a rollback condition.
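The second mitigation can be enforced mechanically rather than by memory. A minimal sketch, assuming rules are described by a small record before they are deployed; the `Rule` fields and the example rule are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Rule:
    name: str
    hypothesis: Optional[str] = None          # the measurable effect expected
    review_date: Optional[date] = None
    rollback_condition: Optional[str] = None


def missing_fields(rule):
    """List which of the three go-live requirements are absent."""
    required = {
        "hypothesis": rule.hypothesis,
        "review_date": rule.review_date,
        "rollback_condition": rule.rollback_condition,
    }
    return [name for name, value in required.items() if not value]


def can_go_live(rule):
    return not missing_fields(rule)


# Illustrative rule only; the wording of each field is made up.
draft = Rule(name="domain_age_under_30_days", hypothesis="lowers hard bounce rate for the pilot source")
print(missing_fields(draft))
```

A check like this could sit in a deployment pipeline or a review checklist; either way, the rule that "sounds sensible" has to arrive with its evidence plan attached.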

The short version is this: slow sign-ups when the signal is predictive of delivery or abuse risk, and do not slow them when the signal is merely unfamiliar. Keep the model explainable. Keep the owners named. Keep the dates real. If you want to map your current sign-up flow into a Pass, Slow, Stop model without making a mess of conversion, contact Holograph. We can help you define the thresholds, owners, and checkpoints that give you a sensible path to green.