Signal-led publishing rarely breaks at the glamorous bit. It breaks in the hand-offs: who owns the next move, which claim is actually cleared, whether anyone can see that the same angle ran six weeks ago, and why a routine piece has been stuck in review since Tuesday. More drafting capacity has exposed the plumbing.

That matters because editorial workflow automation is only useful when the rules are visible. If a platform cannot explain its decisions, it does not deserve your budget. The first fixes are usually quite plain: cleaner status logic, tiered approvals, scoped memory, and a hard distinction between a signal worth noticing and a story worth publishing.

Signal baseline

Most teams do not have an ideas problem. They have a systems problem. Signals live in one tool, approvals in another, source notes in a thread nobody can find, and institutional memory in the head of the busiest person in the room. You can still publish like this for a while. It just gets overcomplicated the moment volume rises.

The broader pressure is real. Around 10 to 11 March 2026, market reporting across Yahoo Finance and vendor announcements pointed in the same direction: more investment in AI infrastructure, more enterprise automation, more pressure to prove efficiency. That does not automatically mean better publishing. It means senior teams are more likely to ask editorial and marketing operations to move faster with the same headcount. The trade-off is blunt: speed bought without governance usually comes back as rework.

I’d start with four checks. Can every incoming signal be tagged by source, confidence and relevance? Can someone see whether the topic, claim or angle has already been covered? Is there a named approver for regulated, legal or reputation-sensitive material? Can the team measure turnaround time from brief to publish? If two of those are missing, the workflow is not scaled; it is merely coping.
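The four checks are simple enough to write down as a scorecard. The sketch below is a hypothetical way to record the answers and apply the "merely coping" rule. The class and field names are illustrative, not part of any tool, and it assumes "two missing" means "two or more missing".

```python
from dataclasses import dataclass


@dataclass
class WorkflowAudit:
    """Hypothetical yes/no answers to the four workflow checks."""
    signals_tagged: bool            # every incoming signal tagged by source, confidence, relevance
    prior_coverage_visible: bool    # can someone see if the topic, claim or angle already ran?
    sensitive_approver_named: bool  # named approver for regulated, legal or reputation-sensitive work
    turnaround_measured: bool       # brief-to-publish time is tracked

    def missing(self) -> list[str]:
        """Return the names of the failing checks, in the order they are asked."""
        checks = [
            ("signal tagging", self.signals_tagged),
            ("prior-coverage visibility", self.prior_coverage_visible),
            ("named sensitive-content approver", self.sensitive_approver_named),
            ("turnaround measurement", self.turnaround_measured),
        ]
        return [name for name, passed in checks if not passed]

    def merely_coping(self) -> bool:
        # Assumption: "two of those are missing" is read as "two or more".
        return len(self.missing()) >= 2
```

For example, a team that tags signals and has a named approver, but cannot see prior coverage or measure turnaround, would come out as merely coping.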

Between 09:00 and 11:30 last Thursday, I tried to trace one stalled approval path and got the small failure you’d expect: three versions of the same draft, two contradictory comments, no clear owner. I fixed it with a simple hack: five valid statuses, each tied to one named role. It was not glamorous. It worked.

What is shifting

A year or two ago, many teams were constrained by drafting capacity. Now drafting is cheaper, and judgement is the scarce asset. That changes the failure pattern. The bottleneck moves from “can we produce a first pass?” to “can we route, review and justify what goes live?”

This is where a lot of signal-led content systems go wrong. They treat signal intake as if it were editorial permission. It isn’t. A credible source gives you something to inspect, not something to publish. Cross-source corroboration matters, and so do caveats. For instance, the Office for National Statistics quarterly personal well-being estimates show trends in reported life satisfaction across the UK, but they don't prove causality. Good workflow discipline forces that distinction into the draft.

There is a trade-off here. More evidence checks and approval rules add friction at the start. That can feel annoying, especially on a calm Friday afternoon when everyone wants the piece out. Still, ten extra minutes up front is usually cheaper than two extra review rounds, a legal correction, and a founder being dragged into Slack to explain what the article meant to say.

Where systems go wrong first

The first failure is ownership. Teams often describe a courteous sequence of assumptions and call it process. The strategist assumes the editor will spot duplication. The editor assumes legal will be brought in for sensitive wording. Nobody is idle; the workflow is simply vague.

The second failure is review design. Too many organisations run every item through the same path because a single route looks neat in a diagram. In practice, it is wasteful. A low-risk service-page update should not wait behind a claim-heavy article for a regulated market.
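A tiered route can be as small as a function that adds reviewers only when an item needs them. This is a minimal sketch under assumed rules: the role names ("editor", "fact-checker", "legal") and the triggers for each tier are hypothetical, chosen to mirror the service-page versus regulated-article contrast above.

```python
from dataclasses import dataclass, field


@dataclass
class ContentItem:
    title: str
    regulated_market: bool = False
    reputation_sensitive: bool = False
    factual_claims: list[str] = field(default_factory=list)


def review_route(item: ContentItem) -> list[str]:
    """Return the ordered reviewer roles for an item (hypothetical tiers)."""
    route = ["editor"]                   # every item gets an editorial read
    if item.factual_claims:
        route.append("fact-checker")     # claims are checked against sources
    if item.regulated_market or item.reputation_sensitive:
        route.append("legal")            # named approver for sensitive material
    return route
```

Under these assumptions, a routine service-page update goes to the editor alone, while a claim-heavy article for a regulated market passes through all three roles, so neither waits behind the other.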

The third failure is memory. An editorial memory system is not a fancy archive. It is a working record of prior angles, approved claims, source notes, and house positions close to where the work happens. If retrieving a cleared point takes more than two minutes, the memory layer is decorative.
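One way to keep memory close to the work is a small index that a commissioning editor can query by topic before a brief goes out. The sketch below is hypothetical: the record fields follow the list above (prior angles, approved claims, source notes), and it assumes topics are stored as tags and matched case-insensitively.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class MemoryRecord:
    topic_tags: frozenset[str]
    angle: str
    approved_claims: tuple[str, ...]
    source_note: str
    published: date


class EditorialMemory:
    """Hypothetical in-process store of prior angles and cleared claims."""

    def __init__(self) -> None:
        self._records: list[MemoryRecord] = []

    def add(self, record: MemoryRecord) -> None:
        self._records.append(record)

    def prior_coverage(self, tags: set[str], since: date) -> list[MemoryRecord]:
        """Return records sharing any tag, published on or after `since`, newest first."""
        wanted = {t.lower() for t in tags}
        hits = [
            r for r in self._records
            if r.published >= since and wanted & {t.lower() for t in r.topic_tags}
        ]
        return sorted(hits, key=lambda r: r.published, reverse=True)
```

The point of the design is the query, not the storage: if a single call like this cannot surface the angle that ran six weeks ago, the memory layer is not doing its job.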

Last Monday, in a small meeting room in East Sussex, a radiator started clicking like an impatient metronome while we untangled why one team kept rewriting the same introduction. That’s when I realised the issue was not tone guidance at all. Their previous decisions were technically stored, but operationally absent.

The fourth failure is false assurance from tooling. Audit trails matter, but they are not the same as understanding. Automation without measurable uplift is theatre, not strategy.

Who is affected

Editorial leads usually feel it first because they are nearest to the backlog. Then the pain spreads. Marketing operations sees launch dates wobble. Legal gets dragged in too late. In lean UK organisations, one person may be commissioning, editing and publishing in the same afternoon. That can work when the rules are explicit. It becomes brittle when the workflow runs on memory and goodwill.

The effect varies by content type. Low-variance pages and routine updates are good candidates for automation. For example, Boots Magazine saw repetitive editorial tasks cut by up to 90% when low-value mechanics were handled properly. More nuanced material needs slower lanes with named reviewers and explicit evidence thresholds.

A practical marker is turnaround-time variance. If the median path from draft to publish is two days, but one in five items takes seven days or more, you probably have a governance problem, not just a capacity issue.
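The marker can be computed from a plain list of draft-to-publish durations. This sketch assumes durations in days and hard-codes the thresholds from the paragraph above (seven days, one in five); the rule that the median must sit below the slow threshold is an assumed reading of "long tail", not a standard.

```python
from statistics import median


def turnaround_flags(days: list[float], slow_threshold: float = 7.0,
                     slow_share_limit: float = 0.2) -> dict:
    """Summarise draft-to-publish times and flag a long tail (heuristic)."""
    med = median(days)
    slow_share = sum(1 for d in days if d >= slow_threshold) / len(days)
    return {
        "median_days": med,
        "slow_share": slow_share,
        # Typical items move quickly, yet a meaningful share stalls.
        "likely_governance_problem": med < slow_threshold and slow_share >= slow_share_limit,
    }
```

For ten items taking 1, 2, 2, 2, 3, 2, 1, 8, 9 and 2 days, the median is 2 days and 2 of 10 items take seven days or more, so the flag is raised.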

Actions and watchpoints

Start with workflow anatomy, not software demos. Map one live publishing path from signal to published output. Note each hand-off, waiting point, and place where someone must “just know” what happens next.

Then apply four controls. Separate signal capture from publish permission. Create tiered review routes. Build memory into commissioning and review. Instrument the bottlenecks to track rework rate and waiting time.

Watch out for over-automating edge cases or collapsing all exceptions into one senior approver. Keep status labels simple: draft, in review, changes requested, approved, published. I still don’t fully understand why teams insist on inventing 14 status labels for a five-step process, but here’s what I’ve observed: every extra label creates one more place for work to disappear.
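The five labels work best when each one has exactly one owner and only a few legal next steps. Below is a minimal state-machine sketch, assuming hypothetical owner roles ("writer", "reviewer", "publisher", "editorial lead") and a transition table inferred from the labels; it also counts trips back to "changes requested", which is one simple way to track rework rate.

```python
from enum import Enum


class Status(Enum):
    DRAFT = "draft"
    IN_REVIEW = "in review"
    CHANGES_REQUESTED = "changes requested"
    APPROVED = "approved"
    PUBLISHED = "published"


# Hypothetical role that owns the next move in each status.
OWNER = {
    Status.DRAFT: "writer",
    Status.IN_REVIEW: "reviewer",
    Status.CHANGES_REQUESTED: "writer",
    Status.APPROVED: "publisher",
    Status.PUBLISHED: "editorial lead",  # owns any post-publication correction
}

# Assumed transition table: anything not listed is rejected.
ALLOWED = {
    Status.DRAFT: {Status.IN_REVIEW},
    Status.IN_REVIEW: {Status.CHANGES_REQUESTED, Status.APPROVED},
    Status.CHANGES_REQUESTED: {Status.IN_REVIEW},
    Status.APPROVED: {Status.PUBLISHED},
    Status.PUBLISHED: set(),
}


class Piece:
    def __init__(self, title: str) -> None:
        self.title = title
        self.status = Status.DRAFT
        self.rework_loops = 0  # times the piece was sent back for changes

    @property
    def owner(self) -> str:
        return OWNER[self.status]

    def move_to(self, new: Status) -> None:
        if new not in ALLOWED[self.status]:
            raise ValueError(f"{self.status.value} -> {new.value} is not allowed")
        if new is Status.CHANGES_REQUESTED:
            self.rework_loops += 1
        self.status = new
```

Because every status maps to one role, "who owns the next move?" always has a single answer, and an illegal jump (say, from draft straight to published) fails loudly instead of disappearing into an ad hoc label.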

The first gain from better discipline is reduced ambiguity. Teams know what state a piece is in and who owns the next move. Once that’s settled, speed improves because fewer items loop back for preventable reasons.

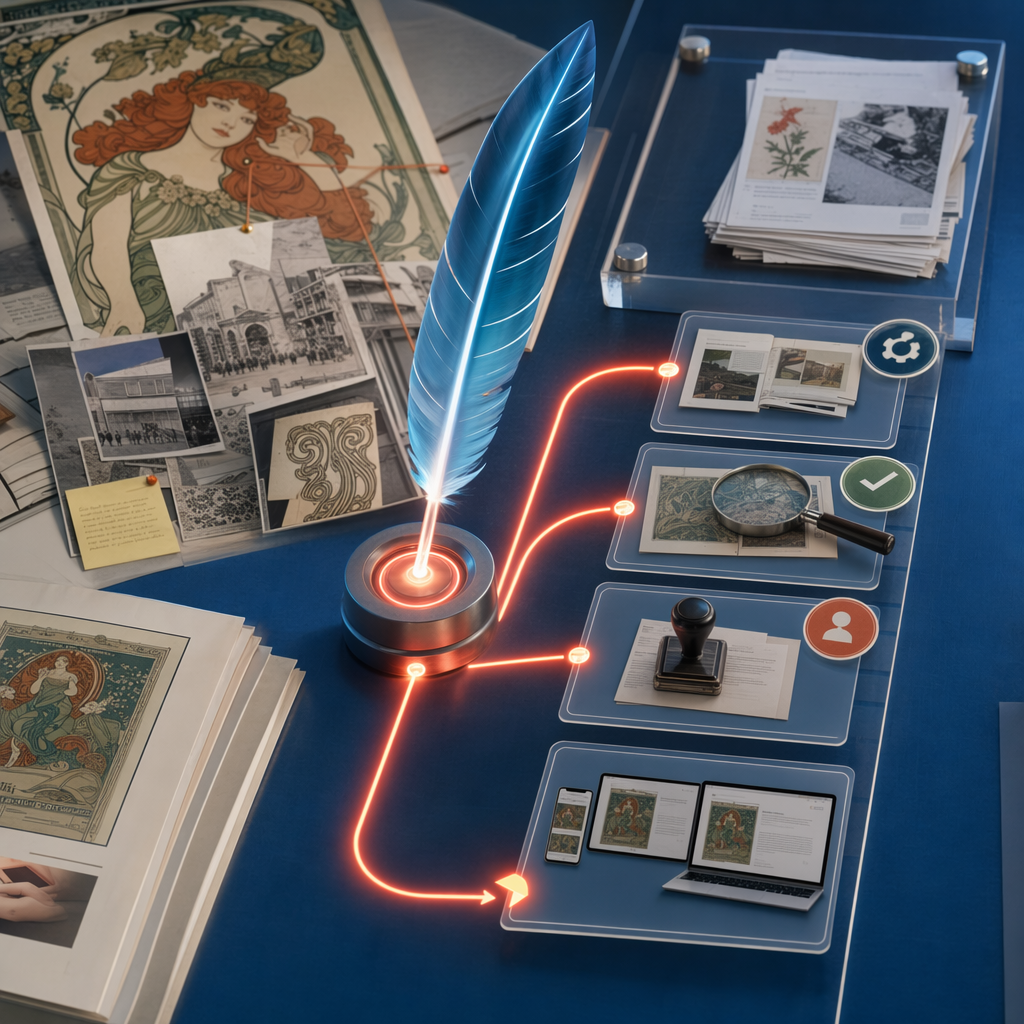

If your team is seeing repeated angles or fuzzy sign-off, map one live publishing workflow through Quill to find where hand-offs leak time and which approval rule should change first. Not more theatre; just a sturdier publishing system.