
Overview

A valid email address only tells you that a mailbox can probably receive a message. It does not tell you whether the person behind it intends to engage, whether the address is likely to support abuse, or whether sending to it will improve acquisition efficiency. That gap matters more now because privacy tools, disposable domains and fragmented user behaviour have made pass/fail validation a poor proxy for commercial quality.

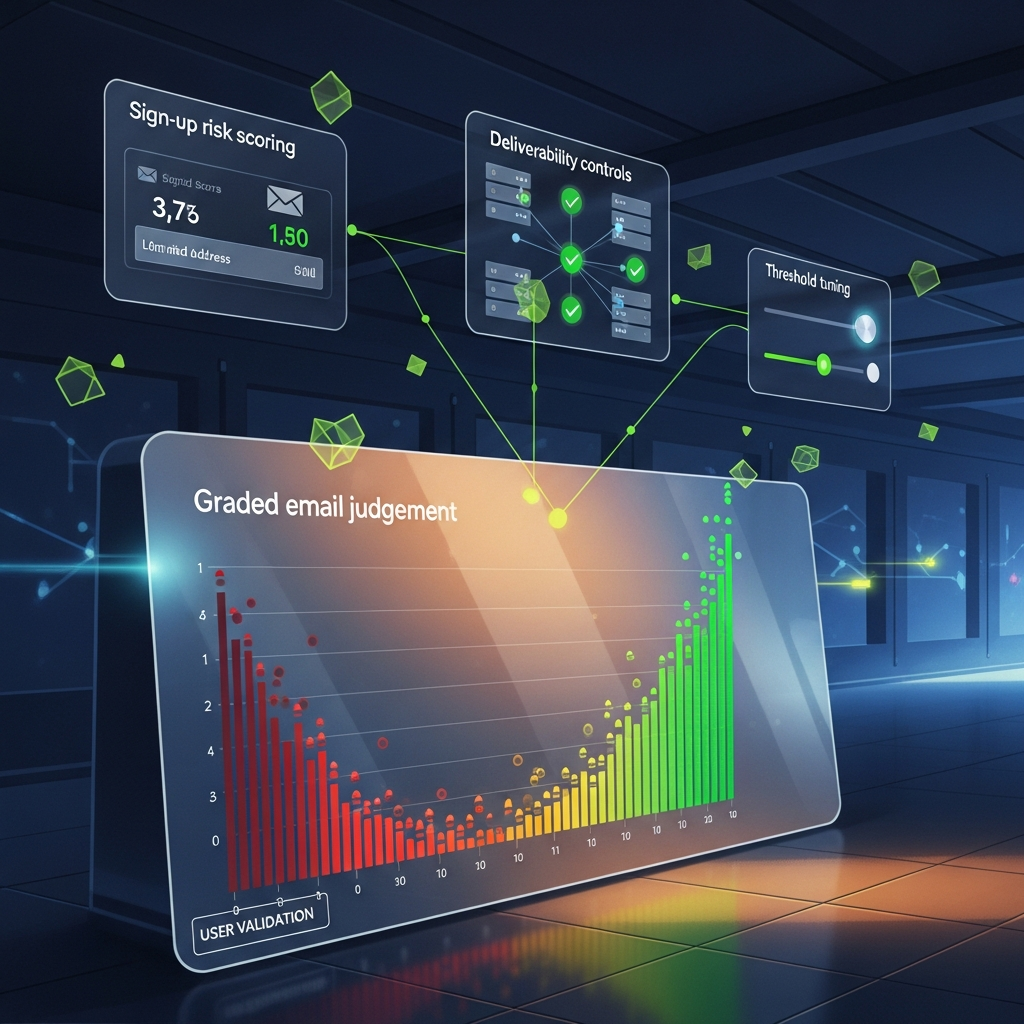

For UK growth, CRM and product teams, the practical move is to replace binary checks with email judgement: a scored, explainable decision model that combines technical validation, domain intelligence and behavioural signals. The trade-off is simple enough: more friction can reduce abuse, but too much can suppress legitimate demand. The job is to test where that line actually sits, not guess over a cup of tea.

Signal baseline

Traditional validation still has a place. Syntax checks and mailbox or server-level checks remain useful for catching obvious rubbish before it enters the system. GOV.UK guidance for driving examiners, updated on 10 March 2026, is a decent analogy: a driving test establishes baseline competence, not whether someone will make good decisions in every real-world condition. Email validation does much the same. It confirms a technical minimum. It does not establish intent, value or likely downstream performance.
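As a rough illustration of that technical minimum, the Python sketch below implements only a deliberately loose syntax check. The function name `passes_syntax_check` and the 254-character cap are assumptions for this example, and the pattern is far looser than full RFC 5322 parsing. Mailbox or MX checks need a DNS lookup and are not shown here.

```python
import re

# Deliberately simple pattern: exactly one "@", no whitespace, and a domain
# containing at least one dot. This is NOT full RFC 5322 parsing.
_BASIC_EMAIL = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

# Assumed practical upper bound for a whole address (hypothetical cut-off).
MAX_ADDRESS_LENGTH = 254


def passes_syntax_check(address: str) -> bool:
    """Return True if the address clears a basic, layer-one syntax check."""
    address = address.strip()
    if len(address) > MAX_ADDRESS_LENGTH:
        return False
    return bool(_BASIC_EMAIL.match(address))
```

Passing this check says nothing about intent or value; it only filters obvious rubbish before anything more expensive runs.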

Last Tuesday, in our London office, we reviewed acquisition data from one campaign with a 99.8% validation pass rate. Sounds tidy. The first-send engagement told a less cheerful story: open rates around 4% and hard bounces edging towards 3% after initial acceptance. The addresses were syntactically sound and apparently deliverable, yet the commercial result was poor. That is the core problem: a valid address can still be the wrong decision.

The logic matters here. Low engagement and later bounces do not prove fraud on their own. They do show that technical validity alone is not enough to protect sender performance, campaign efficiency or offer economics. If a platform cannot explain why it accepted an address, it does not deserve your budget.

What is shifting

Three changes have made email judgement more important than simple validation. First, privacy-preserving relay services have become mainstream. Apple's Hide My Email and similar relay tools generate valid aliases that protect the user while reducing the amount of reputation data available to the business. Blocking those users outright is clumsy and, frankly, a bit of a faff. The better approach is to accept that the email is real while treating it as a different signal class from a long-established primary inbox.
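One way to express "a different signal class" in code is to tag relay aliases rather than reject them. This sketch assumes a small, hand-maintained domain set; `RELAY_DOMAINS`, `SignalClass` and `signal_class` are hypothetical names, the entries are illustrative and non-exhaustive, and some alias services share a domain with ordinary inboxes, so a domain list alone will miss them.

```python
from enum import Enum

# Illustrative, non-exhaustive relay domains; verify against each provider's
# own documentation and your data before relying on them.
RELAY_DOMAINS = frozenset({
    "privaterelay.appleid.com",  # Sign in with Apple private relay addresses
    "mozmail.com",               # Firefox Relay masks
    "duck.com",                  # DuckDuckGo Email Protection
})


class SignalClass(Enum):
    RELAY_ALIAS = "relay_alias"  # real and deliverable, but thin on reputation data
    STANDARD = "standard"


def signal_class(address: str) -> SignalClass:
    """Classify an address by its domain; tagging, not blocking."""
    domain = address.rsplit("@", 1)[-1].strip().lower()
    if domain in RELAY_DOMAINS:
        return SignalClass.RELAY_ALIAS
    return SignalClass.STANDARD
```

Downstream, a relay tag can lower the weight given to missing reputation history instead of adding risk points.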

Second, disposable email infrastructure is cheaper and easier to automate than it was even five years ago. Many services now use clean-looking domains and API-based provisioning, which makes them harder to catch with superficial checks. That creates a direct trade-off: if you keep the sign-up flow frictionless, you preserve conversion; if you ignore disposable-address patterns entirely, you invite list pollution, bonus abuse and misleading acquisition numbers.
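Because disposable services rotate domains and subdomains, an exact-match blocklist is exactly the kind of superficial check that gets beaten. A slightly sturdier, still minimal, approach checks the domain and each of its parent domains. The blocklist entries use the reserved `.example` TLD and are placeholders, not real services; `is_disposable` is a hypothetical helper.

```python
# Placeholder blocklist; real disposable-domain lists change constantly.
DISPOSABLE_BASES = frozenset({"throwaway.example", "tempbox.example"})


def is_disposable(domain: str, blocklist: frozenset = DISPOSABLE_BASES) -> bool:
    """Match a domain or any parent domain against the blocklist, so rotated
    subdomains such as x7k.tempbox.example are still caught."""
    labels = domain.strip().lower().rstrip(".").split(".")
    # Check every suffix: "a.b.c" -> "a.b.c", "b.c", "c"
    return any(".".join(labels[i:]) in blocklist for i in range(len(labels)))
```

A known-list check still cannot recognise a genuinely fresh domain, which is why domain age and behavioural signals carry weight later in the model.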

Third, users now manage email addresses by purpose. Plenty of people keep one inbox for trusted contacts, another for retail and newsletters, and a throwaway route for one-off forms. A valid result tells you none of that. As ETBrandEquity noted on 11 March 2026 in its reporting on AI in marketing, the broader direction of travel is towards more dynamic, data-led decisioning rather than single-signal rules. Fair enough, with a caveat: dynamic only helps if the model is measurable and explainable. Otherwise it is just expensive theatre.

Who is affected

Marketing and CRM teams feel the problem first because poor-quality sign-ups distort campaign reporting and can weaken sender performance over time. A weak cohort can depress opens, clicks and conversion, making decent creative or offer testing look worse than it really is. The trade-off here is uncomfortable but real: looser acquisition rules can flatter top-of-funnel volume while quietly damaging the quality of the list you are trying to build.

Fraud and finance teams see a different pattern. Addresses tied to disposable domains or unusual sign-up behaviour can correlate with promotional abuse, repeat account creation and chargeback risk. Between Q3 and Q4 last year, we worked with an e-commerce client that found accounts created with known disposable domains accounted for more than 60% of friendly-fraud chargebacks while representing less than 5% of the user base. That does not mean every disposable address is fraudulent. It does mean the signal is strong enough to deserve a score, not a shrug.
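To see why those figures justify a score, treat them as population shares and compare rates. Write d for "account uses a known disposable domain" and c for "account raised a friendly-fraud chargeback", so the client's numbers give P(d | c) > 0.60 and P(d) < 0.05. This is a back-of-envelope bound from two rounded figures, assuming both shares describe the same population and period; it shows correlation, not cause.

```latex
\[
\frac{P(c \mid d)}{P(c)} = \frac{P(d \mid c)}{P(d)} > \frac{0.60}{0.05} = 12
\]
\[
\frac{P(c \mid d)}{P(c \mid \neg d)}
  = \frac{P(d \mid c)\,\bigl(1 - P(d)\bigr)}{\bigl(1 - P(d \mid c)\bigr)\,P(d)}
  > \frac{0.60 \times 0.95}{0.40 \times 0.05} = 28.5
\]
```

On those figures, a disposable-domain account was more than 12 times as likely as the average account, and more than 28 times as likely as a non-disposable account, to raise a friendly-fraud chargeback.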

Engineering and product teams inherit the operational mess. Large volumes of low-intent accounts clutter data models, muddy cohort analysis and add needless housekeeping. Storage is cheap until the downstream complexity arrives: messier lifecycle logic, noisier analytics and more edge cases for support. Fancy that: what looked like a simple growth shortcut turns into technical debt.

How to build an operating model

A workable model for email judgement starts with layers, not miracles. Layer one is basic validation: syntax, domain existence and mail exchange (MX) checks. Necessary, not sufficient. Layer two is domain intelligence: whether the domain is known for disposable use, how established it is, and whether its email-related DNS records, such as MX, SPF and DMARC, are configured sensibly. A long-standing company domain and a freshly registered throwaway domain should not receive the same treatment simply because both can accept mail.
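The domain-intelligence layer can be sketched as a function over facts you have already gathered from DNS, registration data and blocklists. The `DomainFacts` fields, the 30-day cut-off and the point weights are all hypothetical; real values should come from your own outcome data. Returning reasons alongside points keeps the score explainable.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DomainFacts:
    """Facts gathered elsewhere (DNS lookups, registration data, blocklists);
    fetching them is out of scope for this sketch."""
    has_spf: bool
    has_dmarc: bool
    age_days: Optional[int]  # None when the registration date is unknown
    on_disposable_list: bool


def domain_risk_points(facts: DomainFacts) -> tuple[int, list[str]]:
    """Return hypothetical layer-two risk points plus human-readable reasons."""
    points, reasons = 0, []
    if facts.on_disposable_list:
        points += 40
        reasons.append("known disposable domain")
    if facts.age_days is not None and facts.age_days < 30:
        points += 20
        reasons.append("domain registered under 30 days ago")
    if not (facts.has_spf or facts.has_dmarc):
        # Low weight: plenty of small, legitimate domains lack these records.
        points += 10
        reasons.append("no SPF or DMARC record")
    return points, reasons
```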

Layer three adds behavioural context. Useful signals include form completion speed, device and browser patterns, IP geography against the declared country, and whether the journey resembles ordinary human behaviour or scripted traffic. None of these signals is decisive on its own. Together they support a more reliable score. That is the practical heart of sign-up risk scoring.
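The same pattern works for behavioural context. In this sketch, `SignupContext`, the three-second completion threshold and the weights are hypothetical, and the headless-user-agent flag is assumed to come from your own user-agent parsing. No single rule here should decide an outcome on its own: VPN users and travellers will trip the country check.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SignupContext:
    form_seconds: float          # time from form render to submit
    ip_country: Optional[str]    # ISO 3166-1 alpha-2 from an IP lookup, if any
    declared_country: str        # country the user selected on the form
    headless_user_agent: bool    # flagged by your own UA parsing (assumed)


def behaviour_risk_points(ctx: SignupContext) -> tuple[int, list[str]]:
    """Return hypothetical layer-three risk points plus readable reasons."""
    points, reasons = 0, []
    if ctx.form_seconds < 3:
        points += 30
        reasons.append(f"form completed in {ctx.form_seconds:.1f}s")
    if ctx.ip_country and ctx.ip_country != ctx.declared_country:
        points += 15
        reasons.append(f"IP country {ctx.ip_country} differs from declared {ctx.declared_country}")
    if ctx.headless_user_agent:
        points += 25
        reasons.append("automation-like user agent")
    return points, reasons
```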

In most teams, the cleanest implementation is a three-way decision model:

- Low risk: accept immediately and continue the normal onboarding journey.

- Medium risk: accept with a lightweight challenge, such as email confirmation or a stepped verification route.

- High risk: block or hold for review where the abuse indicators are materially strong.

The trade-off is always between speed and certainty. Push too hard on blocking and you lose legitimate users, especially privacy-conscious ones or people on smaller domains. Go too soft and you fill the funnel with records that look healthy in a dashboard and underperform everywhere else.
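A minimal sketch of the three-way model, assuming layer points are simply summed and capped at 100. The thresholds of 30 and 70, and the names `combined_score` and `decide`, are placeholders rather than recommended values.

```python
from enum import Enum


class Decision(Enum):
    ACCEPT = "accept"
    CHALLENGE = "challenge"              # e.g. email confirmation or stepped verification
    REVIEW_OR_BLOCK = "review_or_block"  # hold for review, or block if indicators are strong


# Hypothetical thresholds on a 0-100 scale; tune them against observed outcomes.
CHALLENGE_AT = 30
REVIEW_AT = 70


def combined_score(*layer_points: int) -> int:
    """Sum points from each layer and cap at 100 (a simple, assumed scheme)."""
    return min(100, sum(layer_points))


def decide(score: int) -> Decision:
    """Map a combined risk score to one of the three decision bands."""
    if score >= REVIEW_AT:
        return Decision.REVIEW_OR_BLOCK
    if score >= CHALLENGE_AT:
        return Decision.CHALLENGE
    return Decision.ACCEPT
```

For example, `decide(combined_score(40, 10))` lands in the challenge band. Treating the top band as review-or-block, rather than an automatic reject, leaves room for a manual route on borderline cases.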

Actions and watchpoints

Start by auditing what you already capture at sign-up. Most teams have more signal than they use: IP data, user-agent strings, completion timing, referral source and first-session behaviour. The trick is to connect those signals to outcomes that matter. Did the user verify? Did they open the welcome message? Did they purchase, lapse quickly or create support overhead? Email judgement improves when the model is tied to operational feedback, not vendor mythology.
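A quick way to tie the model to operational feedback is to check whether each decision band actually separates outcomes. The `SignupOutcome` fields, including the 30-day purchase flag, are hypothetical stand-ins for whatever your CRM and order data record.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class SignupOutcome:
    decision: str         # "accept", "challenge" or "review_or_block"
    verified: bool
    opened_welcome: bool
    purchased_30d: bool   # hypothetical 30-day purchase flag


def outcome_rates(rows):
    """Share of each outcome per decision band."""
    totals = defaultdict(lambda: {"n": 0, "verified": 0, "opened": 0, "purchased": 0})
    for r in rows:
        t = totals[r.decision]
        t["n"] += 1
        t["verified"] += r.verified        # bools count as 0 or 1
        t["opened"] += r.opened_welcome
        t["purchased"] += r.purchased_30d
    return {
        band: {k: t[k] / t["n"] for k in ("verified", "opened", "purchased")}
        for band, t in totals.items()
    }
```

If the accept and challenge bands show similar verification and purchase rates, the thresholds are not doing much work and need revisiting.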

Between last Monday and Wednesday, I tightened thresholds hard on a side project and cut bot sign-ups by 90%. Lovely for about half an hour. Then we found a 15% drop in prospects that looked genuine, many from smaller corporate domains with less obvious reputation history. We fixed it with a simple review route for mid-range scores instead of a hard block. Slightly more manual work, yes, but far less collateral damage. That is usually the better trade: a review queue costs time; a blanket reject costs opportunities you rarely get back.
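One way to see that collateral damage before it ships is to replay candidate cut-offs against a labelled sample and report both sides of the trade. `threshold_tradeoff` and the `(score, is_bot)` input shape are hypothetical; the labels would come from manual review of past sign-ups.

```python
def threshold_tradeoff(samples, block_at):
    """samples: iterable of (score, is_bot) pairs from a labelled review set.
    Returns (share of bots blocked, share of genuine users blocked)."""
    samples = list(samples)  # allow generators; we iterate twice
    bots = [score for score, is_bot in samples if is_bot]
    genuine = [score for score, is_bot in samples if not is_bot]
    bots_blocked = sum(s >= block_at for s in bots) / len(bots) if bots else 0.0
    genuine_blocked = sum(s >= block_at for s in genuine) / len(genuine) if genuine else 0.0
    return bots_blocked, genuine_blocked
```

Scores that sit between a cut-off that blocks too many genuine users and one that lets too many bots through are natural candidates for the review route rather than a hard block.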

There is another watchpoint worth stating plainly. Explainability is not an optional extra. If your scoring system cannot tell your team why an address landed in accept, review or reject, governance becomes guesswork. In regulated or reputation-sensitive environments, that is not clever automation; it is a procurement mistake waiting to happen.
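One practical form of explainability is an auditable record written for every decision, carrying the score, the band and the reasons behind it. The field names and the `model_version` string are hypothetical. The record stores an internal sign-up ID rather than the email address, which keeps the audit log lighter on personal data.

```python
import json
from datetime import datetime, timezone


def decision_record(signup_id, score, decision, reasons, model_version):
    """Serialise one scoring decision with its reasons so it can be audited later."""
    return json.dumps(
        {
            "signup_id": signup_id,
            "score": score,
            "decision": decision,
            "reasons": list(reasons),
            "model_version": model_version,
            "decided_at": datetime.now(timezone.utc).isoformat(),
        },
        sort_keys=True,
    )
```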

What good looks like for UK teams

A sound UK operating model for email judgement is privacy-aware, measurable and easy to challenge. It does not punish users for sensible privacy behaviour, and it does not pretend every valid inbox deserves the same treatment. It gives growth teams room to ship quickly, fraud teams a clearer early-warning signal, and product teams data they can actually trust.

The best systems are usually the least dramatic. They score, explain, test and improve. They do not promise clairvoyance. They help teams make better decisions with the evidence available, then refine those decisions against observed outcomes. That is how you build a healthier database and a saner acquisition engine without turning sign-up into a bureaucratic obstacle course.

If your growth or CRM team wants to see what this looks like in practice, bring one live acquisition journey and we can walk through how EVE would score it, where it would add friction, and where it would stay out of the way. You will come away with a clearer view of the trade-offs in your current flow and a practical next step to test, not just another slide deck. Cheers.