
Created by Matt Wilson · Edited by Marc Woodhead · Reviewed by Marc Woodhead

What the ops team should tune next week in an email judgement engine

The short answer: do not make the engine harsher just because risk exists. In EVE, the next useful change is a three-way model, Pass, Slow, and Stop, with named owners, review rules, and a short evidence loop. The point is not stricter validation for its own sake. It is better sign-up risk scoring, clearer deliverability controls, and fewer good users caught by a blunt rule.

That is the tension. List growth wants less friction. Deliverability wants fewer poor records. People often frame those aims as a straight fight, then default to a binary valid or invalid rule and call it discipline. Usually it is just a neater way to hide the trade-off. A working email judgement engine should decide what can pass now, what should pause for review, and what needs to stop before list quality starts to drift.

This note is about next week’s tuning work in EVE. The question is not whether risk exists. It does. The question is what changes first, who owns each move, and which checkpoints tell you the settings are helping rather than adding noise. If your plan has no named owners and dates, it is not a plan. Fix it.

What is being decided

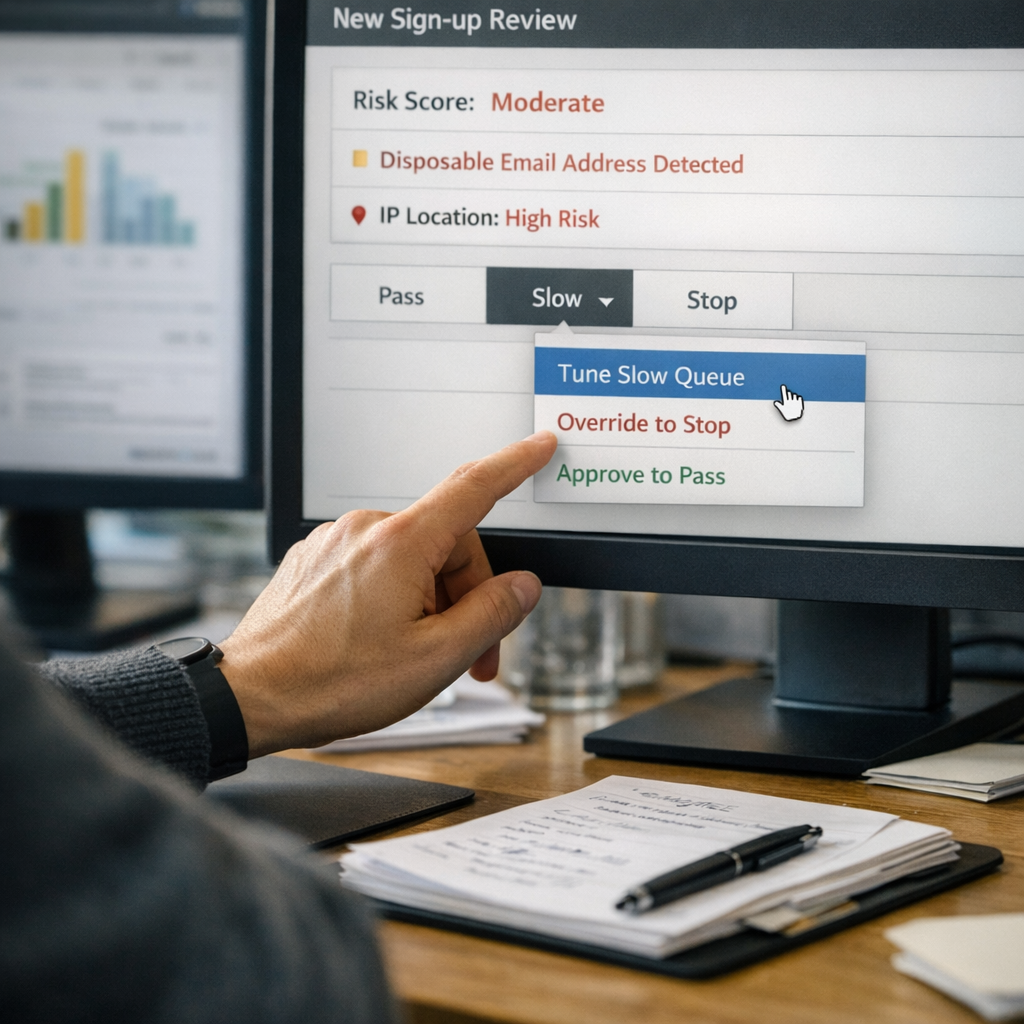

The immediate change is a move from binary validation to a three-way decision model: Pass, Slow, and Stop. Operationally, that means a sign-up risk scoring rule set that can treat a newly created address on a high-velocity promotion differently from a lower-risk address entering a standard CRM flow. Same channel, different consequence.

The earlier instinct here was to tighten Stop and clean the data fast. That is the tidy answer, not the useful one. EVE's purpose points the other way: it is there to make explainable validation decisions in real time, with the reasoning visible to the team. So the next tuning priority is not a harsher stop line by default. It is a smarter Slow layer. Owner for threshold definition: CRM Lead, with support from the Fraud Analyst. Date for first threshold agreement: end of this week. Acceptance criteria: documented score bands, an override rule, and a review SLA the ops team can actually keep.

What risk or deliverability issue needs controlling

The practical risk is not one bad address. It is what blunt handling does at scale. Static validation looks clean because the rule is easy to explain. The cost lands later. Technically valid but poor-quality addresses still enter the list, while odd but legitimate addresses get blocked at the door. That is how mailbox-quality drift starts and why support pressure turns up in the wrong place.

A graded email judgement engine is less tidy on paper and much easier to run properly. You can see why a record slowed or stopped, log the reason code, record an override, and tune the logic from evidence rather than instinct. That is the more useful comparison here: governed validation with override policy versus silent rejects and slow list damage.

The current working baseline is testable: scores below 70 pass, scores from 70 to 85 slow for review, and scores above 85 stop. Those score bands are not final truth. They are a starting point, and a better one than arguing in the abstract about whether growth or deliverability matters more this week.
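The baseline bands can be written down as a small function so the boundaries are unambiguous. This is a minimal sketch, not EVE's API: the names `Decision` and `decide` are hypothetical, and it assumes a risk score where higher means riskier, with 70 and 85 both falling inside the Slow band as the text implies.

```python
from enum import Enum


class Decision(Enum):
    PASS = "pass"
    SLOW = "slow"
    STOP = "stop"


# Baseline bands from the text: below 70 passes, 70 to 85 slows, above 85 stops.
SLOW_FROM = 70
STOP_ABOVE = 85


def decide(risk_score: float,
           slow_from: float = SLOW_FROM,
           stop_above: float = STOP_ABOVE) -> Decision:
    """Map a risk score to Pass, Slow, or Stop (hypothetical helper)."""
    if slow_from > stop_above:
        raise ValueError("slow_from must not exceed stop_above")
    if risk_score > stop_above:
        return Decision.STOP
    if risk_score >= slow_from:
        return Decision.SLOW
    return Decision.PASS
```

Keeping the two boundaries as parameters means a threshold change after the first cohort review is a one-line change that can be recorded in the change log.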

| Control factor | Static validation | Graded judgement in EVE |
|---|---|---|
| False positives | Higher risk of rejecting unusual but genuine addresses. | Medium-risk cases can be held and reviewed instead of blocked outright. |
| Deliverability control | Limited; technically valid but poor-quality records still pass. | Stronger; risky patterns can slow or stop before list damage spreads. |
| Operational load | Low upfront effort, more downstream clean-up. | More setup discipline, better traceability and fewer blunt interventions. |
| Auditability | Weak; little context beyond pass or fail. | Clearer; the team can log reason codes, overrides, and outcomes. |

The obvious objection is queue pressure. A Slow queue can become a bottleneck if the hold rules are vague or nobody owns the work. Better to admit that now. The mitigation is plain enough: set a review SLA, cap manual review volume, and allow low-confidence holds to auto-release after a defined period where policy allows it.
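The three mitigations above can be sketched as a single daily triage step. Everything here is an assumption for illustration: the `Hold` record, the `triage_holds` function, the cap of 50, the 24-hour release window, and the reading of "low-confidence" as a score in the lower part of the Slow band (below 75). Real values belong in the agreed Slow policy.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Hold:
    email: str
    risk_score: float
    held_at: datetime


def triage_holds(holds, now, daily_cap=50, low_confidence_below=75.0,
                 auto_release_after=timedelta(hours=24)):
    """Split the Slow queue into auto-released, review-today, and deferred.

    All defaults are placeholder values, not recommended settings.
    """
    released, pending = [], []
    for hold in holds:
        aged_out = now - hold.held_at >= auto_release_after
        # Only low-confidence holds may leave the queue without review.
        if aged_out and hold.risk_score < low_confidence_below:
            released.append(hold)
        else:
            pending.append(hold)
    # Review the riskiest holds first, up to the daily cap.
    pending.sort(key=lambda h: h.risk_score, reverse=True)
    return released, pending[:daily_cap], pending[daily_cap:]
```

The deferred list is the number to watch: if it grows day on day, the cap, the hold rules, or the review SLA needs to change.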

Where EVE fits best

EVE fits where a team needs real-time email judgement and does not want to choose between weak validation and blanket blocking. Its value is not that it makes risk disappear. It makes the decision path visible. That matters most in sign-up journeys where acquisition pressure, fraud signals, and deliverability exposure are all present at once.

In that setting, the right question is whether next week's traffic looks more like high-volume promotion or more like hostile mailbox activity. Those are different operating conditions. They should not be averaged into one mushy threshold. If the coming traffic is promotion-heavy, bias the Slow logic towards temporary holds on clustered risk rather than broad stopping. If mailbox creation patterns begin to look actively hostile, tighten Stop on the signatures causing damage. Different signals, different consequences.

For teams weighing alternatives, this is the side of the comparison that matters more: real-time email judgement versus static regex or allow-list checks. Regex and allow-lists are useful for narrow formatting or known-domain rules. They are weak substitutes for explainable validation decisions when the actual issue is delivery risk, campaign velocity, or suspicious sign-up patterns. More detail on the product is available under EVE and Holograph solutions.

What to tune next week

Next week should stay narrow. Tune the controls the ops team can assess inside one working week, not a grand redesign. Two things matter first: threshold placement and queue handling. If those remain loose, everything after that turns into opinion.

| Time window | Action | Owner | Acceptance criteria |
|---|---|---|---|
| Mon-Tues | Review the last 30 days of hard bounces, spam complaints, and obvious low-quality sign-ups to isolate repeat patterns such as disposable domains, role-based addresses, or velocity spikes. | Deliverability Specialist | Pattern list agreed and logged for initial Stop tuning; change log updated. |
| Wed | Apply the initial Stop threshold in EVE on non-critical sign-up journeys and monitor rejection behaviour for 24 hours. | CRM Lead | Rejection behaviour documented against the test flow and added to the change log for review. |
| Thurs | Define Slow criteria for medium-risk records, including the reason codes that trigger review and the conditions for automatic release or override. | Fraud Analyst | Slow policy published with named owner, review SLA, and override notes. |
| Fri | Launch the Slow queue process and brief ops on review handling, suppression rules, and exception logging. | Head of Operations | Manual review queue volume tracked daily and exceptions recorded. |
| Mon next | Compare the first cohort results across Pass and approved Slow records, using early engagement and complaint signals to decide whether thresholds hold or move. | Growth Manager | Cohort comparison completed, with threshold recommendation logged and no decision taken on a single metric alone. |

The plan is short-cycle on purpose. Long tuning loops are often just indecision with nicer formatting. It is also a bit tight on time, so keep the test scope narrow. Week one is not for solving every edge case. It is for reducing the most expensive mistakes first.

Operational impacts and risks

The main operational impact is ownership, not technology. A Slow queue without an owner becomes a holding pen. A Stop rule without a review trail becomes folklore. EVE can keep the reasoning visible, but that only helps if the surrounding process is disciplined enough to use it. Owner, date, acceptance criteria, done.
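A review trail only exists if each decision and each override is written down in the same shape. The sketch below shows one possible record. The field names, the `VELOCITY_SPIKE` style of reason code, and the helper functions are all hypothetical and do not describe how EVE stores its logs.

```python
import json
from dataclasses import asdict, dataclass, field, replace
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class DecisionRecord:
    email: str
    risk_score: float
    decision: str                      # "pass", "slow", or "stop"
    reason_codes: List[str]            # e.g. ["VELOCITY_SPIKE"] (illustrative)
    decided_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())
    overridden_from: Optional[str] = None
    override_by: Optional[str] = None
    override_note: Optional[str] = None


def apply_override(record, new_decision, owner, note):
    """Return a new record; the original decision stays visible in it."""
    if not owner or not note:
        raise ValueError("an override needs a named owner and a note")
    return replace(record, decision=new_decision,
                   overridden_from=record.decision,
                   override_by=owner, override_note=note)


def to_log_line(record):
    """Serialise one record as a JSON line for the change log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Refusing an override without an owner and a note is the code-level version of the rule above: owner, date, acceptance criteria, done.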

Risk one is false positives on legitimate entrants, especially in campaigns that naturally attract newer mailbox patterns. Mitigation: start with conservative thresholds, use them first on non-critical journeys, and review the highest-risk segment of the Slow queue in the first few days.

Risk two is review backlog. Mitigation: set a daily cap, define an auto-release window where appropriate, and keep suppression and override logs for traceability.

Risk three is over-reading one measure. Mitigation: assess quality in combination, not in isolation. Watch complaints, hard bounces, early opens, queue volume, and override rate together. Where campaign analysis matters, assess quality at ad level and spend level as well, rather than pretending one operational metric settles the argument.
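One way to make "no decision on a single metric" enforceable is to require at least two signals to agree before a threshold change is recommended. The sketch below compares an approved Slow cohort against the Pass cohort. The `CohortMetrics` fields, the 10% relative tolerance, and the two-signal rule are assumptions for illustration only. Queue volume and override rate are process measures rather than cohort measures, so they are left out here and should be read alongside the result.

```python
from dataclasses import dataclass


@dataclass
class CohortMetrics:
    complaint_rate: float
    hard_bounce_rate: float
    early_open_rate: float


def signals_worse(baseline, candidate, tolerance=0.10):
    """Name the metrics where candidate is worse by more than the tolerance."""
    worse = []
    # Lower is better for complaints and hard bounces.
    for name in ("complaint_rate", "hard_bounce_rate"):
        if getattr(candidate, name) > getattr(baseline, name) * (1 + tolerance):
            worse.append(name)
    # Higher is better for early opens.
    if candidate.early_open_rate < baseline.early_open_rate * (1 - tolerance):
        worse.append("early_open_rate")
    return worse


def recommend(baseline, candidate, min_signals=2):
    """Recommend a threshold review only when several signals agree."""
    worse = signals_worse(baseline, candidate)
    if len(worse) >= min_signals:
        return "review thresholds", worse
    return "hold thresholds", worse
```

The returned list of worse signals is what goes in the change log next to the recommendation, so the reasoning stays visible.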

Recommendation and next step

Recommendation: proceed with the 7-day tuning plan and use the current score bands as a controlled baseline, not as settled truth. Keep Stop above 85, keep Slow at 70 to 85 for the first pass, and review the evidence after the first live cohort instead of shifting thresholds halfway through. That gives the team a workable test, a measurable trail, and a cleaner decision at the end of the week.

The next move sits with the Head of Operations: approve the plan and confirm owners before work starts next week. If you want EVE set up with thresholds, queue rules, and override logging that your team can actually run without guesswork, contact us. We will walk through the risks, name the owners, and get the path to green properly sorted.

If this is on your roadmap, EVE can help you run a controlled pilot, measure the outcome, and scale only when the evidence is clear.