Delivery assurance note

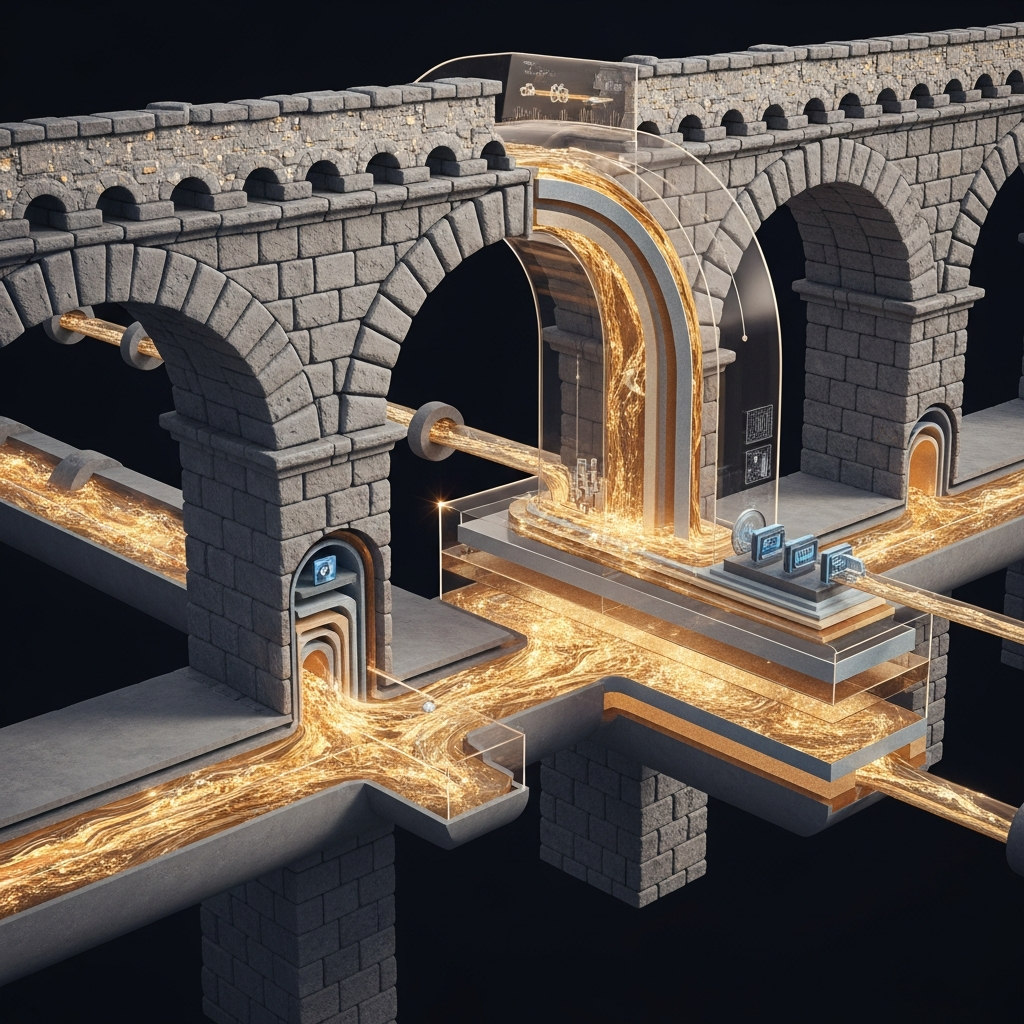

UK retail teams rarely lack data. What they lack is a reliable way to join it, govern it and activate it in time. Signals sit across EPOS, ecommerce, CRM, loyalty and media platforms, then arrive late, conflict with each other or lack the lineage needed for confident reporting.

External indicators such as Bank of England rate signalling, ONS well-being data and short-term local conditions can help explain shifts in demand, but they do not replace first-party behaviour. The practical test is simpler than most dashboards suggest. If a priority audience still takes days to build, or nobody owns the fix, the problem is operational.

Context: Reading the signals in a complex market

The backdrop for UK retail is mixed. On 20 March 2026, the Financial Times reported that the Bank of England had made the sharpest shift in tone among major central banks after the energy shock. Tougher rate rhetoric tends to feed into household caution, category mix and promotional sensitivity, so it is a prompt to watch value perception, margin pressure and response rates more closely.

The ONS adds another useful layer. Quarterly personal well-being estimates track life satisfaction, happiness, anxiety and whether people feel the things they do are worthwhile, while local authority series show regional variation. Useful, yes. Predictive at customer level, no. These are planning signals, not targeting inputs.

Short-term local conditions can matter too. On 20 March 2026, East Sussex was around 4°C with partly cloudy conditions and winds near 13 mph, while Abbey Mead, Surrey was closer to 8°C. That can affect footfall, trip timing and convenience purchasing in the same trading week.

The difficulty starts when retailers try to read those external signals alongside disconnected internal ones. Store transactions have one owner, web analytics another, loyalty data another again. When feeds do not reconcile, teams get partial answers dressed up as complete ones.

What is changing: From channel reporting to customer reporting

The shift is from channel reporting to customer reporting. Customers do not shop in tidy reporting lines. Someone browses on mobile on Tuesday, checks reviews on a laptop that evening and buys in-store on Saturday. Measure each touchpoint in isolation and both marketing contribution and customer value are easy to misread.

That is why a single customer view now behaves like an operating requirement rather than a technical aspiration. Without it, segmentation slows, suppression logic gets messy and attribution skews towards whichever platform reports fastest.

A useful checkpoint is blunt enough to work. If a team cannot build a cross-channel audience inside one working day, the model is under strain. If matched-customer rate across store, ecommerce and CRM sits below an agreed threshold, say 80% for the use case in scope, performance confidence should be marked amber until fixed.
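The checkpoint above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation. It assumes that "matched" means a customer record is present in all three sources (store, ecommerce and CRM), which is only one possible definition, and the names `CustomerRecord`, `matched_customer_rate` and `confidence_status` are hypothetical. The 80% threshold and the one-working-day limit come from the text and should be replaced with whatever the team agrees for the use case in scope.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CustomerRecord:
    """Hypothetical record: flags show which sources hold this customer."""
    customer_id: str
    in_store: bool
    in_ecommerce: bool
    in_crm: bool


def matched_customer_rate(records):
    """Share of customers found in all three sources (assumed definition)."""
    if not records:
        return 0.0
    matched = sum(
        1 for r in records if r.in_store and r.in_ecommerce and r.in_crm
    )
    return matched / len(records)


def confidence_status(rate, audience_build_days,
                      rate_threshold=0.80, max_build_days=1.0):
    """Return 'amber' if either checkpoint fails, otherwise 'green'."""
    if rate < rate_threshold or audience_build_days > max_build_days:
        return "amber"
    return "green"


if __name__ == "__main__":
    sample = [
        CustomerRecord("c1", True, True, True),
        CustomerRecord("c2", True, False, True),
        CustomerRecord("c3", True, True, True),
        CustomerRecord("c4", False, True, True),
    ]
    rate = matched_customer_rate(sample)
    print(f"matched-customer rate: {rate:.0%}")
    print("status:", confidence_status(rate, audience_build_days=5))
```

Keeping the thresholds as parameters rather than constants makes it easier to agree a different bar for each use case without changing the logic.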

Implications: The operational risks of a disconnected view

The first risk is speed. Manual joins eat time, pushing launches back and narrowing the window for testing, optimisation and stock-led decisions. If a segmented campaign takes five days to prepare instead of one, some of the commercial opportunity expires before the work is live.

The second is weaker judgement. A paid social campaign can look soft if reporting captures online conversion while store purchases remain unlinked. Equally, a drop in loyalty scans does not automatically mean lower engagement. It may mean customers are moving between channels faster than the measurement model can follow. Those explanations lead to very different actions.

The third is governance. When reports disagree, confidence drops because metric definitions are unstable. The remedy is not dramatic. Name one source of truth for each board-level measure, assign an owner, state the refresh date and keep a dated change log. If customer lifetime value is in the board pack, document which transactions are included, how returns are treated and when the model refreshes.

There is also a clear limit on how external context should be used. ONS well-being data may help explain regional softness or resilience, but it should not be used to claim that a specific campaign caused individual purchasing behaviour. Better practice is to combine external context with first-party signals, then test whether behaviour changed after the activity, where it changed and whether any lift clears a sensible threshold against control or baseline performance.
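As a rough sketch of the "lift against control" check described above, the snippet below compares conversion rates for an exposed group and a control group and tests the relative lift against a minimum threshold. The function names, the 5% default threshold and the sample counts are all illustrative assumptions rather than recommended values. A point estimate like this is not a significance test, so in practice it would sit alongside a proper statistical check and a sensible sample size.

```python
def conversion_rate(conversions, customers):
    """Conversions divided by customers in the group."""
    if customers <= 0:
        raise ValueError("customers must be positive")
    return conversions / customers


def relative_lift(test_rate, control_rate):
    """Relative change of the test rate over the control rate."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (test_rate - control_rate) / control_rate


def lift_clears_threshold(test_conversions, test_customers,
                          control_conversions, control_customers,
                          min_lift=0.05):
    """True if relative lift meets or exceeds min_lift (assumed 5% default)."""
    test_rate = conversion_rate(test_conversions, test_customers)
    control_rate = conversion_rate(control_conversions, control_customers)
    return relative_lift(test_rate, control_rate) >= min_lift


if __name__ == "__main__":
    # Illustrative counts only, not real campaign data.
    lift = relative_lift(conversion_rate(120, 2000), conversion_rate(100, 2000))
    print(f"relative lift: {lift:.1%}")
    print("clears threshold:", lift_clears_threshold(120, 2000, 100, 2000))
```

Running the same check by region or channel is one way to answer "where it changed" as well as "whether it changed".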

How to read the evidence with checkpoints

Context is not proof. Correlation is not proof either. A cooler week, tougher consumer sentiment and a promotion landing late may all exist at once. The job is to separate signal from story before the board deck gets ahead of the facts.

The minimum useful scorecard should cover operational readiness as well as commercial output. Four measures usually earn their keep:

- audience build time

- campaign launch lead time

- matched-customer rate across channels

- active loyalty member rate

Each needs an owner, a refresh date and acceptance criteria. Otherwise teams end up arguing over interpretation rather than fixing the process.

For example, if audience build time is five working days, the target might be one day by 30 April 2026, owned jointly by the CRM lead and data engineering lead. Acceptance criteria would include automated refresh, duplicate handling rules and an audience count that reproduces within an agreed tolerance. If matched-customer rate is 68%, a credible first step may be 80% for the first priority use case, with known gaps logged rather than hidden.
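One way to keep owner, target and acceptance criteria attached to each measure is a small structured record. The sketch below is an assumption about how a team might model this, not a prescribed schema. The class name `ScorecardMeasure` and its fields are hypothetical, and the values are taken from the example above. The matched-customer rate has no target date in that example, so the date is left optional.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class ScorecardMeasure:
    """Hypothetical scorecard entry for one operational measure."""
    name: str
    owners: List[str]
    current: float
    target: float
    lower_is_better: bool
    target_date: Optional[date] = None
    acceptance_criteria: List[str] = field(default_factory=list)

    def target_met(self) -> bool:
        """Compare current value with target in the right direction."""
        if self.lower_is_better:
            return self.current <= self.target
        return self.current >= self.target

    def is_overdue(self, today: date) -> bool:
        """Overdue only if a date is set, has passed, and target is unmet."""
        if self.target_date is None:
            return False
        return today > self.target_date and not self.target_met()


build_time = ScorecardMeasure(
    name="audience build time (working days)",
    owners=["CRM lead", "Data engineering lead"],
    current=5.0,
    target=1.0,
    lower_is_better=True,
    target_date=date(2026, 4, 30),
    acceptance_criteria=[
        "automated refresh",
        "duplicate handling rules",
        "audience count reproduces within agreed tolerance",
    ],
)

match_rate = ScorecardMeasure(
    name="matched-customer rate (%)",
    owners=[],  # owner not stated in the example; left empty on purpose
    current=68.0,
    target=80.0,
    lower_is_better=False,
)

if __name__ == "__main__":
    for measure in (build_time, match_rate):
        print(measure.name, "| target met:", measure.target_met())
```

An empty owner list is deliberately visible here: a measure without a named owner is exactly the gap the scorecard is meant to expose.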

Actions to consider: A phased path to joined-up data

A sensible route forward is phased, testable and tied to actual decisions.

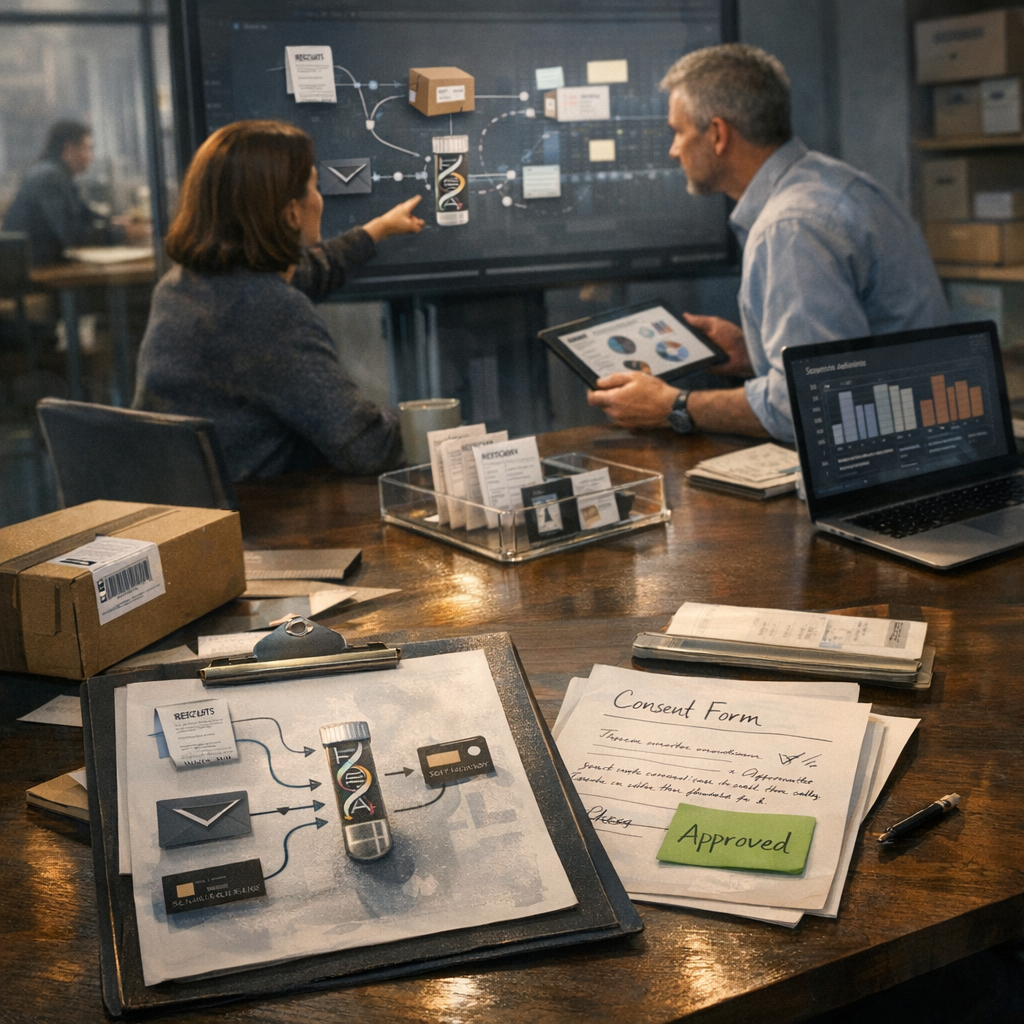

1. Audit the customer data estate. Owner: Head of Marketing. Target date: end of the current quarter. Acceptance criteria: a one-page inventory of customer data sources, system owners, update frequency, consent status and known quality issues.

2. Define the top three decisions that need better evidence. Owner: Marketing Director, with Finance input. Target date: within 10 working days of the audit. Acceptance criteria: three agreed questions linked to KPIs, such as omnichannel customer lifetime value, reactivation cost or time to launch for segmented campaigns. If no decision changes when the metric moves, drop it.

3. Map only the minimum data needed for the first use case. Owner: Data or IT lead. Target date: two weeks after sign-off. Acceptance criteria: source-to-metric mapping, match logic, known gaps, dependency list and delivery sequence. Start with one use case where value and feasibility are both clear.

4. Put checkpoints against operational movement. Owner: Programme lead. Target date: agreed in sprint planning and reviewed weekly. Acceptance criteria: improvement in at least one live measure, such as reducing audience build time from five days to one, or increasing matched-customer rate from 68% to 80% for the pilot segment. If the metric does not move, the change has not landed.

5. Keep governance visible. Owner: Data governance lead or equivalent. Target date: alongside pilot launch. Acceptance criteria: named metric owners, dated definitions, opt-out handling and a live change log for logic updates. If you are collecting email addresses or other customer data, keep forms short and make opt-out choices clear.

What good looks like next

The end state is not perfection. It is a working model that joins customer records well enough to support segmentation, measures performance in the way people actually shop and gives leadership a board-ready view of what changed, why it matters and what happens next. Faster audience creation, cleaner attribution and fewer expensive guesses are sensible outcomes.

That is the decision hiding inside this data pulse. Retail teams do not usually fail for lack of signals. They stall because identity, consent, ownership and activation readiness are too weak to turn signals into usable audiences with confidence. DNA is built as a governed customer-data and activation layer to close that gap, joining identity, consent, segmentation and activation readiness so teams can move with fewer delays and clearer lineage.

If your team is trying to connect online, in-store and loyalty signals without tying itself in knots, ask for a joined-up data workshop with DNA.

If this is on your roadmap, DNA can help you run a controlled pilot, measure the outcome, and scale only when the evidence is clear.