When content volume climbs, teams lose control by inches, not in one dramatic crash. The brief is in one system, source notes in another, approvals happen in chat, and published copy seems fine until a claim is challenged a fortnight later. This is where editorial workflow automation becomes either useful plumbing or expensive theatre. A governed editorial memory shows what was written, what evidence supported it, who approved it, and when it needs checking. If a platform cannot explain its decisions, it does not deserve your budget.

Signal baseline

Tracing live workflows makes one thing clear: scaling problems in content are usually memory problems dressed as productivity problems. Important decisions live in people's heads, not in the system. The wider market signals support this. March 2026 reporting from Yahoo Finance on firms like Snowflake, Nvidia and ServiceNow shows a clear trend: organisations are buying systems that assume more content, more routing and more decision support. But market excitement is not operational value. Automation without measurable uplift is theatre, not strategy.

For editorial leaders, the baseline is plain. Once output moves beyond a handful of articles a week, unmanaged memory becomes a risk. Source reuse becomes patchy, approval rationale disappears, and claims are rewritten from scratch. The trade-off is speed versus traceability. Teams chase speed because the pain is visible, while traceability feels like a faff until legal asks for substantiation or a stale claim needs correcting across 40 pages.

What is shifting

The shift is not just about generating more copy; publishing systems are being tied more closely to decision systems. In practice, three things are changing. First, the unit of work is the asset plus its evidence, approval state and publication history. Second, review is becoming tiered. A routine product note should not sit in the same queue as a regulated market claim. Third, memory has to outlast the individual author. If someone on annual leave takes the logic of an approved phrase with them, the process is decorative.

This is where governed recall earns its keep. It routes low-risk work quickly while forcing higher-risk work through the right eyes. The trade-off is simple: apply heavy controls to everything and publishing slows to a crawl; apply no meaningful controls and rework piles up later.
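
As a rough illustration of tiered review, the routing rule can be written as a lookup from risk tier to required reviewers. This is a minimal sketch, not a description of any particular platform: the tier names, the reviewer roles and the `required_reviewers` function are all assumptions made for the example.

```python
from enum import Enum


class Risk(Enum):
    """Hypothetical risk tiers; real tiers would come from your own policy."""
    ROUTINE = "routine"      # e.g. a routine product note
    ELEVATED = "elevated"    # e.g. copy that reuses a sensitive claim
    REGULATED = "regulated"  # e.g. a regulated market claim


# Assumed mapping from tier to the reviewers who must sign off, in order.
REVIEW_TIERS = {
    Risk.ROUTINE: ["editor"],
    Risk.ELEVATED: ["editor", "brand"],
    Risk.REGULATED: ["editor", "brand", "legal"],
}


def required_reviewers(risk: Risk) -> list[str]:
    """Return a copy of the reviewer chain for the given risk tier."""
    return list(REVIEW_TIERS[risk])
```

Keeping the mapping in one table makes the trade-off visible: adding a reviewer to the routine tier slows every item, so each entry has to justify itself.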

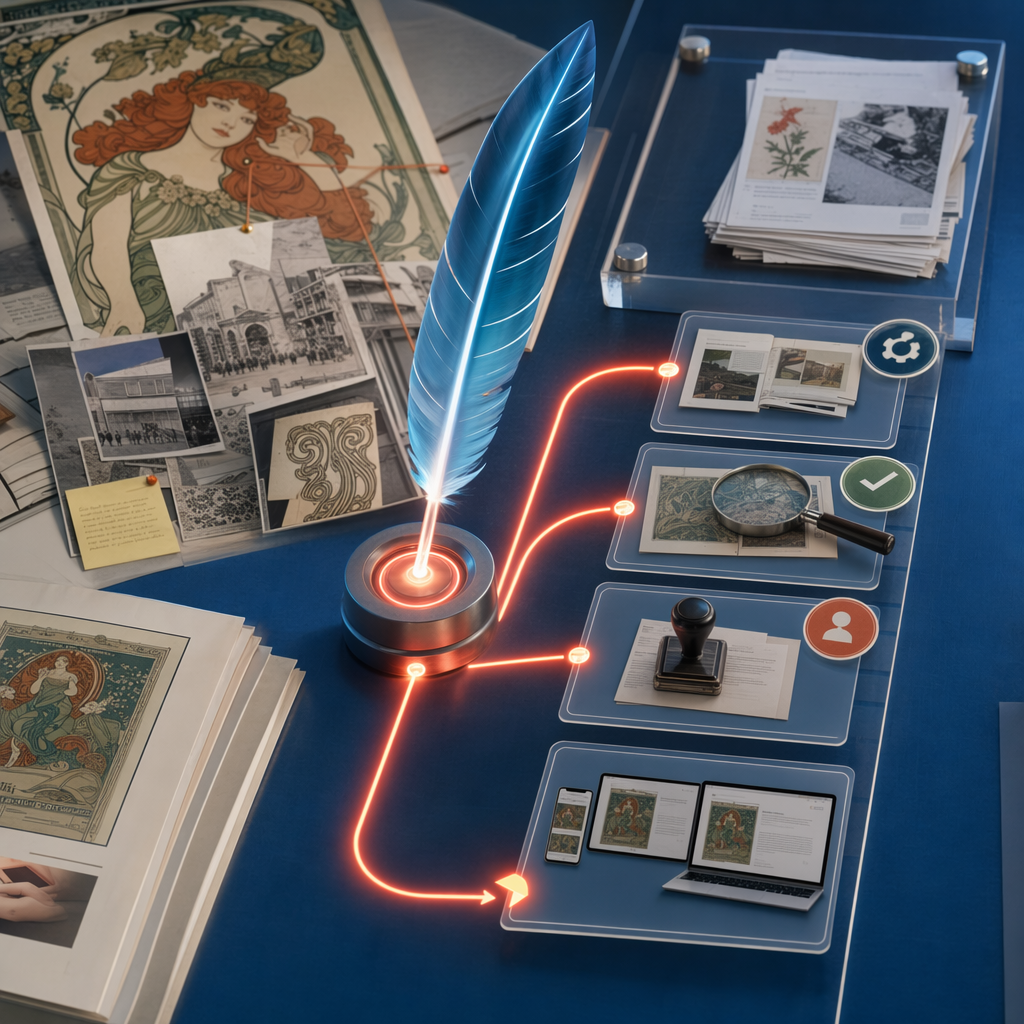

What a governed editorial memory actually looks like

A governed editorial memory is not a giant archive nobody trusts, but a structured record attached to the flow of work. The best versions are boringly effective: editors can find the brief, sources, approved wording, notes, expiry dates and status in one place. People make better decisions when they see the same facts.

A minimum viable model needs six parts: the canonical brief (audience, purpose, owner); a source register (named sources, dates checked); an approved claims library; a decision log (approvers, timestamps, exceptions); risk flags (regulated topics, legal dependencies); and lifecycle status (draft, approved, published, archived).
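
One way to picture the six parts is as a single record that travels with the asset. The sketch below is an assumption about shape only: the class and field names are invented for illustration and do not describe a specific product's schema.

```python
from dataclasses import dataclass, field
from datetime import date, datetime
from enum import Enum
from typing import Optional


class Status(Enum):
    """Lifecycle status values named in the text."""
    DRAFT = "draft"
    APPROVED = "approved"
    PUBLISHED = "published"
    ARCHIVED = "archived"


@dataclass
class Brief:
    """Canonical brief: audience, purpose, owner."""
    audience: str
    purpose: str
    owner: str


@dataclass
class Source:
    """One entry in the source register."""
    name: str
    checked_on: date


@dataclass
class Decision:
    """One entry in the decision log."""
    approver: str
    timestamp: datetime
    exception_reason: Optional[str] = None  # set only when an exception was granted


@dataclass
class MemoryRecord:
    """Hypothetical container tying the six parts to one asset."""
    brief: Brief
    sources: list[Source] = field(default_factory=list)
    approved_claims: list[str] = field(default_factory=list)
    decisions: list[Decision] = field(default_factory=list)
    risk_flags: set[str] = field(default_factory=set)
    status: Status = Status.DRAFT
```

A new record starts as a draft with empty registers, so gaps such as a missing source or approver are visible rather than implied.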

Last Thursday, an auto-routing setup misfiled two items because of inconsistent brief metadata. The fix was simple: required fields, a controlled vocabulary and one human checkpoint before legal routing. Avoidable exceptions dropped that afternoon because the system had stopped guessing.
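
The shape of that fix can be sketched in a few lines. The field names, vocabulary terms and queue names below are assumptions for illustration, not the actual configuration used: the point is that the router refuses to guess when metadata is incomplete or off-vocabulary, and that regulated items stop at a person before reaching legal.

```python
# Hypothetical required fields and controlled vocabulary for brief metadata.
REQUIRED_FIELDS = ("audience", "purpose", "owner", "risk_class")
RISK_VOCABULARY = {"routine", "elevated", "regulated"}


def validate_brief(metadata: dict) -> list[str]:
    """Return a list of problems; an empty list means the brief is routable."""
    problems = []
    for name in REQUIRED_FIELDS:
        value = metadata.get(name)
        if value is None or not str(value).strip():
            problems.append(f"missing required field: {name}")
    risk = metadata.get("risk_class")
    if risk is not None and str(risk).strip() and risk not in RISK_VOCABULARY:
        problems.append(f"risk_class not in controlled vocabulary: {risk!r}")
    return problems


def route(metadata: dict) -> str:
    """Pick a queue without guessing: invalid briefs go back to the author."""
    if validate_brief(metadata):
        return "return_to_author"
    if metadata["risk_class"] == "regulated":
        # One human checkpoint confirms the classification before legal routing.
        return "human_checkpoint"
    return "standard_review"
```

For example, a brief tagged `"Legal "` instead of `"regulated"` is returned to the author rather than silently filed in the wrong queue.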

This illustrates the key rule: governance must live in the path of work, not in a side spreadsheet. If editors must copy evidence into a separate log after publication, the process will break under pressure. Memory must be updated as work moves.

A useful way to think of this is in three layers. Source memory stores evidence and freshness dates. Decision memory records who approved what and when. Pattern memory shows recurring bottlenecks, like legal review delays. Once those bottlenecks are visible, process design becomes a build problem, not a guessing game.

Who is affected when memory is weak

Weak memory affects more than editorial. Content ops, legal, brand, and marketing all feel the drag. In B2B, a weak link creates an expensive chain reaction: product updates a positioning line, marketing updates the website but not the sales deck, and sales uses the old phrase for another fortnight. The system lacks durable, authoritative memory.

Founders are often surprised that the cost sits not in drafting but in duplicate reviews, frozen content, and cleaning up stale claims. Our workflow mapping shows the first failures are usually missed hand-offs, ambiguous approvals and repeat review loops: process faults, not talent faults.

Regulated sectors like finance and health feel this sooner, because a single sentence can end up in paid media or an investor deck. The trade-off is autonomy versus consistency. A governed memory captures the nuance once, gets it properly approved, and allows reuse without fresh chaos.

Smaller teams are not exempt; a team of four can create confusion when output jumps from six pieces a month to 30. They often need clearer controls because one person may be writer, approver and publisher in the same afternoon. A lightweight separation of duties for defined risk classes does more good than another tool licence.

Actions and watchpoints

Start with one live workflow, not a grand plan. Map a repeatable content type from brief to publication, marking each hand-off and noting where approvals happen in private messages. This exercise usually reveals the first fixes within an hour.

The first controls to ship are practical: named approval tiers by risk; a source register with checked dates; reason codes for exceptions; a separate library for reusable claims; and defined trigger rules for legal review.

Key watchpoints: a calendar is not a memory system; scheduling is not validation. Use AI drafting in regulated work only when tied to named sources and human review. Keep expiry dates on claims that age badly, like pricing or benchmarks. Measure rework, because a quick-to-publish, slow-to-correct process is not efficient.
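
Expiry dates only help if something checks them. A minimal sketch of that check follows, assuming each reusable claim carries an `expires_on` date; the `Claim` class and the function name are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Claim:
    """A reusable claim with the date after which it must be rechecked."""
    text: str
    expires_on: date


def claims_due_for_review(claims: list[Claim], today: date) -> list[Claim]:
    """Return claims whose expiry date is today or earlier, oldest first."""
    due = [claim for claim in claims if claim.expires_on <= today]
    return sorted(due, key=lambda claim: claim.expires_on)
```

Run on a schedule against the claims library, a check like this turns "claims that age badly" from a worry into a queue.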

A simple scorecard is enough: track approval turnaround, review rounds, exceptions, and corrections. If these metrics do not improve, trim the process. If the platform cannot explain them, trim the platform.
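
The scorecard itself can be computed from per-item records. The sketch below assumes each published item logs four numbers; the `ItemStats` fields and the choice of averages versus totals are illustrative, not a prescribed method.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ItemStats:
    """Hypothetical per-item measurements for one published piece."""
    approval_hours: float  # time from submission to final approval
    review_rounds: int     # number of review passes
    exceptions: int        # exceptions granted during approval
    corrections: int       # post-publication corrections


def scorecard(items: list[ItemStats]) -> dict[str, float]:
    """Summarise the four measures for one reporting period."""
    if not items:
        raise ValueError("scorecard needs at least one item")
    return {
        "avg_approval_hours": mean(item.approval_hours for item in items),
        "avg_review_rounds": mean(item.review_rounds for item in items),
        "total_exceptions": sum(item.exceptions for item in items),
        "total_corrections": sum(item.corrections for item in items),
    }
```

Comparing one period's scorecard with the next shows whether a new control is paying for itself or simply adding review rounds.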

The next move is not to buy another tool and hope for discipline. Make memory explicit where work happens, then automate only what earns its place. To find a starting point, invite your team to map one live publishing workflow through Quill. We will show you where the friction and risk sit, and what to build next without slowing vital work.
