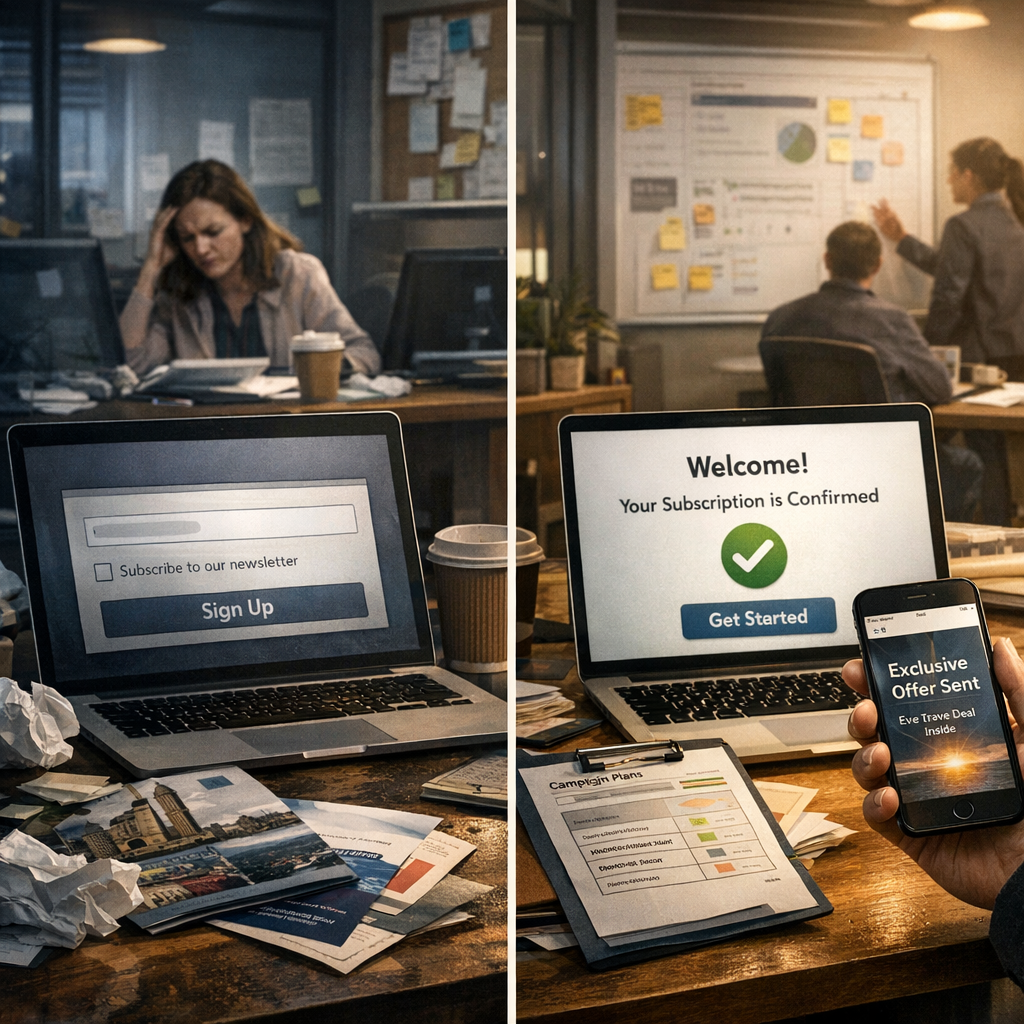

Most teams reach for stricter sign-up checks to protect deliverability. The snag is that harder gates do not automatically produce cleaner data. You can still let risky entries through, while slowing legitimate users and muddying the picture for everyone who has to run the programme afterwards.

That is the tension behind sign-up control. What changes the result is not another blunt rule but better routing. A practical email judgement model separates entries into pass, hold or stop, then checks those decisions against live measures such as confirmation completion, soft bounces and complaint rate. Used properly, this is not a one-off form tweak. It is an operating model with owners, dates, acceptance criteria and a clear path to green.

Quick context

The short answer is this: EVE gives UK teams a governed way to decide which sign-ups should move straight through, which should pause for a trust check, and which should stop, with the reasoning visible to the team.

That matters because binary validation only answers a narrow question. It catches obvious rubbish, such as malformed addresses or dead domains. Useful, yes. Sufficient, no. A syntactically valid address may still be a typo, a throwaway mailbox or a low-trust sign-up that causes trouble later.

Once you look at it that way, the real choice is not strict versus lenient. It is whether you keep pretending every entry fits one of two boxes, or whether you manage the trade-off in a way ops, CRM and fraud can actually run.

A graded model gives you three workable routes:

- Pass when confidence is high enough to add the sign-up straight into the journey.

- Hold when the signal is mixed and a short confirmation or review step is cheaper than a hard rejection.

- Stop when the evidence is strong enough that processing the sign-up creates more risk than value.

That middle state usually does more work than teams expect. It is where automated email risk routing becomes useful, because uncertain entries can be slowed without turning every doubtful case into a dead end.
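As a rough illustration of the three routes, here is a minimal Python sketch. It is not EVE's actual logic or API: the 0.0 to 1.0 risk score, the `HOLD_FROM` and `STOP_FROM` cut-offs, the reason-code labels and the `route_signup` function are all hypothetical, chosen only to show how a graded decision differs from a yes-or-no check.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    PASS = "pass"
    HOLD = "hold"
    STOP = "stop"


@dataclass(frozen=True)
class Decision:
    route: Route
    reason_code: str  # hypothetical label, kept readable for operators


# Hypothetical cut-offs on a 0.0-1.0 risk score; real values are for owners to agree.
HOLD_FROM = 0.4
STOP_FROM = 0.8


def route_signup(risk_score: float, syntax_valid: bool) -> Decision:
    """Map one sign-up to pass, hold or stop, always with a reason code."""
    if not syntax_valid:
        return Decision(Route.STOP, "failed_syntax")
    if risk_score >= STOP_FROM:
        return Decision(Route.STOP, "high_risk_score")
    if risk_score >= HOLD_FROM:
        return Decision(Route.HOLD, "mixed_signals")
    return Decision(Route.PASS, "high_confidence")
```

The design point is that every branch returns a reason code, so a held or stopped entry is never just a silent no.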

How to set pass, hold and stop thresholds

If your plan has no named owners and dates, it is not a plan. Fix it.

Start with acceptance criteria, not tooling. CRM, growth and fraud owners need to agree what each route means in practice, what happens next, and which measure shows the threshold is doing its job. EVE can enforce the logic, but it cannot invent the governance for you.

| Threshold | Typical signal pattern | Automated action | Acceptance criteria and measure | Owner |
|---|---|---|---|---|
| Pass | Higher-confidence address and source signals, with no material risk flags | Allow sign-up and trigger the normal welcome or verification journey | First-email engagement remains in line with baseline; complaint rate does not worsen after launch | CRM Lead |
| Hold | Mixed signals such as new mailbox patterns, unusual domain behaviour, or source-specific uncertainty | Pause entry to the main list, send a confirmation step, and log for review | Confirmation completion justifies the extra friction; hold volume stays manageable week to week | Growth or Operations Lead |
| Stop | High-confidence risk signals such as failed syntax, known fraudulent patterns, or clearly non-human input | Block the sign-up and write a reason code to the audit log | Blocked volume is stable by source; false-positive reports stay within agreed tolerance | Fraud or Data Quality Owner |

Once that is agreed, run a retrospective test. Put the last 30 days of sign-up data through the model before go-live. You need two answers: how many entries would have landed in pass, hold and stop, and whether the hold queue is realistic to run. Skip that step and the queue problems arrive on day one, not later.
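The retrospective test can be sketched in a few lines of Python. This assumes you already have one route label per historical sign-up; the `backtest_routes` function and the `hold_capacity_per_day` figure are hypothetical, and the capacity number should come from whoever will actually work the queue.

```python
from collections import Counter

ROUTES = ("pass", "hold", "stop")


def backtest_routes(routes, days=30, hold_capacity_per_day=50):
    """Summarise historical routing and compare hold volume with capacity.

    `routes` is an iterable of "pass" / "hold" / "stop" strings, one per
    historical sign-up. `hold_capacity_per_day` is an assumed team limit.
    """
    counts = Counter(routes)
    total = sum(counts[r] for r in ROUTES)
    holds_per_day = counts["hold"] / days
    return {
        "counts": {r: counts[r] for r in ROUTES},
        "shares": {r: (counts[r] / total if total else 0.0) for r in ROUTES},
        "holds_per_day": holds_per_day,
        "queue_is_realistic": holds_per_day <= hold_capacity_per_day,
    }
```

The two answers the back-test needs map directly to the output: `counts` and `shares` show where entries would have landed, and `queue_is_realistic` says whether the hold volume fits the capacity you assumed.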

A sensible first cadence is weekly review for the first month, then fortnightly once the volumes settle. At minimum, each review should check:

- hold rate by source

- confirmation completion rate for held sign-ups

- soft bounce trend after pass decisions

- complaint rate trend after release into the main programme

- manual override count and reasons
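Two of those review measures are simple enough to sketch in Python. The record shape here (dictionaries with `source`, `route` and `confirmed` keys) is an assumption for illustration, not a format the source or EVE prescribes.

```python
from collections import defaultdict


def hold_rate_by_source(signups):
    """Return {source: share of that source's sign-ups routed to hold}."""
    totals = defaultdict(int)
    holds = defaultdict(int)
    for s in signups:
        totals[s["source"]] += 1
        if s["route"] == "hold":
            holds[s["source"]] += 1
    return {src: holds[src] / totals[src] for src in totals}


def confirmation_completion_rate(signups):
    """Share of held sign-ups that completed confirmation, or None if none were held."""
    held = [s for s in signups if s["route"] == "hold"]
    if not held:
        return None
    return sum(1 for s in held if s["confirmed"]) / len(held)
```

Returning `None` when nothing was held keeps an empty week from being misread as a 0% completion rate.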

That is the difference between an email judgement engine and a tidy settings page. One gives you evidence. The other gives you buttons.

Where teams usually get this wrong

The first mistake is treating hold as a bin. It is not. A hold state only works if there is a clear next action, a service level for review, and a rule for what happens when the user does nothing. Otherwise the uncertainty is still there, just parked somewhere less visible.

The second mistake is letting sales logic outrun risk logic. Teams often chase top-line pass volume and leave deliverability checks until later. That is the wrong order. The control measures need to sit beside acquisition numbers from day one. If pass volume rises while confirmation completion falls or soft bounces climb, something is drifting and the threshold needs another look.

The third mistake is poor override discipline. An override policy should state who can approve a change, what evidence is required, and where the decision is logged. Two details matter more than they sound: keep the approver list short, and log the owner and date every single time. Without that, you cannot trace why a threshold moved or whether the change actually helped.
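One way to make that discipline hard to skip is to refuse any override that lacks an approved owner or evidence. The sketch below is an assumption about how a team might log changes, with hypothetical names throughout (`APPROVERS`, `OverrideRecord`, `log_override`); it does not describe a built-in EVE feature.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical short approver list; keep it short in practice too.
APPROVERS = frozenset({"crm_lead", "fraud_owner"})


@dataclass(frozen=True)
class OverrideRecord:
    threshold: str      # which cut-off moved, e.g. "hold_from"
    old_value: float
    new_value: float
    evidence: str       # why the change was justified
    owner: str          # who approved it
    changed_on: date    # when it took effect


def log_override(log, record):
    """Append an override to the log only if approver and evidence are present."""
    if record.owner not in APPROVERS:
        raise PermissionError(f"{record.owner} is not an approved approver")
    if not record.evidence.strip():
        raise ValueError("an override needs written evidence")
    log.append(record)
    return record
```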

I was wrong about the effort on one implementation. The data feed was trickier than expected, and the first draft of the routing logic assumed cleaner source labels than we had. The fix was not dramatic. We added buffer time, tightened the mapping rules, and held go-live until the reason codes were readable enough for ops to use. Less glamorous, more useful. Sorted.

Running the hold queue without creating a mess

A hold queue is only worth having if it leads to a controlled next step for uncertain sign-ups rather than a backlog nobody owns.

The basic controls are straightforward:

- set a maximum review age for held records

- send one clear confirmation step rather than a string of nudges

- group reviews by source and reason code so patterns are visible

- review exception spikes the same day rather than waiting for the next weekly meeting
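The first and third controls can be sketched in Python as follows. The 48-hour `MAX_REVIEW_AGE` and the record keys (`held_at`, `source`, `reason_code`) are assumptions for illustration; set the real limit in your acceptance criteria.

```python
from collections import Counter
from datetime import datetime, timedelta

MAX_REVIEW_AGE = timedelta(hours=48)  # hypothetical limit


def overdue_holds(held, now):
    """Return held records older than the maximum review age."""
    return [h for h in held if now - h["held_at"] > MAX_REVIEW_AGE]


def holds_by_source_and_reason(held):
    """Count held records per (source, reason_code) pair so patterns stand out."""
    return Counter((h["source"], h["reason_code"]) for h in held)
```

Calling `holds_by_source_and_reason(held).most_common(5)` gives a quick view of which source and reason pairs dominate the queue.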

This is where explainability starts to matter in operational terms. If EVE says an entry is held because of a new-domain pattern, unusual mailbox behaviour or a source-specific trust issue, an operator has something to act on. If the system just says no, the queue gets noisier and the team learns very little.

The practical gain from explainable decisioning is not theatre. It is faster diagnosis, a cleaner audit trail and less collateral damage when one source starts behaving oddly.

Your minimum governance for hold should cover:

- Owner: one named person accountable for queue health each week

- Date: a fixed review slot in the team calendar from launch

- Acceptance criteria: a stated limit for queue volume and review age

- Risk and mitigation: what happens if the queue spikes, confirmation drops, or a source starts misbehaving

If those four points are missing, the queue will drift. Not might. Will.

Checklist for the first tuning cycle

Use this as a delivery assurance note rather than a wish list. Keep the change log as you go.

Weeks 1-2: scope and setup

- Assign the threshold owner. Usually the CRM Lead or Growth Operations Lead. Due by end of week 1.

- Set the baseline. Record the last 30 days of soft bounces, hard bounces, complaint rate and sign-up-to-confirmation completion. Owner: CRM Analyst. Due by end of week 1.

- Agree acceptance criteria. Define what pass, hold and stop mean for user handling and audit logging. Owners: CRM, fraud and acquisition leads. Due by mid week 2.

- Configure EVE. Implement the initial routing logic and reason codes. Owner: implementation lead, with Holograph support where needed. Due by end of week 2.

Week 3: test and decide

- Run a back-test. Score the last 30 days of sign-ups to forecast pass, hold and stop volumes. Owner: implementation lead. Due at start of week 3.

- Review the trade-offs. Check whether hold volume is manageable and whether stop looks too aggressive by source. Owner: threshold owner. Due by mid week 3.

- Approve go-live or revise. Do not go live until owners agree the queue can be handled and the audit log is readable. Owner: delivery sponsor. Due by end of week 3.

Week 4 onwards: refine

- Run the weekly review. Check hold rate, confirmation completion, soft bounces, complaint rate and overrides. Owner: threshold owner.

- Adjust in small steps. Move thresholds conservatively and record the reason, owner and date for each change. Owner: threshold owner.

- Escalate exceptions fast. If one source creates a sharp spike in held or stopped entries, review it the same day and set a mitigation. Owner: operations lead.
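What counts as a sharp spike is for the owners to define. As one hedged example in Python, the check below flags a source when today's held-plus-stopped count is well above its recent daily average. The `is_spike` name, the `multiplier` of 3 and the `min_count` floor of 20 are all hypothetical defaults.

```python
from statistics import mean


def is_spike(recent_daily_counts, today_count, multiplier=3.0, min_count=20):
    """Flag a source whose held-plus-stopped count today far exceeds its recent average.

    `min_count` stops very small sources from triggering on tiny numbers.
    """
    if today_count < min_count:
        return False
    if not recent_daily_counts:
        return True  # no history and already above the floor: worth a look
    return today_count > multiplier * mean(recent_daily_counts)
```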

The point is not perfection in week one. It is traceability. A threshold model you can explain will usually improve faster than one that only looks clever in a slide deck.

Closing guidance

The practical decision is not whether to be strict or lenient. It is whether you want one blunt rule doing quiet damage, or a governed model that shows its workings and lets you tune the trade-offs. For UK sign-up forms, that usually means passing clean entries, holding uncertain ones for a short trust check, and stopping only where the evidence is strong enough to defend.

If you are reviewing your form logic now, start with the measures and owners before you touch the thresholds. Then pressure-test the hold volume against real operational capacity. If you want a hand shaping that plan, contact EVE and we will help you map the first pass, hold and stop rules into something your team can actually run next week.
