
Decision: stop judging direct payment approval on average payout speed alone. It is too blunt to manage risk. A better test is a four-part weekly scorecard covering approval ageing, exception rate, evidence completeness at first pass, and time to confirmation.

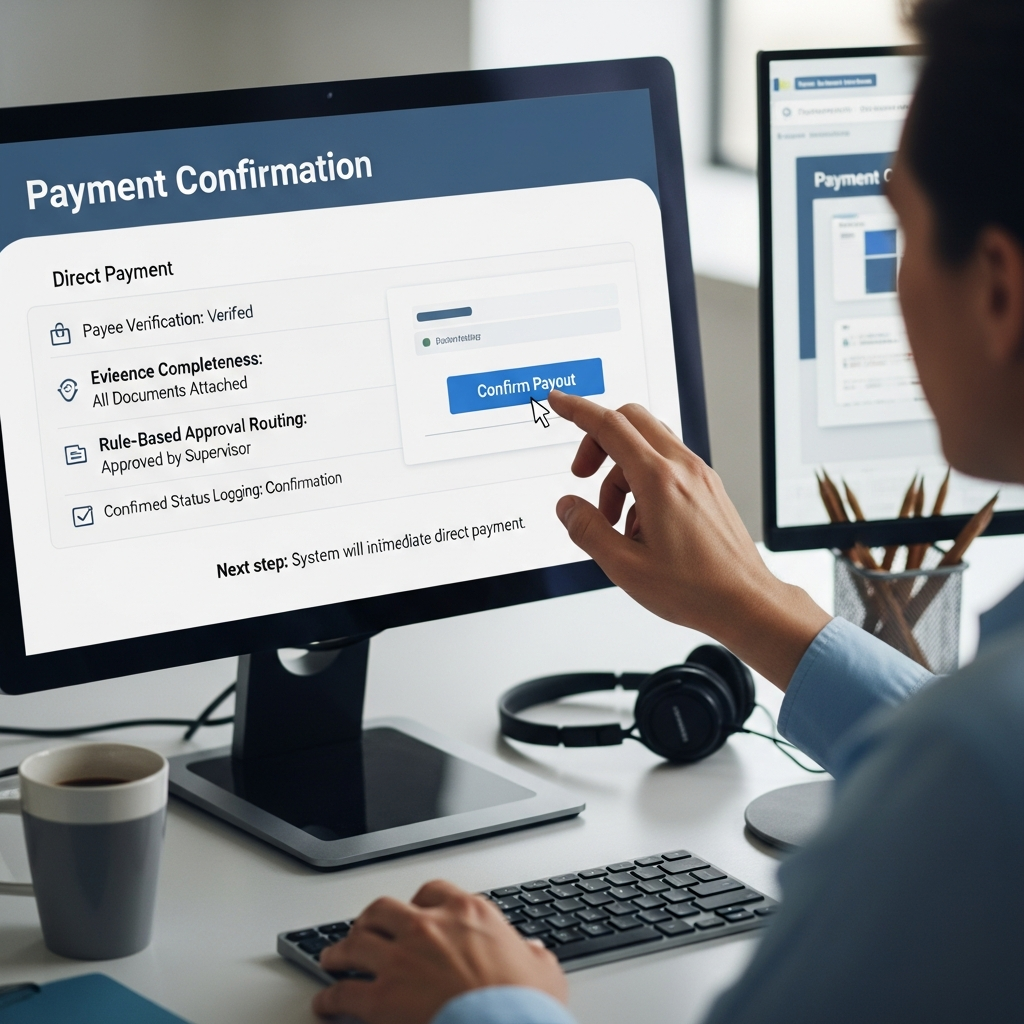

For UK teams assessing Payment Services, the short answer is this: it works best when verification, approval routing and payment status sit in one governed flow, because that is what lets you see where delay, rework and weak evidence start. That matters in consumer payout operations, where a fast payment that cannot be defended later is not really fast at all. If there is no named owner and no review date, it is not a plan. It is a hope with a spreadsheet.

Context: why average speed is not enough

The usual question is simple: how fast are payments going out? Fair enough, but average speed is a vanity metric on its own. It hides the tail of delayed, reworked or overridden cases, and that is usually where claimant frustration, audit pressure and extra handling cost collect.

In fragmented setups, payee verification sits in one tool, approval routing in another, and payment confirmation somewhere else again. Then one missing attachment or an unclear approval threshold holds up work that should have cleared cleanly. The issue is not pace in the abstract. It is control inside the payment workflow.

So the practical question is not pace versus auditability. It is whether you are measuring the points where delay and rework first appear, rather than admiring an average after the fact.

What the four measures actually tell you

These four measures matter because each should trigger a decision, not just fill a report. Review them together every week. Split them apart when one goes red. That is often where the real answer sits.

1. Approval ageing

Measure the time a payment request spends waiting for sign-off after all required evidence has been submitted. A sensible checkpoint for standard cases is under 24 hours, with anything over 48 hours escalated to the named approval owner. When this worsens, the cause is often visible quickly: loose routing rules, thin sign-off capacity, or requests arriving without an agreed approval path.

2. Exception rate

Track the percentage of payments that fall out of the standard route and need manual intervention, rework or override handling. A healthy benchmark will vary by operation, but trend matters more than any borrowed target. If the rate climbs week on week, something upstream is drifting. Common causes are unclear intake criteria, inconsistent evidence requirements, or approval rules that turn ordinary claims into edge cases.

3. Evidence completeness at first pass

Measure the share of claims that arrive with everything needed for an immediate decision. In reimbursement governance, this is a useful early check on whether intake and case design are making the standard route easy to use. Set a threshold your teams can explain, then review failure reasons each week. The measure is worth the effort because it shows why work is bouncing before approval queues take the blame.

4. Time to confirmation

Track elapsed time from claimant submission to confirmation that funds are on the way. This is the metric the claimant feels. For standard cases, many teams will work towards under 48 hours, but the target should match the approval model and payment type. If this slips while approval ageing stays flat, the delay is probably elsewhere in the flow, such as evidence handling, queue management or payment release timing.
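As a rough illustration, the four measures above could be calculated from a weekly export along these lines. This is a minimal sketch, not a reference implementation: the `Payment` fields and the `weekly_scorecard` function are hypothetical names, the median is an assumed summary statistic (the definitions above do not prescribe one), and requests still awaiting sign-off are aged up to the report time, which is also an assumption. The 48-hour count mirrors the escalation point suggested under approval ageing.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Payment:
    # Hypothetical record layout; map these to whatever your systems export.
    submitted_at: datetime            # claimant submission
    evidence_complete_at: datetime    # all required evidence received
    approved_at: Optional[datetime]   # None while still awaiting sign-off
    confirmed_at: Optional[datetime]  # None until funds are confirmed on the way
    first_pass_complete: bool         # arrived with everything needed
    is_exception: bool                # left the standard route


def _hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def weekly_scorecard(payments: list[Payment], as_of: datetime) -> dict[str, float]:
    """Summarise the four measures for one review window."""
    if not payments:
        raise ValueError("no payments in the review window")

    # Approval ageing: wait for sign-off once evidence is complete.
    # Assumption: pending requests are aged up to the report time.
    ageing = [
        _hours((p.approved_at or as_of) - p.evidence_complete_at)
        for p in payments
    ]
    # Time to confirmation: only payments that have been confirmed.
    confirmed = [
        _hours(p.confirmed_at - p.submitted_at)
        for p in payments
        if p.confirmed_at is not None
    ]
    total = len(payments)
    return {
        "approval_ageing_median_h": median(ageing),
        "approvals_over_48h": sum(1 for h in ageing if h > 48),
        "exception_rate": sum(p.is_exception for p in payments) / total,
        "first_pass_completeness": sum(p.first_pass_complete for p in payments) / total,
        "time_to_confirmation_median_h": median(confirmed) if confirmed else float("nan"),
    }
```

Whatever calculation method is chosen, keeping it in one place makes it easier to confirm that every weekly report uses the same definitions.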

Why these measures work better together

One metric can flatter a weak process. Four, used properly, give you an evidence thread.

If approval ageing rises while evidence completeness holds, the bottleneck is probably in sign-off capacity or routing. If evidence completeness drops first, there is little point blaming approvers. The intake journey needs work. If exception rate climbs and time to confirmation drifts, the process is carrying too many non-standard cases for the current ruleset.
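The reasoning in the previous paragraph can be written down as a small set of rules comparing one week with the last. The sketch below is illustrative only: `WeeklyScorecard`, `diagnose` and the 5% `tolerance` used to decide whether a measure "holds" are all assumptions, and each team would need to agree its own definition of a meaningful movement.

```python
from dataclasses import dataclass

# Illustrative labels for the three readings described in the text.
SIGN_OFF = "bottleneck probably in sign-off capacity or routing"
INTAKE = "intake journey needs work"
RULESET = "too many non-standard cases for the current ruleset"


@dataclass(frozen=True)
class WeeklyScorecard:
    # Hypothetical container for one week's headline numbers.
    approval_ageing_h: float
    exception_rate: float
    first_pass_completeness: float
    time_to_confirmation_h: float


def diagnose(prev: WeeklyScorecard, curr: WeeklyScorecard,
             tolerance: float = 0.05) -> list[str]:
    """Return probable causes given last week's and this week's numbers.

    `tolerance` is an assumed relative band: movements inside it
    count as the measure holding steady.
    """
    def rose(before: float, after: float) -> bool:
        return after > before * (1 + tolerance)

    def fell(before: float, after: float) -> bool:
        return after < before * (1 - tolerance)

    findings: list[str] = []
    evidence_down = fell(prev.first_pass_completeness, curr.first_pass_completeness)
    ageing_up = rose(prev.approval_ageing_h, curr.approval_ageing_h)

    if evidence_down:
        # Evidence dropped: look at intake before blaming approvers.
        findings.append(INTAKE)
    elif ageing_up:
        # Ageing rose while evidence completeness held.
        findings.append(SIGN_OFF)

    if (rose(prev.exception_rate, curr.exception_rate)
            and rose(prev.time_to_confirmation_h, curr.time_to_confirmation_h)):
        findings.append(RULESET)

    return findings
```

Rules like these only suggest where to look first. The named owner still has to confirm the diagnosis against the cases behind the numbers.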

That changes the quality of the conversation. Instead of saying payments feel slow, teams can point to the failing stage, the owner, the acceptance criteria and the next review date. Easier to act on. Easier to audit later.

There is a necessary tension here. A complete audit trail and a low-friction claimant experience do not always line up neatly. Some cases will need judgement. The point of the scorecard is not to remove judgement, but to make exceptions visible, recorded and reviewable before they harden into the unofficial process.

How to put owners and dates around the scorecard

This does not need a grand transformation plan. It does need discipline.

Start with a 30-day baseline. Review the last month of direct payments and calculate all four measures retrospectively, even if part of it has to be done manually. Owner: Head of Operations. Acceptance criteria: baseline produced for all four measures, key failure reasons logged, and any data gaps listed. Date: complete within 14 days.

Then assign a single accountable owner to each metric. One name per measure, not a committee. Approval ageing usually sits with the approval or finance lead. Exception rate often sits with operations or consumer care. Evidence completeness belongs close to intake and case design. Time to confirmation needs someone who can see the full flow end to end. Put those owners in the weekly report and state the review cadence plainly.

Next define the path to green. When a measure misses threshold, the owner should return within 24 hours with three things: diagnosis, mitigation and next checkpoint date. Keep it plain. Acceptance criteria might include reducing aged approvals over 48 hours by the next weekly review, or cutting repeat evidence failures on the top two causes within the next reporting cycle.

Finally, test the tooling honestly. If the team cannot pull these measures without stitching together exports from several systems, the reporting gap is now a delivery risk. That does not mean buying something shiny by Friday. It means recording the gap, assigning an owner, and deciding whether the fix is process change, workflow redesign or platform support from Payment Services, with Holograph owning implementation where required. Being a bit tight on time is manageable. Flying blind is not.

Risks, mitigations and the decision in front of you

The main risk is false confidence. Teams can publish a tidy dashboard and still miss the operational truth if definitions are loose or ownership is blurred. Mitigation: agree the calculation method for each measure, document the threshold, and review exceptions in the same meeting as the headline numbers.

The second risk is local optimisation. One team may try to improve approval times by weakening evidence checks, which only shifts the problem downstream. Mitigation: review all four measures together and require any proposed change to show its effect on claimant experience, audit readiness and rework.

The third risk is delay dressed up as caution. Some cases need extra review. Some do not. If everything becomes an exception, the standard route is broken. Mitigation: track exception reasons, cap the categories, and review the top causes monthly with operations, finance and compliance owners present.

The decision is straightforward: if you want direct payment approval to be fast and audit-ready, measure the process where it actually bends under pressure, not just where it looks tidy in a summary slide.

If you want a practical baseline for your own consumer payout operations, Payment Services can help map the four measures, define owners and thresholds, and turn the first weekly report into something usable. Contact the team and work through what needs to be measured, who should own it, and the quickest realistic path to green.
