Teams often mistake an approval design problem for a content speed problem. Drafting gets faster, queues lengthen, and output stays sluggish.

In high-volume publishing, the first handoff to fail is the one from draft to decision. Context vanishes, ownership blurs, and reviewers receive a blob instead of a case. Fixing it demands better editorial workflow automation: clearer routing, tighter evidence, and human approval where judgement matters.

Signal baseline

The weak point is rarely the copy; it is the approval packet around it. Teams can produce a decent first draft in minutes, then lose half a day because three basic questions go unanswered: what changed, what risk sits inside, and who should sign it off.

This mundane point is where throughput disintegrates. A draft without source context, rights status, audience notes or prior decision history forces reviewers to reconstruct the case instead of approving it. Faster drafting saves time upfront, but a poor handoff adds slower reviews, duplicate comments and avoidable rework downstream.

I remain sceptical of platforms that promise solutions through glossy dashboards alone. If a platform cannot explain its decisions, it does not deserve your budget. The failure pattern is consistent. Legal gets everything instead of only high-risk items. Brand reviewers receive assets with no rights history. Editors chase approvals in chat because the workflow holds no usable memory. None of this is exotic; it is overcomplicated process masquerading as governance.

What is shifting

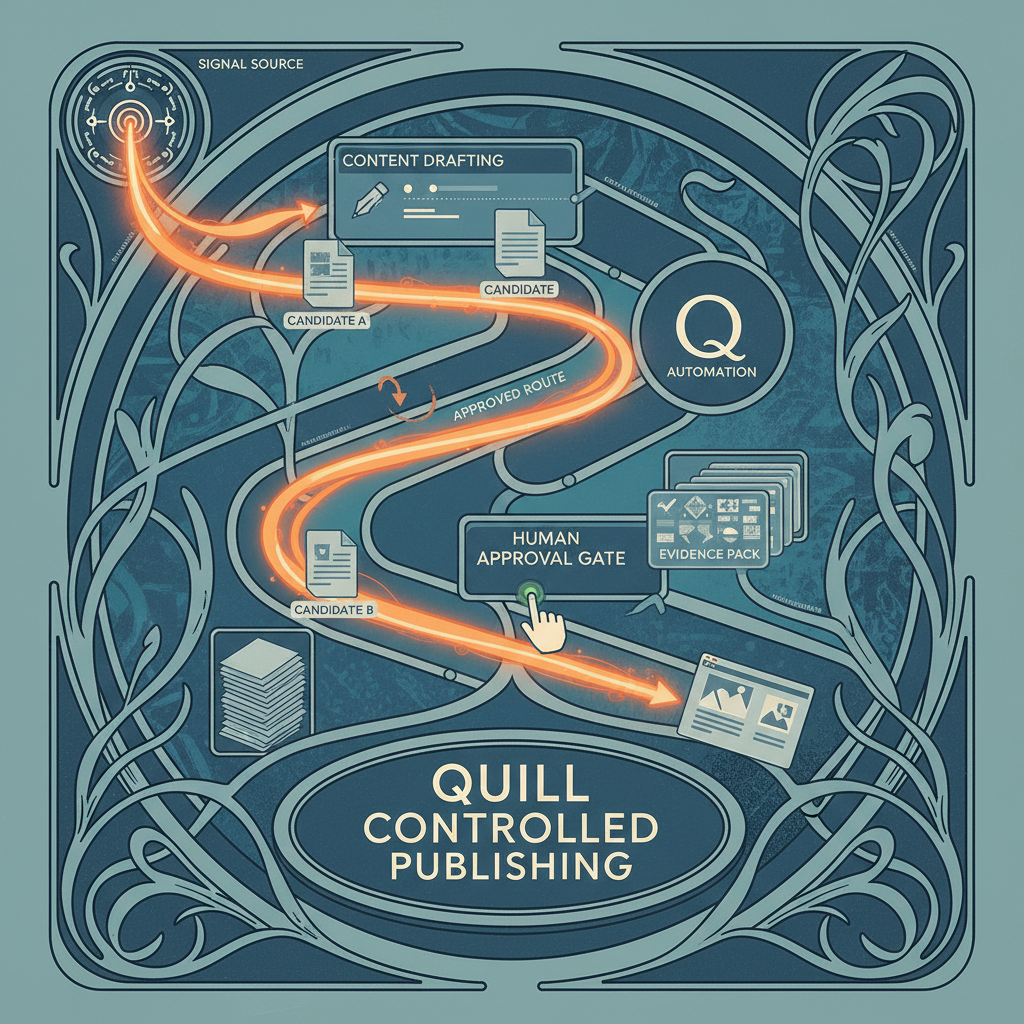

The useful change is not “AI replaces approval”. That claim collapses in any regulated, or merely sensible, team. What shifts is the review structure. Better systems now route by signal, not habit. A price claim goes to compliance. A routine category page update may only need an editor. An image with unclear usage rights stops before production.
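As a rough illustration of routing by signal, the three examples above could be expressed as a small rule function. This is a minimal sketch, not a description of any particular product: the signal names (`price_claim`, `unclear_image_rights`) and the reviewer roles are hypothetical labels chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Item:
    """A piece of content waiting for a decision (hypothetical shape)."""
    title: str
    signals: set = field(default_factory=set)


def route(item: Item) -> str:
    """Return the reviewer role for an item, or 'blocked' if it must stop before review."""
    # Unclear usage rights stop the item before it reaches production.
    if "unclear_image_rights" in item.signals:
        return "blocked"
    # Price claims go to compliance.
    if "price_claim" in item.signals:
        return "compliance"
    # Anything without a risk signal only needs an editor.
    return "editor"
```

The order of the checks is itself a design decision: here a rights problem blocks the item even when it also carries a price claim, on the assumption that there is no point asking compliance to review something that cannot ship.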

This approach beats blanket routing, but carries an obvious cost: someone must design the rules properly. Teams often dodge that design phase because it feels slower than shipping. Then bounce-backs start. A reviewer asks for context. Another asks for the same evidence in a different format. A third asks why they were included. The queue fills with administrative noise, not editorial judgement.

I still do not fully understand why some organisations resist this scoping phase, but in practice they tend to treat workflow design as overhead until the backlog becomes impossible. By then, blame falls on writers, reviewers, or tools. The fault usually lies in the handoff contract between them.

Done properly, signal-led workflow design changes the unit of work. Reviewers receive a routed decision with context attached: source basis, risk tags, prior guidance, version state and the reason they are the approver. It is not glamorous, but it prevents volume from turning into queue sludge.

Who feels the failure first

Editors feel it first, being closest to the queue, but they are hardly the only ones paying. Content strategists lose campaign timing. Compliance teams triage work that should never have reached them. Platform owners get blamed for latency that is really process ambiguity in disguise.

The trade-off is stark. Tighten approval discipline, and some contributors initially feel constrained. Leave the process loose, and reviewers clean up everybody else’s uncertainty. I know which side I would pick.

A proper editorial memory system helps here. If the team can see how a similar claim was handled before, who approved it, and what evidence was required, they stop refighting old battles. Reviewers focus on current exceptions instead of re-litigating settled ground. This saves time, but memory needs maintaining. Poorly governed memory simply scales stale decisions faster.
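One way to picture the maintenance point is a lookup that only returns prior rulings still inside an agreed review age. The sketch below assumes a hypothetical record shape (`claim_type`, `decided_on`, `approver`) and an arbitrary 365-day limit; a real memory system would need its own retention and review policy.

```python
from datetime import date


def reusable_rulings(rulings, claim_type, today, max_age_days=365):
    """Return prior rulings for a claim type that are recent enough to reuse."""
    return [
        r for r in rulings
        if r["claim_type"] == claim_type
        # Older rulings are excluded so stale decisions are not silently reapplied.
        and (today - r["decided_on"]).days <= max_age_days
    ]
```

Rulings that fall outside the window are not deleted here, only withheld from automatic reuse, which leaves room for a human to re-confirm or retire them.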

What a reliable handoff looks like

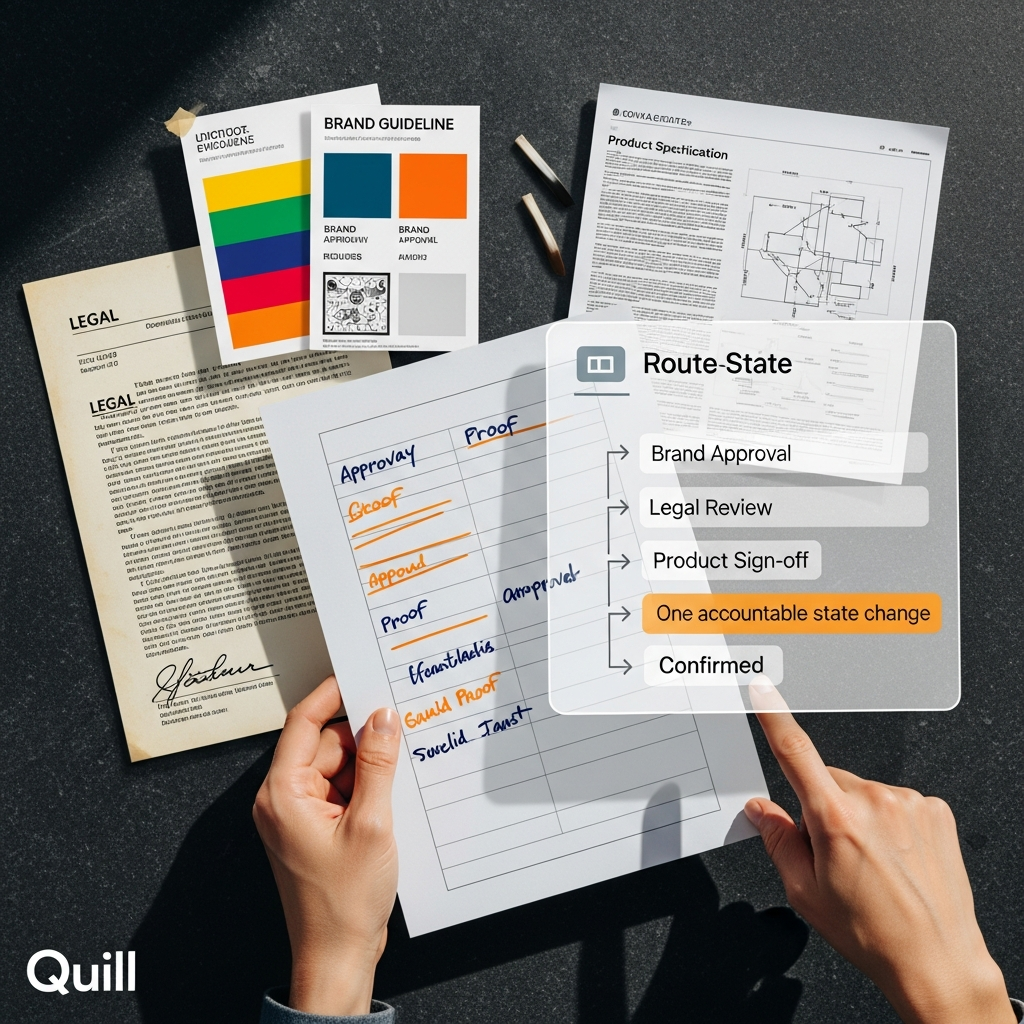

A workable approval handoff is unromantic. It has a named owner, a reason for review, supporting evidence, a version state and a fallback if no decision arrives in time. Miss one, and the queue wobbles.
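The five elements can be treated as a completeness check that runs before anything enters a reviewer's queue. The following is a minimal sketch under that assumption; the class and field names are hypothetical and simply mirror the list in the paragraph above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Handoff:
    """The approval packet travelling with a draft (hypothetical field names)."""
    owner: Optional[str] = None
    review_reason: Optional[str] = None
    evidence: Optional[List[str]] = None
    version_state: Optional[str] = None
    fallback: Optional[str] = None


def missing_fields(handoff: Handoff) -> List[str]:
    """List the handoff elements that are absent or empty."""
    required = ["owner", "review_reason", "evidence", "version_state", "fallback"]
    return [name for name in required if not getattr(handoff, name)]
```

An empty result means the packet is complete; anything else can be bounced back to the submitter automatically instead of consuming reviewer time.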

For high-volume teams, keep the design tight:

Route by risk and change type, not by departmental habit.

Attach source notes, rights status and prior rulings at submission.

Define entitlement clearly so only the right approver can release the item.

Set escalation thresholds for stalled items instead of relying on manual chasing.

Record decisions in a form the next reviewer can reuse.
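The escalation rule in particular is easy to automate. The sketch below assumes a hypothetical mapping of item identifiers to submission times and an illustrative 24-hour window; the actual window is something each team has to agree for itself.

```python
from datetime import datetime, timedelta


def stalled_items(submitted, now, window=timedelta(hours=24)):
    """Return ids of items that have waited longer than the agreed window.

    submitted: dict mapping item id -> datetime the item entered review.
    """
    return sorted(
        item_id
        for item_id, submitted_at in submitted.items()
        if now - submitted_at > window
    )
```

Run on a schedule, a check like this replaces manual chasing in chat with a predictable escalation list.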

Image workflows make this obvious. If an asset reaches approval without usage rights, context notes or sensitivity flags, the bottleneck is not a slow approver. The bottleneck was created upstream. Good automation catches that before human review starts. Bad automation just forwards the mess faster.

Actions and watchpoints

Fix the first approval handoff, not the drafting tool. Map the current route for a single content type. Count how many times context is re-entered. Note where reviewers ask for information that should have travelled with the draft. That reveals the actual failure point.

Make small, testable changes. Add structured evidence fields before submission. Route low-risk updates away from legal by default. Set an escalation rule for items stalled beyond an agreed window. Use your editorial memory system to attach previous rulings to repeat formats. This is operational hygiene, less exciting than hype but more profitable.

Resist piling on rules because a few cases went wrong. Over-designed approval trees become brittle quickly. Every new branch should earn its place with measurable uplift: lower mean time to approve, fewer bounce-backs, or fewer manual chases. Automation without measurable uplift is theatre, not strategy.
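To make "measurable uplift" concrete, the same two figures can be computed before and after a rule change. This is a minimal sketch assuming a hypothetical record shape with `hours_to_approve` and `bounce_backs` per item; the numbers in the usage lines are invented purely to show the call.

```python
from statistics import mean


def approval_metrics(records):
    """Summarise a batch of approval records.

    records: list of dicts with 'hours_to_approve' (float) and 'bounce_backs' (int).
    """
    return {
        "mean_hours_to_approve": mean([r["hours_to_approve"] for r in records]),
        "bounce_backs_per_item": sum(r["bounce_backs"] for r in records) / len(records),
    }


# Illustrative data only, not real measurements.
before = [{"hours_to_approve": 10.0, "bounce_backs": 2},
          {"hours_to_approve": 6.0, "bounce_backs": 0}]
after = [{"hours_to_approve": 4.0, "bounce_backs": 1},
         {"hours_to_approve": 2.0, "bounce_backs": 0}]
uplift = {k: approval_metrics(before)[k] - approval_metrics(after)[k]
          for k in approval_metrics(before)}
```

If a new branch in the approval tree does not move either figure, that is a reasonable signal to remove it.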

The approval handoff fails first because it carries the most ambiguity. Fix that, and the rest of the workflow calms. If you want to see where Quill can tighten routing, preserve decision memory and keep human judgement exactly where it belongs, a proper conversation helps. Quill is built for teams that need accountable publishing at volume, and Holograph can shape implementation so the system works in practice.

If this is on your roadmap, Quill can help run a controlled pilot, measure the outcome, and scale only when evidence is clear.