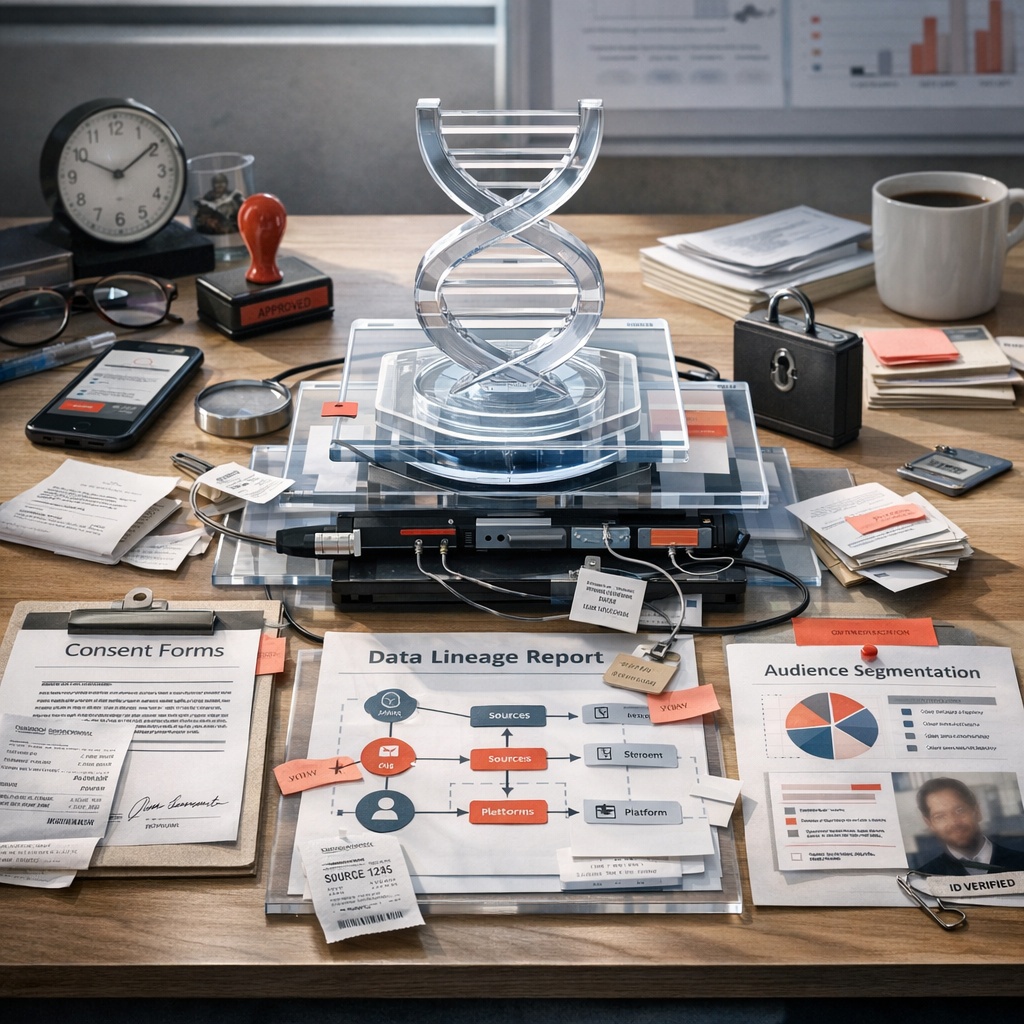

One awkward truth has sharpened in 2025: the fastest way to push a social audience live is often the easiest way to lose confidence in it. Teams can now automate segment creation, syncing and suppression in minutes, yet the stubborn failure point is rarely the platform. It is the missing proof of who consented, when that status changed, and how a segment definition travelled from source to activation.

That is the decision facing UK data leads, CRM managers and activation teams. Do you keep optimising for speed inside fragmented tools, or do you put audience activation governance and traceable lineage in front of release? My view is fairly plain. A strategy that cannot survive contact with operations is not strategy; it is branding copy. In a strategy call this week, we tested two paths and dropped one after the first hard metric came in. The fast path looked clever until no one could prove why one exclusion rule had disappeared between CRM and paid social.

What is being decided

The practical choice is not whether to automate. That ship has sailed. According to the UK Government’s Department for Science, Innovation and Technology, generative AI adoption among UK businesses continued to rise through 2025, and the pressure to operationalise automation has only tightened into 2026. The real decision is narrower: what evidence must exist before an audience is allowed to move from planned segment to live activation?

For most teams, there are two workable options. Option one is platform-led activation. Definitions are assembled close to the media platform, with local checks for suppression and basic permissions. It is quick, especially when campaign windows are tight. Option two is governed activation through a central layer such as DNA, where consent state, identity mapping and activation rules are validated before audience release. It is slower to set up, but cleaner to defend when someone asks what happened three weeks later.

I liked the first option, but the evidence favoured the second once the numbers landed. Not because governance is fashionable, but because rework is expensive. In fragmented estates, one broken field mapping or one stale consent flag can stall a launch by days, then pull analysts, media owners and CRM managers into a forensic exercise no one budgeted for.

Comparative view

As it stands, the market is moving in a way that rewards evidence over raw orchestration speed. The Information Commissioner’s Office has not softened its position on accountability, lawful basis and purpose limitation for direct marketing and profiling. At the same time, large social and ad platforms keep making activation easier at the interface level. Those two movements are not aligned, and that tension is worth a closer look.

| Decision factor | Platform-led workflow | Governed workflow via DNA |
|---|---|---|
| Time to first audience | Usually faster, often same day | Slower initially while mappings and approvals are set |
| Consent proof | Often partial, scattered across tools | Central record of status, source and use case |
| Activation lineage | Weak when fields are transformed downstream | Traceable from source signal to audience release |
| Change control | Dependent on local platform habits | Versioned rules and clearer ownership |
| Commercial risk | Higher rework and approval delays | Higher setup effort, lower repeat friction |

There is a temptation to frame this as bureaucracy versus growth. I don’t buy that. Growth claims without baseline evidence should be parked until the data catches up. The better frame is margin and confidence. Holograph’s own precedents show what happens when systems are structured for repeatability rather than improvisation. For Google Pixel, a modular production approach deployed 812 assets while reducing cost per asset by 23.5%. Different channel, same operating lesson: when the system is clear, output scales without each release becoming a fresh negotiation.

A useful tangent here: teams often say, “We already have consent in the CRM.” Fine, but that answers only one part of the question. It does not show whether a segment rule changed after export, whether identity resolution merged records across channels, or whether the paid social audience still matches the approved definition by the time it goes live on a Friday afternoon.
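
One way to answer the last of those questions is to fingerprint the approved segment definition at sign-off and compare it with what is actually deployed. The sketch below is a minimal illustration, assuming definitions can be exported as plain dictionaries of include and exclude rules; the function names and rule labels are hypothetical and do not describe how DNA or any ad platform stores segments.

```python
import hashlib
import json


def definition_fingerprint(definition: dict) -> str:
    """Return a SHA-256 fingerprint of a segment definition.

    Dictionary keys are sorted before hashing, so key order does not matter.
    List order is still significant, so rule lists should be stored sorted.
    """
    canonical = json.dumps(definition, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def has_drifted(approved: dict, deployed: dict) -> bool:
    """True if the deployed definition no longer matches the approved one."""
    return definition_fingerprint(approved) != definition_fingerprint(deployed)


# Hypothetical example: an exclusion rule was lost between CRM and paid social.
approved = {
    "include": ["purchased_last_90d"],
    "exclude": ["complaint_flag", "email_opt_out"],
}
deployed = {
    "include": ["purchased_last_90d"],
    "exclude": ["email_opt_out"],
}

print(has_drifted(approved, deployed))        # True
print(has_drifted(approved, dict(approved)))  # False
```

Storing one short string at approval time and recomputing it at release keeps the check cheap. A mismatch is a prompt to find out what changed, not proof of what went wrong.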

Operational impacts

The biggest operational benefit of a stronger customer data operating model is not abstract compliance comfort. It is fewer hidden hand-offs. One plan looked strong on paper until a single dependency moved; we re-ordered the sequence and regained momentum. That sort of recovery is only possible when ownership is visible. If suppression logic sits in one tool, consent state in another, and channel mappings in a spreadsheet sent over Slack, the audience may still launch, but nobody should be especially proud of it.

There are at least three constraints teams are dealing with in 2025. First, identity fragmentation is getting worse, not better, as support, ecommerce, CRM and paid media all generate usable signals. Second, approval windows are shorter. Third, platforms encourage direct activation before data teams have time to interrogate provenance. To be fair, the commercial pressure is real. Missing a retail promotion window or seasonal burst can cost more than a tidy process ever will.

Still, the cost of weak lineage shows up quickly. A mismatched segment definition can trigger channel underdelivery, duplicated spend or an awkward customer experience in which someone who opted out of email still appears in a paid audience. The practical answer is consent-aware segmentation that treats permission, purpose and recency as live inputs, not a one-off filter at audience creation. That means documenting source system, transformation step, last refresh timestamp and release approval for each audience that matters.
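
Treating permission, purpose and recency as live inputs can be sketched as a filter that is re-run at every release rather than once at creation. This is an illustration under stated assumptions, not DNA's implementation: the `ConsentRecord` shape, the `"paid_social"` purpose label and the 365-day recency window are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ConsentRecord:
    customer_id: str
    purpose: str          # hypothetical purpose label, e.g. "paid_social"
    granted: bool
    updated_at: datetime  # when this status was last set or confirmed


def eligible_ids(
    records: list[ConsentRecord],
    purpose: str,
    max_age: timedelta,
    now: datetime,
    suppressed: set[str],
) -> set[str]:
    """Return customer IDs that may be released for `purpose` at time `now`.

    Only the most recent record per customer for this purpose counts, so a
    later withdrawal overrides an earlier grant. That record must grant
    consent and be no older than `max_age`, and the customer must not appear
    in the cross-channel suppression set.
    """
    latest: dict[str, ConsentRecord] = {}
    for rec in records:
        if rec.purpose != purpose:
            continue
        current = latest.get(rec.customer_id)
        if current is None or rec.updated_at > current.updated_at:
            latest[rec.customer_id] = rec

    return {
        cid
        for cid, rec in latest.items()
        if rec.granted and now - rec.updated_at <= max_age and cid not in suppressed
    }


now = datetime(2025, 6, 1, tzinfo=timezone.utc)
records = [
    ConsentRecord("c1", "paid_social", True, now - timedelta(days=10)),
    ConsentRecord("c2", "paid_social", True, now - timedelta(days=400)),  # stale
    ConsentRecord("c3", "paid_social", True, now - timedelta(days=30)),
    ConsentRecord("c3", "paid_social", False, now - timedelta(days=5)),   # withdrew later
    ConsentRecord("c4", "paid_social", True, now - timedelta(days=3)),
]
suppressed = {"c4"}  # e.g. opted out of email, so excluded from paid audiences too

print(sorted(eligible_ids(records, "paid_social", timedelta(days=365), now, suppressed)))
# ['c1']
```

Because the filter takes `now` as an argument, the same audience can shrink between creation and release as consent goes stale or is withdrawn, which is exactly the case a one-off filter misses.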

According to the Office for National Statistics, UK personal well-being datasets continue to track anxiety as a measurable national indicator. I am not smuggling in a wellness detour here. I’m making a smaller point. Operational ambiguity creates friction inside teams, and friction lengthens campaign cycles. The market movement is towards tighter proof, not looser judgement. Teams that reduce uncertainty at release tend to move faster over a quarter, even if the first setup week feels slower.

Recommendation and next step

The recommendation is to adopt a governed release model before expanding social automation any further. Specifically, set a minimum evidence threshold for every audience pushed live: documented consent status, named source systems, versioned segment logic, suppression rules, destination mapping and a clear owner. If one of those fields is missing, the audience does not go. Slightly unfashionable, perhaps, but useful.
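
The evidence threshold is simple enough to express as a release gate. The sketch below assumes each audience is described by a hypothetical `AudienceRelease` record whose fields mirror the six items listed in the recommendation; the field names and example values are illustrative, not a DNA schema.

```python
from dataclasses import dataclass, field
from typing import Optional

# The six evidence fields named in the recommendation.
REQUIRED_EVIDENCE = (
    "consent_status",
    "source_systems",
    "segment_version",
    "suppression_rules",
    "destination_mapping",
    "owner",
)


@dataclass
class AudienceRelease:
    name: str
    consent_status: Optional[str] = None
    source_systems: list[str] = field(default_factory=list)
    segment_version: Optional[str] = None
    suppression_rules: list[str] = field(default_factory=list)
    destination_mapping: Optional[str] = None
    owner: Optional[str] = None


def missing_evidence(release: AudienceRelease) -> list[str]:
    """Return required fields that are unset or empty, in a fixed order."""
    return [name for name in REQUIRED_EVIDENCE if not getattr(release, name)]


def can_release(release: AudienceRelease) -> bool:
    """If any evidence field is missing, the audience does not go."""
    return not missing_evidence(release)


draft = AudienceRelease(
    name="lapsed_customers_paid_social",
    consent_status="granted",
    source_systems=["crm", "ecommerce"],
    segment_version="v3",
    suppression_rules=["email_opt_out"],
    owner="crm-team",
)

print(missing_evidence(draft))  # ['destination_mapping']
print(can_release(draft))       # False
```

Returning the list of gaps, rather than a bare yes or no, gives the campaign owner something concrete to fix before the next attempt.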

For most organisations, the best next move is not a giant rebuild. Start with the highest-risk, highest-frequency audiences, usually CRM retargeting, lapsed customer pools and paid social lookalike seeds. Map the lineage from source to destination, then note where status is inferred rather than proved. That exercise tends to expose the actual bottleneck within days. Once that is visible, DNA can act as the operational hub that joins fragmented signals into governed audiences with usable activation flows, rather than leaving each platform team to invent its own local logic.
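
The lineage-mapping exercise can start as nothing more than an ordered list of hops, each marked by whether consent status is proved or merely inferred at that point. The sketch below is a hypothetical illustration: the `LineageHop` structure, system names and the `"proved"`/`"inferred"` labels are assumptions for the example, not part of DNA.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LineageHop:
    system: str    # where the audience sits at this step (hypothetical names)
    action: str    # what happens to it here
    evidence: str  # "proved" if a consent record travels with it, else "inferred"


def describe(hops: list[LineageHop]) -> str:
    """Render the route from source to destination as a single line."""
    return " -> ".join(hop.system for hop in hops)


def inferred_hops(hops: list[LineageHop]) -> list[tuple[int, str]]:
    """List (position, system) for every hop not marked as proved."""
    return [(i, hop.system) for i, hop in enumerate(hops) if hop.evidence != "proved"]


lineage = [
    LineageHop("crm", "export lapsed customers", "proved"),
    LineageHop("spreadsheet", "map fields for paid social", "inferred"),
    LineageHop("paid_social", "upload custom audience", "inferred"),
]

print(describe(lineage))       # crm -> spreadsheet -> paid_social
print(inferred_hops(lineage))  # [(1, 'spreadsheet'), (2, 'paid_social')]
```

The first inferred hop is usually the place to look: in this example, consent status stops being evidenced the moment the audience leaves the CRM.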

There is one unresolved tension, and it is real. The stricter your release controls, the more likely a campaign owner is to say the process is slowing growth. Sometimes, for one week, they will be right. But if your team is spending late Thursday rebuilding an audience because no one can evidence where a suppression broke, the current model is already slow, just in a less honest way. The trade-off is clear: a bit more setup prevents a lot of rework. For social automation that is both fast and reliable, evidence has to lead.

If this is on your roadmap, DNA can help you run a controlled pilot, measure the outcome, and scale only when the evidence is clear.