
Slowness in content operations rarely stems from writing itself. Triage, routing, and approvals consume the real time.

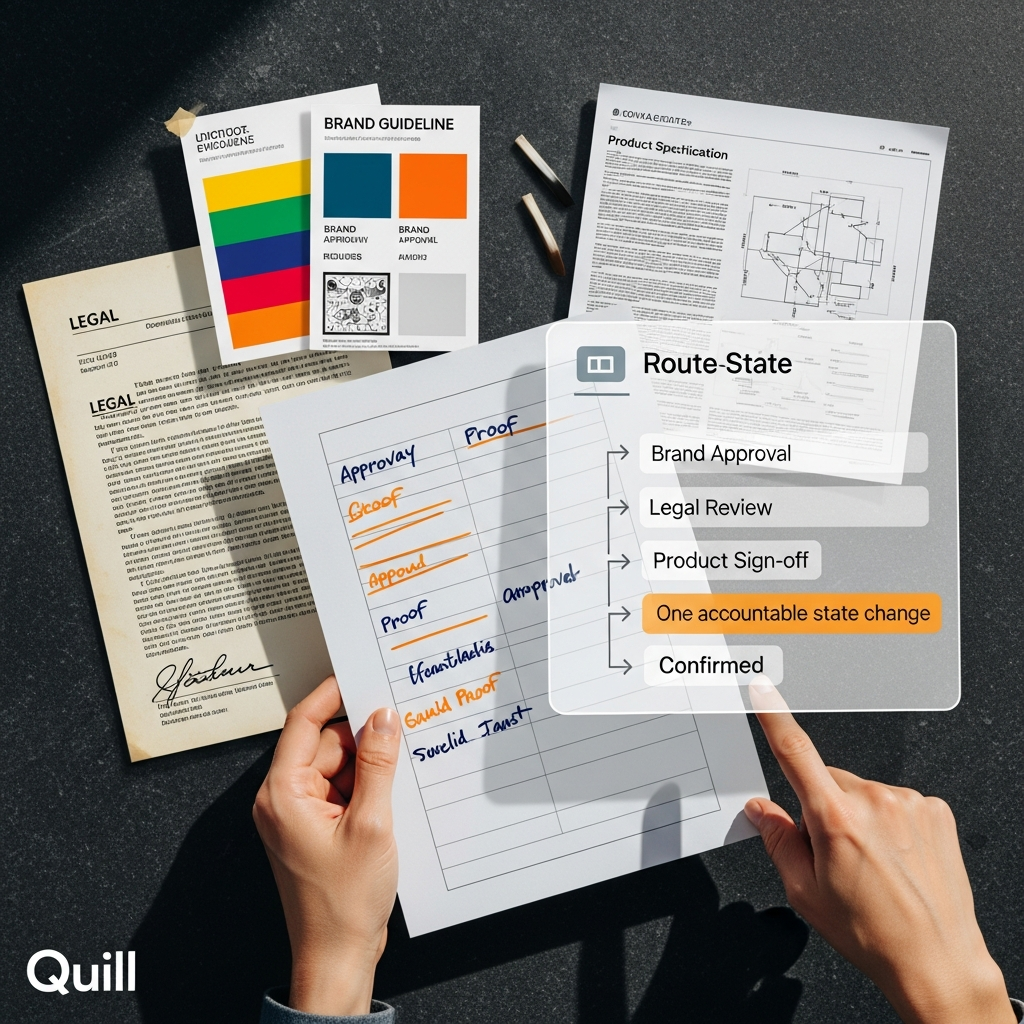

Editorial workflow automation tackles the core delay. This is not shiny copy generation or another dashboard. Proper routing rules and review gates cut mean time to resolution (MTTR), reduce duplicate drafting and clarify publishing decisions. You exchange some improvisation for predictable throughput and cleaner failure recovery.

Decision context

The choice is rarely manual versus automated in the abstract. It hinges on whether your workflow explains why a piece waits, who owns the next step and what happens on failure. Without that, you have a queue with good manners, not a workflow.

Delays typically start at triage, not drafting. Briefs arrive half-scoped, risk levels are implied, and review becomes a shared inbox problem. The result is duplicate drafts, stalled approvals and rising MTTR on ordinary work.

A signal-led publishing workflow with explicit routing rules performs better. High-confidence, low-risk work moves quickly. Sensitive items like financial claims or image rights trigger named review gates. This feels restrictive initially but creates a defensible path from signal to published asset.
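
As a rough illustration of what explicit routing rules could look like, here is a minimal Python sketch. The field names, the 0.8 confidence threshold, the risk flags and the gate names are all assumptions made for the example; they do not describe Quill's actual configuration.

```python
from dataclasses import dataclass, field

# Hypothetical risk flags mapped to the named review gate each one triggers.
REVIEW_GATES = {
    "financial_claim": "finance_review",
    "image_rights": "rights_review",
}

# Assumed threshold: below this, a person triages the signal before drafting.
CONFIDENCE_THRESHOLD = 0.8


@dataclass
class Signal:
    title: str
    confidence: float  # 0.0 to 1.0: how sure we are of the underlying facts
    risk_flags: set = field(default_factory=set)


def route(signal: Signal) -> list:
    """Return the ordered steps a signal must pass through."""
    unknown_flags = signal.risk_flags - set(REVIEW_GATES)
    if unknown_flags or signal.confidence < CONFIDENCE_THRESHOLD:
        # Unrecognised risk or low confidence: hold for human triage.
        return ["human_triage"]
    gates = sorted(REVIEW_GATES[flag] for flag in signal.risk_flags)
    # No gates means the fast lane; otherwise stop at each named gate.
    return ["draft"] + gates + ["publish"]
```

With these assumed rules, `route(Signal("Product update", 0.95))` takes the fast lane of draft then publish, while the same signal carrying a `financial_claim` flag must stop at `finance_review` first. The point is that the path is decided, and explainable, before anyone starts drafting.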

Platforms that talk about speed without showing failure logic do not deserve your budget. That is not anti-automation; it is the minimum standard for buying it responsibly.

Options and trade-offs

Three broad options perform differently.

| Approach | Typical approval MTTR | Set-up effort | Operational trade-off |
|---|---|---|---|
| Manual routing | About 48 hours | Low | Flexible at first, but hard to track, hard to recover and prone to duplicate work |
| Basic automation | About 24 hours | Medium | Faster handling for routine work, but weak governance if rules are thin |
| Governed automation | About 12 hours | High | More configuration up front, but clearer ownership, lower rework and better recovery |

Manual routing is cheap and flexible, but ambiguity grows under pressure. When a time-sensitive piece lands, 'we'll know who signs this off when we get there' is no process.

Basic automation improves queue handling yet often lacks governance. Items move faster, but the system may still miss clear review thresholds or escalation rules. Waiting time drops while accountability uncertainty persists.

Governed automation requires more design but runs better. Routing rules classify work before drafting. Review gates attach to content risk, not habit. Editorial memory reduces repetition with scoped context. The trade-off is implementation discipline now for fewer avoidable arguments later.

Lighter AI models help with draft structure and throughput on routine formats. They do not remove the need for human approval automation. Sensitive claims still need a named owner; image use still needs rights checks. Automation without measurable uplift is theatre, not strategy.

Where MTTR actually moves

MTTR improves when teams reduce uncertainty, not just accelerate drafting.

In weak workflows, a draft can be produced quickly and still sit idle because the next reviewer, risk class or acceptance standard is undefined. Elapsed time gets blamed on 'content demand' when decision design is the issue. A governed workflow routes work by urgency, confidence and consequence before anyone polishes adjectives.

Signal triage deserves more attention. A high-confidence product update with known facts should not travel the same path as a regulated claim or rights-sensitive image package. Treating unlike work as identical creates false fairness and genuine delay.

Quill works as an operational system, not a writing toy. Teams can link plan, create and publish in one governed flow, connecting opportunity identification, drafting and approval. That means fewer hand-offs and a cleaner evidence trail. The trade-off is agreeing routing logic up front, a conversation that often reveals the real bottleneck.

Governed models can bring approval MTTR down from roughly 48 hours to nearer 12, especially where routing, memory scope and review discipline are implemented together. The system stops losing time in the same dull places.
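
To check whether a move like that actually shows up in your own numbers, approval MTTR can be computed from submission and approval timestamps. The sketch below is one simple way to do it; the function names and the input shape (pairs of `datetime` values) are assumptions for illustration.

```python
from datetime import datetime
from statistics import mean


def approval_mttr_hours(items):
    """Mean hours between submission and approval.

    `items` is an iterable of (submitted, approved) datetime pairs.
    """
    durations = [
        (approved - submitted).total_seconds() / 3600
        for submitted, approved in items
    ]
    return mean(durations)


def percent_reduction(before_hours, after_hours):
    """Relative drop in MTTR as a percentage of the starting value."""
    return (before_hours - after_hours) / before_hours * 100


# Illustrative data only: two items approved after 10 and 14 hours.
sample = [
    (datetime(2024, 1, 1, 9, 0), datetime(2024, 1, 1, 19, 0)),
    (datetime(2024, 1, 2, 8, 0), datetime(2024, 1, 2, 22, 0)),
]
mttr = approval_mttr_hours(sample)
```

Using the rough figures quoted above, `percent_reduction(48, 12)` works out to a 75% reduction, and `percent_reduction(48, 24)` to 50%. A mean hides outliers, so it is worth looking at the slowest items alongside it.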

Risk and mitigation

Automation scales mistakes, but manual systems hide mistakes until they are expensive.

Poorly designed routing rules push wrong work down the fast lane. Weak review gates let risky claims inherit routine update paths. Unscoped memory drags stale assumptions into new drafts. Each failure is fixable only if the workflow makes the decision path visible.

Mitigate with this model:

- Route by confidence and consequence, not just content type.

- Keep named human sign-off for regulated, reputational or rights-sensitive work.

- Use scoped memory so prior approvals support drafting without leaking irrelevant context.

- Maintain manual fallback paths for exceptions, outages and disputed claims.
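
Two of the points above, scoped memory and manual fallback, can be sketched in a few lines of Python. Everything here is hypothetical: the `Approval` fields, the 180-day freshness window and the step names are assumptions chosen for the example, not a description of how Quill implements either feature.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Approval:
    topic: str
    content_class: str
    approved_on: date
    note: str


def scoped_memory(approvals, topic, content_class, today, max_age_days=180):
    """Keep only prior approvals that match the scope and are still fresh."""
    cutoff = today - timedelta(days=max_age_days)
    return [
        a for a in approvals
        if a.topic == topic
        and a.content_class == content_class
        and a.approved_on >= cutoff
    ]


def next_step(context, router_available=True, disputed=False):
    """Fall back to a manual queue on outages or disputed claims."""
    if not router_available or disputed:
        return "manual_queue"
    # With scoped context, drafting can start; without it, a person triages.
    return "auto_draft" if context else "human_triage"
```

The filter is deliberately strict: an approval for a different content class, or one older than the window, never reaches the draft. That is what stops stale assumptions leaking into new work.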

Failure recovery is part of workflow design, not an embarrassing afterthought. If a system cannot fail cleanly, it is overcomplicated.

I still don't fully understand why some routing heuristics work better for one content class than another, but operational evidence shows that when high-confidence signals are drafted automatically and low-confidence items are held for human triage, duplicate work drops and approval conversations shorten. Teams using Quill's scoped memory achieve a significant drop in duplicate drafts. The system stops asking three people to rediscover the same answer.

Recommended path

Between ad hoc queue management and fully governed editorial workflow automation, choose a hybrid model with strict review design. Automate classification, routing, context assembly and routine work movement. Use people for judgement, accountability and explanation.

That means four decisions. Define routing rules before rollout. Separate low-risk repeatable work from high-risk exception work. Attach review gates to risk thresholds, not seniority theatre. Build an integrated plan-create-publish workflow so the team can trace how a signal became a published asset.
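
Tracing how a signal became a published asset mostly means recording each step with its owner and its reason. A minimal sketch, assuming a simple in-memory trail; the class names, fields and example owners are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class TrailEntry:
    step: str
    owner: str
    reason: str


@dataclass
class Asset:
    signal_id: str
    trail: list = field(default_factory=list)

    def record(self, step, owner, reason):
        """Append one decision to the asset's evidence trail."""
        self.trail.append(TrailEntry(step, owner, reason))

    def explain(self):
        """Return the decision path as readable lines, oldest first."""
        return [f"{e.step}: {e.owner} ({e.reason})" for e in self.trail]


asset = Asset("sig-001")
asset.record("routed", "router", "high confidence, low risk")
asset.record("approved", "managing editor", "routine update gate")
asset.record("published", "cms", "approval recorded")
```

Calling `asset.explain()` then returns the path in order, which is the evidence you want when someone asks why a piece waited or who signed it off.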

Quill fits that model because it is built around governed publishing, not vague AI assistance. It supports signal-led publishing workflow design, persona-guided drafting, scoped editorial memory and human approval automation in one operational frame. Holograph handles implementation where ownership matters, keeping technical design close to editorial reality.

Content operations improve when decisions become legible. Once routing rules, review gates and fallback paths are explicit, MTTR becomes manageable rather than mysterious. That's a much better place to run a team from.

If you're weighing how to tighten approvals without making the workflow brittle, Quill is worth a proper look. We can help map the routing logic, review thresholds and recovery paths that fit your operation, then build it in a way your team can live with. If that sounds like the next sensible step, have a word with us about Quill.

If this is on your roadmap, Quill can help you run a controlled pilot, measure the outcome, and scale only when the evidence is clear.