
Running a reward campaign is one thing. Proving, six months later, that every payout, message and suppression decision was controlled is another. In regulated environments, the work is not finished when the campaign goes live; it is finished when the evidence pack stands up on its own, without a two-day hunt through inboxes and chat threads.

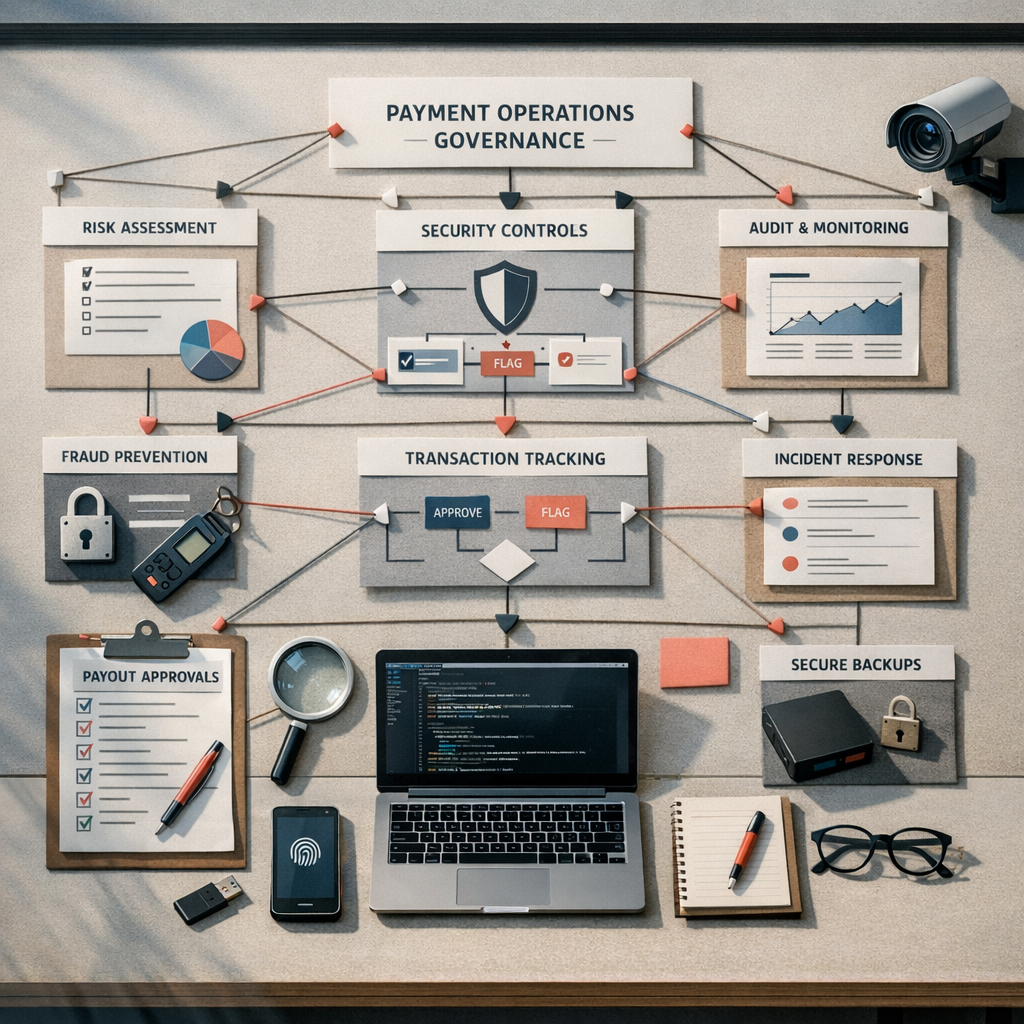

This delivery assurance note sets out a practical framework for UK data governance teams working across Payment Services, CRM, compliance and operations. The aim is simple: make scope, owners, dates and acceptance criteria explicit, log what changed and when, and leave behind a case study an auditor can actually follow.

Quick context

I once sat in a review where one question, “Can you show me the sign-off for the prize draw terms?”, sent three teams into a scramble. The approval did turn up, buried in an old email chain, but confidence took the hit. That is the real issue in regulated reward campaigns: not always a lack of process, more often a lack of a single, timestamped narrative.

Retrofitting that narrative later does not work. If payout approvals sit in finance, consent records sit in the CRM, and messaging changes sit in Slack, you have fragments, not proof. A plan without named owners and dates is not a plan; fix that first. In one Q3 2025 review, a payout batch was generated from an out-of-date customer list because the suppression check was manual and got skipped. The investigation burned a week of sprint capacity and exposed a very ordinary failure mode: the control existed, but ownership and evidence did not.

For UK teams, that matters beyond internal housekeeping. ONS wellbeing datasets track anxiety, trust-related sentiment and local variation over time, while weekly mortality datasets show how sharply conditions can shift by region and period. Different dataset, different use case, obviously, but the operational lesson is useful: context changes, local conditions matter, and time-stamped records beat assumptions every time. If your campaign touches vulnerable audiences, regional payments, or sensitive communications, your control design needs the same discipline.

Step-by-step approach

You do not need a shiny new platform to build a defensible evidence trail. You need a method that covers pre-launch design, in-flight logging and post-campaign reconciliation, with one source of truth and no mystery gaps.

Phase 1: scoping and design

This is where most future pain is either prevented or booked in. Before launch, define the campaign mechanic, the approval path, the payout route and the messaging controls. Between 09:30 and 10:15 yesterday, I rewrote the acceptance criteria for story PAY-341 after legal blocked the terms page on the winner-selection method. A quick call with Sarah in Compliance cleared it: if the winner is chosen at random, call it a prize draw, document the script used, log the execution time and store the output. Tests passed once the duplicate-entry edge case was covered.
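To make “document the script, log the execution time, store the output” concrete, here is a minimal Python sketch of a reproducible draw. It is not the approved method for any real campaign. It assumes entries arrive as records with an `entry_id` and an `email`, that duplicates are defined by normalised email address, and it swaps in one possible technique: ranking entries by a SHA-256 hash of a seed recorded at draw time, so an auditor can re-run the draw and get the same winners. The function names, the `sha256-rank-v1` label and the sample data are hypothetical.

```python
import hashlib
import json
import secrets
from datetime import datetime, timezone

def dedupe_entries(entries):
    """Keep the first entry per normalised email (assumed duplicate rule)."""
    seen = set()
    unique = []
    for entry in entries:
        key = entry["email"].strip().lower()
        if key not in seen:
            seen.add(key)
            unique.append(entry)
    return unique

def draw_winners(entries, num_winners, seed):
    """Rank unique entries by SHA-256(seed:entry_id) and take the lowest hashes.

    The same seed and entry list always produce the same winners,
    so the draw can be reproduced from the stored record.
    """
    unique = dedupe_entries(entries)
    ranked = sorted(
        unique,
        key=lambda e: hashlib.sha256(f"{seed}:{e['entry_id']}".encode("utf-8")).hexdigest(),
    )
    return {
        "method": "sha256-rank-v1",  # hypothetical method label
        "seed": seed,
        "executed_at": datetime.now(timezone.utc).isoformat(),
        "entries_received": len(entries),
        "entries_after_dedupe": len(unique),
        "winners": [e["entry_id"] for e in ranked[:num_winners]],
    }

if __name__ == "__main__":
    sample_entries = [
        {"entry_id": "E1", "email": "a@example.com"},
        {"entry_id": "E2", "email": "B@example.com"},
        {"entry_id": "E3", "email": "a@example.com "},  # duplicate of E1
        {"entry_id": "E4", "email": "c@example.com"},
    ]
    seed = secrets.token_hex(16)  # generate once at the draw, then record it
    record = draw_winners(sample_entries, num_winners=2, seed=seed)
    print(json.dumps(record, indent=2))  # store this output in the evidence repository
```

The hash-based ranking is a deliberate choice: Python's `random` module only guarantees that `random()` repeats for a given seed across versions, whereas this ranking depends only on the seed and the entry IDs. The seed itself should be recorded and witnessed at the draw.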

- Define the campaign mechanic: prize draw if the winner is chosen at random; competition if entries are judged. Owner: Marketing Lead. Date: campaign kick-off. Acceptance criteria: mechanic, winner method, judging or randomisation process and sign-off route documented in the brief and T&Cs.

- Map the data flow: show where entry data is collected, where consent is stored, how suppression is applied, which system creates the payout file, and where messaging logs sit. Owner: Data Architect. Date: Sprint 1 planning. Acceptance criteria: version-controlled diagram approved by Compliance and Engineering.

- Lock legal artefacts: T&Cs, privacy notice, consent copy and customer message templates should be versioned before launch. Owner: Legal Counsel. Date: two working days before go-live. Acceptance criteria: approved live URLs, screenshots and document versions archived.

Two checkpoints matter here. First, every control must have a named owner. Second, every control must have evidence you can point to on a specific date. If either is missing, the control is not green yet.
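The two checkpoints translate naturally into a check you can run over a control register before each status report. This is a minimal sketch assuming the register is held as simple records; the `Control` fields, the evidence reference format and the sample names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Tuple

@dataclass
class Control:
    name: str
    owner: Optional[str]          # a named person, not a team mailbox
    evidence_ref: Optional[str]   # hypothetical pointer into the evidence repository
    evidence_date: Optional[date]

def not_green(controls: List[Control]) -> List[Tuple[str, str]]:
    """List every control that lacks a named owner or dated evidence."""
    gaps = []
    for control in controls:
        if not (control.owner and control.owner.strip()):
            gaps.append((control.name, "no named owner"))
        if not control.evidence_ref or control.evidence_date is None:
            gaps.append((control.name, "no dated evidence"))
    return gaps

if __name__ == "__main__":
    register = [
        Control("Payout batch approval", "A. Example", "EV-014", date(2026, 1, 24)),
        Control("Suppression check", "  ", None, None),
    ]
    for name, reason in not_green(register):
        print(f"NOT GREEN: {name}: {reason}")
```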

Phase 2: execution and logging

Once live, the discipline shifts from design to proof. Every material action should be logged by the person who did it, close to the event, not reconstructed at the end. Confluence, Jira, SharePoint or a controlled spreadsheet: use whatever works, but keep the log central, searchable and locked against quiet edits.
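“Locked against quiet edits” is usually a platform setting, but the underlying idea can be sketched in a few lines. The example below is an illustration under stated assumptions, not a recommended product: it chains each log entry to the previous one with SHA-256, so any later edit to an earlier entry breaks verification. It detects tampering rather than preventing it, and someone could still rebuild the whole chain unless the latest hash is also stored somewhere they cannot reach. Field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64
FIELDS = ("actor", "action", "detail", "logged_at")

def _hash_entry(prev_hash, body):
    # sort_keys gives a stable serialisation, so an unchanged entry always hashes the same way
    payload = prev_hash + json.dumps(body, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append_event(log, actor, action, detail):
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {
        "actor": actor,
        "action": action,
        "detail": detail,
        "logged_at": datetime.now(timezone.utc).isoformat(),
    }
    log.append({**body, "prev_hash": prev_hash, "hash": _hash_entry(prev_hash, body)})
    return log[-1]

def first_broken_entry(log):
    """Return the index of the first entry that fails verification, or None if intact."""
    prev_hash = GENESIS_HASH
    for index, entry in enumerate(log):
        body = {field: entry[field] for field in FIELDS}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _hash_entry(prev_hash, body):
            return index
        prev_hash = entry["hash"]
    return None

if __name__ == "__main__":
    log = []
    append_event(log, "Finance Ops Lead", "approve_batch", {"file": "batch_01.csv"})
    append_event(log, "CRM Manager", "suppression_run", {"rule_version": "v3"})
    print(first_broken_entry(log))  # None: chain intact
    log[0]["detail"]["file"] = "batch_02.csv"  # a quiet edit
    print(first_broken_entry(log))  # 0: the edited entry no longer matches its hash
```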

For a January 2026 FMCG promotion, we logged each payout batch with four mandatory fields as a minimum: file name, batch date, recipient count and total value. We also recorded the operator name, approval reference and exception count. When a participant queried a missing payment, the team traced their record to the batch sent on 24 January in under five minutes. That is what good evidence looks like: not clever, just complete.
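A batch log like this is easiest to trust when the counts and totals are derived from the payout file rather than typed in by hand. The sketch below assumes each payout row is a dict with a `customer_id` and a string `amount`; the record layout mirrors the fields listed above, but the function names, file name and sample values are hypothetical. Amounts use `Decimal` to avoid floating-point rounding in money totals.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal

@dataclass(frozen=True)
class PayoutBatchRecord:
    # the four mandatory fields
    file_name: str
    batch_date: date
    recipient_count: int
    total_value: Decimal
    # additional fields recorded alongside them
    operator: str
    approval_ref: str
    exception_count: int = 0

def log_batch(file_name, batch_date, rows, operator, approval_ref, exception_count=0):
    """Build the batch record from the payout rows, so counts and totals are never retyped."""
    if not approval_ref:
        raise ValueError(f"{file_name}: no approval reference, cannot log batch for release")
    total = sum((Decimal(row["amount"]) for row in rows), Decimal("0"))
    return PayoutBatchRecord(file_name, batch_date, len(rows), total,
                             operator, approval_ref, exception_count)

def trace_customer(batches, customer_id):
    """Return (file_name, batch_date) for every logged batch containing the customer."""
    return [
        (record.file_name, record.batch_date)
        for record, rows in batches
        if any(row["customer_id"] == customer_id for row in rows)
    ]

if __name__ == "__main__":
    rows = [{"customer_id": "C100", "amount": "25.00"},
            {"customer_id": "C101", "amount": "10.50"}]
    record = log_batch("payout_batch_07.csv", date(2026, 1, 24), rows,
                       operator="Operator A", approval_ref="APR-0007")
    print(record.recipient_count, record.total_value)  # 2 35.50
    print(trace_customer([(record, rows)], "C101"))
```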

The same applies to messaging controls. If a winner email was updated after legal review, capture who changed it, when, why, and which version went live. If a suppression rule blocked a send, keep the rule version, threshold and sample result. For consent compliance operations, do not settle for “system says no”. Keep the decision artefact.
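Here is one way a suppression run could emit its own decision artefact. It is a sketch under loud assumptions: recipients are a dict of customer ID to the date consent was last confirmed, and the “threshold” is modelled as a hypothetical maximum consent age in days. Your actual rule, threshold and data shape will differ; the point is that the rule version, threshold, counts and a sample of blocked records are captured at run time.

```python
from datetime import date, datetime, timezone

def run_suppression(recipients, suppression_list, rule_version,
                    max_consent_age_days, today, sample_size=5):
    """Split recipients into allowed and blocked, and return an evidence artefact.

    recipients: dict of customer_id -> date consent was last confirmed (hypothetical shape).
    A recipient is blocked if on the suppression list, or if consent is older
    than the (hypothetical) max_consent_age_days threshold.
    """
    suppressed = set(suppression_list)
    allowed, blocked = [], []
    for customer_id, consent_date in recipients.items():
        if customer_id in suppressed:
            blocked.append({"customer_id": customer_id, "reason": "on suppression list"})
        elif (today - consent_date).days > max_consent_age_days:
            blocked.append({"customer_id": customer_id, "reason": "consent older than threshold"})
        else:
            allowed.append(customer_id)
    artefact = {
        "rule_version": rule_version,
        "threshold_days": max_consent_age_days,
        "run_at": datetime.now(timezone.utc).isoformat(),
        "input_count": len(recipients),
        "allowed_count": len(allowed),
        "blocked_count": len(blocked),
        "blocked_sample": blocked[:sample_size],
    }
    return allowed, artefact

if __name__ == "__main__":
    recipients = {"C1": date(2026, 1, 10), "C2": date(2024, 1, 1), "C3": date(2026, 1, 12)}
    allowed, artefact = run_suppression(recipients, {"C3"}, "supp-rule-v3",
                                        max_consent_age_days=365, today=date(2026, 1, 20))
    print(allowed)   # ['C1']
    print(artefact)  # store alongside the send or payout export
```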

| Control point | Owner | Date or trigger | Evidence required | Operational measure |
|---|---|---|---|---|
| Payout batch approval | Finance Ops Lead | Before each batch release | Approved batch file, approver name, timestamp, total payout value | 100% of batches logged before release |
| Suppression check | CRM Manager | Before each send and payout export | Suppression-list version, run log, exception note if any | 0 unapproved suppressed records paid or messaged |
| Winner selection | Lead Developer | At draw or judging event | Script output or judging record, timestamp, witness or approver | 100% reproducible winner record |
| Template change control | Compliance Manager | Any copy update after sign-off | Version history, reason for change, final approved copy | 0 live message changes without approval log |
| Incident handling | Programme Lead | Within 1 working day of issue | Incident note, risk rating, mitigation, revised date | Issue log updated within 24 hours |
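Several deadlines in this framework are expressed in working days (incident notes within one working day, legal sign-off at go-live minus two working days). A small helper keeps those dates consistent. This sketch skips weekends and any holidays you pass in; it deliberately ships no holiday calendar, because UK bank holidays differ between England and Wales, Scotland and Northern Ireland, so supply the list that applies to your team.

```python
from datetime import date, timedelta

def add_working_days(start, n, holidays=frozenset()):
    """Move n working days from start (negative n moves backwards).

    Saturdays, Sundays and any date in `holidays` are skipped.
    """
    step = 1 if n >= 0 else -1
    current = start
    remaining = abs(n)
    while remaining:
        current += timedelta(days=step)
        if current.weekday() < 5 and current not in holidays:
            remaining -= 1
    return current

if __name__ == "__main__":
    incident_raised = date(2026, 1, 23)  # a Friday
    print(add_working_days(incident_raised, 1).isoformat())  # 2026-01-26
    go_live = date(2026, 2, 2)  # a Monday
    print(add_working_days(go_live, -2).isoformat())  # 2026-01-29
```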

Phase 3: reconciliation and reporting

After the campaign ends, do not just zip the folder and hope for the best. Build the case study as a reconciled report: planned controls versus actual controls, expected volumes versus actual volumes, incidents versus mitigations, and any open residual risk.

This is also the right point for a frank admission if the effort estimate was off. I was wrong about one Q4 2025 integration; the data feed was trickier than expected, and we needed more buffer between winner validation and payment release. The updated plan added a 48-hour reconciliation step and a second approval gate before finance release. Bit tight on time, yes, but much better than paying the wrong records and explaining it later.
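The revised plan's two conditions (a 48-hour gap after winner validation and a second approval before finance release) can be expressed as a single release check. This is a minimal sketch assuming approvals are recorded as `(approver_name, role)` pairs and that the second approval must come from a different person; both are assumptions, not details from the original plan.

```python
from datetime import datetime, timedelta, timezone

RECONCILIATION_WINDOW = timedelta(hours=48)

def release_blockers(validated_at, approvals, now):
    """Return the reasons a payout batch cannot yet go to finance release.

    approvals: list of (approver_name, role) tuples (hypothetical shape).
    An empty list means the batch may be released.
    """
    blockers = []
    if now - validated_at < RECONCILIATION_WINDOW:
        blockers.append("48-hour reconciliation window still open")
    distinct_approvers = {name for name, _role in approvals}
    if len(distinct_approvers) < 2:
        blockers.append("second approval from a different person missing")
    return blockers

if __name__ == "__main__":
    validated = datetime(2026, 1, 20, 9, 0, tzinfo=timezone.utc)
    approvals = [("Finance Ops Lead", "finance"), ("Compliance Manager", "compliance")]
    print(release_blockers(validated, approvals, validated + timedelta(hours=30)))
    print(release_blockers(validated, approvals, validated + timedelta(hours=49)))  # []
```

Returning reasons rather than a bare yes or no means the blocked release can be logged with its cause, which is itself evidence.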

Your post-campaign pack should answer, at minimum, these points:

- How many valid entries were received, by cut-off date.

- How winners were selected or judged, with evidence.

- How many payouts were approved, sent, failed and reissued.

- Which messages were sent, on which dates, from which approved templates.

- Which risks materialised, who owned mitigation, and whether the final status is green, amber or still open.

Owner: Programme Manager. Date: within 30 days of campaign close. Acceptance criteria: signed reconciliation by Finance, Marketing and Compliance, with archive location recorded.
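The payout line of the pack (approved, sent, failed and reissued) can be generated from send attempts rather than assembled by hand. The sketch below assumes Finance's approvals are a dict of payout ID to approved amount, and that every send attempt is logged as `(payout_id, outcome)` with outcome `"paid"` or `"failed"`; those shapes and the names are hypothetical. It also flags two breaches worth surfacing: anything sent without approval and anything paid more than once.

```python
from collections import Counter
from decimal import Decimal

def reconcile_payouts(approved, attempts):
    """Summarise payouts for the pack and flag control breaches.

    approved: dict of payout_id -> Decimal amount approved by Finance.
    attempts: list of (payout_id, outcome) in send order; outcome is "paid" or "failed".
    Returns (summary, unpaid payout IDs, breaches).
    """
    attempts_per_payout = Counter(pid for pid, _ in attempts)
    paid_per_payout = Counter(pid for pid, outcome in attempts if outcome == "paid")

    breaches = [f"{pid}: sent without approval" for pid in attempts_per_payout if pid not in approved]
    breaches += [f"{pid}: paid {n} times" for pid, n in paid_per_payout.items() if n > 1]

    unpaid = sorted(pid for pid in approved if paid_per_payout[pid] == 0)
    summary = {
        "approved": len(approved),
        "sent": len(attempts_per_payout),  # payouts with at least one attempt
        "failed": sum(1 for _, outcome in attempts if outcome == "failed"),  # failed attempts
        "reissued": sum(n - 1 for n in attempts_per_payout.values()),  # attempts after the first
        "approved_value": sum(approved.values(), Decimal("0")),
        "paid_value": sum((approved[p] for p in paid_per_payout if p in approved), Decimal("0")),
        "unpaid_value": sum((approved[p] for p in unpaid), Decimal("0")),
    }
    return summary, unpaid, breaches

if __name__ == "__main__":
    approved = {"P1": Decimal("10.00"), "P2": Decimal("20.00"), "P3": Decimal("5.00")}
    attempts = [("P1", "paid"), ("P2", "failed"), ("P2", "paid"), ("P3", "failed")]
    summary, unpaid, breaches = reconcile_payouts(approved, attempts)
    print(summary)
    print(unpaid, breaches)  # ['P3'] []
```

In a clean run, paid value plus unpaid value equals approved value, and the unpaid list is exactly what the pack should show as open.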

Pitfalls to avoid

The common failures are usually dull, which is why they get missed. An unowned suppression check. A payout file exported manually because someone was covering leave. A template tweak made late on Friday without version control. None of that sounds dramatic until audit asks for proof.

Two issues come up repeatedly. The first is vague acceptance criteria. “Winner selection should be fair” is not a test. “The randomisation script must use the approved method, log execution time, exclude duplicate entries, and save output to the evidence repository” is a test. The second is hidden manual work. If a control depends on one person remembering a spreadsheet step, it is a risk, not a safeguard.

Set measurable checks early. For example: payout file error rate below 0.1% per batch; approval logs present for 100% of live template changes; incident notes raised within one working day; archive completeness checked against a 12-item evidence list before closure. If you cannot measure the control, you will struggle to prove it worked.
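Three of those checks are simple enough to automate. This sketch uses exact fractions so that a batch sitting exactly on 0.1% is correctly reported as not below the threshold. The template-change shape (dicts with an optional `approval_ref`) is an assumption, and the 12-item evidence list is not reproduced in this note, so the item names in the example are placeholders.

```python
from fractions import Fraction

MAX_ERROR_RATE = Fraction(1, 1000)  # "below 0.1% per batch"

def batch_error_rate_ok(error_rows, total_rows, max_rate=MAX_ERROR_RATE):
    """True if the batch error rate is strictly below the threshold (exact arithmetic)."""
    if total_rows <= 0:
        raise ValueError("a batch with no rows cannot be scored")
    return Fraction(error_rows, total_rows) < max_rate

def approval_coverage(template_changes):
    """Share of live template changes carrying an approval reference (target: 1)."""
    if not template_changes:
        return Fraction(1)
    approved = sum(1 for change in template_changes if change.get("approval_ref"))
    return Fraction(approved, len(template_changes))

def missing_evidence(required_items, archived_items):
    """Items on the evidence checklist that are not yet in the archive."""
    return sorted(set(required_items) - set(archived_items))

if __name__ == "__main__":
    print(batch_error_rate_ok(1, 2000))  # True: 0.05%
    print(batch_error_rate_ok(2, 2000))  # False: exactly 0.1% is not below 0.1%
    changes = [{"id": "T1", "approval_ref": "APR-1"}, {"id": "T2", "approval_ref": ""}]
    print(approval_coverage(changes))  # 1/2
    required = ["T&Cs v3", "draw output", "suppression run log"]  # placeholder items
    print(missing_evidence(required, ["T&Cs v3"]))
```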

Checklist you can reuse

Use this as a starter. Replace placeholders with actual names and dates before kick-off. If the owner cell is blank, the item is not ready.

| Phase | Checklist item | Owner | Due date | Acceptance criteria |
|---|---|---|---|---|
| Pre-launch | Campaign mechanic confirmed as prize draw or competition | Marketing Lead | Kick-off date | Mechanic documented in brief and T&Cs |
| Pre-launch | Data flow and consent path approved | Data Architect | Sprint 1 planning | Diagram version-controlled and signed off |
| Pre-launch | T&Cs, privacy notice and message templates signed off | Legal Counsel | Go-live minus 2 working days | Approved versions archived with timestamps |
| In-flight | Live page screenshots captured | Campaign Manager | Launch day | Screenshots stored with date and URL |
| In-flight | Suppression check completed before send and payout export | CRM Manager | Before each release | Run log saved, exceptions approved |
| In-flight | Winner selection evidence stored | Lead Developer | Draw date | Output reproducible and timestamped |
| Post-campaign | Payout reconciliation completed | Finance Ops Lead | End date plus 10 working days | Approved, paid, failed and reissued values match |
| Post-campaign | Final evidence pack signed off and archived | Programme Lead | End date plus 30 days | Archive complete against evidence checklist |

Closing guidance

The point of this framework is not paperwork for its own sake. It is to make payout and messaging decisions defensible while the campaign is moving, not after the fact. Good trust architecture in marketing is usually a bit unglamorous: tighter owner fields, cleaner logs, fewer assumptions, more traceability. That is fine. It is how teams stay out of avoidable trouble.

If you are reviewing a regulated reward campaign this quarter, start with one live workflow: payout approval, winner messaging or suppression handling. Name the owner, set the date, define the acceptance criteria and keep the change log tidy. If you want a second pair of eyes on the control design or the evidence pack, contact Holograph. We can help you get the path to green clear before audit asks the awkward question.