Overview

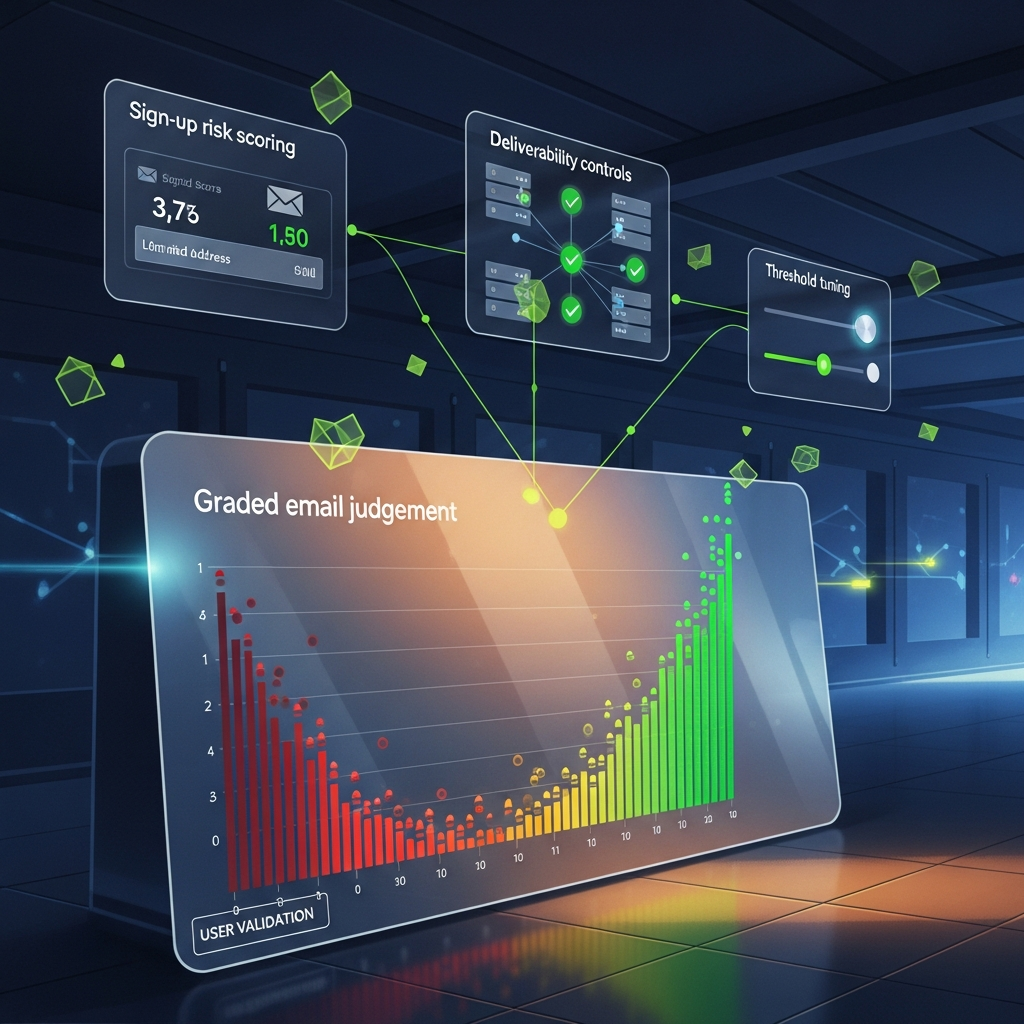

Most teams do not have an email problem. They have a threshold problem. Set the bar too low and poor-quality sign-ups leak into CRM, muddy attribution, and dent deliverability. Set it too high and you quietly choke healthy demand, irritate legitimate users, and create a support queue nobody fancied dealing with.

From the founder side of the desk, the pattern is familiar: a team buys a scoring tool, labels it email judgement, then assumes one threshold can govern every journey. It cannot. A newsletter sign-up, gated content form, free trial and checkout account each have different upside, different abuse pressure and a different tolerance for friction. If a platform cannot explain its decisions, it does not deserve your budget.

Quick context

Last Tuesday, in a chilly office in East Sussex with the kettle working overtime, I reviewed a funnel where acquisition had dipped 14% week on week after a so-called safety improvement. Fancy that. The actual change was simpler than the slide deck suggested: one global email threshold had been tightened across every entry point. Ordinary mobile users with typos, new domains or slightly messy behaviour were pushed into extra verification on low-risk journeys, and plenty of them simply left.

That is the key systems insight. Email trust is not a binary gate. It is a routing layer. Good email judgement should decide what happens next, not merely who gets in and who gets rejected. In practice, that means different trust thresholds for different journeys, with each threshold tied to a commercial objective and a clear treatment.

The logic is not exotic. GOV.UK guidance for driving examiners works from defined fault categories and observed behaviour, not hunches. Your trust model should do much the same: observable signal, defined treatment, auditable threshold. Not vibes.

There is a deliverability trade-off here too. Google and Yahoo sender requirements introduced in 2024 remained the practical baseline for bulk senders through 2025: proper authentication, complaint control and list quality all matter. Let too many low-trust addresses into welcome and nurture flows, and your sender reputation pays the bill later. Add too much friction too early, and acquisition efficiency falls. Both costs are real. The job is to choose them deliberately.

Step-by-step approach

The cleanest way to tune thresholds is to stop asking for the one right score and start designing a decision system. Between 09:00 and 11:30 during a recent audit, I mapped one live journey and found six teams making implicit trust calls: paid media, web ops, CRM, sales ops, support and analytics. That fragmentation is a bit of a faff, and it usually produces threshold drift.

1. Define the cost of false accepts and false rejects for each journey.

A false accept is a risky address you let through. A false reject is a legitimate user you block or burden with extra friction. For a newsletter sign-up, the false reject is often more expensive than teams assume because the acquisition spend is already gone. For a free trial, the false accept may cost more because it consumes product resources, pollutes CRM and wastes support time.

Put numbers against both sides, even if they are rough. If paid social drives trial starts at £18 CPA and 8% of legitimate users abandon after an extra verification step, you have burned an average of £1.44 in acquisition spend per attempted trial before looking at downstream conversion. If junk traffic creates 200 bogus trial sign-ups a month and each costs £3 in infrastructure and service overhead, that is £600 a month in avoidable cost. Once the economics are visible, the threshold conversation improves quickly.
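The arithmetic above is simple enough to keep in a small script per journey. What follows is a minimal sketch, not a costing model: the class and field names are hypothetical, and the figures are the rough ones from the example.

```python
from dataclasses import dataclass


@dataclass
class JourneyCosts:
    """Rough monthly economics for one journey (all field names are illustrative)."""
    cpa_gbp: float             # acquisition cost per attempted sign-up
    legit_abandon_rate: float  # share of legitimate users lost to extra friction
    bogus_per_month: int       # junk sign-ups that get through each month
    cost_per_bogus_gbp: float  # infrastructure and service overhead per junk sign-up

    def false_reject_cost_per_attempt(self) -> float:
        # Expected acquisition spend wasted per attempt because of added friction.
        return self.cpa_gbp * self.legit_abandon_rate

    def false_accept_cost_per_month(self) -> float:
        # Monthly cost of the junk that the current threshold lets through.
        return self.bogus_per_month * self.cost_per_bogus_gbp


trial = JourneyCosts(
    cpa_gbp=18.0,
    legit_abandon_rate=0.08,
    bogus_per_month=200,
    cost_per_bogus_gbp=3.0,
)

print(f"False reject: £{trial.false_reject_cost_per_attempt():.2f} per attempted trial")
print(f"False accept: £{trial.false_accept_cost_per_month():.2f} per month")
```

To compare the two sides like for like, multiply the per-attempt figure by monthly attempt volume. That volume is not given here, so the sketch stops short of it.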

2. Build response bands, not a single pass-fail line.

A practical model usually has four bands:

- Low risk: allow straight through.

- Moderate risk: allow, but suppress from high-value automations until a second signal arrives, such as confirmation or product activity.

- Elevated risk: ask for a lightweight step-up, such as a one-time code.

- High risk: block or route to manual review where the margin justifies it.

This is where email judgement becomes useful rather than theatrical. The score itself is rarely the clever bit. Response design does the heavy lifting.

3. Combine email signal with context signal.

Email-only scoring misses too much. A new domain alone might be harmless. A new domain plus a velocity spike, proxy use and repeated typo variants is another matter. I usually start with five signal families: address syntax, domain age or reputation, mailbox behaviour, session context and intent signal.

You do not need invasive data collection to do this properly. Privacy-preserving architecture is usually enough: event timestamps, source campaign, completion speed, consent state and simple device hints can tell you plenty. Defaulting to more data than you need is sloppy engineering, not sophistication.

4. Test thresholds by source as well as by form.

One of the better fixes I saw in Q4 2025 came from a B2B SaaS funnel where LinkedIn traffic was strong, affiliate traffic was mixed and broad paid search was noisy. Rather than tightening the whole site, the team applied stricter thresholds only to higher-abuse source segments. The result: 11% fewer suspicious sign-ups entering CRM, while trial starts fell by less than 1.5%. That is a trade-off worth shipping.

5. Measure delayed outcomes, not just form completion.

If you optimise only for completion rate, you will let too much through. If you optimise only for purity, you will starve acquisition. Track at least 30-day outcomes by threshold band and source: confirmation rate, first-session activation, hard bounce rate, complaint rate, unsubscribe rate, sales acceptance and revenue contribution where available.

ETBrandEquity's March 2026 reporting on AI in marketing points towards the same operational truth: measurable gains come from disciplined use of data, not magical automation. Quite right too. Automation without measurable uplift is theatre, not strategy.

6. Keep the logic inspectable.

If a provider cannot show which inputs pushed an address into a risk band, challenge them. A black-box score may look tidy in procurement. It is much less charming when growth asks why conversion dropped 9% after rollout and nobody can explain the change. Threshold reviews are operational, not philosophical. People need to know what moved, where, and with what effect.
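To make the four response bands concrete, here is a minimal sketch of journey-specific routing. Everything in it is an assumption for illustration: the weights, the cut-offs, the 0 to 1 risk scale and the function names are hypothetical, not how any particular scoring tool works. It combines the five signal families into one score, routes that score against per-journey cut-offs, and keeps the per-signal contributions visible so a reviewer can see what pushed an address into its band.

```python
from enum import Enum


class Band(Enum):
    LOW = "allow straight through"
    MODERATE = "allow, suppress from high-value automations"
    ELEVATED = "lightweight step-up, e.g. one-time code"
    HIGH = "block or manual review"


# Hypothetical weights for the five signal families; each input is a 0-1 risk value.
WEIGHTS = {
    "syntax": 0.15,
    "domain": 0.25,
    "mailbox": 0.20,
    "session": 0.20,
    "intent": 0.20,
}

# Hypothetical per-journey cut-offs: upper bounds for (low, moderate, elevated).
CUTOFFS = {
    "newsletter": (0.50, 0.75, 0.92),
    "free_trial": (0.30, 0.55, 0.80),
}


def contributions(signals):
    """Weighted contribution of each signal family; missing families count as 0."""
    return {name: weight * signals.get(name, 0.0) for name, weight in WEIGHTS.items()}


def risk_score(signals):
    """Weighted average of the signal families, on the same 0-1 scale."""
    return sum(contributions(signals).values()) / sum(WEIGHTS.values())


def route(score, journey):
    """Map a score to a response band using that journey's own cut-offs."""
    low, moderate, elevated = CUTOFFS[journey]
    if score < low:
        return Band.LOW
    if score < moderate:
        return Band.MODERATE
    if score < elevated:
        return Band.ELEVATED
    return Band.HIGH


signals = {"syntax": 0.1, "domain": 0.9, "mailbox": 0.5, "session": 0.8, "intent": 0.6}
score = risk_score(signals)
parts = contributions(signals)

for journey in CUTOFFS:
    print(journey, "->", route(score, journey).name)
print("largest driver:", max(parts, key=parts.get))
```

With these made-up numbers the same address lands in the moderate band for the newsletter and the elevated band for the free trial, with domain risk as the largest driver. Per-source strictness could be layered on in the same way, for example by keying the cut-offs on journey and source together.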

Pitfalls to avoid

The recurring mistakes are rarely technical. They are governance problems wearing a martech badge.

Using one threshold across the whole estate. It looks neat on paper and creates terrible incentives in practice. Teams end up tuning for the loudest stakeholder, usually the one shouting first when volume dips or spam rises. Separate thresholds by journey value and abuse pressure.

Letting one function own the decision in isolation. CRM sees downstream list quality. Growth sees acquisition cost. Product sees abuse and resource consumption. Support sees friction. Analytics sees contamination in reporting. If one team owns threshold policy alone, blind spots are inevitable. In one retail subscription review last month, support tickets tagged “verification issue” had risen 22% after a scoring change. Nobody in CRM had seen it until support brought receipts.

Treating disposable or privacy-led addresses as an automatic block. Some are poor quality. Some belong to users being perfectly sensible about privacy. A blanket ban creates friction without much nuance. Better to allow low-value access where appropriate, then limit privileges until stronger evidence arrives.

Ignoring copy and UX. If the challenge flow says, “Your email could not be processed,” legitimate users assume the site is broken. Clearer copy works better: “We need to verify this address before we finish setting up your account.” In a February 2026 onboarding test I reviewed, that small change lifted successful completions in the challenged cohort by 7.8%.

Not testing edge cases before rollout. University domains, shared corporate inboxes, sub-addressing, non-Latin characters and freshly registered business domains can all trip lazy rules. If the tool flags anything slightly unusual as risky, that is not intelligence. It is a shortcut.
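A pre-rollout check for these cases can be as plain as a fixture list run against whatever rule or vendor call you are evaluating. The sketch below is illustrative only: the addresses are made up, `lazy_rule` is a deliberately crude stand-in for a real classifier, and the band labels are assumptions. Freshly registered domains are left out because testing them needs a domain-age lookup, not just an address string.

```python
# Hypothetical fixtures: legitimate but unusual addresses that should not be hard-blocked.
EDGE_CASES = [
    ("student@example.ac.uk", "university domain"),
    ("info@example.com", "shared corporate inbox"),
    ("jane+trial@example.com", "sub-addressing"),
    ("用户@例子.example", "non-Latin characters"),
]


def overblocked(classify, allowed=("low", "moderate")):
    """Return the edge cases that a classifier pushes beyond the allowed bands."""
    failures = []
    for address, label in EDGE_CASES:
        band = classify(address)
        if band not in allowed:
            failures.append((address, label, band))
    return failures


def lazy_rule(address):
    """Crude stand-in rule: anything slightly unusual is treated as high risk."""
    local, _, _domain = address.rpartition("@")
    unusual = "+" in local or not address.isascii() or local in {"info", "admin"}
    return "high" if unusual else "low"


for address, label, band in overblocked(lazy_rule):
    print(f"{label}: {address} was classified as {band}")
```

Swap `lazy_rule` for a thin wrapper around the tool under test. If the list of failures is long before launch, the support queue will be long after it.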

Checklist you can reuse

If you need a working operating checklist, start here. It is the version I keep coming back to over a cup of tea because it catches most failure points without turning into process theatre.

If you want one shortcut, start with the moderate-risk band. That is usually where the money is. High-risk traffic is often obvious enough. Low-risk traffic tends to behave. Moderate risk is where overblocking hurts acquisition and under-managing hurts downstream performance.

A sensible rollout sequence for most teams looks like this:

- Map live forms and acquisition sources.

- Define journey-specific business cost.

- Set initial bands conservatively.

- Test on one live journey for two weeks.

- Review quality and conversion together, not separately.

- Adjust one major variable at a time.

That last point matters. If you change threshold, source mix, copy and verification flow all at once, you learn very little.

Closing guidance

The strongest teams treat trust thresholds as living operating rules, not fixed platform settings. A stricter trial threshold may reduce abuse and improve CRM quality while shaving a point off raw conversion. A looser newsletter threshold may grow reachable audience while demanding tighter suppression later. Both can be sensible if the trade-off is explicit, measured and reviewed.

If your growth and CRM teams want a practical benchmark, ask us to review one live acquisition journey and show how EVE would score it. We can look at where friction is helping, where it is costing you, and what to tune first without breaking flow. That tends to be more useful than another vendor demo, and considerably less of a faff. Cheers.
