
Created by Marc Woodhead · Edited by Marc Woodhead · Reviewed by Marc Woodhead

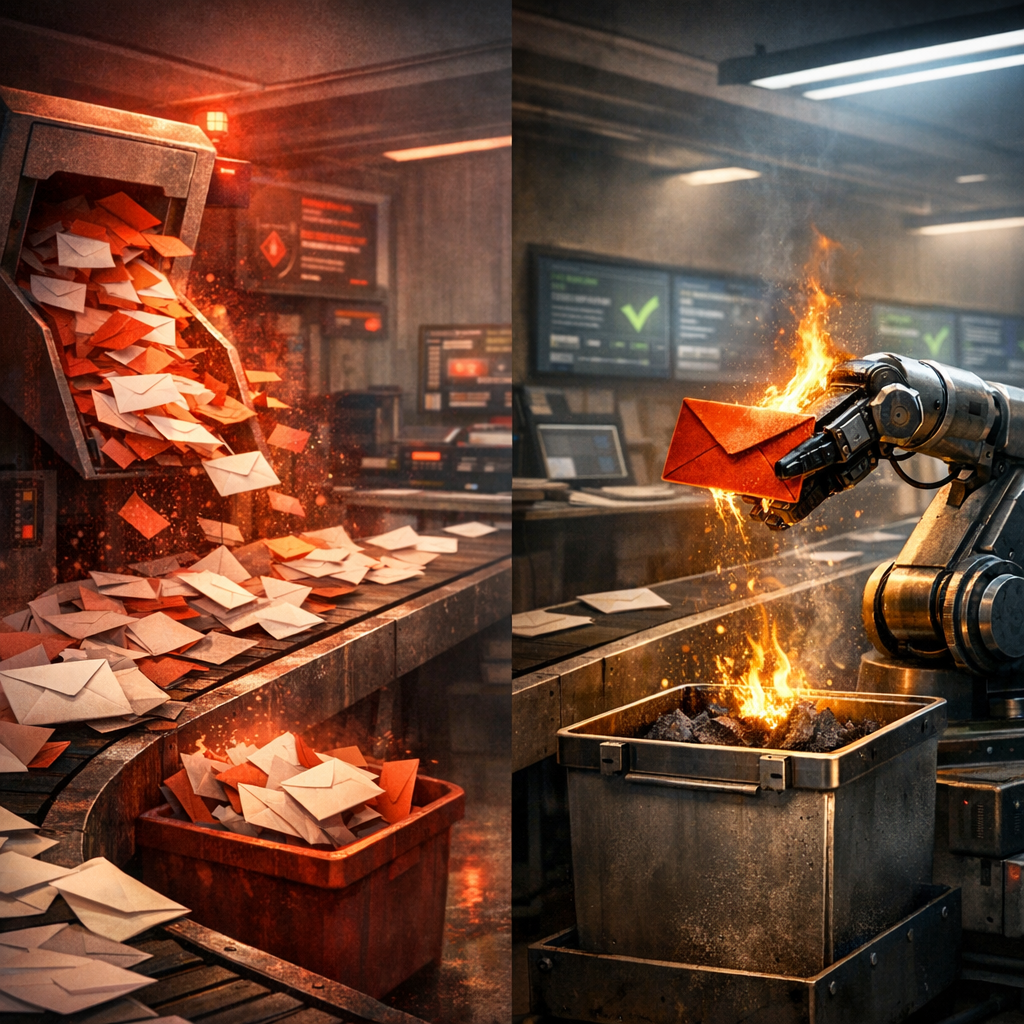

Email Risk Watch: Weekly intelligence for UK marketers

Email validation often targets spelling errors, but adaptive fraud and redirect-led sign-ups now pose larger risks. A stricter validation tool can worsen outcomes if it lacks explainable decisions. Without disciplined governance, automation risks masking toxic data in the CRM.

Referral-heavy sign-up journeys demand operational controls: authentication, suppression hygiene, and auditability determine validation resilience.

Real-time judgement versus static checks

Static checks like regex and allow-lists handle housekeeping. Regex catches malformed input, and allow-lists fast-track trusted partner domains. They do not constitute a risk strategy. They answer a narrow question: does the address look valid?
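A minimal sketch of what these static checks amount to in practice. The pattern is deliberately simple and the `TRUSTED_DOMAINS` set is a hypothetical allow-list, not a recommended one. Note what the function cannot tell you: anything about how the sign-up arrived.

```python
import re

# Deliberately simple syntax pattern; real address grammar is more permissive.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@([^@\s]+\.[^@\s]+)$")

# Hypothetical allow-list of trusted partner domains (illustrative only).
TRUSTED_DOMAINS = {"partner.example"}


def static_check(address: str) -> str:
    """Return 'malformed', 'trusted', or 'looks_valid' for an address."""
    match = EMAIL_PATTERN.match(address.strip())
    if match is None:
        return "malformed"
    domain = match.group(1).lower()
    if domain in TRUSTED_DOMAINS:
        return "trusted"
    # The address has a plausible shape; nothing more is known about it.
    return "looks_valid"
```

An address entered by a bot through a suspicious redirect returns `looks_valid` just as a genuine customer's does, which is the limit of the narrow question.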

This week's signals show why static checks are not enough. Domains such as rpeu-zcmp.maillist-manage.eu and link.ittnewsletter.com are not inherently fraudulent. In referral-heavy retail and publisher journeys, however, they appear in pathways with suspicious redirects or bot-assisted entries. A static check misses this context. The better question is wider: does this sign-up behave like a legitimate record worth trusting?

Fake competition entries and low-quality newsletter sign-ups produce higher bounce rates, noisy segmentation, and poor complaint outcomes. Judgement-based validation compares multiple signals. EVE uses over 30 detection methods, including domain patterning, alias unmasking, keyboard walks, and behavioural fingerprinting. Together these provide a probability model and a basis for intervention, shifting the question from whether an address is valid to whether it is useful, risky, or worth manual review.
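To make two of those signal types concrete, here is an illustrative sketch of plus-alias unmasking and keyboard-walk detection. It is not EVE's implementation: the function names, the keyboard rows, and the five-character run length are all assumptions chosen for the example, and it assumes the address has already passed a syntax check.

```python
# Rows of a QWERTY keyboard plus the digit row (an assumed layout).
KEYBOARD_ROWS = ("qwertyuiop", "asdfghjkl", "zxcvbnm", "1234567890")


def unmask_alias(address: str) -> str:
    """Strip a '+tag' suffix from the local part and lowercase the address."""
    local, _, domain = address.strip().lower().rpartition("@")
    return f"{local.split('+', 1)[0]}@{domain}"


def has_keyboard_walk(local_part: str, run_length: int = 5) -> bool:
    """True if the text contains run_length adjacent keys from one row."""
    text = local_part.lower()
    for row in KEYBOARD_ROWS:
        for start in range(len(row) - run_length + 1):
            if row[start:start + run_length] in text:
                return True
    return False


def risk_signals(address: str) -> list[str]:
    """Collect the names of the signals that fired for one address."""
    local = address.strip().lower().rpartition("@")[0]
    signals = []
    if "+" in local:
        signals.append("plus_alias")
    if has_keyboard_walk(local):
        signals.append("keyboard_walk")
    return signals
```

Neither signal proves abuse on its own. Plenty of genuine customers use plus-aliases, which is why signals like these feed a judgement rather than a verdict.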

Where validation fails: governance and control

False confidence is the key implementation risk. A validation engine like EVE can be technically sound but misleading without proper controls. EVE's speed supports fast sign-ups, but operational trust depends on governed decision-making with auditability.

Many implementations fail here. Teams focus on the API response but ignore the operating model: who reviews flagged entries, how long suppressions persist, and which exceptions are allowed. Common failures include:

- Stale suppression lists. Suppress too aggressively and you block legitimate customers. Too loosely, and you recycle junk into automations. The right approach is governed review with expiry windows, reason codes, and auditable overrides.

- Weak authentication. If SPF, DKIM, and DMARC controls are weak, mailbox providers will treat your mail more cautiously, regardless of list quality.

- Poor consent evidence. If sign-up records lack a timestamp, source, and wording version, compliance becomes shaky.

These details decide whether validation supports growth or merely creates a cleaner-looking problem.
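The suppression and consent points can be sketched as data structures. This is one possible shape under stated assumptions: the field names, the example reason code, and the rule that any recorded override lifts a suppression are all hypothetical choices, not a prescribed schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class Suppression:
    address: str
    reason_code: str          # e.g. "hard_bounce"; codes here are illustrative
    created_at: datetime
    expires_after: timedelta  # expiry window, so entries do not go stale
    overrides: list = field(default_factory=list)  # audit trail of overrides

    def is_active(self, now: datetime) -> bool:
        """Active until the window lapses or someone records an override."""
        if self.overrides:
            return False
        return now < self.created_at + self.expires_after

    def override(self, reviewer: str, note: str, now: datetime) -> None:
        """Lift the suppression and keep a record of who did it and why."""
        self.overrides.append({"reviewer": reviewer, "note": note, "at": now})


def consent_gaps(record: dict) -> list:
    """Return the consent evidence fields missing from a sign-up record."""
    required = ("timestamp", "source", "wording_version")
    return [name for name in required if not record.get(name)]
```

Because an override is appended rather than overwriting the entry, the original reason code and the reviewer's note both survive for later audit.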

A practical model for governing email risk

A sensible model uses tiered decisioning. Low-risk entries pass with minimal friction. Medium-risk entries may need a confirmation loop. High-risk entries should be held for review or blocked where bad data costs are high. This keeps the journey moving for most users while preserving scrutiny where signals justify it.
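A minimal sketch of tiered decisioning, assuming a risk score between 0 and 1 is already available. The two thresholds and the action names are hypothetical and would need tuning against your own outcome data.

```python
# Hypothetical thresholds on a 0-to-1 risk score; tune against real outcomes.
LOW_RISK_BELOW = 0.3
HIGH_RISK_FROM = 0.7


def decide(risk_score: float, high_cost_of_bad_data: bool = False) -> str:
    """Map a risk score to 'accept', 'confirm', 'review', or 'block'."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if risk_score < LOW_RISK_BELOW:
        return "accept"    # low risk: pass with minimal friction
    if risk_score < HIGH_RISK_FROM:
        return "confirm"   # medium risk: send a confirmation loop
    # High risk: block only where bad data is expensive, otherwise hold.
    return "block" if high_cost_of_bad_data else "review"
```

Keeping the thresholds as named constants makes each change to them a visible, reviewable decision rather than a silent tweak inside the logic.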

The trade-off is speed versus intervention discipline. EVE works best when teams define where human judgement should sit, rather than assuming one score solves every case. This holds especially for referral-heavy campaigns and promotional spikes, where legitimate behaviour briefly resembles abuse.

Measurement should be plain. Track false positives, bounce rates, complaint rates, and downstream engagement, not just pass rates at capture. If you use ad-level quality signals such as CQS, CQR, and QSR, treat them as companion measures. They can show drift in acquisition quality, but they cannot tell you whether an individual record belongs in your CRM.
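These measures are simple arithmetic once each record carries its eventual outcome. The sketch below assumes a hypothetical `Outcome` record. It defines the false positive share as the proportion of flagged entries later confirmed legitimate, and measures bounces and complaints over the entries that passed, which is one reasonable convention among several.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    flagged: bool      # held or blocked at capture
    legitimate: bool   # later confirmed as a genuine contact
    bounced: bool
    complained: bool


def rate(numerator: int, denominator: int) -> float:
    """Safe division that returns 0.0 when there is nothing to measure."""
    return numerator / denominator if denominator else 0.0


def summarise(outcomes: list) -> dict:
    """Compute capture and downstream measures from outcome records."""
    flagged = [o for o in outcomes if o.flagged]
    passed = [o for o in outcomes if not o.flagged]
    return {
        "pass_rate": rate(len(passed), len(outcomes)),
        "false_positive_share": rate(sum(o.legitimate for o in flagged), len(flagged)),
        "bounce_rate": rate(sum(o.bounced for o in passed), len(passed)),
        "complaint_rate": rate(sum(o.complained for o in passed), len(passed)),
    }
```

A high pass rate alongside a rising bounce rate is the pattern to watch for: it suggests the capture check is letting through records that fail downstream.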

What to monitor next week

Start with source domains. If referral traffic drives registrations, review which domains appear repeatedly and whether redirect patterns correlate with poor-quality entries. Then check suppression performance: what was blocked, what was overridden, and what later bounced or engaged? If your review process cannot answer these questions, simplify the system.
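The source-domain review can start as a simple tally. This sketch assumes each sign-up is available as a pair of referrer URL and a poor-quality flag (for example, later bounced or never engaged). The input shape, the `min_volume` cut-off, and the example domains are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def domain_report(signups, min_volume: int = 2):
    """Rank referrer domains by their share of poor-quality sign-ups.

    signups: iterable of (referrer_url, poor_quality) pairs.
    Returns (domain, volume, poor_share) tuples, worst share first.
    """
    totals = defaultdict(int)
    poor = defaultdict(int)
    for referrer_url, poor_quality in signups:
        host = urlsplit(referrer_url).hostname or "unknown"
        totals[host] += 1
        poor[host] += int(poor_quality)
    # Ignore domains seen too rarely to say anything meaningful about.
    report = [
        (host, totals[host], poor[host] / totals[host])
        for host in totals
        if totals[host] >= min_volume
    ]
    return sorted(report, key=lambda row: row[2], reverse=True)


signups = [
    ("https://redirector.example/a", True),
    ("https://redirector.example/b", True),
    ("https://shop.example/p", False),
    ("https://shop.example/q", True),
    ("https://once.example/", True),
]
report = domain_report(signups)
```

A high poor-quality share is a prompt for review, not proof of fraud. A domain can look bad because of one campaign rather than the referrer itself.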

Next, audit authentication and consent. Confirm SPF, DKIM, and DMARC alignment. Check that each sign-up path records the timestamp, source, and consent wording. Finally, look at form-level behaviour for velocity spikes, alias clustering, or abrupt changes in completion rate. This work keeps sender reputation intact and stops acquisition spend leaking into dead records.
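Two of these checks lend themselves to a short sketch: reading the policy from a DMARC TXT record you have already retrieved, and spotting velocity spikes in form submissions. The function names and the spike factor of three times the median are assumptions. The baseline only counts minutes with at least one sign-up, so it will overstate normal volume on quiet forms.

```python
from collections import Counter
from statistics import median


def dmarc_policy(txt_record: str):
    """Return the 'p' policy from a DMARC TXT record, or None if absent."""
    tags = {}
    for part in txt_record.split(";"):
        if "=" in part:
            key, value = part.split("=", 1)
            tags[key.strip().lower()] = value.strip()
    if tags.get("v") != "DMARC1":
        return None  # not a DMARC record
    return tags.get("p", "").lower() or None


def velocity_spikes(timestamps, factor: float = 3.0):
    """Return the minutes whose sign-up count exceeds factor x the median.

    timestamps: iterable of datetime objects, one per sign-up.
    """
    per_minute = Counter(ts.replace(second=0, microsecond=0) for ts in timestamps)
    if not per_minute:
        return []
    baseline = median(per_minute.values())
    return sorted(m for m, count in per_minute.items() if count > factor * baseline)
```

A policy of `none` means the domain is only monitoring, so it is worth recording which policy each sending domain publishes rather than just whether a record exists.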

UK marketers need accountable validation that supports campaign performance and protects compliance. If you want a clear view of where your intake controls help and where they let risk through, book a frictionless validation walkthrough with our solutions team. We can map the weak points, the trade-offs, and the quickest fixes worth making.