
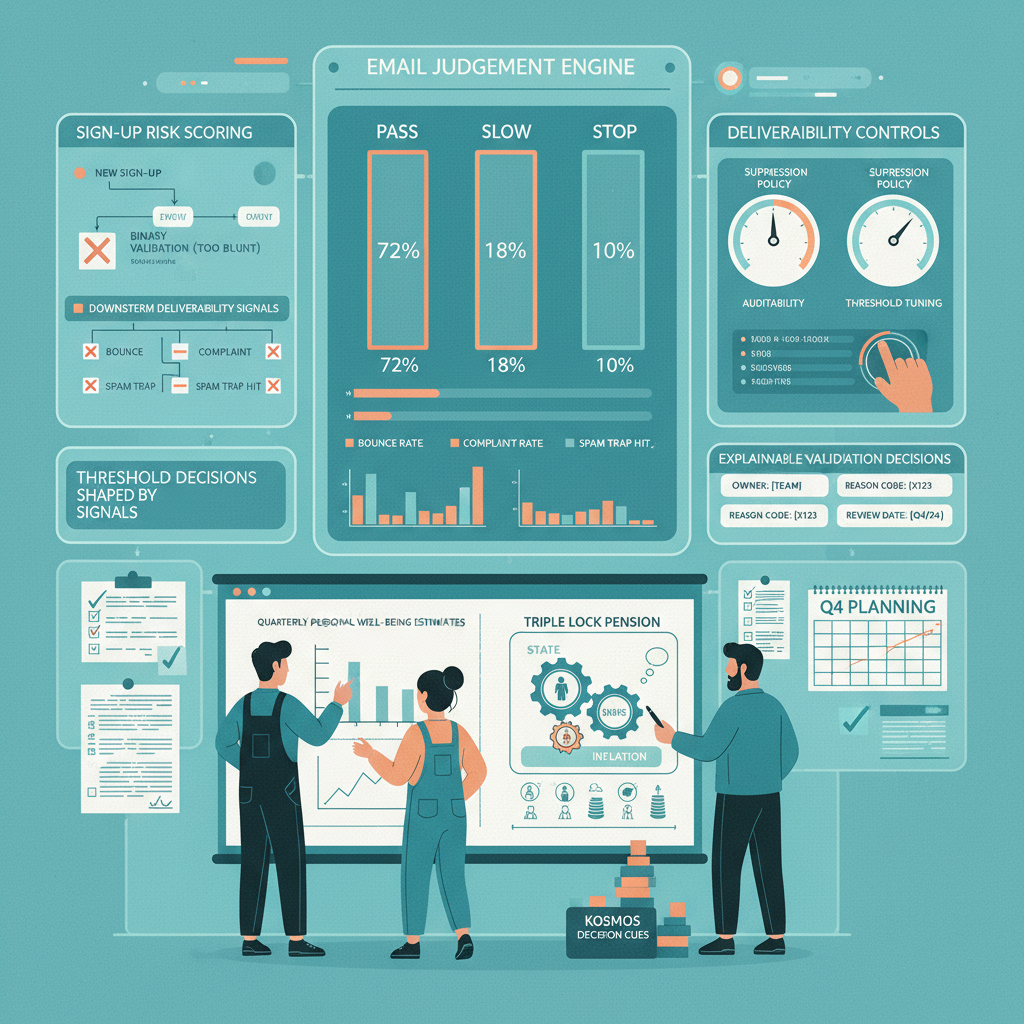

Executive summary: Treating email validation as a binary pass or fail is tidy on paper and messy in production. It blocks genuine people, lets risky sign-ups through, and pushes the cost downstream into bounce rates, spam complaints, support tickets and wasted media spend. A better approach is graded email judgement: decide whether each address should pass, slow for extra checks, or stop, based on evidence rather than guesswork.

This delivery assurance note sets out the practical version. Owners need to be named, review dates need to exist, and each rule needs acceptance criteria you can test. If your plan has no named owners and dates, it is not a plan. Fix it.

What you are solving

The real problem is not whether an address looks valid. It is whether accepting it helps or harms future sending performance. That is the bit teams miss when they rely on a single API response or a blunt blocklist.

Overly strict rules create false positives. On 15 October 2025, one launch flow blocked an estimated 5% of legitimate registrations because a disposable-domain rule caught a newer mainstream provider. Support volume went up the same day and the acquisition team paid for clicks that could not convert. Bit tight on time, and entirely avoidable.

Loose rules create the opposite failure. In Q4 2024, a campaign segment that had passed standard checks still produced a 15% bounce rate. The addresses were syntactically fine, but too many sat on inactive or low-trust domains. The sending IP was throttled and the team had to spend time warming infrastructure instead of shipping the next campaign. That is why deliverability has to shape threshold decisions. Good-looking input data is not enough if downstream inbox placement goes red.

Yesterday, after stand-up, ticket EV-2025-001 was blocked by a misfiring domain rule. A quick call with the support lead cleared it. New review date set for 20 October 2025. Small example, same lesson: email judgement needs a feedback loop, not a shrug.

Practical method

The workable model is a three-way decision:

- Pass: low-risk addresses move straight into CRM and onboarding. Typical signals include established mailbox providers, known corporate domains, valid syntax and no conflicting risk markers.

- Slow: uncertain addresses are not rejected, but they do face one extra checkpoint. That might be double opt-in, CAPTCHA, rate limiting, or delayed reward fulfilment. As a control, keep this queue small and measurable; for most programmes, less than 1% of sign-ups is a sensible starting threshold.

- Stop: clearly risky or invalid entries are rejected at source. Typical triggers include broken syntax, known disposable services, repeated abuse patterns or impossible combinations of signals.

The value sits in the middle state. A lot of teams either wave everything through or block too much. The slow path gives you room to reduce false positives without giving fraud a free run.
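One way to keep the Slow queue measurable is to track its share of sign-ups against the 1% starting point. The sketch below is a minimal Python illustration, assuming each sign-up has already been labelled `pass`, `slow` or `stop`; the function names and label strings are invented for the example, and the limit should be whatever the rule owners agree.

```python
from collections import Counter
from typing import Sequence

# Starting point taken from the text: less than 1% of sign-ups in the Slow queue.
SLOW_SHARE_LIMIT = 0.01


def slow_share(decisions: Sequence[str]) -> float:
    """Return the fraction of sign-ups that were routed to the Slow path."""
    counts = Counter(decisions)
    total = sum(counts.values())
    return counts["slow"] / total if total else 0.0


def slow_queue_within_limit(decisions: Sequence[str], limit: float = SLOW_SHARE_LIMIT) -> bool:
    """True when the Slow share is under the agreed limit."""
    return slow_share(decisions) < limit
```

If the check fails, that is a prompt for the quarterly review rather than an automatic rule change: either the Slow criteria are too broad or the traffic mix has shifted.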

Ownership must be explicit. In one implementation model, Fraud and Compliance owns the Stop criteria and Growth owns the Pass criteria. The shared decision is the Slow queue, reviewed on the first Monday of each quarter with one agreed change log. That split matters because the operational tension is real: Growth wants less friction; Risk wants fewer bad records. Neither side should tune thresholds alone.

Acceptance criteria should be plain enough for support, CRM and engineering to test:

- Every Stop decision stores a reason code.

- Every Slow decision maps to a defined next step within 5 minutes of sign-up.

- No rule change goes live without a rollback option and an owner.

Decision points that actually matter

Threshold tuning is not a one-off setup task. It is ongoing operations. I was wrong about the effort on an earlier rollout; the form-feed mapping was trickier than expected, so the plan needed a two-week buffer before go-live. Better to say that early than pretend integration risk will sort itself.

Start with a small ruleset you can explain and measure. If the logic cannot be explained to support in one sentence, it is probably too clever for week one.

| Signal | Observed data point | Initial decision | Acceptance criteria |
|---|---|---|---|
| Syntax validity | Fails basic format check or missing '@' | Stop | Reject immediately and log reason code; support can reproduce outcome from the stored input |
| Disposable provider match | Matches maintained disposable-domain list | Stop | Reject with plain-language message; blocklist version recorded in change log |
| Very new domain | Domain age under 30 days | Slow | Require confirmation click before CRM activation; measure confirmation completion rate weekly |
| Low-friction trusted pattern | Established provider or known corporate domain with no conflicting flags | Pass | Route to welcome flow immediately; track bounce rate and complaint rate by cohort |
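The starter ruleset above can be sketched as a single function. This is an illustration under stated assumptions, not a reference implementation: the two domain sets are placeholder stand-ins for maintained lists, the reason codes are invented for the example, domain age is assumed to come from an upstream lookup, and the fallback for addresses that match no rule (Slow) is a choice the table does not make.

```python
import re
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    PASS = "pass"
    SLOW = "slow"
    STOP = "stop"


@dataclass(frozen=True)
class Judgement:
    decision: Decision
    reason_code: str  # hypothetical codes, one per rule


# Deliberately loose format check: one "@", non-empty local part, dotted domain.
BASIC_FORMAT = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

# Placeholder data; a real deployment would load maintained, versioned lists.
DISPOSABLE_DOMAINS = {"disposable.example"}
TRUSTED_DOMAINS = {"established.example"}

NEW_DOMAIN_DAYS = 30  # "domain age under 30 days" from the table


def judge(email: str, domain_age_days: Optional[int]) -> Judgement:
    """Apply the four starter rules in order, checking Stop rules first."""
    if not BASIC_FORMAT.match(email):
        return Judgement(Decision.STOP, "SYNTAX_INVALID")
    domain = email.rsplit("@", 1)[1].lower()
    if domain in DISPOSABLE_DOMAINS:
        return Judgement(Decision.STOP, "DISPOSABLE_DOMAIN")
    if domain_age_days is not None and domain_age_days < NEW_DOMAIN_DAYS:
        return Judgement(Decision.SLOW, "DOMAIN_TOO_NEW")
    if domain in TRUSTED_DOMAINS:
        return Judgement(Decision.PASS, "TRUSTED_PATTERN")
    # Assumption: anything the table does not cover is slowed, not guessed.
    return Judgement(Decision.SLOW, "NO_RULE_MATCHED")
```

Returning the reason code alongside the decision keeps each outcome explainable to support in one sentence, which is the test the ruleset is meant to pass in week one.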

Metrics should drive the next decision, not decorate a dashboard. The minimum useful pack is:

- hard bounce rate by cohort: pass, slow, stop override

- spam complaint rate by cohort

- false positive estimate from support cases and reinstated sign-ups

- conversion rate from sign-up to first meaningful action

A practical checkpoint is simple: if the slow cohort produces materially better bounce performance than the untreated baseline without killing confirmation completion, keep it. If not, rewrite the rule. Cheers, next.
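The checkpoint above reduces to a small comparison. The Python sketch below assumes you can count sends, hard bounces, slowed sign-ups and completed confirmations per cohort. The class and function names are hypothetical, and the two thresholds are placeholders: "materially better" and "without killing confirmation completion" need numbers agreed by the rule owners.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CohortStats:
    sent: int            # emails sent to the cohort
    hard_bounces: int    # hard bounces from those sends
    slowed: int = 0      # sign-ups routed to the slow path
    confirmed: int = 0   # slowed sign-ups that completed confirmation


def rate(numerator: int, denominator: int) -> float:
    """Safe ratio; an empty cohort reports 0.0 rather than raising."""
    return numerator / denominator if denominator else 0.0


def keep_slow_rule(
    slow: CohortStats,
    baseline: CohortStats,
    min_bounce_gain: float = 0.02,        # placeholder threshold
    min_confirmation_rate: float = 0.6,   # placeholder threshold
) -> bool:
    """Keep the rule only if bounces improve and confirmations hold up."""
    if slow.sent == 0 or slow.slowed == 0:
        return False  # no evidence yet, so no basis for keeping the rule
    bounce_gain = rate(baseline.hard_bounces, baseline.sent) - rate(slow.hard_bounces, slow.sent)
    confirmation = rate(slow.confirmed, slow.slowed)
    return bounce_gain >= min_bounce_gain and confirmation >= min_confirmation_rate
```

As a usage example, a baseline of 80 hard bounces in 1,000 sends against a slow cohort of 14 in 700, with 700 of 1,000 slowed sign-ups confirming, clears both placeholder thresholds. Drop confirmations to 300 and the rule goes back for a rewrite.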

Common failure modes

Set and forget. Abuse patterns move faster than approval decks. In January 2026, one campaign form bypassed the judgement layer and the first send returned a 15% hard bounce rate. Emergency change request CR-451 followed. The new control was blunt but necessary: no sign-up form goes live without a delivery readiness check owned by engineering and signed off before launch.

No explainability. If support cannot answer why a sign-up was slowed or stopped, the process becomes theatre. Every decision needs a logged reason, a timestamp and the rule version that triggered it. That is good operations and useful auditability.
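A decision record carrying those three fields might look like the following Python sketch. The field names, the JSON-lines output and the `record_decision` helper are assumptions for illustration; the only requirements taken from the text are a reason, a timestamp and a rule version on every decision.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class DecisionRecord:
    signup_id: str      # hypothetical identifier for the sign-up
    decision: str       # "pass", "slow" or "stop"
    reason_code: str
    rule_version: str
    decided_at: str     # ISO 8601 timestamp in UTC


def record_decision(
    signup_id: str,
    decision: str,
    reason_code: str,
    rule_version: str,
    now: Optional[datetime] = None,
) -> str:
    """Build one JSON log line; refuse to log a decision without a reason."""
    if not reason_code:
        raise ValueError("every decision needs a reason code")
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    record = DecisionRecord(signup_id, decision, reason_code, rule_version, timestamp)
    return json.dumps(asdict(record), sort_keys=True)
```

Making the reason code mandatory at write time is the design choice that matters here: a missing reason becomes an error at write time, not a gap support discovers later.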

No feedback loop. Teams often watch validation rates and ignore downstream inbox signals. That is backwards. Deliverability is the operational measure that tells you whether your thresholds are helping. Between two recent sprint windows, acceptance criteria for a slow-path confirmation story were rewritten after an increase in spam complaints from that cohort. Tests passed once the duplicate-confirmation edge case was covered. The point is not the anecdote; it is the discipline. Observe, adjust, document.

Buying a black box too early. Tools help, but outsourcing judgement before you understand your own failure patterns is expensive guesswork. Build the first rules from your last 90 days of sign-up outcomes, then decide what should be automated or bought in.

Action checklist

If you need a path to green, keep it operational and dated:

- Audit the last 90 days of sign-ups

Owner: Data analyst

Date: end of next sprint

Acceptance criteria: cohort file created with bounce, complaint, conversion and support outcomes tagged.

- Draft version 1 of pass, slow and stop rules

Owner: Cross-functional lead from CRM, Fraud and Engineering

Date: within 10 working days of the audit

Acceptance criteria: each rule has a reason code, owner, rollback step and success metric.

- Implement the decision layer

Owner: Tech lead

Date: target in Q2 2026, with two-week buffer for data mapping and testing

Acceptance criteria: rules can be updated without a full release; slow-path events are logged within 5 minutes.

- Stand up reporting

Owner: CRM manager

Date: from launch week

Acceptance criteria: weekly report includes hard bounce rate, complaint rate, false positive estimate and conversion rate by cohort.

- Run threshold review

Owner: Head of Growth with Fraud counterpart

Date: first review in the first full month after launch, then quarterly

Acceptance criteria: every review ends with a decision log, named actions and the next review date.

What good looks like

A sound email judgement engine does not promise perfection. It gives you explainable decisions, lower avoidable friction and a cleaner route from sign-up to inbox. More importantly, it gives the team something testable: who owns the rule, when it changes, what metric moved, and what the mitigation is if results slip.

If your current setup still treats every email address as simply valid or invalid, Holograph can help you design a graded approach that your growth, CRM and fraud teams can actually run. Contact Holograph and bring the awkward bits: the bounce spike, the slow queue, the rules nobody trusts. We will help you turn them into a usable threshold plan with owners, dates and a realistic path to green.