Overview

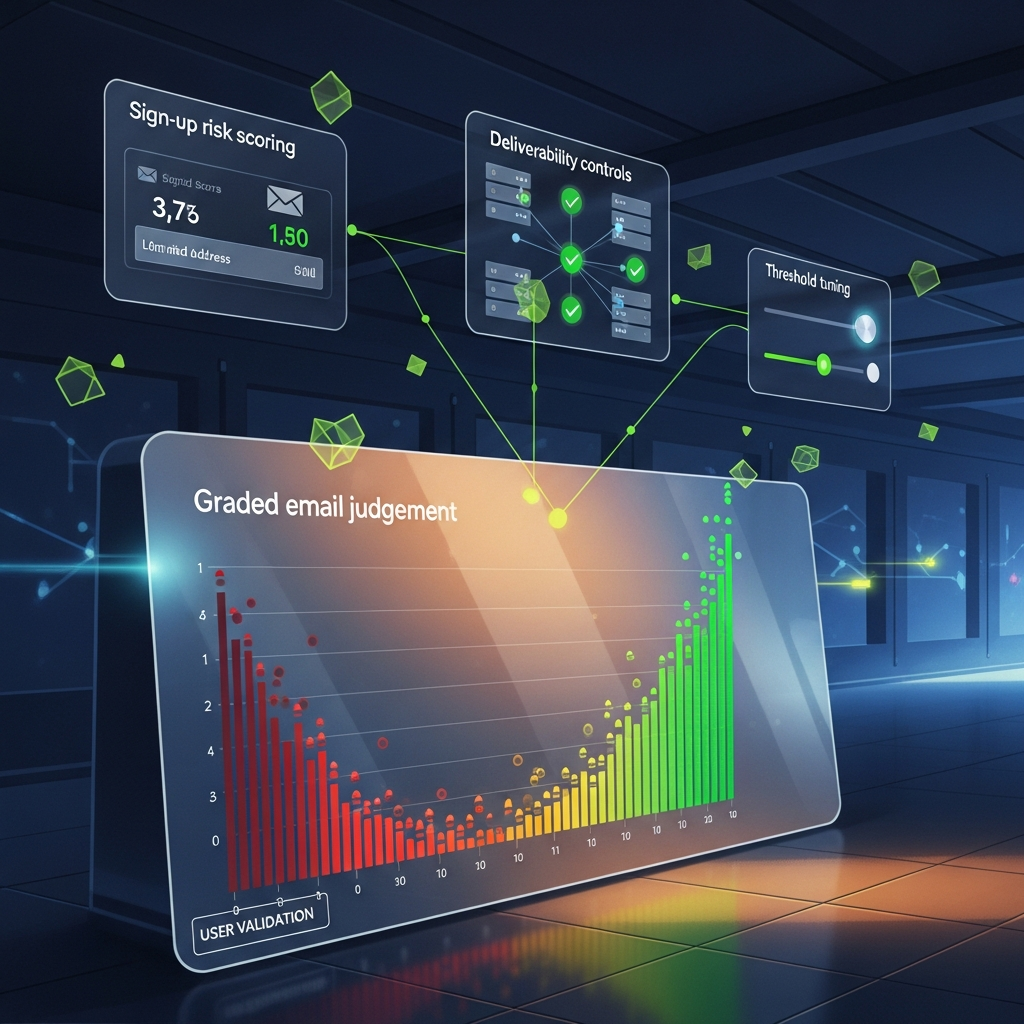

Acquisition teams are still asked to prove two things at once: volume and quality. A simple valid-or-invalid email check at sign-up helps catch typos, but it does not tell you whether the record is worth routing into CRM, nurture and sales follow-up. That gap is where email judgement needs to become explainable, operational and measurable.

This delivery assurance note sets out a practical way to move from binary validation to risk scoring you can defend. The method is straightforward: define signals, assign owners, set dates, test the routing logic and report on outcomes that matter, such as hard bounce rate, double opt-in completion and sales acceptance. If your plan has no named owners and dates, it is not a plan. Fix it.

Quick context

For years, the default check at the point of capture has been technical only: does the email address exist and can the domain receive mail? Useful, yes. Sufficient, no. A technically valid disposable address used to grab a lead magnet is not equivalent to a corporate address from a buyer who matches your target account list, yet a binary check treats both as acceptable.

The downstream impact is usually visible in the numbers. Low-quality intake can inflate hard bounces, suppress engagement and waste sales time on records that were never likely to convert. In one recent B2B software campaign, 15% of records that passed a basic validity check came from known disposable providers. The issue only became obvious when the first nurture send returned a 12% hard bounce rate and the ESP flagged the account. That is not a quality story you want to tell the board on Friday afternoon.

The fix is not to guess harder. It is to score risk in a way that can be explained. Instead of saying, “these leads were valid”, you can say, “850 leads met low-risk acceptance criteria, 120 were routed to double opt-in, and 30 were held back because they matched high-risk signals”. That is a more useful decision trail, and it gives marketing, sales and ops something concrete to work with.

A step-by-step approach

You do not need a six-month transformation programme to get started. A tight five-week delivery window is realistic if scope is controlled, owners are clear and acceptance criteria are agreed up front.

- Define the risk signals (Owner: Marketing Ops Lead, date: week 1). Run a short workshop with marketing, sales and data stakeholders. The output should be a prioritised list of five to seven signals, each with a plain-English definition. Good starting signals include:
  - known disposable or temporary email domains
  - very new domains, for example registered in the last 90 days
  - IP reputation issues, proxy usage or sign-ups from territories you do not serve
  - gibberish usernames such as random keyboard strings
  - mismatches between stated company details and the email domain

  Acceptance criteria: one agreed signal list, one owner per signal source, and one change log entry showing version 1.0 signed off by the Head of Marketing.

- Assign weights and scoring rules (Owner: Data Analyst, date: week 2). Not every signal means the same thing. A disposable domain may justify +50 points, while a newly registered domain may justify +15 because it could be a legitimate start-up. Keep the first model simple and cumulative.

  Acceptance criteria: the model is documented, test cases exist for at least 10 sample records, and scoring outcomes are reproducible by a second reviewer.

- Set risk tiers and routing actions (Owner: Head of Marketing, date: week 2). Tiers only help if they trigger a decision. A practical first pass is:
  - Low risk (0–10): route to CRM and marketing automation as normal
  - Medium risk (11–40): require double opt-in or queue for brief manual review
  - High risk (41+): hold outside the main database and keep away from sales follow-up

  Acceptance criteria: each tier has a documented action, SLA and named owner. No orphan states, no “someone will look at it later”.

- Integrate and test the logic (Owner: Tech Lead, date: week 4). This is where the API, form logic and routing rules get wired together. Build scoring into the sign-up flow, middleware or automation platform, then test it against real edge cases. Yesterday, after stand-up, ticket PROJ-271 was blocked by a missing API key. A quick call with David on the vendor side cleared it. New UAT date set for Friday. Nothing glamorous, but that is how projects get back to green.

  Acceptance criteria: staging deployed, API responses logged, all tier-routing tests passed, and one rollback plan agreed before production release.

- Report outcomes that matter (Owner: Marketing Ops Lead, date: week 5). Build a dashboard that shows sign-up volume by source, score tier and campaign. Pair that with operational metrics such as hard bounce rate, double opt-in completion rate and sales acceptance rate for low-risk leads.

  Acceptance criteria: dashboard live, source data reconciled, and baseline metrics captured for the first reporting period.

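The cumulative scoring and tier routing described in the steps above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the signal names, the `score_lead` function and the action labels are hypothetical, and only the +50 and +15 weights and the 0–10 / 11–40 / 41+ tier boundaries come from the text. The remaining weights are placeholders that the week 1 workshop would need to agree.

```python
from dataclasses import dataclass

# Hypothetical signal names. Only the disposable-domain (+50) and
# new-domain (+15) weights come from the article; the others are
# assumed placeholders for illustration.
WEIGHTS = {
    "disposable_domain": 50,
    "new_domain": 15,
    "ip_reputation": 20,       # assumed weight
    "gibberish_username": 10,  # assumed weight
    "company_mismatch": 10,    # assumed weight
}


@dataclass
class ScoreResult:
    score: int
    tier: str
    action: str
    reasons: list[str]  # the signals that fired, kept for the decision trail


def score_lead(signals: dict[str, bool]) -> ScoreResult:
    """Add up the weights of every signal that fired, then map to a tier."""
    reasons = [name for name in WEIGHTS if signals.get(name, False)]
    score = sum(WEIGHTS[name] for name in reasons)

    # Tier boundaries as given in the article: 0-10, 11-40, 41+.
    if score <= 10:
        tier, action = "low", "route_to_crm"
    elif score <= 40:
        tier, action = "medium", "double_opt_in_or_manual_review"
    else:
        tier, action = "high", "hold_outside_main_database"
    return ScoreResult(score, tier, action, reasons)


result = score_lead({"new_domain": True, "gibberish_username": True})
print(result.score, result.tier, result.reasons)
```

Returning the list of signals that fired alongside the score is what keeps the outcome explainable: a second reviewer can reproduce the result from the same inputs and see why a record landed in its tier.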

Pitfalls to avoid

The first risk is over-engineering. Teams often try to launch with every possible signal, plus enrichment, plus intent data, plus a heroic amount of logic that nobody can explain six weeks later. Mitigation is simple: start with three to five high-value signals and a model that a non-specialist can understand in one read. One client wanted to include social profile matching in phase one. It added four weeks of development and no immediate gain in routing quality, so we parked it. Result: the core controls went live a month earlier.

The second risk is sales resistance. If the change is framed as “marketing blocking leads”, it will go sideways fast. The better route is to agree shared acceptance criteria before launch. For example, if low-risk leads are expected to produce a higher sales acceptance rate than the historic baseline, put that metric on the dashboard and review it weekly for the first month. Owner: Sales Director. Date: launch week. Metric: acceptance rate by tier. Nice and testable.
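As a rough sketch of the shared metric, acceptance rate by tier can be computed from a list of (tier, accepted) pairs and compared with the historic baseline. The function names and the input shape here are assumptions for illustration; in practice the data would come from whatever CRM export or dashboard source the team agrees on.

```python
from collections import defaultdict


def acceptance_rate_by_tier(leads):
    """leads: iterable of (tier, accepted) pairs. Returns {tier: rate}."""
    totals = defaultdict(int)
    accepted = defaultdict(int)
    for tier, was_accepted in leads:
        totals[tier] += 1
        if was_accepted:
            accepted[tier] += 1
    return {tier: accepted[tier] / totals[tier] for tier in totals}


def beats_baseline(rates, baseline, tier="low"):
    """True if the given tier's acceptance rate exceeds the historic baseline."""
    return rates.get(tier, 0.0) > baseline


# Hypothetical sample: four low-risk leads, two medium-risk leads.
sample = [
    ("low", True), ("low", True), ("low", False), ("low", True),
    ("medium", False), ("medium", True),
]
rates = acceptance_rate_by_tier(sample)
print(rates, beats_baseline(rates, baseline=0.6))
```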

The third risk is poor source data. A scoring model cannot rescue inconsistent forms, duplicate fields or CRM records with half the company name missing. The mitigation is a short data hygiene audit before build starts. Check field naming, required inputs, source tagging and destination mapping. If the audit adds a week, fine. Better a week now than a month of arguing over whether the model is wrong when the data is the real problem.
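A first pass at the audit can be automated with a small check over exported records. The sketch below covers only required inputs and source tagging. The field names, the list of known source tags and the `audit_records` function are all hypothetical and would need replacing with the team's own form and CRM schema.

```python
# Hypothetical field names and source tags, for illustration only.
REQUIRED_FIELDS = ("email", "company", "source")
KNOWN_SOURCES = {"webinar", "paid_search", "organic"}


def audit_records(records):
    """Return (record index, problem) pairs for records that fail basic checks."""
    problems = []
    for i, record in enumerate(records):
        # Required inputs: missing keys, None and blank strings all count as missing.
        for field in REQUIRED_FIELDS:
            if not str(record.get(field) or "").strip():
                problems.append((i, f"missing {field}"))
        # Source tagging: flag tags that are not on the agreed list.
        source = str(record.get("source") or "").strip()
        if source and source not in KNOWN_SOURCES:
            problems.append((i, f"unknown source tag: {source}"))
    return problems


records = [
    {"email": "a@example.com", "company": "Acme", "source": "webinar"},
    {"email": "b@example.com", "company": "", "source": "Webinar"},
    {"company": "Beta", "source": None},
]
for index, problem in audit_records(records):
    print(index, problem)
```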

Checklist you can reuse

Keep the project visible and boring in the best possible way. Boring is good. Boring means it is under control.

Closing guidance

Moving to explainable email judgement is not about making acquisition slower or more complicated. It is about giving your team a defensible way to prove quality, protect deliverability and route effort where it has the best chance of paying back. Keep the first version small, measurable and owned. That is enough to get started, and enough to learn from.

If your current process still ends at valid or invalid, you are probably missing the detail needed to explain results when pressure lands. If you want a clear framework with owners, dates and acceptance criteria that your team can actually run, we can help you get it sorted. A short conversation is usually enough to map the risks, set the path to green and decide what should happen first. Get in touch.