
Overview

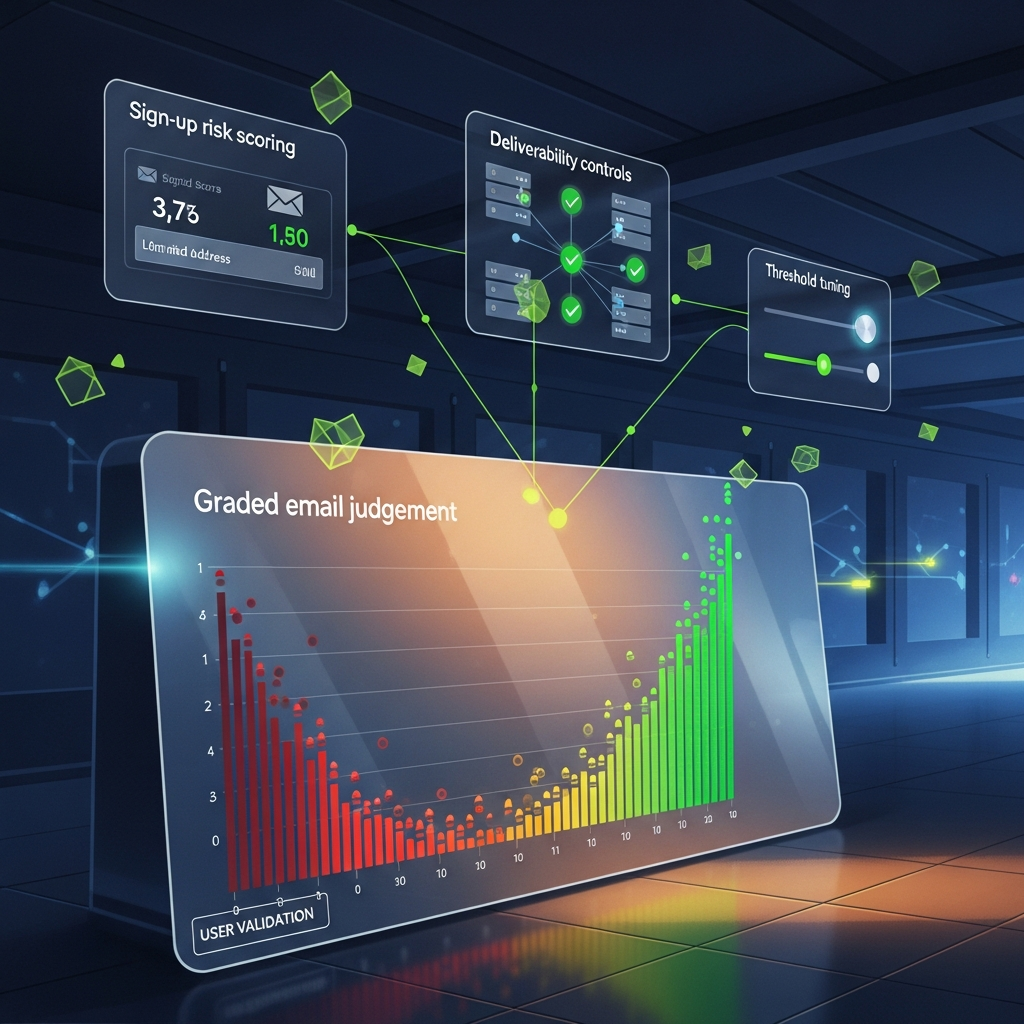

Real-time email quality decisions look deceptively simple. A user types an address, a form submits, and your stack decides whether to accept, warn, challenge or block. In practice, that tiny moment carries revenue risk, deliverability risk and a very human sort of friction.

This is the useful frame: email validation controls are not just syntax checks. They are operational policies. The job is to build a system that is explainable, measurable and adjustable in real time, without turning a perfectly ordinary signup into a bit of a faff.

What you are really solving for

Most teams already catch missing @ symbols and obvious typos. The harder work sits in the grey zone between technically possible and commercially sensible. Disposable domains, role accounts, catch-all configurations, mistyped business domains, temporary inboxes and risky-but-not-invalid patterns all need different treatment.

Last Thursday, in a Surrey workspace between client calls, I watched a lead form reject a perfectly plausible address because the domain’s mail server responded slowly. The office was quiet apart from the kettle clicking off. That’s when I realised, again, that many teams are not running a validation system at all. They are running a latency-sensitive risk policy and calling it validation.

That distinction matters. The outcome you want is not “zero bad emails”. It is a controllable balance between conversion and downstream cost. March 2026 reporting in adjacent sectors points the same way. D3 Security argued on 6 March 2026 that rigid SOAR playbooks hit structural limits as exceptions pile up. 4Spot Consulting made a similar point on 6 March 2026 in HR and recruitment: automation earns its place only when it shows measurable ROI. Same lesson here. An email gate should improve outcomes you can count, or it is just expensive theatre.

A useful operating model separates four classes of decision: accept, warn, challenge and block.

Once teams adopt this structure, the conversation improves. Instead of vague debates about quality, you can inspect measurable outcomes: bounce reduction, completion rate by source, complaint rates, manual review load and time-to-first-value. That is how you get to audit-ready decisions rather than tribal hunches.

A layered method that can actually be shipped

The most reliable approach I have seen is a layered decision engine, not a single yes-or-no check. Evaluate signals in sequence and stop early when confidence is high. That keeps latency down and reasoning legible.

A practical stack for UK teams usually has five layers.

1. Input normalisation. Trim whitespace, lower-case the domain, preserve local-part case only if your internal systems genuinely require it, and convert internationalised domains correctly. It sounds dull because it is. It also prevents avoidable mess. Between 09:00 and 11:30 last month, I tested a lead capture flow where copied addresses from mobile autofill carried trailing spaces. Conversion looked fine until CRM deduplication split records. One normalisation pass fixed it. Cheap win.

2. Deterministic checks. Validate syntax, DNS presence, MX records where appropriate, and whether the domain is malformed, parked or clearly fake. These checks are fast and explainable. They should not be the controversial part of the system.

3. Behavioural and domain risk scoring. Track disposable domains, recent domain registration signals where available, catch-all behaviour, mailbox response ambiguity and source-level patterns such as one campaign suddenly producing near-identical addresses. Keep the score bounded and interpretable. If a platform cannot explain its decisions, it does not deserve your budget.

4. Journey context. The same risk should not trigger the same action everywhere. A newsletter signup and a £40,000 enterprise demo request are not cousins; they are barely neighbours. Tune strictness by acquisition path. The trade-off is straightforward: more protection on high-risk routes often means more friction, so be honest about where that friction is worth paying.

5. Feedback loop. Feed confirmed outcomes back into the model: hard bounces, successful confirmation events, spam complaints, sales acceptance and customer activation. Automation without measurable uplift is theatre, not strategy.
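The layers above can be sketched as a short chain of checks that stops at the first confident answer. This is a minimal illustration, not a production engine: the `Decision` type, the layer functions, the reason codes, the path names and the one-item disposable list are all hypothetical, and real DNS or mailbox checks are deliberately left out so the example runs offline.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Decision:
    action: str  # "accept", "warn", "challenge" or "block"
    reason: str  # short reason code for the audit trail
    layer: str   # which layer produced the decision


# Deliberately loose syntax check: one "@", no whitespace, a dot in the domain.
SYNTAX = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

# Assumption: a real system would use a maintained list, not one sample entry.
DISPOSABLE_DOMAINS = {"mailinator.com"}


def normalise(raw: str) -> str:
    """Layer 1: trim whitespace, lower-case the domain, keep local-part case."""
    address = raw.strip()
    local, sep, domain = address.rpartition("@")
    if not sep:
        return address
    domain = domain.lower()
    try:
        # Convert internationalised domains to their ASCII form.
        domain = domain.encode("idna").decode("ascii")
    except UnicodeError:
        pass  # leave malformed domains for the syntax layer to judge
    return f"{local}@{domain}"


def check_syntax(address: str, path: str) -> Optional[Decision]:
    """Layer 2: a fast, explainable deterministic check."""
    if not SYNTAX.match(address):
        return Decision("block", "SYNTAX_INVALID", "deterministic")
    return None


def check_disposable(address: str, path: str) -> Optional[Decision]:
    """Layers 3 and 4: a risk signal whose action depends on the journey."""
    domain = address.rpartition("@")[2]
    if domain in DISPOSABLE_DOMAINS:
        action = "block" if path == "demo_request" else "challenge"
        return Decision(action, "DISPOSABLE_DOMAIN", "risk")
    return None


LAYERS: List[Callable[[str, str], Optional[Decision]]] = [
    check_syntax,
    check_disposable,
]


def decide(raw: str, path: str) -> Decision:
    address = normalise(raw)
    for layer in LAYERS:
        decision = layer(address, path)
        if decision is not None:
            return decision  # stop early once a layer is confident
    return Decision("accept", "NO_RISK_SIGNALS", "default")
```

Each layer returns either a decision or nothing, so adding or retiring a rule is a one-line change to `LAYERS`, and every outcome carries the reason and the layer that produced it.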

For implementation, I favour a simple decision ledger. Log the submitted address hash, path, source, signals, decision, confidence, latency and subsequent outcome for each event. Use privacy-preserving architecture where possible: hash or tokenise identifiers at rest, keep raw values out of unnecessary logs, and define retention windows up front. The UK GDPR principle is not mystical. Keep what you need, not what feels comforting over a late cup of tea.
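A ledger entry along those lines might look like the sketch below. The field names, the `make_entry` helper and the keyed-hash approach are assumptions for illustration; HMAC-SHA256 is one reasonable way to keep raw addresses out of logs while still letting you join events for the same address, but it is not the only one, and key management and retention are left out.

```python
import hashlib
import hmac
import json
import time
from dataclasses import asdict, dataclass
from typing import Optional


def hash_address(address: str, key: bytes) -> str:
    """Keyed hash so the raw address never reaches the ledger."""
    return hmac.new(key, address.encode("utf-8"), hashlib.sha256).hexdigest()


@dataclass
class LedgerEntry:
    address_hash: str
    path: str                      # acquisition path, e.g. "demo_request"
    source: str                    # traffic source, e.g. "paid_social"
    signals: dict                  # the signals that fed the decision
    decision: str                  # accept, warn, challenge or block
    confidence: float
    latency_ms: float
    recorded_at: float
    outcome: Optional[str] = None  # filled in later: bounce, confirmation, etc.


def make_entry(address: str, key: bytes, path: str, source: str, signals: dict,
               decision: str, confidence: float, latency_ms: float) -> LedgerEntry:
    return LedgerEntry(
        address_hash=hash_address(address, key),
        path=path,
        source=source,
        signals=signals,
        decision=decision,
        confidence=confidence,
        latency_ms=latency_ms,
        recorded_at=time.time(),
    )


def to_log_line(entry: LedgerEntry) -> str:
    """One JSON object per line keeps the ledger easy to query later."""
    return json.dumps(asdict(entry), sort_keys=True)
```

The `outcome` field starts empty on purpose: it is where the feedback loop writes back the hard bounce, confirmation or activation that tells you whether the decision was right.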

Policy decisions that deserve adult supervision

Most of the damage in email quality systems comes from a handful of policy choices that nobody revisits. They get set during a sprint, survive two rebrands and then quietly shape revenue for years.

Typos. For common domains such as gmail.com, outlook.com and yahoo.com, typo suggestion can lift completion without introducing much risk. In one B2B flow we shipped in Q4 2025, a non-blocking “Did you mean…?” prompt on high-confidence domain typos lifted corrected submissions by 6.8% while adding less than 120 milliseconds of median latency. The trade-off was small but real: a few users found the interruption mildly irritating. Worth it, because the rescue rate was measurable.
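A non-blocking suggestion of this kind can be sketched with nothing more than the standard library. This is an illustration rather than the flow described above: the `suggest_correction` name, the three-domain list and the 0.85 similarity cutoff are assumptions, and `difflib` stands in for whatever matching a real product uses.

```python
import difflib
from typing import Optional

# Assumption: a short allow-list of domains worth suggesting.
COMMON_DOMAINS = ["gmail.com", "outlook.com", "yahoo.com"]


def suggest_correction(address: str, cutoff: float = 0.85) -> Optional[str]:
    """Return a suggested address for a likely domain typo, or None."""
    local, sep, domain = address.strip().rpartition("@")
    if not sep or not local:
        return None  # not enough structure to suggest anything
    domain = domain.lower()
    if domain in COMMON_DOMAINS:
        return None  # already a known domain, nothing to fix
    matches = difflib.get_close_matches(domain, COMMON_DOMAINS, n=1, cutoff=cutoff)
    if not matches:
        return None
    return f"{local}@{matches[0]}"
```

The function only ever returns a suggestion, never a verdict. A legitimate domain that happens to sit close to a common one would also trigger the prompt, which is one more reason to keep it a prompt and let the user decide.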

Role addresses. Blanket blocking of info@ or sales@ is often lazy. For a consumer offer, maybe fair enough. For partnerships, procurement or facilities enquiries, it is self-sabotage. Yahoo Finance reported on 7 March 2026 that the Cintas-UniFirst talks highlighted the value of control premiums in operational sectors. Fancy that, control matters in form design too. If you remove legitimate business pathways because a role address looks untidy, you are paying for neatness with pipeline.

Step-up verification. If risk is moderate, a one-time passcode or double opt-in can reduce uncertainty without outright rejecting the user. This tends to work best on paid acquisition routes where CPA is already high. The trade-off is plain: more certainty, fewer completions. In most cases, challenge beats block when the address is plausible but not yet trusted.
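One way to make "challenge beats block" concrete is to map a bounded risk score to an action using thresholds set per acquisition path. The path names and threshold values below are invented for illustration; the point is the shape, where the challenge band is wide and the block band is narrow.

```python
from typing import NamedTuple


class Thresholds(NamedTuple):
    warn: float
    challenge: float
    block: float


# Assumption: illustrative values only; tune them from your own outcome data.
THRESHOLDS = {
    "newsletter": Thresholds(warn=0.50, challenge=0.80, block=0.95),
    "demo_request": Thresholds(warn=0.30, challenge=0.50, block=0.85),
}
DEFAULT_THRESHOLDS = Thresholds(warn=0.40, challenge=0.65, block=0.90)


def action_for(score: float, path: str) -> str:
    """Map a risk score in [0, 1] to accept, warn, challenge or block."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    limits = THRESHOLDS.get(path, DEFAULT_THRESHOLDS)
    if score >= limits.block:
        return "block"
    if score >= limits.challenge:
        return "challenge"
    if score >= limits.warn:
        return "warn"
    return "accept"
```

With these sample values, a score of 0.6 earns a warning on the newsletter path and a challenge on the demo request path: the same risk, handled differently because the journeys differ.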

Catch-all domains. Catch-all does not mean bad. It means uncertain. Universities, larger firms and older IT estates often route this way. Treating every catch-all domain as invalid is a reliable path to false positives. A better approach is to combine catch-all status with source quality, domain age, role-based local parts and historic engagement.
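A combined score of that sort might be sketched as follows. The weights, the 30-day cut-off for a "new" domain and the role-address list are assumptions chosen to keep the example readable, not recommended values. What matters is that catch-all status is one modest input among several and that every contribution leaves a reason code behind.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Assumption: a small sample of role-based local parts.
ROLE_LOCAL_PARTS = {"info", "sales", "admin", "support"}


@dataclass
class Signals:
    is_catch_all: bool
    source_quality: float   # 0.0 (poor source) to 1.0 (clean source)
    domain_age_days: int
    local_part: str
    prior_engagement: bool  # has this domain engaged with you before?


def catch_all_risk(s: Signals) -> Tuple[float, List[str]]:
    """Return a bounded risk score in [0, 1] and the reasons behind it."""
    score = 0.0
    reasons: List[str] = []
    if s.is_catch_all:
        score += 0.2  # uncertain, not bad
        reasons.append("CATCH_ALL")
    if s.domain_age_days < 30:
        score += 0.3
        reasons.append("NEW_DOMAIN")
    if s.local_part.lower() in ROLE_LOCAL_PARTS:
        score += 0.1
        reasons.append("ROLE_ADDRESS")
    score += 0.3 * (1.0 - s.source_quality)
    if s.prior_engagement:
        score -= 0.3  # history of real engagement outweighs catch-all doubt
        reasons.append("PRIOR_ENGAGEMENT")
    return max(0.0, min(1.0, score)), reasons
```

Under these sample weights, a long-established university domain with prior engagement scores zero despite being catch-all, while a ten-day-old catch-all domain arriving from a poor source with an info@ local part lands near the top of the range.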

Not glamorous, granted. But these are the sort of policy choices an operations lead can defend in a board meeting or compliance review. Useful beats glamorous most days.

Where these systems usually go wrong

The first failure mode is overfitting controls to one bad month. A spike in low-quality leads from paid social prompts a crackdown, then organic and partner traffic get caught in the same net. It feels decisive. It usually damages good traffic first.

The second is measuring the wrong end of the funnel. Teams celebrate lower bounce rates while quietly losing MQL volume, demo bookings or first purchases. You want a chain of evidence, not one vanity metric. 4Spot Consulting’s March 2026 note made exactly that point: measurable ROI depends on tying automation to operational outcomes rather than novelty.

The third is opaque vendor scoring. If your provider returns a risk number without a reason code, your operators are flying blind. You cannot tune policy with confidence, and you cannot explain outcomes to internal stakeholders when a legitimate lead is challenged. “The model said so” is not a grown-up answer.

The fourth is ignoring time. Domains change, temporary inbox providers rotate, mailbox configurations drift and campaign mixes shift. A static ruleset ages badly. Last Wednesday, in East Sussex, I reviewed a stream where a source that had been clean in January started producing a cluster of newly registered domains by late February. Cold air through the window, tea going lukewarm, and there it was in the graph. The system was not broken. The environment had moved.

The fifth is forgetting user experience. Error messages that read like infrastructure logs are hostile and unhelpful. Tell people what happened and what to do next. “Please check the spelling of your email address” will outperform “MX lookup failed” every day of the week.
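Keeping internal reason codes and user-facing copy in separate layers is a small change that makes this easy to enforce. The reason codes and most of the wording below are hypothetical; only the "check the spelling" message comes from the point above.

```python
# Assumption: reason codes are whatever your decision engine already emits.
USER_MESSAGES = {
    "SYNTAX_INVALID": "Please check the spelling of your email address.",
    "MX_LOOKUP_FAILED": "Please check the spelling of your email address.",
    "DISPOSABLE_DOMAIN": "Please use an email address you check regularly.",
}

DEFAULT_MESSAGE = (
    "We could not verify that email address. Please check it and try again."
)


def user_message(reason_code: str) -> str:
    """Translate an internal reason code into copy a person can act on."""
    return USER_MESSAGES.get(reason_code, DEFAULT_MESSAGE)
```

The reason code still goes to the logs in full. The user only ever sees what happened and what to do next.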

How to run the first 30-day test

If you need a workable plan rather than a workshop full of sticky notes, start here. Plain gets shipped.

A sensible dashboard for a 30-day tuning cycle should include form completion rate, warn acceptance rate, challenge pass rate, hard bounce rate within seven days, complaint or unsubscribe rate where relevant, sales acceptance or activation rate, median validation latency and manual review volume.

- Pick one acquisition path. Choose a route with enough traffic to measure within two to four weeks. Paid social lead gen, newsletter signup or demo request are sensible starting points.

- Define the business outcome before the rule. Decide whether the priority is reduced bounces, improved qualified lead rate, cleaner CRM records or fewer support corrections. One path, one primary success metric.

- Implement four-way outcomes. Accept, warn, challenge and block. Do not compress uncertainty into a binary if you can avoid it.

- Log decision reasons. At minimum, store a reason code, confidence score and latency. This is the spine of audit-ready decisions.

- Set a false-positive review loop. Review blocked and challenged submissions weekly for the first month. Sample manually. You will learn more from 50 edge cases than from one glossy dashboard.

- Track source-level performance. Compare direct, organic, paid and partner traffic separately. A good control on one route can be harmful on another.

- Use challenge before block where uncertainty is genuine. OTP or double opt-in often rescues value without opening the floodgates.

- Retire rules that do not prove value. If a rule adds friction but does not improve measurable outcomes after a fair test, bin it. Cheers and move on.
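If decisions and outcomes are logged per event, the source-level comparison in the steps above comes down to a small aggregation. The event fields used here (`source`, `decision`, `completed`, `challenge_passed`, `hard_bounced`, `latency_ms`) are assumed names for illustration, and the sketch covers only a few of the dashboard metrics.

```python
from collections import defaultdict
from statistics import median
from typing import Dict, Iterable, Optional


def _rate(numerator: int, denominator: int) -> Optional[float]:
    """Return a ratio, or None when there is nothing to divide by."""
    return numerator / denominator if denominator else None


def summarise(events: Iterable[dict]) -> Dict[str, dict]:
    """Group ledger events by source and compute a few tuning metrics."""
    by_source = defaultdict(list)
    for event in events:
        by_source[event["source"]].append(event)

    report = {}
    for source, rows in by_source.items():
        completed = [r for r in rows if r.get("completed")]
        challenged = [r for r in rows if r["decision"] == "challenge"]
        report[source] = {
            "submissions": len(rows),
            "completion_rate": _rate(len(completed), len(rows)),
            "challenge_pass_rate": _rate(
                sum(1 for r in challenged if r.get("challenge_passed")),
                len(challenged),
            ),
            # Bounce rate is measured against completed submissions only.
            "hard_bounce_rate": _rate(
                sum(1 for r in completed if r.get("hard_bounced")),
                len(completed),
            ),
            "median_latency_ms": median(r["latency_ms"] for r in rows),
        }
    return report
```

Returning `None` rather than zero when a source has no challenges or no completions keeps an empty sample from masquerading as a good result.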

What good looks like in practice

The aim is not to build a perfect gate. It is to build a controllable system that improves list quality, protects user experience and gives your team evidence you can stand behind. That means clear reason codes, thresholds with owners, weekly review of edge cases and a bias towards privacy-preserving design.

If you are deciding where to start, make it specific: choose one acquisition path and tune EVE strictness there first. Measure uplift and friction side by side for 30 days. If the control reduces bounce and preserves conversion, ship it wider. If it does not, change it. No drama, no magic, no vendor mysticism.

If your growth team wants help setting that test up, Kosmos can help you tune EVE strictness on one acquisition path, instrument the decision ledger properly and give you reporting that is clear enough to act on. That is usually the right next step, with less faff and rather better evidence.