
Overview

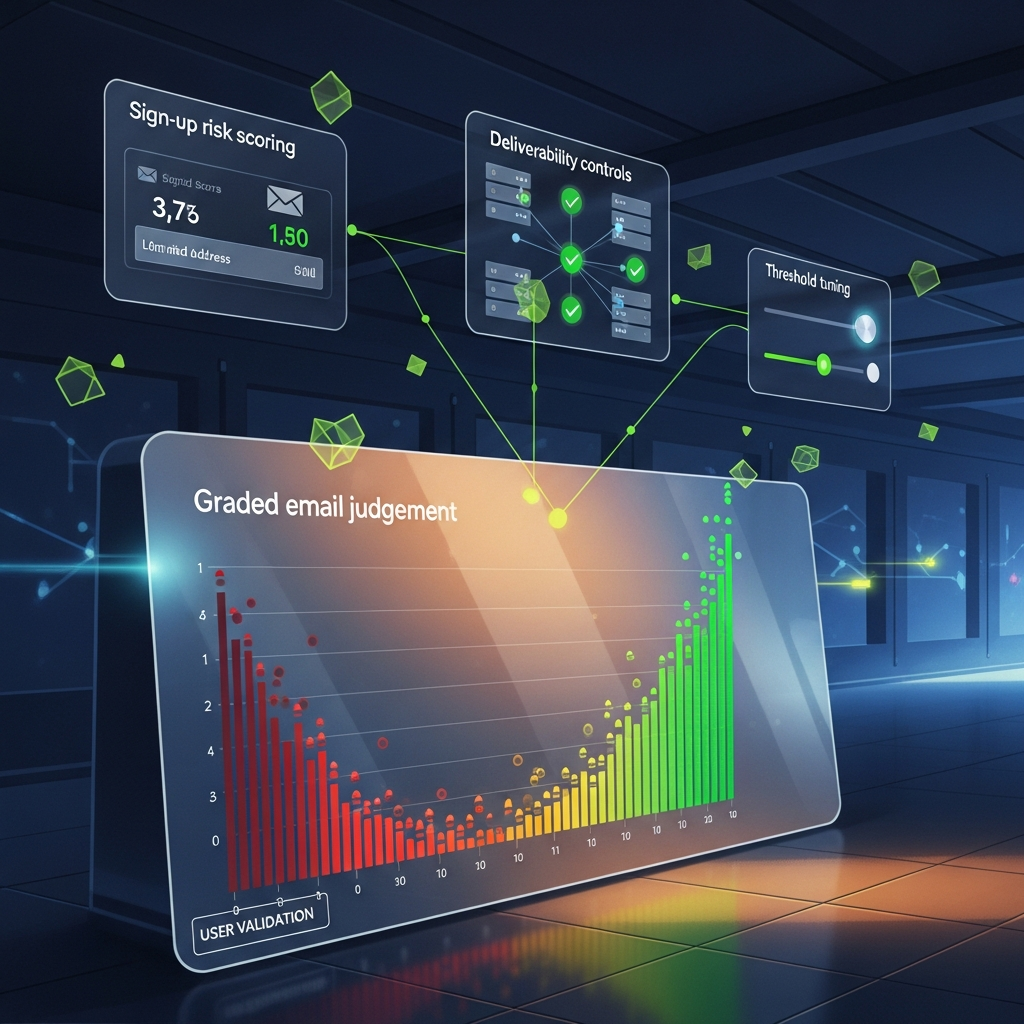

Real-time email quality decisions look simple from the outside. A visitor types an address, a form either accepts it or nudges for correction, and the team moves on. In practice, that tiny moment sits at the junction of acquisition cost, CRM hygiene, deliverability and compliance. Set the control too loose and rubbish enters the system. Set it too tight and you block good prospects who were ready to buy.

The useful question is not, “Which vendor gives us perfect answers?” There is no such thing. The better question is, “What measurement framework lets us tune strictness, prove outcomes and explain each decision later without a bit of theatre?” For UK teams using email validation controls, that is the difference between a clean system and an expensive faff.

Quick context

Last Thursday, in our studio between client calls, one acquisition form started rejecting addresses that had worked perfectly well a week earlier. The kettle was still hot, the IDE was open on the right-hand screen, and the culprit turned out not to be the form at all. It was a rule change upstream that treated a previously tolerated pattern as suspicious. That is the thing with email quality: it is not a static checklist. It is a living control surface.

The commercial stakes are measurable. Google and Yahoo tightened sender expectations during 2024 around authentication, complaint rates and unsubscribe handling. For a UK growth team, low-quality addresses are no longer just untidy data. They drag on sender reputation, support workload and reporting accuracy. That is why I favour EVE: Evaluate, Verify, Explain. Not a slogan. An operating model for deciding what happens at entry, what gets checked asynchronously, and what evidence is retained for review. If a platform cannot explain its decisions, it does not deserve your budget.

In practical terms, the framework has four jobs:

The useful shift is to stop treating validation as a yes-or-no gate. Think in bands instead: accept, challenge, suppress and review. That gives you room to improve quality without turning your forms into border control.

Step-by-step approach

The strongest frameworks are dull in the right places. They define inputs, thresholds, outcomes and review loops. That sounds unglamorous because it is. It also works.

1. Define the event taxonomy before tuning the rules. Start with explicit states: accepted instantly, accepted with warning, corrected by user, challenged for confirmation, suppressed and manually reviewed. Add one reason code to every state. Useful examples include syntax anomaly, DNS or MX failure, disposable domain risk, role-based address, mailbox uncertainty and duplicate in a recent window.

Keep the code list short enough that a marketer can understand it on a Monday morning with one cup of tea. As a practical limit, I would cap it at 12 top-level reason codes. Beyond that, reporting gets muddy and trust drains out of the room.
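As a minimal sketch of that taxonomy, the states and reason codes can live in two small enums. The names below (`DecisionState`, `ReasonCode`, `Decision`) and the extra `NONE` code are illustrative assumptions, not a prescribed schema; the values simply mirror the examples listed above.

```python
from dataclasses import dataclass
from enum import Enum


class DecisionState(Enum):
    ACCEPTED = "accepted_instantly"
    ACCEPTED_WITH_WARNING = "accepted_with_warning"
    CORRECTED_BY_USER = "corrected_by_user"
    CHALLENGED = "challenged_for_confirmation"
    SUPPRESSED = "suppressed"
    MANUAL_REVIEW = "manually_reviewed"


class ReasonCode(Enum):
    NONE = "none"  # assumption: explicit code for "nothing flagged"
    SYNTAX_ANOMALY = "syntax_anomaly"
    DNS_MX_FAILURE = "dns_mx_failure"
    DISPOSABLE_DOMAIN = "disposable_domain_risk"
    ROLE_BASED = "role_based_address"
    MAILBOX_UNCERTAIN = "mailbox_uncertainty"
    RECENT_DUPLICATE = "duplicate_in_recent_window"


MAX_REASON_CODES = 12  # the practical cap suggested above

# Fail loudly at import time if the code list grows past the cap.
assert len(ReasonCode) <= MAX_REASON_CODES


@dataclass(frozen=True)
class Decision:
    """Every decision carries exactly one state and one reason code."""
    state: DecisionState
    reason: ReasonCode
```

Putting the cap in code means the list cannot creep past 12 without someone noticing in review.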

2. Separate deterministic checks from probabilistic checks. Deterministic checks are the boring essentials: syntax, domain formatting, MX record presence, known typo correction and duplicate detection. Probabilistic checks are where vendors start inferring risk. Treat those differently. A deterministic failure may justify an immediate block. A probabilistic signal should usually trigger a challenge or a lower-confidence path, not a hard rejection.

This is where false positive reduction matters. Blocking a valid address because a model felt nervous is a costly own goal, especially on paid traffic. Between 14:00 and 16:30 last month, I tried a stricter scoring rule on a lead form and saw accepted submissions drop by 6.8%. Bounce rate improved, yes, but qualified demo bookings fell by 4.1%. Fixed it with a simple hack: deterministic failures still blocked, but uncertain scores moved to a confirmation step. Conversion recovered within 48 hours while list quality stayed materially better than baseline. Trade-off, observed and tested, not guessed.
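The routing described above can be sketched roughly as follows. This is an illustration under assumptions: the `decide` function, the loose syntax pattern, the `has_mx` flag (supplied by whatever DNS lookup you already run) and the 0.6 challenge threshold are all hypothetical, not the rule that ran on the form in question.

```python
import re
from typing import Optional

# Deliberately loose pattern: one "@", no whitespace, a dot in the domain.
# It is not a full RFC 5322 validator.
SYNTAX = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def decide(email: str, has_mx: bool, risk_score: Optional[float],
           challenge_at: float = 0.6) -> tuple[str, str]:
    """Return (action, reason_code) for a single submission."""
    # Deterministic failures: hard block.
    if not SYNTAX.match(email):
        return ("block", "syntax_anomaly")
    if not has_mx:
        return ("block", "dns_mx_failure")
    # Probabilistic signal: never a hard block, only a confirmation step.
    if risk_score is not None and risk_score >= challenge_at:
        return ("challenge", "mailbox_uncertainty")
    return ("accept", "none")
```

The point of the shape is that no value of `risk_score` can produce a block; only the deterministic checks can.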

3. Set thresholds by acquisition path, not globally. This is the bit many teams skip. Newsletter forms, gated content, checkout, partner referrals and sales demo requests do not deserve identical tolerance levels. A checkout form may justify a softer challenge because the user already has purchase intent. A free resource page fed by paid social usually needs tighter controls because typo-heavy and throwaway entries are more common.

The trade-off is obvious. Higher strictness can improve downstream hygiene while shaving the top off conversion. That is why you measure at path level, not in aggregate. Aggregate reporting hides bad bargains.

4. Tie each decision to downstream outcomes. A validation result becomes useful only when matched against what happened next. Did the address open the onboarding email? Did the welcome message hard bounce? Was there a spam complaint within seven days? Did the lead reach MQL or booked meeting status? Your framework should connect entry quality to complaint and bounce outcomes, not just form completion.
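One way to make that link concrete is to join accepted decisions to later events and report rates per acquisition path. The sketch below assumes a hypothetical shape for both inputs (a list of decision dicts and a mapping from decision id to a set of event names); the seven-day complaint window would be applied when building `outcomes` and is left out here.

```python
from collections import defaultdict


def outcomes_by_path(decisions, outcomes):
    """Per-path hard bounce and complaint rates for accepted addresses.

    decisions: iterable of dicts with "id", "path" and "state" keys.
    outcomes:  dict mapping decision id to a set of downstream event names.
    """
    counts = defaultdict(lambda: {"accepted": 0, "hard_bounce": 0, "complaint": 0})
    for decision in decisions:
        if decision["state"] != "accept":
            continue
        row = counts[decision["path"]]
        row["accepted"] += 1
        events = outcomes.get(decision["id"], set())
        row["hard_bounce"] += int("hard_bounce" in events)
        row["complaint"] += int("complaint" in events)
    # A path only appears in counts once it has at least one accepted address,
    # so the divisions below cannot hit zero.
    return {
        path: {
            "accepted": row["accepted"],
            "hard_bounce_rate": row["hard_bounce"] / row["accepted"],
            "complaint_rate": row["complaint"] / row["accepted"],
        }
        for path, row in counts.items()
    }
```

Reporting by path rather than in total is what keeps a bad bargain on one form from hiding behind a good average.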

For most UK teams, I would track at least these metrics every week:

5. Keep the explanation layer human-readable. This sounds pedestrian until someone from legal, RevOps or leadership asks why a lead was blocked on 12 February. You need a concise explanation string, a machine-readable reason code, decision timestamp, lawful scoring inputs and the rule version used at the time. Under the UK GDPR accountability principle, which the ICO oversees, organisations should be able to demonstrate how decisions are made and governed. You do not need to retain every raw signal forever. You do need enough to explain the action taken.

Privacy-preserving architecture helps here. Log decision artefacts, not unnecessary personal payloads. Hash what can be hashed. Keep retention limits explicit. Clean systems are easier to defend and easier to ship.
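A decision artefact along those lines might look like the sketch below. The field names, the `decision_record` helper and the choice of a keyed SHA-256 hash (HMAC) are assumptions for illustration; note that a keyed hash of an email address is still pseudonymised personal data rather than anonymous data, so retention limits continue to apply.

```python
import hashlib
import hmac
from datetime import datetime, timezone


def email_fingerprint(email: str, secret: bytes) -> str:
    """Keyed hash of the normalised address, so the raw email is never logged."""
    normalised = email.strip().lower()
    return hmac.new(secret, normalised.encode("utf-8"), hashlib.sha256).hexdigest()


def decision_record(email, state, reason_code, explanation, rule_version,
                    secret, now=None):
    """Build the minimal evidence needed to explain a decision later."""
    decided_at = now or datetime.now(timezone.utc)
    return {
        "email_hmac": email_fingerprint(email, secret),
        "state": state,
        "reason_code": reason_code,          # machine-readable
        "explanation": explanation,          # human-readable
        "rule_version": rule_version,        # the rules in force at the time
        "decided_at": decided_at.isoformat(),
    }
```

With the same secret, the same address always yields the same fingerprint, so a reviewer can look up the decision for a known address without the log ever storing it in the clear.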

- Low-risk path, such as checkout or account activation: accept on medium confidence, challenge only on clear anomalies.

- Medium-risk path, such as organic lead capture: accept on stronger confidence, challenge uncertain addresses, block deterministic failures.

- High-risk path, such as incentivised campaigns or affiliate traffic: stricter thresholds, more confirmation steps, faster suppression of repeat anomalies.
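The three path bands above can be written down as a small policy table. Everything numeric here is a placeholder to be tuned against your own data, and the names (`PathPolicy`, `POLICIES`, `route`) are hypothetical. The sketch also assumes deterministic failures block on every path, which you may want to soften for checkout.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PathPolicy:
    accept_at: float     # minimum confidence to accept without a challenge
    suppress_after: int  # repeat anomalies tolerated before suppression


# Placeholder numbers: stricter acceptance and faster suppression as risk rises.
POLICIES = {
    "low": PathPolicy(accept_at=0.5, suppress_after=5),      # checkout, activation
    "medium": PathPolicy(accept_at=0.7, suppress_after=3),   # organic lead capture
    "high": PathPolicy(accept_at=0.85, suppress_after=2),    # incentivised, affiliate
}


def route(path_risk: str, confidence: float, repeat_anomalies: int,
          deterministic_failure: bool = False) -> str:
    policy = POLICIES[path_risk]
    if deterministic_failure:
        return "block"
    if repeat_anomalies >= policy.suppress_after:
        return "suppress"
    if confidence >= policy.accept_at:
        return "accept"
    return "challenge"
```

The same 0.6 confidence is accepted on a low-risk path and challenged on a high-risk one, which is the whole argument for setting thresholds by path.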

Pitfalls to avoid

The first pitfall is treating validation vendors as oracles. They are input sources, not truth machines. On 7 March 2026, Yahoo reported on Alphabet facing a Gemini lawsuit while expanding healthcare AI work with CVS. The specifics of that case sit outside email capture, but the operational lesson lands squarely here: when automated decisions affect business outcomes, explainability and governance stop being optional nice-to-haves.

The second pitfall is using one success metric. Teams often celebrate lower bounce rates while ignoring that they strangled acquisition. Or they celebrate higher form completion while quietly damaging sender reputation. Automation without measurable uplift is theatre, not strategy.

A third mistake is ignoring context. UK B2B leads still use role addresses in sectors like operations, procurement and facilities. Blanket suppression of addresses such as info@ or sales@ can remove noise, but it can also cut off a legitimate route into a buying committee. The right treatment is usually path-specific. For a webinar list, challenge role addresses. For a facilities-services enquiry form, permit them but segment them for follow-up. Fancy that, context matters.
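A path-specific rule for role addresses can be very small. In this sketch the list of role local parts, the path names and the default of challenging on unlisted paths are all assumptions; the two mapped treatments follow the webinar and facilities examples above.

```python
from typing import Optional

# Illustrative list only; extend it from your own data.
ROLE_LOCAL_PARTS = {"info", "sales", "admin", "support", "office"}

ROLE_TREATMENT = {
    "webinar": "challenge",
    "facilities_enquiry": "accept_and_segment",
}


def role_address_treatment(email: str, path: str) -> Optional[str]:
    """Return the treatment for a role address, or None if it is not one."""
    local_part = email.split("@", 1)[0].strip().lower()
    if local_part not in ROLE_LOCAL_PARTS:
        return None
    # Assumption: unlisted paths default to a challenge, never a silent block.
    return ROLE_TREATMENT.get(path, "challenge")
```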

The fourth pitfall is slow feedback. If you tune rules monthly but traffic quality changed last week, you are steering by the rear-view mirror. I prefer a light weekly review with monthly threshold changes unless an anomaly is obvious. On one campaign, a disposable-domain spike appeared within three days of a creative refresh. A same-week adjustment reduced suspect submissions by 18% while preserving booked calls within a 1% margin. That is a trade-off you can live with.

Last, do not bury the UX. Real users mistype on mobile. They use autofill. They swap letters. The system should help before it punishes. “Did you mean gmail.com?” is useful. “Invalid email” is lazy.
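A "Did you mean?" prompt does not need a vendor. As a rough sketch, Python's standard `difflib.get_close_matches` can compare the typed domain against a short list of common ones. The domain list and the 0.8 similarity cutoff are assumptions to tune, and the suggestion should be offered to the user, never applied silently.

```python
import difflib
from typing import Optional

# Illustrative list; in practice, use the domains most common in your own data.
COMMON_DOMAINS = ["gmail.com", "yahoo.com", "outlook.com", "hotmail.com", "icloud.com"]


def suggest_correction(email: str, cutoff: float = 0.8) -> Optional[str]:
    """Return a suggested address if the domain looks like a near-miss typo."""
    local_part, sep, domain = email.strip().rpartition("@")
    if not sep or not local_part or not domain:
        return None  # not shaped like an address; leave it to the syntax check
    domain = domain.lower()
    if domain in COMMON_DOMAINS:
        return None  # already a known domain, nothing to suggest
    matches = difflib.get_close_matches(domain, COMMON_DOMAINS, n=1, cutoff=cutoff)
    return f"{local_part}@{matches[0]}" if matches else None
```

For example, `suggest_correction("jo@gmial.com")` returns `"jo@gmail.com"`, which the form can show as a one-tap correction.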

Checklist you can reuse

If you need something operational rather than philosophical, use this checklist. It belongs beside the sprint board, not framed on a wall.

If you are building from scratch, here is a sensible first-pass operating standard for EVE:

The trade-off here is speed versus certainty. A checkout team may accept more uncertainty to protect revenue. A high-volume lead generation team may choose tighter controls to protect list quality. Neither is inherently right. The measurement framework tells you whether the choice pays off.

- Define acceptance, challenge, suppression and review states before launch.

- Attach one reason code and one human-readable explanation to every decision.

- Use deterministic failures for hard blocks; use probabilistic risk for challenge flows.

- Set strictness by acquisition path, not as a single global threshold.

- Measure accepted rate, correction rate, hard bounces, complaints and activation outcomes together.

- Track overturns from manual review to spot over-blocking.

- Review rules weekly; change thresholds monthly unless anomalies justify faster action.

- Store rule version, timestamp and minimal decision evidence for audit-ready decisions.

- Limit retained personal data; prefer hashes and short retention windows where feasible.

- Test UX copy on mobile, particularly typo correction prompts and confirmation steps.
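The overturn item in the checklist reduces to one ratio. A minimal sketch, assuming each manual review is recorded as a hypothetical `(original_action, reviewed_action)` pair:

```python
def overturn_rate(reviews) -> float:
    """Share of blocked or suppressed decisions that manual review reversed."""
    restrictive = [(orig, final) for orig, final in reviews
                   if orig in {"block", "suppress"}]
    if not restrictive:
        return 0.0
    overturned = sum(1 for _, final in restrictive if final == "accept")
    return overturned / len(restrictive)
```

A rate that climbs week on week is an early sign that a rule is over-blocking.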

Closing guidance

Good email decisioning is less about catching everything and more about making sensible, explainable trade-offs with evidence attached. Build a system that distinguishes hard facts from soft signals. Tune by acquisition path. Watch downstream outcomes, not vanity metrics. Keep the evidence trail readable enough that product, compliance and marketing can inspect the same event and reach the same conclusion.

If you already have email validation controls in place, do not start with a grand rebuild. Pick one acquisition path, tune EVE strictness, and compare conversion, hard bounce and activation outcomes over the next two weeks. If you want a practical place to start, Kosmos can help your growth team test one path, tighten what works and leave the rest alone until the numbers justify it. Cheers, that is usually where the real improvement starts.