
Overview

Real-time email quality decisions are no longer a tidy hygiene task tucked behind a form field. They now sit in the middle of acquisition economics, CRM integrity, compliance scrutiny and the daily tug-of-war between growth and operations. For UK teams, the practical question is not whether to use email validation controls, but how strict to make them at the point of capture without quietly harming conversion.

My short version: most teams do not have a validation problem, they have a control-design problem. Treat EVE, the real-time decision layer for email verification and evaluation, as a decision system, not a magic filter. Define the risk you are trying to reduce, log why a decision was made, and test strictness on one acquisition path before rolling it across the estate. Cleaner data is useful. Blocking real buyers is expensive. That trade-off is the whole game.

Context for UK teams

Last Thursday, in a meeting room just off Old Street, a growth lead showed me a familiar pattern. Paid social leads looked healthy at first glance, yet pipeline quality was wobbling. The smell of burnt coffee in the corner did not improve anyone’s mood. Form completion was fine, but downstream engagement was thin and support staff were cleaning obviously weak addresses out of the CRM. That is when I realised, again, that the useful discussion is not “valid or invalid”. It is “what level of delivery risk are we prepared to accept on this path, at this moment, for this commercial objective?”

Email sits upstream of too many systems to treat casually. A poor address can damage sender reputation, waste sales effort and muddy attribution. A legitimate but unusual address can be rejected by over-strict controls, cutting off a perfectly real prospect. One side hurts deliverability quality; the other hurts conversion and customer experience. Fancy that, systems have consequences.

There is a broader signal in the market as well. On 7 March 2026, Yahoo Finance reported on the Cintas and UniFirst talks, highlighting the premium attached to operational control when conditions become less predictable. Different sector, same lesson: when uncertainty rises, well-designed controls become economically valuable. On the same day, Yahoo Finance reported that Alphabet faced a Gemini lawsuit while deepening healthcare AI work with CVS, which is a useful reminder that automated decisions attract scrutiny when they cannot be explained. If a platform cannot explain its decisions, it does not deserve your budget.

For UK teams, the safest architecture is privacy-preserving and explicit about evidence. Store the decision outcome, confidence band and reason codes. Avoid collecting more personal data than the workflow genuinely needs. That lines up with the ICO’s accountability and data minimisation expectations under UK GDPR, and it avoids turning a small quality-control project into a compliance faff later.

What is changing in real-time validation

The first change is speed. More teams are moving email checks from batch cleansing into live forms, lead routing and sign-up journeys. That changes the cost of being wrong. In a nightly batch, a false block is annoying. In a real-time flow, a false block can kill a campaign before your kettle has boiled.

The second change is expectation. Growth teams increasingly want one control plane to cover newsletter sign-up, demo request, checkout, account creation and partner referral. Efficient on paper, yes. In practice, a bit of a faff. These paths have different risk tolerances, so the same strictness setting should not govern all of them. A disposable address on a gated white paper may be mildly inconvenient; on contract-critical onboarding it may be a hard stop; on a retail receipt flow it may justify a softer prompt instead.
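
To make the per-path idea concrete, here is a minimal sketch of a policy table for one signal, the disposable address. The path names, the `Action` enum and the chosen default are my assumptions for illustration, not a vendor setting.

```python
from enum import Enum


class Action(Enum):
    ACCEPT = "accept"
    PROMPT = "prompt"
    FLAG = "flag"
    BLOCK = "block"


# Hypothetical acquisition paths, each with its own response to the same signal.
DISPOSABLE_POLICY = {
    "gated_white_paper": Action.FLAG,      # mildly inconvenient: keep it, label it
    "contract_onboarding": Action.BLOCK,   # contract-critical: hard stop
    "retail_receipt": Action.PROMPT,       # softer prompt instead of rejection
}


def action_for_disposable(path: str) -> Action:
    """Look up the response for a disposable address on a given path."""
    # Assumption: unknown paths are flagged for review rather than blocked.
    return DISPOSABLE_POLICY.get(path, Action.FLAG)


print(action_for_disposable("retail_receipt").value)
```

The point of the table is that strictness becomes a reviewable setting per path rather than one global switch.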

The third change is governance pressure. The ICO has long been clear that organisations should collect only what they need and be able to justify automated processing choices. The mechanics of email risk scoring are not spelled out for marketers in one neat clause, but the direction is obvious enough: if you automate a decision that affects access or treatment, be ready to explain the basis. In plain English, “the model said so” is wearing thin.

There is also a technical shift in vendor packaging. Older tools often reduced everything to syntax and basic domain checks. Newer systems blend domain reputation, typo detection, catch-all assessment, mailbox behaviour signals and historical patterns. Some of that is useful. Some of it is theatre with a shiny dashboard attached. Cross-source corroboration matters here. Vendor scores, CRM bounce data and your own form analytics should broadly agree before you change production thresholds.

How sound controls actually work

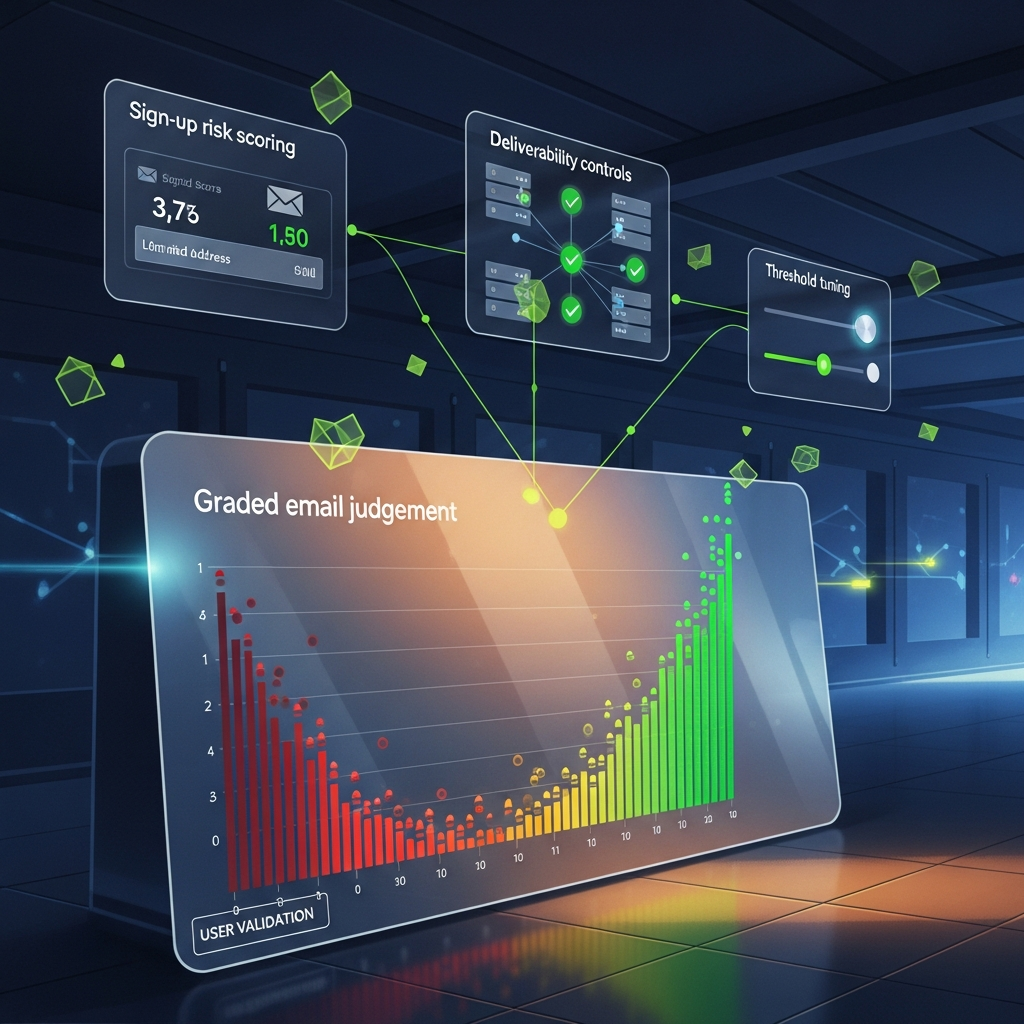

A reliable EVE model, meaning a real-time decision layer for email verification and evaluation, should be built as a sequence of small, explainable judgements rather than one dramatic yes or no. Start with certainty checks: syntax, domain existence, obvious typographic errors and malformed structures. Then move to risk indicators: disposable-provider signals, role-based addresses where inappropriate, suspicious subdomain patterns, catch-all behaviour and historical delivery anomalies. Finally, decide the intervention: accept, prompt, flag or block.
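
The sequence above can be sketched roughly as follows. This assumes the signals (domain resolution, disposable-provider match and so on) have already been gathered by whatever service you use; the `Signals` fields, reason-code strings and the deliberately loose syntax pattern are hypothetical.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

# Deliberately loose: one "@", no whitespace, a dot in the domain part.
SYNTAX = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


@dataclass
class Signals:
    domain_resolves: bool
    likely_typo_of: Optional[str] = None
    disposable: bool = False
    role_based: bool = False
    catch_all: bool = False


@dataclass
class Decision:
    action: str            # accept, prompt, flag or block
    reasons: List[str]


def decide(email: str, s: Signals) -> Decision:
    # Stage 1: certainty checks.
    if not SYNTAX.match(email):
        return Decision("block", ["syntax_invalid"])
    if s.likely_typo_of:
        return Decision("prompt", ["likely_typo:" + s.likely_typo_of])
    if not s.domain_resolves:
        return Decision("block", ["domain_unresolved"])
    # Stage 2: risk indicators, collected rather than acted on one by one.
    reasons = []
    if s.disposable:
        reasons.append("disposable_provider")
    if s.role_based:
        reasons.append("role_based_address")
    if s.catch_all:
        reasons.append("catch_all_domain")
    # Stage 3: choose the intervention.
    return Decision("flag", reasons) if reasons else Decision("accept", [])
```

Checking for a likely typo before the resolution check is a design choice in this sketch: a mistyped domain often fails to resolve, and a prompt is kinder than a block.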

That middle ground is where most teams improve outcomes. Instead of hard-rejecting a questionable address, you might prompt the user with a confirmation message, suggest a likely typo correction, or permit submission while flagging the record for downstream review. This is where false positive reduction stops being a slide-deck phrase and becomes a commercial lever.

Between 10:00 and 12:30 one Tuesday, I tried a form flow where typo-correction prompts appeared only when domain confidence passed a fixed threshold and the edit distance was one character. The first pass was clumsy and flagged a few valid university domains; I fixed that with a simple allowlist and a sector-specific domain dictionary. Support noise dropped, and conversion held steady. Nothing glamorous, but that is how you build something worth shipping.
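
A minimal sketch of that kind of rule, not the exact flow I tested: the domain lists, the `0.8` threshold and the meaning of `confidence` are all assumptions, and the caller is expected to supply the confidence value from its own source.

```python
from typing import Optional

# Hypothetical dictionary of common domains worth suggesting.
KNOWN_DOMAINS = {"gmail.com", "outlook.com", "hotmail.com"}
# Legitimate domains that sit one edit from a known one and must never be "corrected".
ALLOWLIST = {"ymail.com"}


def is_one_edit_apart(a: str, b: str) -> bool:
    """True if exactly one insertion, deletion or substitution turns a into b."""
    if a == b or abs(len(a) - len(b)) > 1:
        return False
    if len(a) > len(b):
        a, b = b, a  # make a the shorter (or equal-length) string
    i = 0
    while i < len(a) and a[i] == b[i]:
        i += 1
    if len(a) == len(b):
        return a[i + 1:] == b[i + 1:]   # one substitution
    return a[i:] == b[i + 1:]           # one insertion into a


def suggest_domain(domain: str, confidence: float, threshold: float = 0.8) -> Optional[str]:
    domain = domain.lower()
    if domain in ALLOWLIST or domain in KNOWN_DOMAINS:
        return None
    if confidence < threshold:
        return None
    matches = sorted(d for d in KNOWN_DOMAINS if is_one_edit_apart(domain, d))
    # Only prompt when the suggestion is unambiguous.
    return matches[0] if len(matches) == 1 else None
```

Note that a transposition such as `gmial.com` counts as two edits under this definition, so it would not trigger a prompt. Whether to widen the rule is exactly the sort of strictness choice worth testing on one path first.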

The trade-off is clear. The more aggressively you intercept edge cases, the cleaner the list may look at capture, but the more likely you are to annoy genuine users. High-friction controls can improve top-line data neatness while quietly worsening revenue efficiency. Low-friction controls can lift form completion while loading cost into sales operations and sender reputation. There is no universal right answer, which is why strictness should be attached to acquisition path, not brand ego.

For implementation, insist on human-readable reason codes. “Rejected due to high composite anomaly score” is not much use. “MX missing at decision time, domain newly observed, pattern matched disposable-provider cluster” is better. Better still, log the timestamp, decision version, evidence group and fallback behaviour. That is what makes decisions genuinely audit-ready.
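
As an illustration, a decision record along those lines might look like this. The field names, version label and reason-code strings are hypothetical; note that the record carries an internal identifier rather than the address itself, in keeping with data minimisation.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class DecisionLog:
    record_id: str            # internal reference, not the email address
    action: str               # accept, prompt, flag or block
    confidence_band: str
    reason_codes: List[str]
    decision_version: str
    evidence_group: str
    fallback: str
    decided_at: str           # ISO 8601 timestamp in UTC


def to_log_line(entry: DecisionLog) -> str:
    """Serialise one decision as a single JSON line for export or audit."""
    return json.dumps(asdict(entry), sort_keys=True)


entry = DecisionLog(
    record_id="lead-0001",
    action="block",
    confidence_band="high",
    reason_codes=[
        "mx_missing_at_decision_time",
        "domain_newly_observed",
        "disposable_provider_cluster",
    ],
    decision_version="rules-v1",
    evidence_group="dns_and_provider",
    fallback="none",
    decided_at=datetime.now(timezone.utc).isoformat(),
)
print(to_log_line(entry))
```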

Implications for growth, ops and compliance

For growth teams, validation strictness is part of channel strategy. Paid social, affiliate, organic content, events and partner co-marketing do not produce identical address quality. A single global rule tends to overfit one path and underperform elsewhere. The useful question is whether a control removes low-value noise more efficiently than it deters high-intent humans.

For operations, the issue is workload design. If a real-time control simply pushes uncertainty downstream without labels, ops inherits the mess. If it routes uncertain records into a review state with clear reason codes, ops can act quickly. I have seen teams save hours each week by separating “likely typo”, “domain unresolved” and “high-risk provider” into distinct queues rather than one gloomy bucket called “bad emails”. Taxonomy helps. Not exciting, but useful.
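
A small sketch of that queue split, assuming each record already carries reason codes. The code strings, the record shape and the fallback queue name are my own placeholders.

```python
from collections import defaultdict
from typing import Dict, List

# Hypothetical reason codes mapped to the three queues named above.
QUEUE_BY_REASON = {
    "likely_typo": "likely typo",
    "domain_unresolved": "domain unresolved",
    "disposable_provider": "high-risk provider",
}
FALLBACK_QUEUE = "unclassified"


def route(records: List[dict]) -> Dict[str, List[str]]:
    """Group record ids by the first reason code that maps to a queue."""
    queues = defaultdict(list)
    for record in records:
        queue = FALLBACK_QUEUE
        for code in record["reason_codes"]:
            if code in QUEUE_BY_REASON:
                queue = QUEUE_BY_REASON[code]
                break
        queues[queue].append(record["record_id"])
    return dict(queues)
```

Anything that lands in the fallback queue is a prompt to extend the taxonomy, not a licence to revive the gloomy bucket.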

For compliance and governance, explainability is no longer optional. Yahoo Finance’s 7 March 2026 reporting on litigation pressure around generative AI is not about email forms directly, but it does show the direction of travel. Opaque decision systems create legal and reputational drag well before any technical upside is fully realised. The trade-off is speed versus defensibility. Ship a black-box checker quickly, and you may move faster this quarter. Build a transparent control framework, and you are slower at first but far less exposed when a client, regulator or internal auditor asks how a lead was treated.

There is also a sender-reputation angle that gets missed. Poor capture controls do not merely let rubbish into the CRM; they can affect future inbox placement. Google and Yahoo tightened expectations for bulk senders in 2024 around authentication, one-click unsubscribe and spam complaint rates. Those requirements do not prescribe one validation method, but they do reinforce the value of upstream list quality. Cleaner capture is not vanity. It protects reach later.

How to test without creating fresh faff

Start with one acquisition path, not all of them. That is the founder answer because it keeps the learning loop short and avoids turning a sensible control project into a six-week committee hobby. Pick a path with measurable volume and visible commercial impact, such as paid lead generation for demo requests. Define baseline metrics for 30 to 60 days: form completion rate, accepted submissions, suspected typo rate, hard-bounce rate, sales acceptance rate and time spent on manual cleanup.
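
For the baseline itself, the arithmetic is simple enough to pin down in a few lines. The denominators below are assumptions (for instance, hard bounces are measured against submissions), so agree your own definitions before comparing periods; the numbers in the usage line are made up purely to show the calculation.

```python
from typing import Dict


def rate(numerator: float, denominator: float) -> float:
    """Safe ratio that returns 0.0 when there is no volume yet."""
    return numerator / denominator if denominator else 0.0


def baseline(form_starts: int, submissions: int, hard_bounces: int,
             sales_accepted: int, cleanup_minutes: float) -> Dict[str, float]:
    return {
        "form_completion_rate": rate(submissions, form_starts),
        "hard_bounce_rate": rate(hard_bounces, submissions),
        "sales_acceptance_rate": rate(sales_accepted, submissions),
        "cleanup_minutes_per_submission": rate(cleanup_minutes, submissions),
    }


# Illustrative figures only, not results from a real campaign.
print(baseline(form_starts=1000, submissions=400, hard_bounces=20,
               sales_accepted=100, cleanup_minutes=200))
```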

Next, map decision states before choosing thresholds. A practical four-state model works well for most UK teams: accept, prompt, flag, block. Attach explicit reason categories and a review owner. If your vendor cannot export granular decision logs, that is a warning sign. Automation without measurable uplift is theatre, not strategy.

Then test strictness in controlled increments. For example, run one variant that blocks only clearly malformed and non-resolving domains, and another that also intervenes on disposable or high-risk patterns. Keep the test running long enough to capture delayed outcomes, usually at least two business cycles rather than two afternoons and a hopeful Slack message. Watch conversion shifts, but also downstream quality measures.
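
One way to sketch the two variants is as rule tables plus a stable assignment function, so the same visitor always sees the same strictness. The reason codes, the variant labels and the idea of keying on a session identifier are assumptions for illustration.

```python
import hashlib

# Variant A blocks only malformed and non-resolving addresses.
# Variant B also intervenes on disposable and high-risk patterns.
VARIANTS = {
    "A": {"syntax_invalid": "block", "domain_unresolved": "block"},
    "B": {
        "syntax_invalid": "block",
        "domain_unresolved": "block",
        "disposable_provider": "prompt",
        "high_risk_pattern": "flag",
    },
}


def assign_variant(session_id: str) -> str:
    """Deterministic split: the same session id always maps to the same variant."""
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    return "A" if digest[0] % 2 == 0 else "B"


def action_for(variant: str, reason_code: str) -> str:
    # Anything a variant does not list is accepted without intervention.
    return VARIANTS[variant].get(reason_code, "accept")
```

Hashing rather than random assignment keeps the split reproducible, which helps when you later join decisions to bounce and sales outcomes.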

Document caveats alongside findings. SMTP-style checks can be inconsistent. Catch-all domains can hide real users. Corporate security gateways can make healthy domains look odd. New businesses often use fresh domains with limited history. When sources conflict, default to the least harmful intervention that still preserves data quality.
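
One reading of that last rule, sketched under a loud assumption: when sources disagree, the record is let through but flagged for review, because that adds no friction for the user while keeping the doubt visible. Your own least harmful option may differ by path, hence the `on_conflict` parameter.

```python
from typing import List

VALID_ACTIONS = ("accept", "prompt", "flag", "block")


def resolve(proposals: List[str], on_conflict: str = "flag") -> str:
    """Return the agreed action, or the conflict fallback when sources disagree."""
    if not proposals:
        raise ValueError("at least one proposal is required")
    unknown = set(proposals) - set(VALID_ACTIONS)
    if unknown:
        raise ValueError("unknown actions: " + ", ".join(sorted(unknown)))
    if len(set(proposals)) == 1:
        return proposals[0]
    return on_conflict


# Two hypothetical sources disagree, so the record is flagged rather than blocked.
print(resolve(["accept", "block"]))
```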

Finally, build a review rhythm. Monthly is sensible for most teams; weekly if volume is high or channel mix changes often. Bring growth, ops and compliance into the same half-hour. One screen, one set of metrics, one cup of tea each if possible. Ask three questions: which rules are catching genuine problems, where are we frustrating real people, and which decisions can we explain clearly?

What good looks like after 90 days

After roughly 90 days, a healthy programme does not usually produce fireworks. It produces fewer avoidable surprises. Hard-bounce rates should trend down. Manual cleanup should shrink. Sales should see fewer obviously unusable records. Conversion should remain stable, or improve if typo prompts are well designed. Most importantly, internal debates become sharper because the evidence is better. Instead of arguing about whether validation is “working”, teams can compare specific rule groups against specific outcomes.

Good programmes also become more selective about where they use strict controls. Not every path deserves the same friction. High-intent B2B demo requests may justify stronger real-time scrutiny than low-commitment newsletter sign-ups. Partner channels may need bespoke rules because list provenance differs. That selectivity is not inconsistency. It is simply systems thinking applied properly.

The enduring trade-off is precision versus flexibility. Tighter controls can raise immediate confidence in list quality, but brittle rules age badly as user behaviour, mailbox providers and acquisition channels shift. More flexible controls adapt better, but they demand stronger monitoring and cleaner governance. My bias is to choose explainable flexibility, because it keeps the machine honest and the humans in charge.

If you are reviewing email validation controls for real-time quality decisions, start small and make the decision trail visible. Pick one acquisition path, tune EVE strictness, measure the lift and the friction, and keep only the rules you can explain with a straight face. If you fancy a practical test rather than another strategy deck, Kosmos can help your growth team tune EVE strictness on one acquisition path and prove what genuinely improves deliverability quality without frustrating real customers. Cheers.