Decision-makers do not need another glossy success story. They need proof that a campaign was delivered as planned, what shifted in market response, and whether the outcome can survive contact with operations. That is the real job of a strong campaign case study in the UK: turn activity into evidence, then turn evidence into the next commercial move.

What you are solving

Most campaign write-ups fail in one of two ways. They either over-index on the idea and leave out what was actually delivered, or they list outputs without proving commercial movement. Neither helps a reader decide whether your method is transferable.

A useful case study solves for three audiences at the same time:

- Commercial leaders, who want to know whether revenue, pipeline, qualified demand or retention moved.

- Operational teams, who need to see how delivery held together across timing, approvals, tracking and dependencies.

- Procurement or governance stakeholders, who look for evidence that the approach was controlled, measurable and compliant.

This is where many brands wobble. A strategy that cannot survive contact with operations is not strategy; it is branding copy. If tracking was patched in late, approvals delayed launch, or audience exclusions were not in place, that belongs in the story. Not because it makes the work look weaker, but because it makes the evidence more credible.

The current market rewards this level of honesty. Data governance is now part of performance, and vague reporting is tolerated less than it was. Yahoo Finance reported on 6 March 2026 that Reddit was weighing a UK children’s data fine alongside a healthcare advertising opportunity. That pairing captures the live reality: growth options now sit beside scrutiny of compliance and execution quality.

| Case study element | What to include | Why it matters |
|---|---|---|
| Starting point | Baseline performance, date range, channel context | Prevents inflated before-and-after claims |
| Business objective | Named target such as leads, bookings, cost efficiency or share of search | Keeps the story commercially grounded |
| Delivery evidence | Assets launched, audiences reached, workflows completed, timings met | Shows execution quality, not just ambition |
| Measured outcome | Quantified shift with method stated | Lets a reader judge causality with care |
| Constraint | Budget, legal, platform, timing or data limitation | Builds trust and transferability |

Practical method

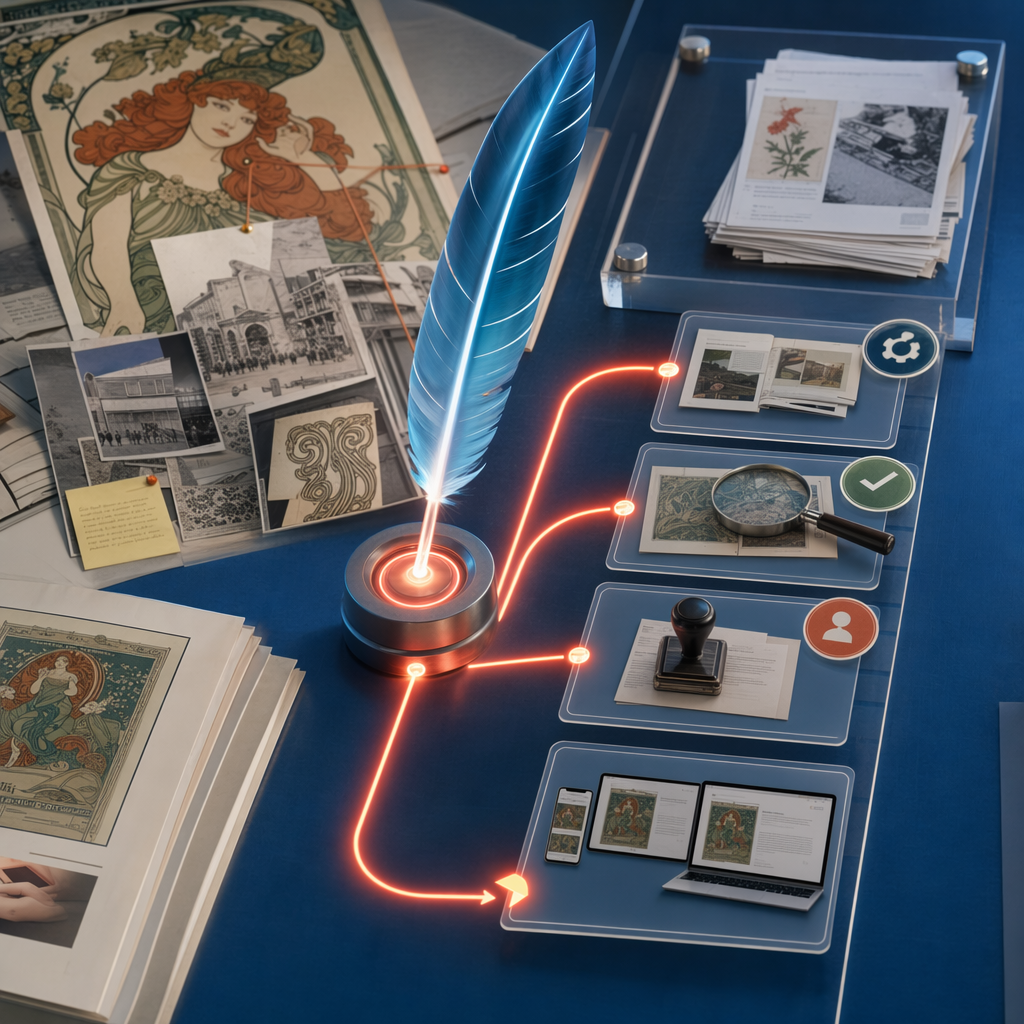

The cleanest format is a five-part sequence: context, objective, method, evidence, implication. It sounds plain because it should. Plain writing tends to outperform polished theatre when a prospect is trying to evaluate risk.

Here is a practical template for building delivery evidence into the body of the piece.

- Set the market context. Name the commercial moment. For example, a brand may be entering a crowded category, responding to rising acquisition costs, or repairing weak conversion. Add a date range.

- Define the decision. Show the option set. Did the team choose to prioritise one audience over another, move budget, or simplify the launch sequence to protect data quality?

- Show the build. List what was actually delivered: landing pages, audience segmentation, CRM routing, creative variants, reporting setup, governance checks. This is often the missing middle.

- Report the outcome against a baseline. Use percentages, volumes, costs or time savings only if you can state the comparison clearly.

- State what changed next. Explain how the evidence informed the next move, whether that meant scaling, narrowing or pausing.
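As a rough illustration of the fourth step, the sketch below reports a qualified lead rate against a baseline with both comparison windows stated. It is a minimal sketch rather than a prescribed method: the `Window` class, the `compare` function, the field names, the dates and every figure are hypothetical and invented purely to show the shape of the calculation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Window:
    """One reporting window. All field names here are illustrative."""
    label: str      # e.g. "baseline" or "campaign"
    start: str      # ISO date, inclusive
    end: str        # ISO date, inclusive
    leads: int      # all enquiries received in the window
    qualified: int  # enquiries that passed qualification

    @property
    def qualified_rate(self) -> float:
        if self.leads == 0:
            raise ValueError(f"{self.label}: no leads, so the rate is undefined")
        return self.qualified / self.leads


def compare(baseline: Window, campaign: Window) -> dict:
    """Return the shift in qualified lead rate, with both windows named."""
    if baseline.qualified_rate == 0:
        raise ValueError("baseline rate is zero, so relative change is undefined")
    point_change = campaign.qualified_rate - baseline.qualified_rate
    return {
        "baseline_window": f"{baseline.start} to {baseline.end}",
        "campaign_window": f"{campaign.start} to {campaign.end}",
        "baseline_rate": baseline.qualified_rate,
        "campaign_rate": campaign.qualified_rate,
        "point_change": point_change,  # absolute difference between the two rates
        "relative_change": point_change / baseline.qualified_rate,
    }


# Hypothetical figures for two equal six-week windows (not real campaign data).
baseline = Window("baseline", "2025-01-06", "2025-02-16", leads=400, qualified=100)
campaign = Window("campaign", "2025-02-17", "2025-03-30", leads=400, qualified=120)

for key, value in compare(baseline, campaign).items():
    print(f"{key}: {value}")
```

Reporting the point change and the relative change side by side, next to the two date ranges, lets a reader see exactly which comparison sits behind any percentage quoted in the write-up.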

In a strategy call this week, we tested two paths and dropped one after the first hard metric came in. The lesson was familiar: the more complex route looked stronger on paper, but the simpler route reached reliable measurement first. That is exactly the sort of trade-off a case study should reveal. Readers learn more from a decision point than from a tidy victory lap.

Example evidence block

Client situation: Lead quality had weakened across a six-week period after a platform change.

Objective: Restore qualified enquiries while preserving volume.

What was delivered: Tracking audit, revised form routing, two audience exclusions, three creative variants, weekly reporting cadence.

Constraint: Existing CRM fields could not be rebuilt inside the quarter.

Outcome: Qualified lead rate improved against the prior reporting window, while operational rework reduced.

Commercial implication: Spend could be reallocated with more confidence because signal quality improved first.

Notice the discipline here. No heroic language. No unsupported certainty. Growth claims without baseline evidence should be parked until the data catches up.

Decision points

A strong case study is really a record of decisions. The outcome matters, though the decision logic is what makes the piece reusable for buyers and internal teams. Readers want to know not just what happened; they want to know why one option beat another.

There are four decision points worth documenting in most UK campaign reporting.

First, measurement design. Nature published research on 7 March 2026 examining how on-road mobile monitoring design influences model outputs and later inferences. The subject there is environmental exposure, not marketing, but the strategic lesson transfers neatly: measurement design changes what you can confidently conclude. In campaign reporting, if attribution windows or tracking rules changed mid-flight, your case study should say so.

Second, delivery sequencing. A plan looked strong on paper, then one dependency moved, so we re-ordered the sequence and regained momentum. That pattern turns up constantly in activation work. Explain the order in which creative, media, analytics and CRM were locked.

Third, channel trade-offs. If paid search delivered lower volume and higher intent, while paid social delivered reach and weaker conversion, put that tension on the page. It is far more useful than pretending every channel pulled equally.

Fourth, governance and suitability. This matters more than many teams admit. A UK case study should signal how targeting, consent, exclusions or platform controls were handled. For on-pack promotions, such as the Ribena Monopoly campaign, where entry goals were overshot by 258%, showing auditable fulfilment mechanics builds partner confidence.

| Decision point | Option A | Option B | Chosen route | Reason |
|---|---|---|---|---|
| Audience focus | Broad reach | High-intent segment | High-intent first | Faster signal quality within limited budget |
| Reporting cadence | Monthly | Weekly | Weekly | Allowed earlier correction after launch |
| Landing journey | Single page | Segmented paths | Segmented paths | Reduced misrouted enquiries |
| Scale timing | Immediate | After validation | After validation | Protected spend while baseline stabilised |

Common failure modes

The easiest way to weaken a case study is to confuse activity with evidence. “We launched across multiple channels” is activity. Evidence would be: launch completed across search, paid social and email in a nine-day window, with reporting live from day one.

Failure mode one is missing baselines. If you say conversion improved, compared with what period, segment or source? Without that, the claim is decorative.

Failure mode two is selective reporting. Teams often report top-line lead growth while omitting lower lead quality or fulfilment strain. The better route is to report the trade-off plainly.

Failure mode three is causality inflation. A case study should not imply that every gain came from the campaign if pricing, seasonality or distribution also shifted. In practice, if several factors moved together, state that clearly.

Failure mode four is hiding constraints. Budget caps, legal approvals, legacy systems and internal bottlenecks are not embarrassing details. They are part of the operating environment. Readers trust a case study more when it admits the edges.

One useful test is simple: could a delivery lead, a marketing director and a finance stakeholder all read the same case study and extract something practical? If not, tighten the evidence.

Action checklist

When preparing a publication-ready case study, use this as the working sequence. It keeps the piece readable and commercially useful.

- State the business problem in one sentence, with date range and market context.

- Name the objective and the success metric before describing tactics.

- List what was actually delivered, including tracking, approvals and hand-offs.

- Include one hard constraint, such as budget, timing or system limitation.

- Report outcomes against a stated baseline or comparison window.

- Show at least one trade-off, not just a win.

- Explain what the evidence changed in the next phase.

- Keep claims proportionate to the quality of measurement available.
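For teams that keep case study drafts as structured records, the checklist above could be approximated with a simple completeness check. This is a minimal sketch under stated assumptions: the field names in `REQUIRED_FIELDS` and the `missing_evidence` helper are hypothetical, chosen to mirror the first seven checklist items. The final item, proportionate claims, still needs human judgement and is not checked here.

```python
from __future__ import annotations

# Hypothetical field names, one per mechanically checkable checklist item.
REQUIRED_FIELDS = (
    "problem",      # business problem, with date range and market context
    "objective",    # objective and success metric, stated before tactics
    "delivered",    # what was delivered, including tracking and approvals
    "constraint",   # at least one hard constraint
    "baseline",     # stated baseline or comparison window
    "trade_off",    # at least one trade-off, not just a win
    "next_step",    # what the evidence changed in the next phase
)


def missing_evidence(case_study: dict) -> list[str]:
    """List the checklist fields that are absent or blank in a draft."""
    missing = []
    for name in REQUIRED_FIELDS:
        value = case_study.get(name)
        if value is None or not str(value).strip():
            missing.append(name)
    return missing


# Illustrative draft: the wording is invented, and two fields are left out.
draft = {
    "problem": "Lead quality weakened across a six-week period after a platform change.",
    "objective": "Restore qualified enquiries while preserving volume.",
    "delivered": "Tracking audit, revised form routing, two audience exclusions.",
    "constraint": "Existing CRM fields could not be rebuilt inside the quarter.",
    "next_step": "Reallocate spend once signal quality is confirmed.",
}

print(missing_evidence(draft))
```

A check like this only confirms that each section exists. Whether the baseline is fair or the trade-off is honestly stated remains an editorial call.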

The strategic advantage is straightforward. A disciplined campaign case study in the UK does more than prove past work. It shortens future sales conversations, aligns internal teams around what actually moved, and filters out weak assumptions before they become expensive habits. If your current template leans on adjectives more than evidence, it is probably time for a rebuild. To see how Kosmos helps structure delivery evidence that commercial leaders trust, contact Holograph for a practical next move.

If this is on your roadmap, Holograph can help you run a controlled pilot, measure the outcome, and scale only when the evidence is clear.