
If a regulator asks who approved an AI-influenced campaign decision, what can your team actually produce? For a UK team, the first thing to understand is straightforward: where the EU AI Act applies, for instance when AI-generated output is used in the EU, campaign approval cannot rest on informal sign-off, shared memory, or a trail split across messages and spreadsheets. If AI shapes content, routing, personalisation or prioritisation, the approval chain needs a named owner, a date, and a clear basis for release.

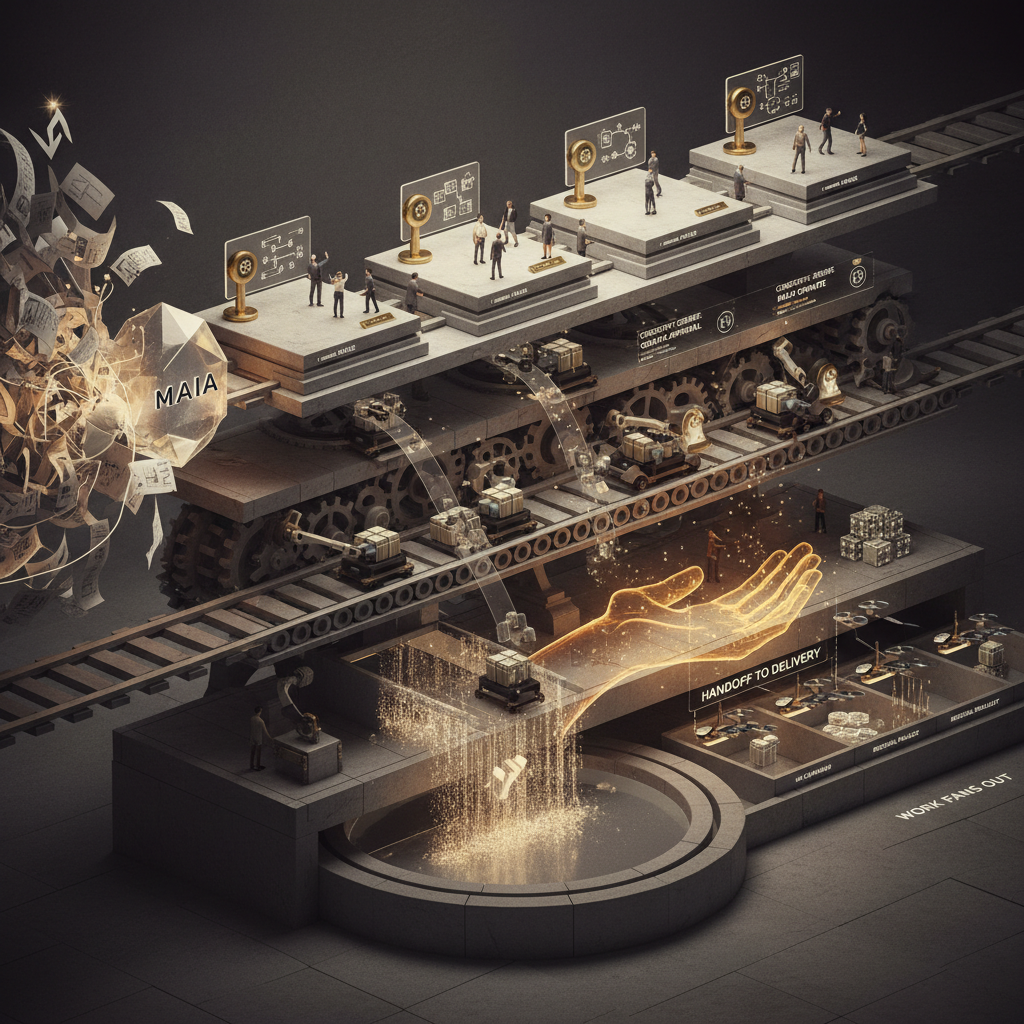

That is the operating shift. In a loose model, approval is scattered across inboxes, trackers and habit. In a governed campaign operating model, the campaign owner can show the named owner, the checkpoint, the output and the hand-off before anything reaches production. The risk has not gone away. It has become easier to see, and harder to ignore.

The short answer

The campaign owner now carries more practical risk when AI is part of the route to launch, but not alone. The pressure lands at the approval checkpoint where legal, brand and delivery accountability meet. Leave that checkpoint vague and responsibility disperses until it is useless. Define it properly and the team can show who approved what, when, and before which production step.

That is why MAIA matters here. Not because it makes regulation disappear. Because it gives teams a campaign operating model with visible owners, checkpoints, outputs and dependencies before work splinters into channels, tools and side conversations. That is a firmer basis for campaign planning accountability than brief-by-brief coordination held together by memory.

Signal baseline: where ownership breaks down

Approval chains usually fail in very ordinary places. Legal assumes brand has checked the claim. Brand assumes performance marketing has checked the audience logic. Delivery assumes somebody has approved the automated step that pushes content or targeting forward. Nothing looks badly wrong until the launch date gets close and the missing dependency starts to matter.

Automation can sharpen that problem as quickly as it can solve it. If a workflow moves work forward without recording the decision owner, the team gains speed and loses traceability. Later, the system may show that a task changed state. It may not show who accepted the risk, when they did it, or what evidence supported the release. That is the trade-off worth naming plainly.
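To illustrate the difference between a state change and a recorded decision, here is a minimal sketch in Python. Everything in it is an assumption made for the example: the `Workflow` and `Decision` names, the fields and the sample values are hypothetical and do not describe MAIA or any particular workflow tool. The idea is simply that a step refuses to move forward unless it captures who accepted the risk, when, and on what evidence.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class Decision:
    """One recorded release decision (hypothetical structure)."""
    task: str
    new_state: str
    accepted_by: str      # the person who accepted the risk
    evidence: str         # pointer to whatever supported the release
    decided_at: datetime  # when the decision was recorded (UTC)


@dataclass
class Workflow:
    log: List[Decision] = field(default_factory=list)

    def advance(self, task: str, new_state: str,
                accepted_by: str, evidence: str) -> Decision:
        # Refuse to change state if the owner or the evidence is missing.
        if not accepted_by.strip() or not evidence.strip():
            raise ValueError("cannot advance without a named owner and evidence")
        decision = Decision(task, new_state, accepted_by, evidence,
                            datetime.now(timezone.utc))
        self.log.append(decision)
        return decision


# Hypothetical usage: names and references are placeholders.
wf = Workflow()
recorded = wf.advance("email-variant-3", "released",
                      accepted_by="J. Patel", evidence="legal-review-note")
```

With a guard like this, the log answers the later question directly: each state change carries its owner, timestamp and evidence, and an anonymous change cannot be recorded at all.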

The useful comparison is not manual versus automated. It is governed campaign planning versus scattered notes and informal delivery memory. One gives you a route through approval. The other leaves you with fragments.

What shifts under the EU AI Act

The Act raises the bar where AI systems influence outcomes, disclosures or decision logic. For campaign teams, that lands as an operating change, not a policy slogan. If AI is used in campaign generation, personalisation, routing or prioritisation, teams need a clearer account of what the system is doing, which human checks sit around it, and who is accountable for release.

That does not turn every campaign owner into a compliance specialist. It does make launch governance much harder to wave through on trust. The owner may not write the technical documentation, but they still need confidence that the approval path is clear and that the supporting evidence exists before production hand-off. Without that, the chain looks complete only until somebody asks for proof.

The proof question is blunt: can anything critical slip quietly between strategy, production and measurement? If it can, the approval model is still loose.

Who carries the risk now

The risk rarely sits with one person alone, but it settles more heavily on the campaign owner, strategist or delivery lead responsible for getting work to release. They may not own legal interpretation or platform configuration. They are still often the last clear control point before launch. In practice, that makes them the holder of campaign planning accountability when AI is part of the chain.

In a weak model, shared responsibility sounds collaborative and behaves like a gap. Legal signs one part, brand signs another, delivery keeps the dates moving, and nobody owns the combined release decision. In a stronger model, that final checkpoint has a named owner, explicit dependencies and a route to escalation when evidence is incomplete or the use case sits outside policy.

That is the shift worth paying attention to. The campaign owner is not carrying all liability. They are carrying more of the burden of proof that the chain is controlled, complete and ready for release.

What campaign teams need to stay aligned

A usable approval model does not need to be elaborate. It needs to be specific. At minimum, each checkpoint should show the approval step, the named owner, the decision date, the expected output and the blocked dependency, if there is one. Then the team needs a clear next move when the checkpoint is missed.
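As a rough illustration of that minimum, the sketch below models one checkpoint as a small Python record and lists what is still missing from it. The `Checkpoint` class, its field names, the `gaps` helper and the sample entries are all assumptions made for this example. They are not a MAIA schema or a prescribed format.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Checkpoint:
    step: str                         # the approval step
    owner: Optional[str]              # named owner; None means nobody is accountable yet
    decision_date: Optional[date]     # None means the decision is still open
    expected_output: str              # what the step should produce
    blocked_by: Optional[str] = None  # the blocking dependency, if there is one


def gaps(cp: Checkpoint) -> List[str]:
    """Return the reasons this checkpoint cannot yet count as settled."""
    problems = []
    if not cp.owner:
        problems.append("no named owner")
    if cp.decision_date is None:
        problems.append("no decision date")
    if cp.blocked_by:
        problems.append(f"blocked by: {cp.blocked_by}")
    return problems


# Hypothetical chain: owners, dates and outputs are placeholders.
chain = [
    Checkpoint("Legal claim review", "A. Legal", date(2025, 1, 10),
               "Approved claim wording"),
    Checkpoint("Brand check", None, None, "Brand sign-off",
               blocked_by="final creative"),
]

for cp in chain:
    print(cp.step, "->", gaps(cp) or "settled")
```

A check like this gives the team its next move when a checkpoint is missed: the output names the step, the missing owner or date, and the dependency holding it up.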

This is where accountability mapping beats informal planning. A note marked reviewed tells the next team almost nothing. A checkpoint tied to claim language, disclosure status, brand constraints or release readiness gives people something they can act on. It also makes delays easier to diagnose. If work is blocked, the owner and the reason should be visible without another round of chasing.

Where AI is used in content generation, for example, the chain should show who confirms permitted use, who checks brand and legal constraints, and who authorises release to production. The exact format will vary by team and channel. What should not vary is whether ownership, dependencies and hand-off are explicit.

Governed approval chain versus brief-by-brief coordination

| Operating model | What the team can usually show | Where risk tends to sit |
|---|---|---|
| Brief-by-brief coordination | Partial sign-off spread across inboxes, documents and memory | At release, when nobody can show the full chain or the missing dependency |
| Governed campaign planning | Named owners, checkpoints, outputs and hand-offs visible before production | Earlier in the process, where blocked decisions can still be escalated and fixed |

This comparison matters more than any abstract argument about automation. Structured campaign orchestration gives teams a chance to catch the awkward dependency before production. Brief-by-brief coordination usually finds it when the date is already under strain.

Where MAIA fits best

MAIA fits at the point where a loose campaign brief needs to become a governed operating flow. It makes owners, checkpoints, outputs and dependencies visible in one route, so work does not scatter into disconnected documents and side conversations too early. That matters when strategy, compliance, activation and delivery each hold a different definition of done.

The gain is not theatre dressed up as compliance. It is cleaner execution. Teams can see who owns the next move, what is blocked, what is ready, and where hand-off to delivery should or should not happen. That supports launch governance without pretending software replaces judgement.

For teams working across broader operating needs, the wider Holograph solutions picture matters as well. MAIA handles the planning and approval discipline. Quill, DNA and ONECARD can sit around different parts of the delivery and activation stack where content, data and fulfilment controls need to stay connected.

Accountability mapping versus informal delivery planning

| Approach | Control | Trade-off |
|---|---|---|
| Informal delivery planning | Fast to start, light on process | Weak traceability, unclear ownership, harder hand-off proof |
| Accountability mapping | Owners, checkpoints and dependencies are explicit | Needs discipline up front, but gives clearer release control |

The second model asks more at the start, and that is exactly the point. It moves the friction to a stage where the team can still do something with it. Under the current regulatory shift, that is usually the cheaper place to have the argument.

Actions and watchpoints

If you are reviewing approval chains now, start where AI influences campaign creation, routing or personalisation. Then check whether each approval point has a named owner and a decision date. After that, look for the dependencies that keep appearing late, especially where legal, brand or delivery readiness meet.

This review does not need to begin as a major programme. Pick one live campaign and map the chain from brief to production hand-off. If the team cannot see who owns the next move, what is blocking release, or which checkpoint settled the decision, that is your baseline. From there, the work is usually less about adding process and more about making the existing process legible.

If your approval chains are still loose, or critical dependencies only appear when launch is close, the first step is to map the route properly. If you want a cleaner way to turn that into a governed workflow with clear owners and checkpoints, contact us. We can look at what a practical path to green looks like for your team.

If this is on your roadmap, MAIA can help you run a controlled pilot, measure the outcome, and scale only when the evidence is clear.