A UK retail CRM dashboard can appear cleaner while the email list loses trustworthiness. Bounce rates might stay low, but early retention signals drift. Welcome-series progression softens, and first-repeat purchases lag behind acquisition. Silent rejects flatter top-line numbers by hiding where good prospects are blocked or lost. For teams monitoring email risk in the UK, the lesson is to validate with visible reasoning, governed overrides, and a defensible audit trail.

What a UK CRM team should understand first about EVE

A strategy that cannot survive contact with operations is not strategy; it is branding copy. The team tested two paths. The stricter gate at entry kept dashboards healthy but created blind spots. The evidence favoured a visible, evidence-led model that separated toxic data from recoverable entries such as typo variants or promotion-spike signups.

EVE, built by Holograph, is designed for that middle ground. Its validation engine returns reasoning quickly, protecting the form experience while logging why a record was challenged. That trade-off matters most when acquisition pressure is high but tolerance for deliverability damage is low. The advantage is not just catching bad data; it is keeping a usable record of what happened.

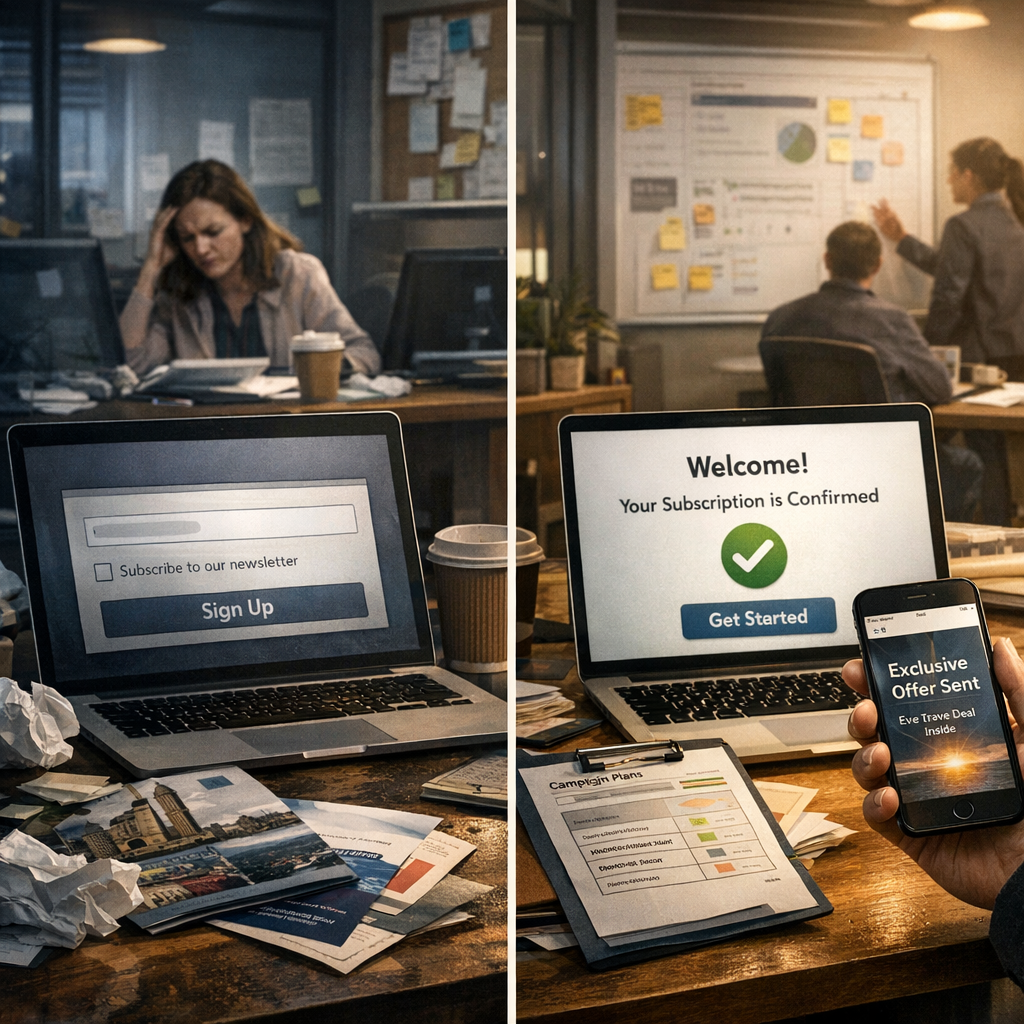

The week the dashboard looked healthier than the list really was

Before the team reworked its process, list health was judged by downstream metrics such as hard bounces and send acceptance. Those tell a partial story. When problematic entries are filtered upstream without explanation, the dashboard improves because fewer risky addresses reach the ESP. The catch: genuine prospects can disappear too, especially during offer-led signup bursts when behaviour is less predictable.

Manual spot checks found records that customer support recognised, yet those addresses were absent from campaigns. To be fair, some blocks were correct; others were not clearly defensible. The team had suppression outcomes but no reasoning trail to support reinstatement or policy tuning. This isn't purely a fraud problem; it sits where fraud monitoring, deliverability, and journey design meet.

Where silent rejects disappear from the operating record

The operational failure was subtle, which is why it was missed. Risk decisions were made in the form and middleware layers, but only a simplified pass-or-fail state reached the CRM team. The plan looked strong on paper until one dependency moved, and the sequence had to be reordered to regain momentum. The fix: stop treating rejection as the end, and start logging reason codes that marketing, ops, and compliance can review together.
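As a minimal sketch of what carrying a reason code past the pass-or-fail state could look like, the example below writes one structured log line per decision. It is not EVE's API: the field names, the layer labels, and the `TYPO_DOMAIN` code are all hypothetical, chosen only to illustrate the idea.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass
class ValidationDecision:
    record_id: str    # hypothetical opaque signup reference, not the address itself
    layer: str        # where the decision was made, e.g. "form" or "middleware"
    outcome: str      # "accept", "challenge", or "reject"
    reason_code: str  # hypothetical code explaining the outcome
    decided_at: str   # ISO 8601 timestamp in UTC


def log_decision(record_id, layer, outcome, reason_code, now=None):
    """Serialise one decision as a JSON line that other teams can review."""
    now = now or datetime.now(timezone.utc)
    decision = ValidationDecision(record_id, layer, outcome, reason_code, now.isoformat())
    return json.dumps(asdict(decision), sort_keys=True)


line = log_decision(
    "signup-001", "middleware", "reject", "TYPO_DOMAIN",
    now=datetime(2024, 1, 1, tzinfo=timezone.utc),
)
print(line)
```

The point of the design is that the rejection keeps its layer and reason attached, so a later review can ask where and why a record was stopped rather than only whether it was.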

Silent rejects vanish between systems: at form-level validation, in middleware suppression logic, or during ESP audience preparation. Without joined-up logging, they never become part of the learning loop. Consent compliance enters here too. Under UK and EU obligations, teams need a defensible record. EVE's audit-oriented approach helps here: it pairs probabilistic outcomes with no data retention and still provides an inspectable trail.

What the team could actually inspect: logs, notes and early series exits

Switching to inspectable validation reasoning sharpened judgement. The baseline showed a gap between signup volume and welcome eligibility, but little evidence for why. Afterwards, the team could inspect reason codes, override notes, and early-series exits side by side.
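One way to picture that side-by-side view is an early-exit rate grouped by the reason code recorded at signup. The sketch below assumes each contact is a plain dictionary with hypothetical `reason_code` and `exited_early` fields; the sample records are illustrative, not real data.

```python
from collections import defaultdict


def early_exit_rate_by_reason(contacts):
    """Return the share of contacts per reason code that left the welcome series early."""
    totals = defaultdict(int)
    exits = defaultdict(int)
    for contact in contacts:
        code = contact["reason_code"]
        totals[code] += 1
        if contact["exited_early"]:
            exits[code] += 1
    return {code: exits[code] / totals[code] for code in totals}


# Illustrative records only.
sample = [
    {"reason_code": "TYPO_DOMAIN", "exited_early": True},
    {"reason_code": "TYPO_DOMAIN", "exited_early": False},
    {"reason_code": "PROMO_SPIKE", "exited_early": False},
]
rates = early_exit_rate_by_reason(sample)
print(rates)
```

A reason code with an unusually high early-exit rate is a prompt to review the original validation pattern before blaming the creative.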

Two practical details stood out. First, early-series exits became a proxy for hidden list-quality drift: if contacts disengaged fast, the team reviewed the original reason patterns instead of assuming creative fatigue. Second, override discipline improved when exceptions required documented notes. That sounds mundane, but it changes behaviour when people know the rationale will be reviewed against outcomes.
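The documented-note requirement can be enforced at the point of override rather than by convention. This is a sketch under stated assumptions: the `Override` fields, the `record_override` helper, and the 20-character minimum are hypothetical and would need tuning to a real team's policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Override:
    record_id: str
    original_reason_code: str
    approved_by: str
    note: str


def record_override(record_id, original_reason_code, approved_by, note, min_note_length=20):
    """Create an override only when a rationale of reasonable length is supplied."""
    cleaned = note.strip()
    if len(cleaned) < min_note_length:
        raise ValueError("override requires a documented rationale")
    return Override(record_id, original_reason_code, approved_by, cleaned)


approved = record_override(
    "signup-001", "TYPO_DOMAIN", "crm-lead",
    "Customer support confirmed the address by phone.",
)
print(approved.note)
```

Because the override object is immutable and always carries its original reason code, the note can later be compared against what the contact actually did.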

This does introduce some friction, but less than the cost of cleaning up sender damage or re-running acquisition. The better comparison is between hidden effort and governed effort: one stays off the dashboard until something slips; the other creates an operating record you can work with.

Why visible validation reasoning changes override decisions

Visible reasoning beats silent automation when the commercial trade-off is tight. Growth claims without baseline evidence should be parked until the data catches up. Once reasoning was visible, the quality of override decisions changed. The team could separate likely toxic data from ambiguous entries, routing edge cases into confirmation loops, holds, or monitored approvals.
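The routing described above might be sketched as follows. Everything here is an assumption for illustration: the risk score between 0 and 1, the two thresholds, and the reason codes are hypothetical, and the ordering of the checks is one possible policy rather than a recommended one.

```python
# Hypothetical reason codes treated as recoverable rather than toxic.
RECOVERABLE_CODES = {"TYPO_DOMAIN", "PROMO_SPIKE"}


def route_signup(risk_score, reason_code, toxic_threshold=0.9, hold_threshold=0.6):
    """Map a probabilistic risk score and reason code to a handling route."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if risk_score >= toxic_threshold:
        return "reject"              # likely toxic data
    if reason_code in RECOVERABLE_CODES:
        return "confirmation_loop"   # ask the prospect to confirm or correct
    if risk_score >= hold_threshold:
        return "hold"                # park the record for human review
    if reason_code is not None:
        return "monitored_approval"  # flagged but low risk: accept and watch
    return "accept"


print(route_signup(0.4, "TYPO_DOMAIN"))
```

Moving either threshold is the acquisition-versus-reputation decision in miniature, which is why the defaults are parameters rather than constants.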

There's still an unresolved tension: permissive thresholds can support acquisition but risk future deliverability issues; stricter thresholds protect reputation but can flatten growth. There is no elegant way round that, just an option set, a timing decision, and evidence you can defend.

From acquisition to retention: the metrics that started to line up again

The practical advantage appeared when metrics stopped pulling in opposite directions. With reason logging and governed overrides, the team could read acquisition, onboarding, and retention as one system. The result was alignment, not universal uplift. Deliverability protection no longer relied on invisible losses, and retention reporting no longer treated missing prospects as a mystery.

In UK retail, where campaign windows are short and list damage takes longer to unwind than a promotion takes to launch, that operational clarity matters most. If your dashboard looks healthier than your welcome and repeat behaviour suggests, review where rejects vanish, compare the baseline against early-series outcomes, and check whether your validation logic is explainable outside engineering. Book a frictionless validation walkthrough with our solutions team to test where silent rejects may be distorting performance.