Overview

Your inbox pings and, fancy that, another vendor says its latest release will change everything. Marketing spots upside. Ops spots integration risk, retraining, and a week of avoidable faff. In most UK B2B SaaS teams, both sides are partly right.

The useful question is not whether an update looks clever. It is whether it earns a place on the roadmap now. The safest teams I know tie each release to a live business objective, estimate likely uplift in plain numbers, price the implementation cost honestly, and check data-handling implications before anyone starts to build.

What problem are you actually solving?

The real issue is resource allocation under uncertainty. Every hour spent implementing a new feature is an hour not spent fixing customer-reported bugs, improving onboarding, or shipping work tied directly to retention and revenue. The trade-off is blunt: novelty can be energising, but delivery capacity is finite.

Last Tuesday, in Manchester, a vendor demo landed in an ops discussion with all the usual polish. The room had that familiar hum of possibility; kettle still warm, dashboards open, everyone half-convinced the new module might save the quarter. On closer inspection, it required a schema migration, touched three live integrations, and offered no credible route to improving the metric we actually cared about that month: churn. That was the useful bit. Not the demo. The constraint.

Without a decision system, roadmap calls drift towards whoever tells the best story. With one, you move from opinion to evidence. That matters even more when a vendor cannot clearly explain what changed, how decisions are made, or what data is processed. If a platform cannot explain its decisions, it does not deserve your budget.

That scepticism is not theoretical. On 8 March 2026, FINANZNACHRICHTEN.DE reported Aqara’s Light + Building 2026 announcement around scaling professional-grade infrastructure and unified management, while ViaNews Market reported the launch of an SSL-secured algorithmic trading platform moving AI trading systems towards production on 7 March 2026. Different sectors, same signal: as platforms become more operationally central, buyers need clearer evidence on control, security and accountability, not just shinier release notes.

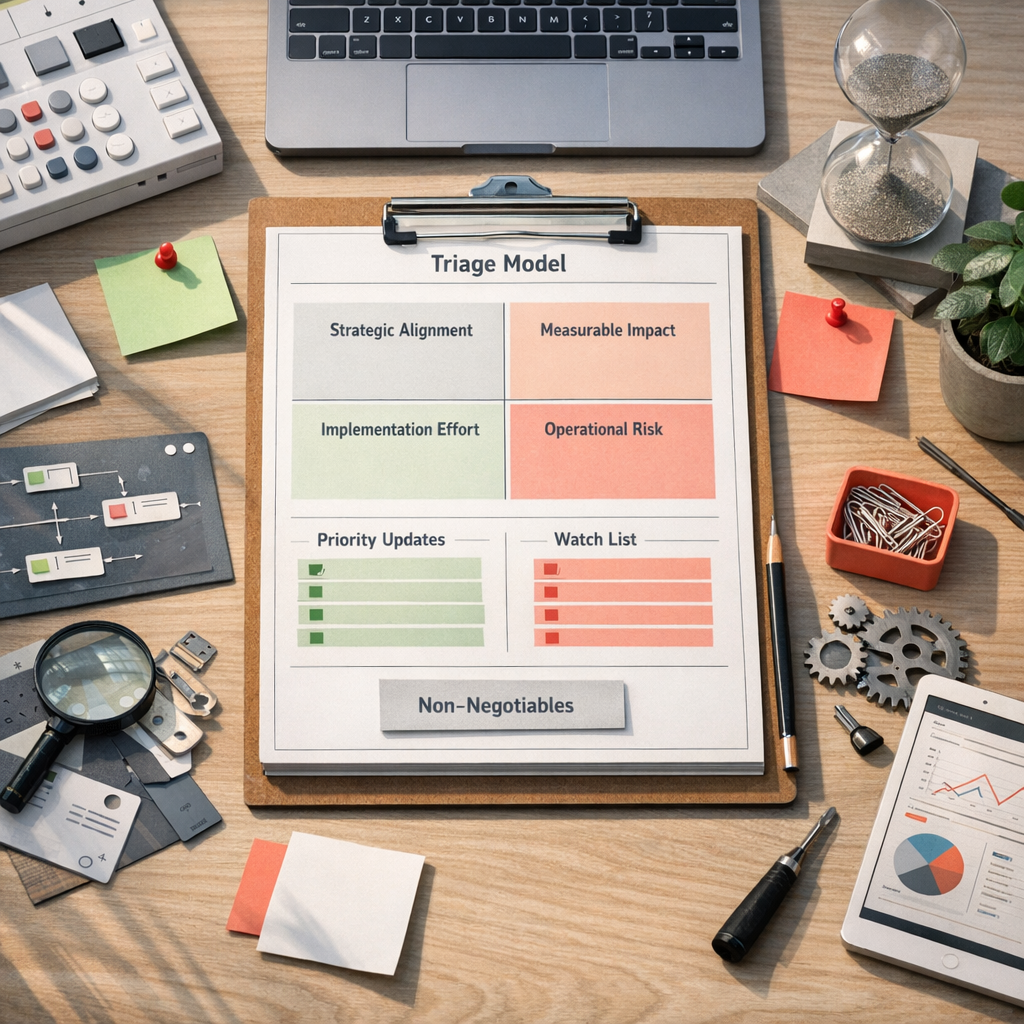

A simple triage method for MarTech updates

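For a Kosmos platform update, or any comparable MarTech release, keep the filter simple enough to use in ten minutes and strict enough to stop impulse decisions. Four questions usually do the job: which live business objective does it serve, what uplift is likely in plain numbers, what will implementation honestly cost, and what changes in data handling?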

Then add the question many teams still skip: what changed in the supplier dependency? If the update introduces a new model, external processor, or decisioning layer, log it properly. Recent scrutiny around opaque AI systems is a fair warning. Healthcare and public sector buyers have been asking sharper questions for good reason, and the rest of SaaS should catch up.

- Has the vendor changed what data is collected, retained or sent to third parties?

- Has any model behaviour changed, and can the supplier explain why outputs differ?

- Can your team switch the feature off without breaking core workflows?

- Is there a human review step for decisions that affect spend, routing or customer experience?

- What evidence exists beyond the release note: pilot data, error rates, or observed uplift?

When a Kosmos platform update belongs on the roadmap

Once an update has been scored, the next step is not more debate. It is a routing decision. In practice, most releases fall into four buckets.

Non-negotiables come first: security patches, compliance fixes, and defects that are already costing you money or exposing customer data. These go in immediately because the risk of delay is measurable. The trade-off is obvious: you may interrupt planned work, but that disruption is usually cheaper than a breach, failed audit, or broken buyer journey.

Strategic enablers come next. A Kosmos platform update belongs here when it supports a committed objective rather than merely sounding sophisticated. If sales efficiency is the target for Q2, an update to lead routing should be tied to a specific test, such as lifting MQL-to-SQL conversion by 10% over one quarter. No target, no priority. Simple enough.

Efficiency gains matter more than teams sometimes admit. If an update removes repetitive admin for five people and saves 30 minutes a day each, that is 2.5 hours a day, or roughly 55 hours back over a 22-working-day month. Worth doing? Quite possibly. Worth doing in the same sprint as a CRM migration? Probably not. There’s the trade-off.
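For anyone who wants to rerun that sum with their own numbers, here is a minimal sketch. The function name and the default of 22 working days a month are assumptions for illustration; swap in your own team's calendar.

```python
def hours_saved_per_month(people: int, minutes_per_day: float,
                          working_days: int = 22) -> float:
    """Total hours handed back to the team each month.

    working_days defaults to 22, an assumed figure for a typical month.
    """
    return people * minutes_per_day * working_days / 60


# Five people each saving 30 minutes a day.
print(hours_saved_per_month(people=5, minutes_per_day=30))  # 55.0
```

The figure says nothing about whether the sprint can absorb the work, so treat it as one input to the decision rather than the verdict.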

Watch-list items are where you park the clever-but-not-yet-useful releases: dashboard cosmetics, experimental assistants, and features still looking for a business case. Log them, review them quarterly, and move on. A watch list is not indecision; it is controlled patience.
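The four buckets can be written down as a small routing rule, which helps keep the call consistent from one release to the next. This is a sketch under stated assumptions, not a definitive model: the `Update` fields, the `route` function and the 20-hour threshold for an efficiency gain are all hypothetical and should be tuned to your own team.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Bucket(Enum):
    NON_NEGOTIABLE = "do now"
    STRATEGIC_ENABLER = "schedule against the committed objective"
    EFFICIENCY_GAIN = "schedule when capacity allows"
    WATCH_LIST = "log and review quarterly"


@dataclass
class Update:
    name: str
    fixes_security_or_compliance: bool = False
    fixes_costly_defect: bool = False
    committed_objective: Optional[str] = None  # e.g. "Q2 sales efficiency"
    target_metric: Optional[str] = None        # e.g. "MQL-to-SQL +10% in one quarter"
    hours_saved_per_month: float = 0.0


def route(update: Update, min_hours_saved: float = 20.0) -> Bucket:
    """Assign an update to one bucket, checking the riskiest case first."""
    if update.fixes_security_or_compliance or update.fixes_costly_defect:
        return Bucket.NON_NEGOTIABLE
    # "No target, no priority": an objective without a metric does not qualify.
    if update.committed_objective and update.target_metric:
        return Bucket.STRATEGIC_ENABLER
    # min_hours_saved is an assumed threshold, not a figure from the article.
    if update.hours_saved_per_month >= min_hours_saved:
        return Bucket.EFFICIENCY_GAIN
    return Bucket.WATCH_LIST


lead_routing = Update(
    name="Lead routing update",
    committed_objective="Q2 sales efficiency",
    target_metric="MQL-to-SQL conversion +10% in one quarter",
)
print(route(lead_routing).name)  # STRATEGIC_ENABLER
```

The order of the checks is the design choice that matters: risk is evaluated before upside, so a security patch can never be parked on the watch list because nobody wrote a target for it.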

Where teams usually get this wrong

The first failure mode is automation theatre. The demo is smooth, the copy is breathless, and nobody can say what number should improve after launch. Automation without measurable uplift is theatre, not strategy.

The second is weak causality. Teams often assume more automation creates more value. Sometimes it does. Sometimes it simply moves the same mess into a faster system. If a new scoring feature makes lead assignment quicker but also less transparent, you may save ten minutes a day while making pipeline quality harder to trust for a quarter. Faster is only better when accuracy and accountability hold up.

The third is ignoring human adoption. Between 10:00 and 12:00 last Thursday, I tested a workflow handover process and managed to break the tidy version of it with one unclear field label. Fixed it with a painfully simple hack: rewrite the labels in plain English, record a two-minute walkthrough, and ask one person from sales ops to run it cold. Support tickets vanished. The technology was fine; the wording was the bug. Trade-off: fifteen extra minutes on enablement saved hours of confusion later.

The fourth is fuzzy ownership. One named owner should run intake, triage and communication. Not as a gatekeeper, but as the person who keeps the process honest. Where updates affect data handling or automated decisions, that owner should also confirm whether privacy-preserving options exist. Default to the least invasive architecture that still does the job.

What to document before you ship

If the update is likely to affect workflows, reporting, approvals or customer messaging, document the operational basics before rollout. This is not glamorous work, but it is what saves you when something goes sideways on a Wednesday afternoon.

- Ownership: name the operational owner, technical owner and approver.

- Change scope: note which integrations, fields, automations or outbound messages are affected.

- Rollback plan: confirm whether the feature can be disabled cleanly and how long reversal would take.

- Data handling: record any new processors, destinations, retention rules or model dependencies.

- Success measure: define the metric, baseline and review window before launch.

That level of documentation matters most in regulated or approval-heavy teams, but frankly it helps everyone. A release note is not a governance system. It is just a starting point.
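The documentation items in this section also work as a simple pre-launch gate: if any field is blank, the update is not ready to ship. The sketch below is one possible shape for that record. The `RolloutRecord` class, its field names and the `missing_fields` helper are hypothetical, chosen only to mirror the items listed here.

```python
from dataclasses import dataclass, field, fields


@dataclass
class RolloutRecord:
    # Ownership
    operational_owner: str = ""
    technical_owner: str = ""
    approver: str = ""
    # Change scope: affected integrations, fields, automations, messages
    change_scope: list[str] = field(default_factory=list)
    # Rollback plan: how to disable the feature and how long reversal takes
    rollback_plan: str = ""
    # Data handling: write "no change" explicitly rather than leaving it blank
    data_handling: str = ""
    # Success measure
    success_metric: str = ""
    baseline: str = ""
    review_window: str = ""


def missing_fields(record: RolloutRecord) -> list[str]:
    """Return the names of any fields still left empty."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


draft = RolloutRecord(operational_owner="Sales ops lead", approver="Head of ops")
gaps = missing_fields(draft)
print("Ready to ship" if not gaps else f"Still to document: {', '.join(gaps)}")
```

Requiring an explicit "no change" under data handling is deliberate. It forces someone to check, rather than assume, that the supplier has not added a processor or model dependency.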

A working checklist for the next release note

If you want a practical way to review the next update without making a meal of it, use this checklist.

- Log the update centrally. Use Jira, Linear, Notion or even a spreadsheet. The tool matters less than having one source of truth.

- Check for immediate risk. If it affects security, compliance, customer data or a broken live workflow, escalate the same day.

- State the intended outcome. Write one line: “We expect this to improve X by Y within Z period.” If you cannot write that line, park it.

- Estimate full effort. Include engineering, QA, training, documentation and rollback planning. This is where under-scoping usually creeps in.

- Review supplier accountability. Note data changes, model changes, third-party dependencies and whether human review remains possible.

- Choose one route. Do now, schedule next quarter, or add it to the watch list. Avoid the classic “we’ll see”, which is just procrastination wearing a tie.

- Close the loop. Share the decision and the reasoning with the people affected. Transparency cuts politics and reduces rework.

A disciplined update process turns a noisy release cycle into something you can actually use. You spend less time reacting, more time building, and your roadmap starts to reflect real operating priorities rather than whichever vendor sent the glossiest email over your morning cup of tea.

If your team is weighing up whether the next Kosmos platform update should move now or wait, we can help you sort the signal from the sales patter and build a decision model around your real constraints. Have a word with us, and we’ll map out a practical next step that fits your stack, your governance requirements and the quarter you’re trying to ship.