
Overview

Most teams do not mean to build a brittle sign-up journey. They inherit one. A fraud rule gets tightened after an abuse spike, CRM adds a suppression layer after a deliverability wobble, and before long the whole thing behaves like an overzealous bouncer. Good people get turned away, bad actors still slip through, and nobody can say with a straight face why.

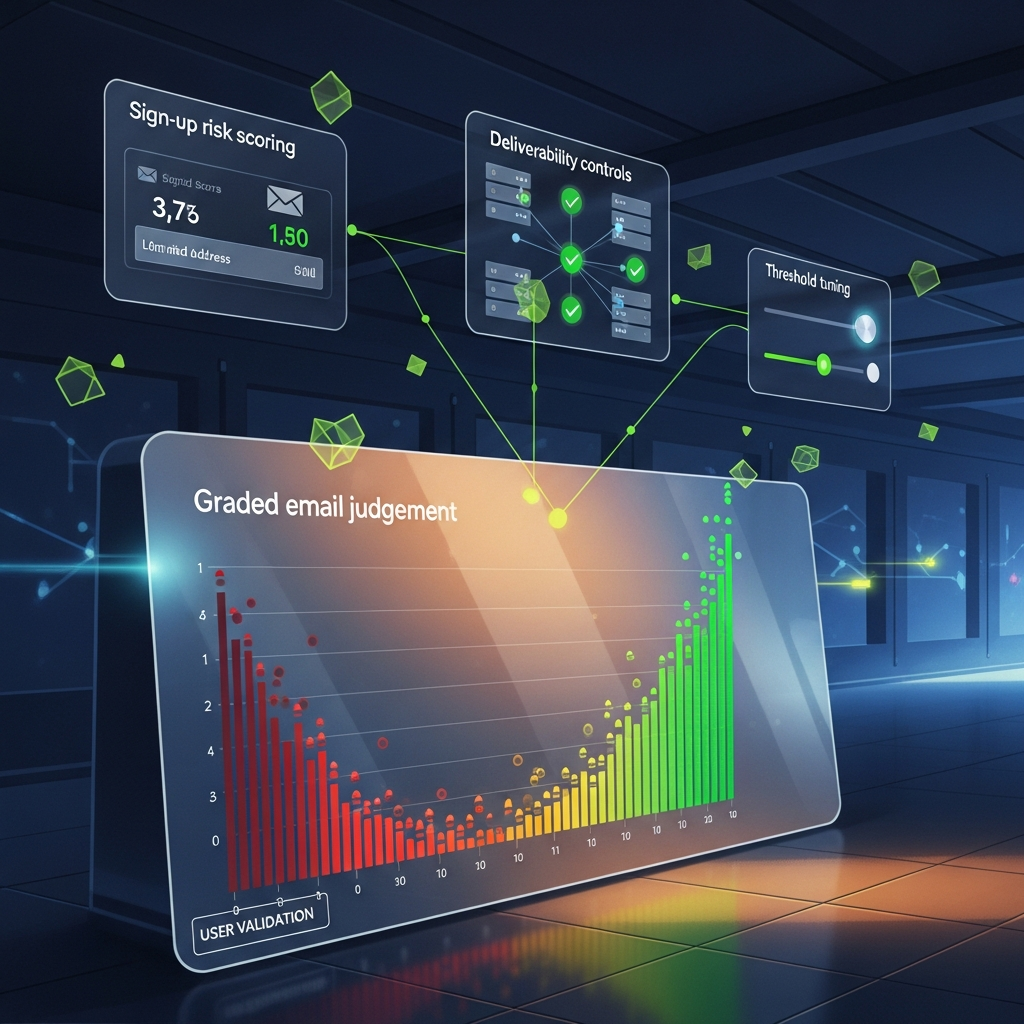

A calmer alternative is graded email judgement: decisions that can pause, limit, route or review rather than simply reject. The trade-off is plain enough. You take on a bit more operational design up front in exchange for fewer false negatives, better list health and outcomes a human can actually explain.

Context

Last Tuesday, in a small office in East Sussex, a client funnel looked healthy until we opened the exception logs. The fan on the test machine hummed, the kettle clicked off, and there it was: top-line conversion looked passable, yet a chunk of legitimate sign-ups had been stopped by binary rules written for a different abuse pattern six months earlier. That is when I realised the real problem was not detection. It was decision design.

This is a common systems failure. Email-led acquisition is now judged across several layers at once: syntax and mailbox validity, domain reputation, behavioural signals, prior engagement hints, consent confidence and downstream sending risk. Each layer is reasonable on its own. Wire them together badly and the journey becomes a bit of a faff for real users while still missing more adaptive abuse.

Even outside martech, good assessment design tends to avoid a single vague pass-fail instinct. GOV.UK guidance on car driving tests, updated on 10 March 2026, points to structured criteria and examiner judgement rather than a woolly single verdict. Different domain, same lesson: where a decision matters, you need observable standards, escalation paths and reasons someone can articulate.

There is a wider commercial pressure too. ETBrandEquity reported on 11 March 2026 that marketing practice is pushing towards more data-led personalisation and dynamic decisioning. The full text was not available to us, so treat that summary with caution, but the direction is consistent with what teams are shipping in the wild. Once automation sits closer to acquisition, black-box rejection rules become harder to defend. Growth wants volume, CRM wants inbox placement, risk wants control. A binary gate makes those incentives collide.

If a platform cannot explain its decisions, it does not deserve your budget.

What is changing

The shift is from rejection to orchestration. Older sign-up flows asked one blunt question: allow or deny? Better ones ask several smaller questions in sequence. Accept now? Limit messaging? Delay an incentive? Request another signal? Route quietly for review? Watch early behaviour before upgrading trust? Subtle, yes. Usually more effective too.

Three changes sit underneath this.

First, scoring is increasingly useful as a routing tool rather than a verdict engine. A score of 42 out of 100 is not a decision. It is an input. One band might receive full access, another gets into the product but not the referral scheme, and a third is reviewed with a lightweight check. The trade-off is operational complexity versus recovering legitimate users who would otherwise be lost.
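To make the "score as input" idea concrete, here is a minimal sketch of band routing. The band edges (40 and 70), the route names and the assumption that a higher score means higher risk are all illustrative, not taken from any particular platform.

```python
from bisect import bisect_right

# Hypothetical band edges on a 0-100 risk score; tune these on your own data.
BAND_EDGES = [40, 70]
BAND_ROUTES = [
    "full_access",                 # lowest-risk band
    "product_access_no_referral",  # middle band: in the product, no referral scheme
    "lightweight_review",          # highest band: reviewed with a lightweight check
]


def route_for_score(score: int) -> str:
    """Map a 0-100 risk score to a routing band rather than a verdict."""
    if not 0 <= score <= 100:
        raise ValueError(f"score out of range: {score}")
    # bisect_right counts how many edges are <= score, which is the band index.
    return BAND_ROUTES[bisect_right(BAND_EDGES, score)]
```

Under these assumed edges, `route_for_score(42)` lands in the middle band. Moving an edge changes who gets routed where without touching the scoring itself, which is the point of keeping the two apart.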

Second, deliverability controls now need to sit much closer to acquisition logic. Google and Yahoo tightened bulk sender expectations in 2024 around authentication and unwanted mail. By 2026 that is not news; it is plumbing. An address can be technically valid and still be a poor candidate for immediate promotional pressure. Graded handling lets CRM protect sender reputation without forcing growth to bin every uncertain lead.

Third, explainability has become a procurement question, not a nice extra. On 10 and 11 March 2026, announcements about a Kosmos Energy public offering were carried across MFN, StockTitan and Watch List News. The framing varied, but the core facts were corroborated across sources. That is a decent discipline for product decisions as well: trust rises when one conclusion is supported by multiple observable signals, with caveats where evidence is partial.

Between 08:00 and 10:30 last Thursday, I tried a deliberately harsh registration policy in a sandbox and watched first-session conversion sag. We fixed it with a simple hack: keep account creation open, suppress immediate promotional sends for the middle-risk band, and trigger a review route only when two independent signals agree. Fancy that. Fewer arguments, better data.

How graded decisions work in practice

A sensible model starts with bands, not absolutes. Low risk means proceed normally. Medium risk means proceed with constraints. High risk means do not grant the risky action yet, but still decide whether a legitimate review path exists. That distinction matters because account creation, promotional eligibility and messaging permissions are separate levers.

Take a practical example. A new sign-up from a well-configured domain with normal typing cadence and no conflicting history may land in a low-risk band. A sign-up using a disposable-style pattern, on a domain with weak engagement history and unusual velocity from one network, may fall into a constrained band. The response might be: create the account, block incentive issuance, hold non-transactional sends for 72 hours, and watch for confirmation or early product engagement. That is email judgement doing useful work, not theatre.
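The constrained response in that example could be sketched roughly as follows. The class and function names are hypothetical, and letting a confirmation release the promotional hold early is an assumption on top of the text, which only says to watch for it.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

PROMO_HOLD = timedelta(hours=72)  # hold period from the example above


@dataclass(frozen=True)
class ConstrainedPlan:
    create_account: bool
    issue_incentive: bool
    promo_sends_held_until: datetime


def plan_constrained_signup(signed_up_at: datetime) -> ConstrainedPlan:
    """Constrained band: account yes, incentive no, promotional sends held."""
    return ConstrainedPlan(
        create_account=True,
        issue_incentive=False,
        promo_sends_held_until=signed_up_at + PROMO_HOLD,
    )


def may_send(plan: ConstrainedPlan, message_kind: str, now: datetime,
             confirmed: bool = False) -> bool:
    """Transactional mail always goes; promotional mail waits for the hold
    to expire or (assumed here) for a positive confirmation signal."""
    if message_kind == "transactional":
        return True
    return confirmed or now >= plan.promo_sends_held_until
```

Note that account creation, incentive issuance and messaging are three separate fields, so each lever can be relaxed on its own schedule.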

The implementation detail teams often miss is feature separation. Do not let a mailbox-quality signal masquerade as a fraud verdict, and do not let one behavioural anomaly rewrite your sending policy by association. One score can summarise the picture for routing, but the underlying reasons should remain visible: mailbox risk, behavioural risk, historic engagement similarity and policy restrictions. When support asks why someone did not receive a welcome offer, you want an answer in under 20 seconds, not a forensic exercise.
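One way to keep the reasons visible is to store each dimension separately and derive the summary from them, never the reverse. This is a sketch under assumptions: the field names, the 0-100 scale, the threshold of 50 and the "worst dimension wins" summary rule are all hypothetical choices.

```python
from dataclasses import dataclass, field


@dataclass
class Assessment:
    """Per-dimension risk on a 0-100 scale (hypothetical; higher means riskier)."""
    mailbox_risk: int
    behavioural_risk: int
    engagement_similarity_risk: int
    policy_restrictions: list[str] = field(default_factory=list)

    def routing_score(self) -> int:
        # Assumed summary rule: the worst single dimension drives routing.
        return max(self.mailbox_risk, self.behavioural_risk,
                   self.engagement_similarity_risk)

    def reasons(self, threshold: int = 50) -> list[str]:
        # Reason codes stay separate so mailbox quality never reads as a fraud verdict.
        named = [
            ("MAILBOX_RISK", self.mailbox_risk),
            ("BEHAVIOURAL_RISK", self.behavioural_risk),
            ("ENGAGEMENT_SIMILARITY", self.engagement_similarity_risk),
        ]
        codes = [code for code, value in named if value >= threshold]
        codes.extend(f"POLICY:{p}" for p in self.policy_restrictions)
        return codes
```

With something like this in the log, a support agent asked about a missing welcome offer can read `["MAILBOX_RISK", "POLICY:NO_WELCOME_OFFER"]` and answer straight away.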

There is a trade-off here too. Granular logic creates maintenance overhead. Every extra branch needs monitoring. The answer is not to flatten the system until it becomes blind; it is to keep the tree short and measurable. In most cases, three to five outcome states are enough: allow, allow with sending limits, allow with commercial limits, review, and block where evidence is strong.

Automation without measurable uplift is theatre, not strategy. If your scoring layer cannot show lower complaint rates, stronger first-30-day engagement, reduced abuse cost or recovered legitimate conversion, then you have bought a dashboard, not an outcome.

Implications for growth, CRM and risk teams

For growth teams, the first implication is measurement. A hard reject policy can flatter short-term cleanliness while quietly shrinking addressable demand. A graded model often recovers users later rather than instantly, so day-zero completion is not enough. Look at activated users at day 7 and day 30 by decision band. Otherwise you will miss the lift and kill a useful system because the first chart looked untidy.
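A rough sketch of that day-7 and day-30 view, assuming each sign-up record carries a `band` label and a `days_to_activation` value (both hypothetical field names, with `None` meaning the user never activated):

```python
from collections import defaultdict


def activation_by_band(signups):
    """Return {band: {7: rate, 30: rate}} for activation within 7 and 30 days."""
    totals = defaultdict(int)
    hits = defaultdict(lambda: {7: 0, 30: 0})
    for s in signups:
        totals[s["band"]] += 1
        days = s["days_to_activation"]
        if days is None:
            continue  # never activated: counts in the denominator only
        for window in (7, 30):
            if days <= window:
                hits[s["band"]][window] += 1
    return {
        band: {w: hits[band][w] / n for w in (7, 30)}
        for band, n in totals.items()
    }
```

A middle band that looks weak at day 7 but closes the gap by day 30 is the pattern a day-zero chart would hide.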

For CRM, the middle band matters most. That is where a fair bit of damage can be avoided. Rather than dropping every new address into a broad welcome journey, you can limit cadence, prioritise transactional over promotional messages, and wait for positive signals before increasing pressure. The trade-off is that some perfectly decent users get a slower nurture path. Usually that is preferable to dragging down list-wide sender reputation.

For risk teams, review routes create a humane buffer between suspicion and denial. They also expose where rules are too blunt. If reviewers keep overturning one class of decline, the model needs work. If medium-risk accounts rarely progress into healthy engagement, your thresholds may be too soft. That is why threshold tuning should be treated as an operating practice, not a one-off configuration task. Monthly is a sensible minimum. Weekly is often better during heavy acquisition periods.
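The "reviewers keep overturning one class of decline" check can be run as a small periodic report. This is a sketch only: the input shape, the 30% ceiling and the minimum sample of 20 reviews are assumed values, not recommendations from any source.

```python
from collections import Counter


def override_rates(reviews):
    """reviews: iterable of (decline_class, was_overturned) pairs."""
    seen, overturned = Counter(), Counter()
    for decline_class, was_overturned in reviews:
        seen[decline_class] += 1
        overturned[decline_class] += int(was_overturned)
    return {c: overturned[c] / seen[c] for c in seen}


def classes_needing_work(reviews, max_rate=0.3, min_reviews=20):
    """Decline classes reviewers overturn too often, ignoring tiny samples."""
    reviews = list(reviews)
    counts = Counter(decline_class for decline_class, _ in reviews)
    rates = override_rates(reviews)
    return sorted(
        c for c, rate in rates.items()
        if counts[c] >= min_reviews and rate > max_rate
    )
```

Running this monthly, or weekly during heavy acquisition periods, gives threshold tuning a concrete starting list rather than a debate.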

There is a governance angle as well. Recent privacy lessons in adjacent data fields show that even summary scores can reveal more than teams expect if they are poorly governed. The sensible default is privacy-preserving architecture: minimise retained personal data, keep reason codes operational rather than intrusive, and classify the event rather than branding the person in perpetuity.

Actions to consider

If you are refitting this kind of flow, start with an audit of hard rejects across the last 30 days. Split them into four buckets: clearly invalid, likely risky, uncertain but plausible, and operationally blocked for historical reasons. In most stacks, that last bucket is larger than anyone expects. It is where old rules go to hide.

Next, define outcome states in plain English and tie each one to a business owner. For example: accepted normally; accepted with messaging limits; accepted with incentive restrictions; queued for review; blocked with reason. Growth should own incentive policy, CRM should own message handling, risk should own review criteria, and product should own journey copy. If nobody owns a state, it will drift within a quarter.
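Ownership can be made checkable rather than aspirational. In this sketch the five states come from the paragraph above, while the owner assignments for "accepted normally" and "blocked with reason" are assumptions, since the text only names owners by policy area.

```python
from enum import Enum


class Outcome(Enum):
    ACCEPTED = "accepted normally"
    MESSAGING_LIMITS = "accepted with messaging limits"
    INCENTIVE_RESTRICTIONS = "accepted with incentive restrictions"
    REVIEW = "queued for review"
    BLOCKED = "blocked with reason"


OWNERS = {
    Outcome.ACCEPTED: "product",             # assumption: product owns journey copy
    Outcome.MESSAGING_LIMITS: "crm",         # CRM owns message handling
    Outcome.INCENTIVE_RESTRICTIONS: "growth",  # growth owns incentive policy
    Outcome.REVIEW: "risk",                  # risk owns review criteria
    Outcome.BLOCKED: "risk",                 # assumption: risk also owns hard blocks
}


def unowned_states(owners=OWNERS):
    """List outcome states that have no named business owner."""
    return [state for state in Outcome if not owners.get(state)]
```

A check like `unowned_states()` returning a non-empty list is a cheap thing to fail a configuration review on.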

Then run live-shadow analysis before rollout. Put the graded logic alongside the current flow for two to four weeks and compare what it would have done. At minimum, track four measures: completed registrations, first campaign engagement, complaint or unsubscribe pressure, and confirmed abuse events. Named-source benchmarks are useful for context, but your own operational data should carry more weight than industry averages.
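For the shadow comparison itself, a transition count between what the current flow did and what the graded logic would have done is often enough to start with. The record shape and the decision labels below (`"reject"`, `"block"` and so on) are hypothetical.

```python
from collections import Counter


def shadow_summary(events):
    """events: dicts with 'current' (live decision) and 'shadow' (graded decision).

    Returns the transition counts and the share of current hard rejects
    that the graded logic would not have blocked.
    """
    transitions = Counter((e["current"], e["shadow"]) for e in events)
    rejected = sum(n for (cur, _), n in transitions.items() if cur == "reject")
    recovered = sum(
        n for (cur, new), n in transitions.items()
        if cur == "reject" and new != "block"
    )
    recovery_rate = (recovered / rejected) if rejected else 0.0
    return transitions, recovery_rate
```

The recovery rate is only a would-have-been figure. Users the live flow rejected never generate engagement or abuse data, so the four measures above still need a real rollout cohort to confirm the lift.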

Every line above has a trade-off. More caution protects reputation but may slow growth. More openness can lift acquisition but increase review load. The job is not to eliminate trade-offs. It is to choose them consciously, then test whether they are worth the cup of tea required to maintain them.

- Use sign-up risk scoring to classify the event, not the person forever.

- Separate identity confidence from campaign eligibility.

- Apply threshold tuning by cohort, channel and offer type, rather than one universal line.

- Require at least two corroborating risk signals before a hard block, unless the address is plainly invalid or policy-prohibited.

- Log short reason codes that support analysts, support teams and compliance review.
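The corroboration rule in the list above is small enough to express directly. A minimal sketch, assuming each signal is an independent boolean flag; the signal names in the usage note are made up for illustration.

```python
def should_hard_block(signals, plainly_invalid=False, policy_prohibited=False,
                      min_signals=2):
    """Decide whether a hard block is justified.

    signals: mapping of independent signal name -> bool (True flags risk).
    A plainly invalid or policy-prohibited address blocks on its own;
    otherwise at least `min_signals` flags must agree.
    """
    if plainly_invalid or policy_prohibited:
        return True
    flagged = sum(1 for is_risky in signals.values() if is_risky)
    return flagged >= min_signals
```

So `should_hard_block({"disposable_pattern": True, "network_velocity": False})` stays open for a softer route, while two agreeing flags close it. The independence of the signals is the part code cannot check for you: two flags derived from the same underlying feature are one signal counted twice.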

What good looks like after launch

Once shipped, a healthy system becomes quieter. Support tickets about unexplained rejection should fall. CRM should see fewer risky addresses entering high-frequency programmes. Growth should recover a slice of users previously lost to blunt rules. Risk should spend less time arguing over edge cases and more time adjusting policy with evidence. Those are observable outcomes, not vendor poetry.

Watch for three warning signs in the first 60 days. One: review queues that only grow, which usually means your middle band is too wide. Two: high override rates by reviewers, which means the model's reasons are poorly weighted or poorly described. Three: no measurable uplift in acquisition quality or list health, which means the extra complexity is not earning its keep. If that happens, simplify. Build, ship, test, then trim the faff.
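Those three warning signs can be turned into a simple post-launch check. This is a sketch with assumed inputs: queue depth sampled at regular intervals, a single overall override rate, and one uplift figure from whichever metric you chose. The 30% override ceiling is illustrative.

```python
def launch_warnings(queue_sizes, override_rate, uplift, max_override=0.3):
    """Return warning codes for the first 60 days after launch.

    queue_sizes: review queue depth sampled over time, oldest first.
    override_rate: share of reviewed decisions that reviewers overturned.
    uplift: measured change in acquisition quality or list health.
    """
    warnings = []
    strictly_growing = len(queue_sizes) >= 2 and all(
        later > earlier for earlier, later in zip(queue_sizes, queue_sizes[1:])
    )
    if strictly_growing:
        warnings.append("REVIEW_QUEUE_ONLY_GROWS")
    if override_rate > max_override:
        warnings.append("HIGH_REVIEWER_OVERRIDES")
    if uplift <= 0:
        warnings.append("NO_MEASURABLE_UPLIFT")
    return warnings
```

An empty list is the quiet system described above; anything else is a prompt to narrow the middle band, revisit the reasons, or simplify.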

The calmer alternative to hard rejects is not softness. It is design maturity. Graded decisions protect sender reputation, preserve more legitimate demand and give real people a fairer route through the system. If your growth and CRM teams want to see whether that works on an actual journey rather than a tidy slide, review one live acquisition flow with EVE and inspect how the scores, review routes and reason codes would behave in practice. Cheers, that is usually where the useful conversation starts.
